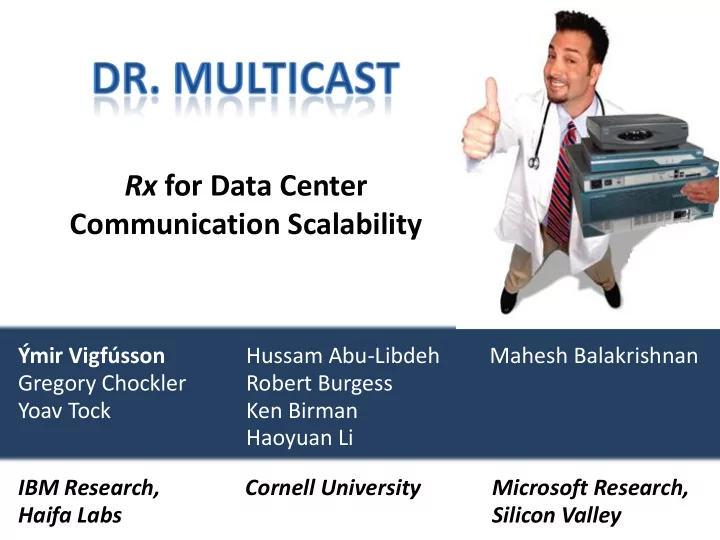


Rx for Data Center Communication Scalability • Ýmir Vigfússon, Hussam Abu-Libdeh, Mahesh Balakrishnan, Gregory Chockler, Robert Burgess, Yoav Tock, Ken Birman, Haoyuan Li • IBM Research, Haifa Labs; Cornell University; Microsoft Research, Silicon Valley

IP Multicast in Data Centers • Useful – IPMC is fast and widely supported – Multicast and pub/sub often used implicitly – Lots of redundant traffic in data centers [Anand et al. SIGMETRICS ’09] • Rarely used – IP Multicast has scalability problems!

IP Multicast in Data Centers • Switching hierarchies

IP Multicast in Data Centers • Switches have limited state space. Group capacity by switch model (10Gbps):
– Alcatel-Lucent OmniSwitch OS6850-4: 260
– Cisco Catalyst 3750E-48PD-EF: 1,000
– D-Link DGS-3650: 864
– Dell PowerConnect 6248P: 69
– Extreme Summit X450a-48t: 792
– Foundry FastIron Edge X 448+2XG: 511
– HP ProCurve 3500yl: 1,499


IP Multicast in Data Centers • NICs also have limited state space – E.g. 16 exact addresses, 512-bit Bloom filter
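To see why a small NIC filter pushes work onto the kernel, here is a minimal sketch of a filter with 16 exact slots that spills further joins into a 512-bit bitmap. The spill-over behavior, the SHA-256 stand-in hash, and every name below are assumptions for illustration; real NICs use their own hash functions and policies.

```python
import hashlib

EXACT_SLOTS = 16    # exact-match address slots (figure from the slide)
FILTER_BITS = 512   # width of the imprecise filter in bits (figure from the slide)


def bit_index(group_addr: str) -> int:
    """Hash an address to a bit position. SHA-256 is a stand-in, not a real NIC hash."""
    digest = hashlib.sha256(group_addr.encode("ascii")).digest()
    return int.from_bytes(digest[:4], "big") % FILTER_BITS


class NicFilter:
    """Simplified model: exact slots are used first, then an imprecise bitmap."""

    def __init__(self):
        self.exact = set()
        self.bitmap = 0  # FILTER_BITS-wide bitmap held in a Python int

    def join(self, group_addr: str) -> None:
        if group_addr in self.exact:
            return
        if len(self.exact) < EXACT_SLOTS:
            self.exact.add(group_addr)
        else:
            self.bitmap |= 1 << bit_index(group_addr)

    def accepts(self, group_addr: str) -> bool:
        """True if the NIC would pass the packet up to the kernel."""
        in_bitmap = bool((self.bitmap >> bit_index(group_addr)) & 1)
        return group_addr in self.exact or in_bitmap


def example_addr(i: int) -> str:
    """Generate distinct made-up multicast addresses for the experiment."""
    return f"239.{(i >> 16) & 255}.{(i >> 8) & 255}.{i & 255}"


def false_positive_rate(joined: int, probes: int = 1000) -> float:
    """Fraction of never-joined groups that the NIC still lets through."""
    nic = NicFilter()
    for i in range(joined):
        nic.join(example_addr(i))
    unwanted = [example_addr(i) for i in range(joined, joined + probes)]
    return sum(nic.accepts(a) for a in unwanted) / probes
```

With at most 16 joined groups the model passes no unwanted traffic; once thousands of groups are joined, most of the bitmap is set and nearly every unwanted group reaches the kernel.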


IP Multicast in Data Centers • Kernel has to filter out unwanted packets!

IP Multicast in Data Centers • Packet loss triggers further problems – Reliability layer may aggravate loss – Major companies have suffered multicast storms • IPMC has dangerous scalability issues

Dr. Multicast Key ideas • Treat IPMC groups as a scarce resource – Limit the number of physical IPMC groups – Translate logical IPMC groups into either physical IPMC groups or multicast by iterated unicast. • Merge similar groups together

Dr. Multicast • Transparent: Standard IPMC interface to user, standard IGMP interface to network. • Robust: Distributed, fault-tolerant service. • Optimizes resource use: Merges similar multicast groups together. • Scalable in number of groups: Limits number of physical IPMC groups.

Dr. Multicast • Library maps logical IPMC to physical IPMC or iterated unicast • Transparent to the application – IPMC calls intercepted and modified • Transparent to the network – Ordinary IPMC/IGMP traffic
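The translation step can be sketched as a lookup that expands one logical send into physical multicast sends plus per-receiver unicast sends. This is only an illustration under assumed names (`Mapping`, `destinations`, `send`, and the table layout are hypothetical); the slides do not show the library's actual data structures.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Mapping:
    """Translation for one logical group (hypothetical layout)."""
    physical_groups: List[str] = field(default_factory=list)    # physical IPMC addresses
    unicast_receivers: List[str] = field(default_factory=list)  # hosts reached by iterated unicast


def destinations(logical_group: str, table: Dict[str, Mapping]) -> List[str]:
    """Expand a logical group into the concrete addresses a send must reach."""
    mapping = table[logical_group]
    return list(mapping.physical_groups) + list(mapping.unicast_receivers)


def send(sendto: Callable[[bytes, Tuple[str, int]], object],
         payload: bytes, logical_group: str,
         table: Dict[str, Mapping], port: int) -> int:
    """Send payload once per destination; returns the number of transmissions.

    `sendto` has the shape of socket.socket.sendto(data, (host, port)).
    """
    targets = destinations(logical_group, table)
    for host in targets:
        sendto(payload, (host, port))
    return len(targets)
```

A real deployment would pass a UDP socket's `sendto` method; passing a recording function instead makes the expansion easy to inspect without touching the network.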


Dr. Multicast • Per-node agent maintains global group membership and mapping – Library consults local agent • Leader agent periodically computes new mapping (see later). • State reconciled via gossip

Library Layer Overhead • Experiment measuring sends/sec at one sender • Sending to r addresses achieves roughly 1/r of the single-address send rate • Insignificant overhead when mapping a logical IPMC group to a physical IPMC group.

Network Overhead and Robustness • Experiment on 90 Emulab nodes – Nodes introduced 10 at a time. – Half of the nodes die. • Plot: average traffic received per node. • Total network traffic grows linearly. • Robust to major correlated failure.


Optimization Questions • Figure: users and the multicast groups they belong to.

Optimization Questions • Assign IPMC and unicast addresses s.t. – Min. receiver filtering – Min. network traffic – Min. # IPMC addresses • … yet deliver all messages to interested parties

Optimization Questions • Assign IPMC and unicast addresses s.t. – Minimize α · (receiver filtering) + (1 − α) · (network traffic) – # IPMC addresses ≤ M (hard constraint) • α: Knob to control relative costs of CPU filtering and of duplicate traffic. • Both α and M are part of administrative policy.
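The weighted objective can be made concrete with a small cost function. The sketch below assumes a simplified model that is not taken from the slides: one message per logical group, one transmission per multicast send, one transmission per member for unicast, and filtering counted as unwanted deliveries. The parameter `alpha` is our name for the knob, `max_ipmc` stands for M, and all function and variable names are hypothetical.

```python
from typing import Dict, Hashable, Optional, Set


def total_cost(members: Dict[str, Set[Hashable]],
               assignment: Dict[str, Optional[str]],
               alpha: float, max_ipmc: int) -> float:
    """Weighted cost of an address assignment.

    members:    logical group -> set of users in it
    assignment: logical group -> physical IPMC address, or None for unicast
    """
    used = {addr for addr in assignment.values() if addr is not None}
    if len(used) > max_ipmc:
        raise ValueError("hard constraint violated: too many IPMC addresses")

    # A user listening on a physical address receives everything sent to it.
    subscribers: Dict[str, Set[Hashable]] = {}
    for group, addr in assignment.items():
        if addr is not None:
            subscribers.setdefault(addr, set()).update(members[group])

    filtering = 0  # deliveries that the receiver must discard
    traffic = 0    # transmissions put on the network
    for group, addr in assignment.items():
        if addr is None:
            traffic += len(members[group])           # one unicast copy per member
        else:
            traffic += 1                             # a single multicast transmission
            filtering += len(subscribers[addr] - members[group])
    return alpha * filtering + (1 - alpha) * traffic
```

For example, merging groups {1, 2, 3} and {2, 3, 4} onto one address costs two unwanted deliveries but saves four transmissions compared with sending both by unicast.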

MCMD Heuristic • Groups in 'user-interest' space • Example groups: GradStudents, FreeFood • Example membership vector: (1,1,1,1,1,0,1,0,1,0,1,1)

MCMD Heuristic • Groups in 'user-interest' space • Grow M meta-groups around the groups greedily while cost decreases
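One way to read "grow meta-groups greedily while cost decreases" is sketched below. It is not the authors' implementation: the seed choice (largest remaining group), the tie-breaking, and the cost model (one message per group, unwanted deliveries as filtering, weight `alpha`) are all assumptions, and every name is hypothetical.

```python
from typing import Dict, Hashable, Optional, Set


def cost(members: Dict[str, Set[Hashable]],
         assignment: Dict[str, Optional[int]], alpha: float) -> float:
    """alpha * unwanted deliveries + (1 - alpha) * transmissions (assumed model)."""
    subs: Dict[int, Set[Hashable]] = {}
    for g, slot in assignment.items():
        if slot is not None:
            subs.setdefault(slot, set()).update(members[g])
    filtering = sum(len(subs[s] - members[g])
                    for g, s in assignment.items() if s is not None)
    traffic = sum(1 if s is not None else len(members[g])
                  for g, s in assignment.items())
    return alpha * filtering + (1 - alpha) * traffic


def greedy_meta_groups(members: Dict[str, Set[Hashable]],
                       max_ipmc: int, alpha: float) -> Dict[str, Optional[int]]:
    """Assign each group a meta-group index (0..max_ipmc-1) or None for unicast."""
    assignment: Dict[str, Optional[int]] = {g: None for g in members}
    for slot in range(max_ipmc):
        unassigned = [g for g, s in assignment.items() if s is None]
        if not unassigned:
            break
        best = cost(members, assignment, alpha)
        # Seed the meta-group with the largest group still on unicast.
        seed = max(unassigned, key=lambda g: (len(members[g]), g))
        seeded = cost(members, {**assignment, seed: slot}, alpha)
        if seeded >= best:
            break  # even the best seed does not pay off; stop opening meta-groups
        assignment[seed], best = slot, seeded
        # Grow: keep adding whichever group lowers the total cost the most.
        while True:
            trials = [(cost(members, {**assignment, g: slot}, alpha), g)
                      for g, s in assignment.items() if s is None]
            if not trials:
                break
            trial_cost, g = min(trials)
            if trial_cost >= best:
                break
            assignment[g], best = slot, trial_cost
    return assignment
```

On a toy input with two nearly identical groups, one disjoint group, and one singleton, the sketch merges the similar pair, gives the disjoint group its own address, and leaves the singleton on unicast.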


MCMD Heuristic • Groups in 'user-interest' space • Figure: meta-groups labeled with IPMC addresses 224.1.2.3, 224.1.2.4, 224.1.2.5; other groups labeled Unicast

Data sets/models • Social: – Yahoo! Groups – Amazon Recommendations – Wikipedia Edits – LiveJournal Communities – Mutual Interest Model

MCMD Heuristic • Total cost on samples of 1000 logical groups. – Costs drop exponentially with more IPMC addresses

Data sets/models • Social: – Yahoo! Groups – Amazon Recommendations – Wikipedia Edits – LiveJournal Communities – Mutual Interest Model • Systems: – IBM Websphere

MCMD Heuristic • Total cost on IBM Websphere data set (simulation) – Negligible costs when using only 4 IPMC addresses

MCMD Heuristic • Parallel Websphere cells (127 nodes each) – Allow 1000 IPMC groups. – Optimal until 250 cells. • Plot: filtering costs and duplication costs.


Group Scalability • Experiment on Emulab with 1 receiver, 9 senders • MCMD prevents ill-effects when the # of groups scales up

Dr. Multicast • IPMC is useful, but has scalability problems • Dr. Multicast treats IPMC groups as scarce and sensitive resources – Transparent, backward-compatible – Scalable in the number of groups – Robust against failures – Optimizes resource use by merging similar groups • Enables safe and scalable use of multicast

Acceptable Use Policy • Assume a higher-level network management tool compiles policy into primitives. • Explicitly allow a process (user) to use IPMC groups: – allow-join(process ID, logical group ID) – allow-send(process ID, logical group ID) • Multicast by point-to-point unicast is always permitted. • Additional constraints: – max-groups(process ID, limit) – force-unicast(process ID, logical group ID)
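As a rough illustration of how the four primitives could combine, the sketch below picks a transport for each join or send. The precedence rules (force-unicast first, then the allow lists, then the max-groups limit) and all class and method names are assumptions; the slide only names the primitives.

```python
from dataclasses import dataclass, field
from typing import Dict, Set, Tuple

Grant = Tuple[str, str]  # (process ID, logical group ID)


@dataclass
class AcceptableUsePolicy:
    allow_join: Set[Grant] = field(default_factory=set)
    allow_send: Set[Grant] = field(default_factory=set)
    force_unicast: Set[Grant] = field(default_factory=set)
    max_groups: Dict[str, int] = field(default_factory=dict)  # process ID -> limit

    def join_transport(self, pid: str, group: str, ipmc_groups_joined: int) -> str:
        """Return "ipmc" if the process may join via IPMC, else "unicast"."""
        key = (pid, group)
        if key in self.force_unicast or key not in self.allow_join:
            return "unicast"  # unicast is always permitted
        limit = self.max_groups.get(pid)
        if limit is not None and ipmc_groups_joined >= limit:
            return "unicast"
        return "ipmc"

    def send_transport(self, pid: str, group: str) -> str:
        """Return "ipmc" if the process may send via IPMC, else "unicast"."""
        key = (pid, group)
        if key in self.force_unicast or key not in self.allow_send:
            return "unicast"
        return "ipmc"
```

Falling back to unicast rather than rejecting the request mirrors the slide's statement that point-to-point delivery is always permitted.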

Group Similarity • IBM Websphere has remarkable structure • Typical for real-world systems? – Only one data point.

Group Similarity • Def: Similarity of groups is [equation not preserved] • Plot: IBM Websphere

Social data sets • User and group degree distributions appear to follow power laws. • Power-law degree distributions are often modeled by preferential attachment. • Mutual Interest model: – Preferential attachment for bipartite graphs of users and groups.
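To illustrate what preferential attachment on a bipartite user-group graph can look like, here is a generic sketch. It is not the Mutual Interest model itself, whose details the slides do not give; the arrival probabilities, the edge-sampling trick, and all names are assumptions.

```python
import random
from typing import List, Tuple


def sample_memberships(steps: int, p_new_user: float = 0.3,
                       p_new_group: float = 0.1, seed: int = 0) -> List[Tuple[int, int]]:
    """Generate (user, group) membership pairs by bipartite preferential attachment.

    Picking a uniformly random existing edge and taking one endpoint selects
    that user (or group) with probability proportional to its current degree.
    """
    rng = random.Random(seed)
    edges = [(0, 0)]   # start with user 0 in group 0
    seen = {(0, 0)}
    n_users = n_groups = 1
    for _ in range(steps):
        if rng.random() < p_new_user:
            user = n_users                   # a brand-new user arrives
        else:
            user = rng.choice(edges)[0]      # existing user, degree-proportional
        if rng.random() < p_new_group:
            group = n_groups                 # a brand-new group is created
        else:
            group = rng.choice(edges)[1]     # existing group, size-proportional
        if (user, group) in seen:
            continue                         # skip duplicate memberships
        seen.add((user, group))
        edges.append((user, group))
        n_users = max(n_users, user + 1)
        n_groups = max(n_groups, group + 1)
    return edges
```

Counting how often each user or group appears in the returned list gives the degree distributions, which can then be compared against the heavy-tailed shapes the slide describes.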

IP Multicast in Data Centers • Useful, but rarely used. • Various problems: – Security – Stability – Scalability • Bottom line: Administrators have no control over IPMC. – Thus they choose to disable it.

Wishlist • Policy: Enable control of IPMC. • Transparency: Should be backward compatible with hardware and software. • Scalability: Needs to scale in number of groups. • Robustness: Solution should not bring in new problems.

Data sets/models • What’s in a "group"? • Social: – Yahoo! Groups – Amazon Recommendations – Wikipedia Edits – LiveJournal Communities – Mutual Interest Model • Systems: – IBM Websphere – Hierarchy Model

Systems Data Set • Distributed systems tend to be hierarchically structured. • Hierarchy model – Motivated by Live Objects. • Thm: Expect a pair of users to overlap in [quantity not preserved] groups.

Group similarity • Def: Similarity of groups j, j’ is [equation not preserved] • Plots: Wikipedia, LiveJournal

Group similarity • Def: Similarity of groups j, j’ is [equation not preserved] • Plot: Mutual Interest Model

Group communication • Most network traffic is unicast communication (one-to-one). • But a lot of content is identical: – Audio streams, video broadcasts, remote updates, etc. – Video traffic is forecast to be 90% of Internet traffic in 2013. • To minimize redundancy, it would be better to use multicast communication (one-to-many).

IP Multicast in Data Centers • Smaller scale – well-defined hierarchy • Single administrative domain • Firewalled – can ignore malicious behavior
