4/17/2020 1

Reinforcement Learning for Control

Federico Nesti, f.nesti@santannapisa.it

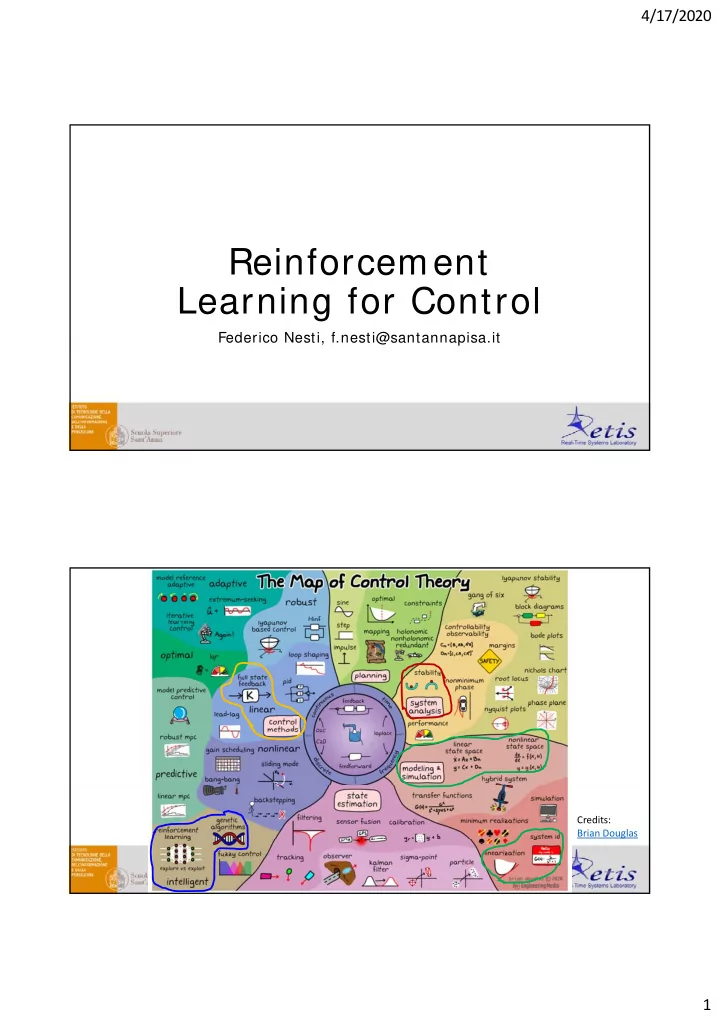

Credits: Brian Douglas


Major Difficulties in Deep RL


[Figure: agent-environment interaction loop. Labels: Agent, Environment]


[Figure: gridworld example. Labels: Start State, Terminal State, START, HOLE, GOAL]


Policy search («primal» formulation): the search for the optimal policy is performed directly in the policy space.


[Equation annotations: Target, TD error]


[Figure: gridworld with numbered cells. Labels: START, HOLE (four cells), GOAL]


Remember: the reward is 1 only when the goal is reached, and 0 otherwise.

Each state has a corresponding optimal action.

BE FOUND, the actions were learned to avoid all possible actions that could lead to a hole.
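The behaviour described above can be reproduced with a minimal tabular Q-learning sketch. Everything specific here is an assumption made for illustration, not the setup used in the slides: the 4x4 layout, the hole positions in `HOLES`, deterministic moves, and the values of `alpha`, `gamma`, and `epsilon`. Only the reward rule (1 at the goal, 0 elsewhere) comes from the text.

```python
import random

N = 4                                   # grid side (assumed 4x4 layout)
HOLES = {5, 7, 11, 12}                  # hypothetical hole cells
GOAL = 15                               # hypothetical goal cell
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]    # up, down, left, right


def step(state, action):
    """Deterministic move; bumping into a wall leaves the agent in place."""
    row, col = divmod(state, N)
    d_row, d_col = ACTIONS[action]
    row = min(max(row + d_row, 0), N - 1)
    col = min(max(col + d_col, 0), N - 1)
    next_state = row * N + col
    reward = 1.0 if next_state == GOAL else 0.0   # reward only at the goal
    done = next_state == GOAL or next_state in HOLES
    return next_state, reward, done


def train(episodes=5000, alpha=0.1, gamma=0.95, epsilon=0.3, seed=0):
    rng = random.Random(seed)
    q = [[0.0] * len(ACTIONS) for _ in range(N * N)]
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 100:
            if rng.random() < epsilon:            # explore
                action = rng.randrange(len(ACTIONS))
            else:                                 # exploit, random tie-break
                best = max(q[state])
                action = rng.choice(
                    [a for a, v in enumerate(q[state]) if v == best])
            next_state, reward, done = step(state, action)
            # Target: reward plus discounted best value of the next state.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            # The TD error (target - current estimate) drives the update.
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            steps += 1
    return q


def greedy_rollout(q, max_steps=50):
    """Follow the learned greedy action from the start cell."""
    state, path = 0, [0]
    for _ in range(max_steps):
        action = max(range(len(ACTIONS)), key=lambda a: q[state][a])
        state, _, done = step(state, action)
        path.append(state)
        if done:
            break
    return path
```

Because stepping into a hole yields a zero target, the Q-values of hole-bound actions never rise above zero, so the greedy policy steers away from them once a rewarding path has been found.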


Extension of Q-learning for continuous state-space

[Equation annotations: Target, TD error]

Solution: use of TARGET NETWORKS.

Solution: use of a REPLAY BUFFER.
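A minimal sketch of the two ingredients, in plain Python. The linear Q-function in `q_values`, the list-of-lists parameter layout, and all names are hypothetical stand-ins for a real neural network; the point is only to show where the replay buffer and the target parameters enter the computation of the target.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size buffer: the oldest transitions are evicted first."""

    def __init__(self, capacity):
        self.data = deque(maxlen=capacity)

    def add(self, state, action, reward, next_state, done):
        self.data.append((state, action, reward, next_state, done))

    def sample(self, batch_size, rng=random):
        # Uniform random sampling decorrelates consecutive transitions.
        return rng.sample(list(self.data), min(batch_size, len(self.data)))

    def __len__(self):
        return len(self.data)


def q_values(weights, state):
    """Hypothetical linear Q-function: one weight vector per action."""
    return [sum(w * x for w, x in zip(w_a, state)) for w_a in weights]


def td_targets(batch, target_weights, gamma=0.99):
    """Compute targets with the target parameters, not the online ones."""
    targets = []
    for _, _, reward, next_state, done in batch:
        if done:
            bootstrap = 0.0
        else:
            bootstrap = gamma * max(q_values(target_weights, next_state))
        targets.append(reward + bootstrap)
    return targets


def sync_target(online_weights):
    """Copy the online parameters into the target network."""
    return [list(w_a) for w_a in online_weights]
```

In a training loop, `sync_target` would be called only every so many updates, so the targets stay fixed between syncs while the online parameters change.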


[Figure: training loop with replay buffer and target network. Labels: Network, Target Network, Replay Buffer, Random Sampling, Evict old data]

[Figure: Visual Gridworld. Labels: Agent, Hole, GOAL]


A few pieces of advice:

needed for satisfactory solutions


Policy search («primal» formulation): the search for the optimal policy is performed directly in the policy space.


Derivation steps: expand expectation; bring gradient under integral; return to expectation form; expression for grad-log-prob.
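The step names above match the standard policy-gradient derivation, sketched here under assumed notation that does not appear in the extracted slides: $\tau$ is a trajectory, $R(\tau)$ its return, $P(\tau \mid \theta)$ its probability under the policy $\pi_\theta$, and $J$ the expected return. The log-derivative step is added to make the chain complete.

```latex
\begin{aligned}
\nabla_\theta J(\pi_\theta)
  &= \nabla_\theta \mathbb{E}_{\tau \sim \pi_\theta}\left[ R(\tau) \right] \\
  &= \nabla_\theta \int_\tau P(\tau \mid \theta)\, R(\tau)\, d\tau
     && \text{expand expectation} \\
  &= \int_\tau \nabla_\theta P(\tau \mid \theta)\, R(\tau)\, d\tau
     && \text{bring gradient under integral} \\
  &= \int_\tau P(\tau \mid \theta)\, \nabla_\theta \log P(\tau \mid \theta)\, R(\tau)\, d\tau
     && \text{log-derivative identity (added step)} \\
  &= \mathbb{E}_{\tau \sim \pi_\theta}\left[ \nabla_\theta \log P(\tau \mid \theta)\, R(\tau) \right]
     && \text{return to expectation form} \\
  &= \mathbb{E}_{\tau \sim \pi_\theta}\left[ \sum_{t=0}^{T} \nabla_\theta \log \pi_\theta(a_t \mid s_t)\, R(\tau) \right]
     && \text{expression for grad-log-prob}
\end{aligned}
```

The last line holds because the initial-state distribution and the environment dynamics do not depend on $\theta$, so only the policy terms survive in $\nabla_\theta \log P(\tau \mid \theta)$.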

Full episode return. How to compute this?


to change to gradient descent


Warnings:

differentiation of TensorFlow to compute the gradient.

performance and has no meaning. There is no guarantee that this works.

the parameters (it should not, in gradient descent).

performance indicator.
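To make the role of the loss concrete, here is a minimal sketch of a policy-gradient pseudo-loss in plain Python. It assumes a softmax policy over discrete actions and weights every step by a return value supplied by the caller; the function names and the discount `gamma` are illustrative, not taken from the slides. In practice an automatic-differentiation library would differentiate this quantity with respect to the policy parameters.

```python
import math


def episode_return(rewards, gamma=0.99):
    """Discounted return of a full episode."""
    total, discount = 0.0, 1.0
    for r in rewards:
        total += discount * r
        discount *= gamma
    return total


def softmax(logits):
    m = max(logits)                       # subtract max for stability
    exps = [math.exp(z - m) for z in logits]
    norm = sum(exps)
    return [e / norm for e in exps]


def pseudo_loss(logits_per_step, actions, returns):
    """Negative mean of log pi(a_t | s_t) * return_t.

    The minus sign turns gradient ascent on the expected return into
    gradient descent. The number itself is only a vehicle for the gradient.
    """
    total = 0.0
    for logits, action, ret in zip(logits_per_step, actions, returns):
        total += math.log(softmax(logits)[action]) * ret
    return -total / len(actions)
```

For example, with a uniform two-action policy and a return of 2 at both steps, `pseudo_loss([[0.0, 0.0], [0.0, 0.0]], [0, 1], [2.0, 2.0])` evaluates to `2 * log(2)`. The value moves with the sampled returns, which is why the average episode reward is the quantity to track rather than this number.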


(Chain Rule)


A few pieces of advice:

solutions and rank them in some clever way (e.g., prefer solutions with a correspondingly high average reward, or use some other performance measure, such as oscillations).

worry: if you find a set of parameters that had promising performance, save them and resume training starting from those parameters. This might require smaller learning rates.
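One way to follow this advice is to keep the few best parameter sets seen so far, ranked by average reward, and restart from the best one with a reduced learning rate. The sketch below is an illustration only: `BestCheckpoints`, `resume_settings`, and the `shrink` factor are hypothetical names and values, and parameters are kept in memory rather than written to disk.

```python
import copy
import heapq
import itertools


class BestCheckpoints:
    """Keep the k parameter sets with the highest average reward."""

    def __init__(self, k=3):
        self.k = k
        self._heap = []                   # min-heap of (score, tie, params)
        self._tie = itertools.count()     # tie-breaker: params never compared

    def offer(self, avg_reward, params):
        entry = (avg_reward, next(self._tie), copy.deepcopy(params))
        if len(self._heap) < self.k:
            heapq.heappush(self._heap, entry)
        elif avg_reward > self._heap[0][0]:
            # Replace the worst stored checkpoint with the new one.
            heapq.heapreplace(self._heap, entry)

    def ranked(self):
        """Return (avg_reward, params) pairs, best first."""
        ordered = sorted(self._heap, key=lambda e: (-e[0], e[1]))
        return [(score, params) for score, _, params in ordered]


def resume_settings(keeper, learning_rate, shrink=0.1):
    """Restart from the best saved parameters with a smaller learning rate."""
    _, best_params = keeper.ranked()[0]
    return copy.deepcopy(best_params), learning_rate * shrink
```

Ranking by average reward is only one choice; the sort key in `ranked` could equally use another performance measure, for instance one that penalizes oscillations.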

Major Difficulties in Deep RL


[Figure: RL method families arranged by sample efficiency and computational efficiency. Labels: On-Policy algorithms, Off-Policy algorithms, Gradient-free methods (CMA-ES), Fully online methods, PG methods / Actor-Critic, Replay Buffer / Value Estimation methods, Model-based Deep RL, Model-based shallow RL]

[Figure labels: Policy Search, Q-Learning, Actor-Critic methods]

explains RL basics and the major Deep RL algorithms

basics book, full of examples. A bit hard to read at first, but a nice handbook.

for the main RL algorithms.


in such a way that there is no «clever way» to maximize it.

approximation/continuous spaces are used, no optimal solution is guaranteed;

scales exponentially with the number of state space dimensions.

(approximation and generalization, overfitting, high unpredictability, debugging difficulties)
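The exponential scaling mentioned above is easy to see for a discretized Q-table. This is a minimal sketch that assumes the remark refers to tabular methods with a fixed number of bins per state dimension; the function name and the numbers are illustrative.

```python
def table_entries(bins_per_dimension, state_dimensions, n_actions):
    """Number of Q-table entries for a uniformly discretized state space."""
    return (bins_per_dimension ** state_dimensions) * n_actions


if __name__ == "__main__":
    for dims in (2, 4, 6, 8):
        print(dims, table_entries(10, dims, n_actions=4))
```

With 10 bins per dimension and 4 actions, going from 2 to 6 state dimensions takes the table from 400 to 4,000,000 entries.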