Regression Diagnostics and the Forward Search 1

- A. C. Atkinson, London School of Economics

February 23 2009

The first section introduces the ideas of regression diagnostics for check- ing regression models and shows how deletion diagnostics may fail in the presence of several similar outliers. Section 2 describes the forward search for regression models and illustrates several of its properties.

1 Regression Diagnostics

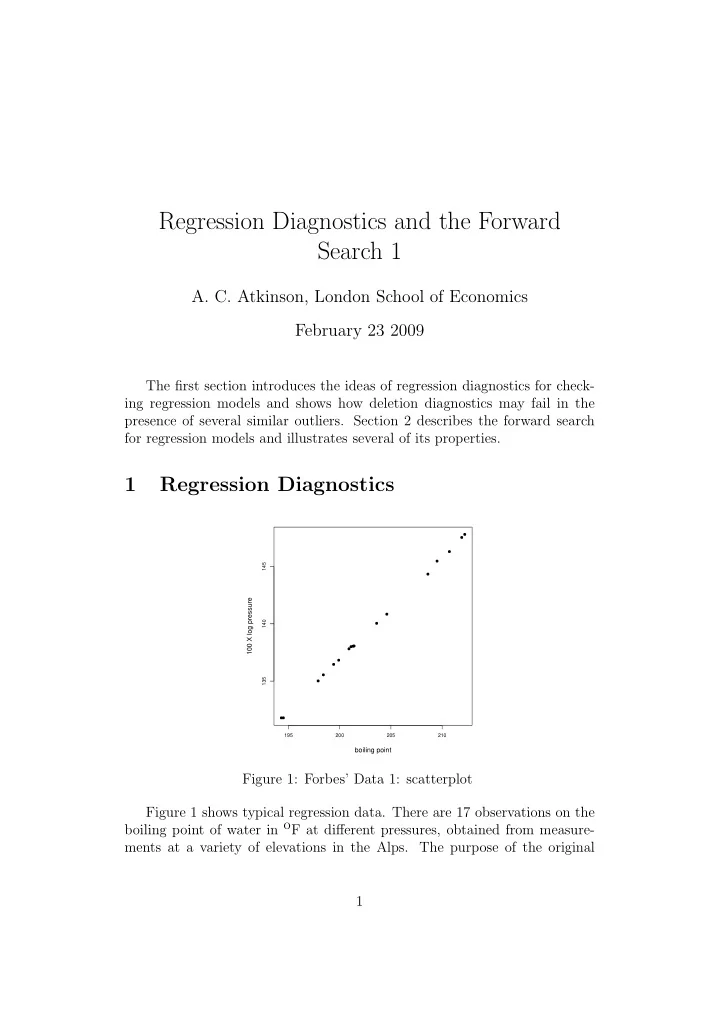

195 200 205 210 135 140 145

boiling point 100 X log pressure