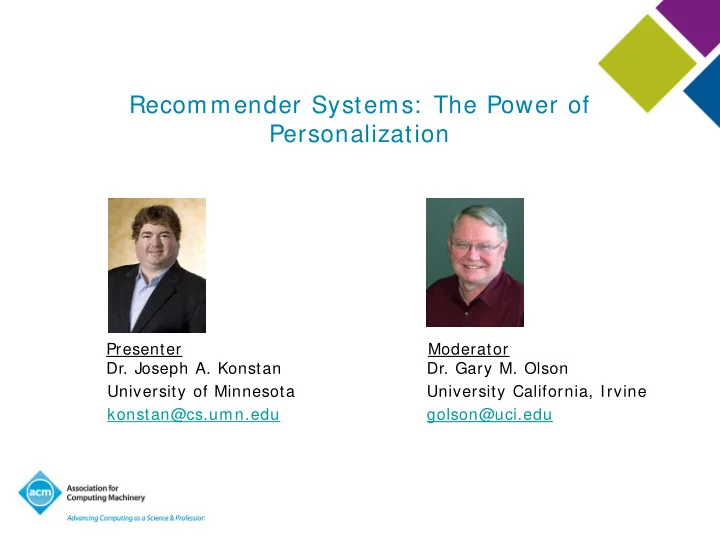

SLIDE 1 Recommender Systems: The Power of Personalization

Presenter

- Dr. Joseph A. Konstan, University of Minnesota, konstan@cs.umn.edu

Moderator

- Dr. Gary M. Olson, University of California, Irvine, golson@uci.edu

SLIDE 2 ACM Learning Center (http://learning.acm.org)

- 1,300+ trusted technical books and videos by leading publishers including O’Reilly, Morgan Kaufmann, others

- Online courses with assessments and certification-track mentoring, member discounts at partner institutions

- Learning Webinars on big topics (Cloud Computing/Mobile Development, Cybersecurity, Big Data)

- ACM Tech Packs on big current computing topics: Annotated Bibliographies compiled by subject experts

- Learning Paths (accessible entry points into popular languages)

- Popular video tutorials/keynotes from ACM Digital Library, Podcasts with industry leaders/award winners

SLIDE 3

SLIDE 4 A Bit of History

- Ants, Cavemen, and Early Recommender Systems

– The emergence of critics

- Information Retrieval and Filtering

- Manual Collaborative Filtering

- Automated Collaborative Filtering

- The Commercial Era

SLIDE 5 A Bit of History


SLIDE 6 Information Retrieval

– Invest time in indexing content

– Queries presented in “real time”

term frequency, inverse document frequency (TF-IDF)

– Rank documents by term overlap

– Rank terms by frequency
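The IR recipe on this slide (index the content up front, then answer queries in real time by weighted term overlap) can be sketched as below. This is a minimal illustration only: the whitespace tokenizer, the `log(N/df)` form of IDF, the function names, and the three sample documents are all assumptions, not something taken from the talk.

```python
import math
from collections import Counter

def tokenize(text):
    """Lowercase and split on whitespace (a deliberately naive tokenizer)."""
    return text.lower().split()

def build_index(docs):
    """Index time: term frequencies per document and IDF per term."""
    tfs = {doc_id: Counter(tokenize(text)) for doc_id, text in docs.items()}
    doc_freq = Counter(term for tf in tfs.values() for term in tf)
    n_docs = len(docs)
    idf = {term: math.log(n_docs / df) for term, df in doc_freq.items()}
    return tfs, idf

def rank(query, tfs, idf):
    """Query time: score documents by the summed TF-IDF of overlapping terms."""
    terms = tokenize(query)
    scores = {}
    for doc_id, tf in tfs.items():
        score = sum(tf[t] * idf.get(t, 0.0) for t in terms)
        if score > 0:
            scores[doc_id] = score
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical mini-collection.
docs = {
    "d1": "collaborative filtering for usenet news",
    "d2": "news retrieval with keyword queries",
    "d3": "filtering news news news",
}
tfs, idf = build_index(docs)
print(rank("collaborative filtering", tfs, idf))
```

Note that a term appearing in every document (here "news") gets an IDF of zero under this weighting, so it contributes nothing to the ranking.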

SLIDE 7

SLIDE 8

SLIDE 9 Information Filtering

- Reverse assumptions from IR

– Static information need

– Dynamic content base

- Invest effort in modeling user need

– Hand-created “profile”

– Machine learned profile

– Feedback/updates

- Pass new content through filters
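A small sketch of the filtering idea above: a standing profile is built once, new content is passed through it, and feedback adjusts it. The `ProfileFilter` class, its keyword weights, threshold, and step size are hypothetical choices made for illustration; real filtering systems typically use richer learned models.

```python
class ProfileFilter:
    """A standing user profile that incoming documents are passed through."""

    def __init__(self, keywords, threshold=1.0, step=0.5):
        # Hand-created profile: every starting keyword gets weight 1.0.
        self.weights = {k: 1.0 for k in keywords}
        self.threshold = threshold
        self.step = step

    def score(self, text):
        """Sum the profile weights of the distinct words in the text."""
        words = set(text.lower().split())
        return sum(self.weights.get(w, 0.0) for w in words)

    def accepts(self, text):
        """Let a document through if it scores at or above the threshold."""
        return self.score(text) >= self.threshold

    def feedback(self, text, liked):
        """Nudge the weight of every word in the text up or down."""
        delta = self.step if liked else -self.step
        for w in set(text.lower().split()):
            self.weights[w] = self.weights.get(w, 0.0) + delta

profile = ProfileFilter(["linux", "kernel"])
stream = [
    "new linux kernel released",
    "cookie recipes for winter",
    "kernel recipes talk",
]
passed = [doc for doc in stream if profile.accepts(doc)]

# The user dislikes the third item, so similar content is filtered out later.
profile.feedback("kernel recipes talk", liked=False)
```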

SLIDE 10

SLIDE 11 A Bit of History


SLIDE 12 Collaborative Filtering

– Information needs more complex than keywords or topics: quality and taste

– Tapestry: database of content & comments

– Active CF: easy mechanisms for forwarding content to relevant readers

SLIDE 13 A Bit of History


SLIDE 14 Automated CF

- The GroupLens Project (CSCW ’94)

– ACF for Usenet News

- users rate items

- users are correlated with other users

- personal predictions for unrated items

– Nearest-Neighbor Approach

- find people with history of agreement

- assume stable tastes
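The "users are correlated with other users" step can be illustrated with a Pearson correlation over co-rated items, which is the kind of agreement measure a nearest-neighbor approach relies on. The sketch below is an assumption-laden illustration: the `pearson` helper, the user names, and the 1-5 ratings are hypothetical, and returning 0.0 when fewer than two items overlap is a simplifying choice.

```python
import math

def pearson(ratings_a, ratings_b):
    """Pearson correlation over items both users rated; 0.0 if undefined."""
    common = sorted(set(ratings_a) & set(ratings_b))
    if len(common) < 2:
        return 0.0
    a = [ratings_a[i] for i in common]
    b = [ratings_b[i] for i in common]
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)

# Hypothetical 1-5 ratings of articles, keyed by article id.
ratings = {
    "ann": {"a1": 5, "a2": 1, "a3": 4},
    "bob": {"a1": 4, "a2": 2, "a3": 5, "a4": 1},
    "cat": {"a1": 1, "a2": 5, "a3": 2, "a4": 5},
}
agree = pearson(ratings["ann"], ratings["bob"])     # history of agreement
disagree = pearson(ratings["ann"], ratings["cat"])  # history of disagreement
```

Here `agree` is strongly positive and `disagree` is close to -1, so under the stable-tastes assumption bob would be a useful neighbor for ann and cat would not.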

SLIDE 15

Usenet Interface

SLIDE 16 Does it Work?

- Yes: The numbers don’t lie!

– Usenet trial: rating/prediction correlation

- rec.humor: 0.62 (personalized) vs. 0.49 (avg.)

- comp.os.linux.system: 0.55 (pers.) vs. 0.41 (avg.)

- rec.food.recipes: 0.33 (pers.) vs. 0.05 (avg.)

– Significantly more accurate than predicting average or modal rating

– Higher accuracy when partitioned by newsgroup

SLIDE 17 It Works Meaningfully Well!

- Relationship with User Behavior

– Twice as likely to read 4/5 than 1/2/3

– Some users stayed 12 months after the trial!

SLIDE 18 A Bit of History


SLIDE 19

SLIDE 20

SLIDE 21

Amazon.com

SLIDE 22

SLIDE 23 Recommenders

- Tools to help identify worthwhile stuff

– Filtering interfaces

- E-mail filters, clipping services

– Recommendation interfaces

- Suggestion lists, “top-n,” offers and promotions

– Prediction interfaces

- Evaluate candidates, predicted ratings

SLIDE 24 Historical Challenges

- Collecting Opinion and Experience Data

- Finding the Relevant Data for a Purpose

- Presenting the Data in a Useful Way

SLIDE 25

Recommender Application Space

SLIDE 26 Scope of Recommenders

- Purely Editorial Recommenders

- Content Filtering Recommenders

- Collaborative Filtering Recommenders

- Hybrid Recommenders

SLIDE 27 Recommender Application Space

– Domain

– Purpose

– Whose Opinion

– Personalization Level

– Privacy and Trustworthiness

– Interfaces

– <Algorithms Inside>

SLIDE 28 Domains of Recommendation

– News, information, “text”

– Products, vendors, bundles

SLIDE 29

Google: Content Example

SLIDE 30


SLIDE 31 Purposes of Recommendation

- The recommendations themselves

– Sales

– Information

- Education of user/customer

- Build a community of users/customers around products or content

SLIDE 32

Buy.com customers also bought

SLIDE 33

Epinions Sienna overview

SLIDE 34

OWL Tips

SLIDE 35

ReferralWeb

SLIDE 36 Whose Opinion?

- “Experts”

- Ordinary “phoaks”

- People like you

SLIDE 37

Wine.com Expert recommendations

SLIDE 38

PHOAKS

SLIDE 39 Personalization Level

– Everyone receives same recommendations

– Matches a target group

– Matches current activity

– Matches long-term interests

SLIDE 40

Lands’ End

SLIDE 41

Brooks Brothers

SLIDE 42

Amazon.com

SLIDE 43

CDNow Album advisor

SLIDE 44

CDNow Album advisor recommendations

SLIDE 45

SLIDE 46 Privacy and Trustworthiness

– Personal information revealed

– Identity

– Deniability of preferences

- Is the recommendation honest?

– Biases built-in by operator

– Vulnerability to external manipulation

SLIDE 47 Interfaces

– Predictions

– Recommendations

– Filtering

– Organic vs. explicit presentation

- Agent/Discussion Interface Example

- Types of Input

– Explicit

– Implicit

SLIDE 48 Wide Range of Algorithms

- Simple Keyword Vector Matches

- Pure Nearest-Neighbor Collaborative Filtering

- Machine Learning on Content or Ratings

SLIDE 49

Collaborative Filtering: Techniques and Issues

SLIDE 50 Collaborative Filtering Algorithms

- Non-Personalized Summary Statistics

- K-Nearest Neighbor

- Dimensionality Reduction

- Content + Collaborative Filtering

- Graph Techniques

- Clustering

- Classifier Learning

SLIDE 51 Teaming Up to Find Cheap Travel

– “data it gathers anyway”

– (Mostly) no cost to helper

– Valuable information that is otherwise hard to acquire

– Little processing, lots of collaboration

SLIDE 52

Expedia Fare Compare #1

SLIDE 53

Expedia Fare Compare #2

SLIDE 54

Zagat Guide Amsterdam Overview

SLIDE 55

Zagat Guide Detail

SLIDE 56 Zagat: Is Non-Personalized Good Enough?

- What happened to my favorite guide?

– They let you rate the restaurants!

– Personalized guides, from the people who “know good restaurants!”
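For contrast with the personalized approaches that follow, a non-personalized summary statistic can be as simple as the mean score per item, which is the same for every reader. The sketch below assumes hypothetical `(user, restaurant, score)` triples on a 0-30 scale and a hypothetical `min_count` cutoff; none of these details come from the slides.

```python
from collections import defaultdict

def average_ratings(ratings, min_count=1):
    """Mean rating per item over all raters; identical for every user."""
    totals = defaultdict(lambda: [0.0, 0])  # item -> [sum, count]
    for _user, item, value in ratings:
        totals[item][0] += value
        totals[item][1] += 1
    return {item: s / n for item, (s, n) in totals.items() if n >= min_count}

# Hypothetical (user, restaurant, score) triples.
ratings = [
    ("u1", "Cafe A", 24), ("u2", "Cafe A", 20),
    ("u1", "Bistro B", 15), ("u3", "Bistro B", 27), ("u2", "Bistro B", 18),
    ("u3", "Diner C", 28),
]
summary = average_ratings(ratings, min_count=2)
top = sorted(summary, key=summary.get, reverse=True)
```

Requiring a minimum number of raters keeps a single enthusiastic review from topping the list, at the cost of hiding rarely rated items.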

SLIDE 57 Collaborative Filtering Algorithms

- Non-Personalized Summary Statistics

- K-Nearest Neighbor

– user-user

– item-item

- Dimensionality Reduction

- Content + Collaborative Filtering

- Graph Techniques

- Clustering

- Classifier Learning

SLIDE 58

CF Classic: K-Nearest Neighbor User-User

C.F. Engine

Ratings Correlations

SLIDE 59

CF Classic: Submit Ratings

C.F. Engine

Ratings Correlations ratings

SLIDE 60

CF Classic: Store Ratings

C.F. Engine

Ratings Correlations ratings

SLIDE 61

CF Classic: Compute Correlations

C.F. Engine

Ratings Correlations pairwise corr.

SLIDE 62

CF Classic: Request Recommendations

C.F. Engine

Ratings Correlations request

SLIDE 63

CF Classic: Identify Neighbors

C.F. Engine

Ratings Correlations find good … Neighborhood

SLIDE 64

CF Classic: Select Items; Predict Ratings

C.F. Engine

Ratings Correlations Neighborhood

predictions recommendations
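The last two stages of the pipeline above (identify neighbors, then predict ratings) might look like the following sketch. It takes the stored ratings and the pairwise correlations as given inputs. The mean-offset weighted sum, the rule of keeping only positively correlated neighbors, and all names and numbers are assumptions chosen for illustration rather than the engine's actual implementation.

```python
def predict(target, item, ratings, correlations, k=2):
    """Predict target's rating of item from the k best-correlated raters."""
    def mean(user):
        values = ratings[user].values()
        return sum(values) / len(values)

    # Neighborhood: users who rated the item, ranked by correlation.
    candidates = [
        (correlations.get((target, u), 0.0), u)
        for u in ratings
        if u != target and item in ratings[u]
    ]
    neighbors = sorted((c for c in candidates if c[0] > 0), reverse=True)[:k]
    if not neighbors:
        return mean(target)  # fall back to the user's own average

    # Weighted sum of each neighbor's deviation from their own mean.
    num = sum(w * (ratings[u][item] - mean(u)) for w, u in neighbors)
    den = sum(abs(w) for w, _ in neighbors)
    return mean(target) + num / den

# Hypothetical ratings and precomputed correlations with "joe".
ratings = {
    "joe": {"m1": 4, "m2": 2},
    "sue": {"m1": 5, "m2": 2, "m3": 5},
    "pat": {"m1": 2, "m2": 4, "m3": 1},
    "lee": {"m1": 4, "m2": 1, "m3": 4},
}
correlations = {("joe", "sue"): 1.0, ("joe", "pat"): -1.0, ("joe", "lee"): 0.5}
prediction = predict("joe", "m3", ratings, correlations)
```

Working with deviations from each neighbor's mean compensates for users who rate everything high or everything low.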

SLIDE 65

Understanding the Computation

Movies (columns): Hoop Dreams, Star Wars, Pretty Woman, Titanic, Blimp, Rocky XV

Joe: D A B D ? ?

John: A F D F

Susan: A A A A A A

Pat: D A C

Jean: A C A C A

Ben: F A F

Nathan: D A A

SLIDE 66

SLIDE 67

SLIDE 68

SLIDE 69

SLIDE 70

SLIDE 71

SLIDE 72

ML-home

SLIDE 73

ML-scifi-search

SLIDE 74

ML-clist

SLIDE 75

ML-rate

SLIDE 76

ML-search

SLIDE 77

ML-buddies

SLIDE 78

User-User Collaborative Filtering

[Figure: Target Customer with unknown rating “?”, predicted by Weighted Sum]

SLIDE 79 A Challenge: Sparsity

- Many E-commerce and content applications have many more customers than products

- Many customers have no relationship

- Most products have some relationship
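To make the sparsity problem concrete, the sketch below measures how full a ratings matrix is and lists the customer pairs that share no rated product, for which no user-user correlation can be computed. The helper names and the tiny data set are hypothetical.

```python
from itertools import combinations

def density(ratings, n_items):
    """Fraction of user-item cells that actually hold a rating."""
    filled = sum(len(items) for items in ratings.values())
    return filled / (len(ratings) * n_items)

def unrelated_pairs(ratings):
    """User pairs with no co-rated item, so no correlation is available."""
    return [
        (a, b)
        for a, b in combinations(sorted(ratings), 2)
        if not set(ratings[a]) & set(ratings[b])
    ]

# Hypothetical ratings: 4 customers, 5 products.
ratings = {
    "u1": {"p1": 5, "p2": 3},
    "u2": {"p3": 4},
    "u3": {"p2": 2, "p4": 5},
    "u4": {"p5": 1},
}
fill = density(ratings, n_items=5)
no_overlap = unrelated_pairs(ratings)
```

In this toy data only one of the six customer pairs has any product in common, which is the situation that pushes neighbor-based methods toward item-item relationships.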

SLIDE 80 Another challenge: Synonymy

– Similar products treated differently

- Have skim milk? Want whole milk too?

– Increases apparent sparsity

– Results in poor quality

SLIDE 81

Item-Item Collaborative Filtering


SLIDE 82

Item-Item Collaborative Filtering


SLIDE 83

Item-Item Collaborative Filtering


SLIDE 84 Item Similarities

[Figure: ratings matrix with users 1, 2, u, m-1, m and items 1, 2, 3, i, j, n-1, n; the ratings R in columns i and j are used for the similarity computation s_{i,j} = ?]

SLIDE 85 Item-Item Matrix Formulation

[Figure: for users 1, 2, u, m and target item i, the 5 closest neighbors (ranked 1st to 5th) are picked from the item similarities s_{i,1}, s_{i,3}, s_{i,i-1}, s_{i,m}; the user's ratings R on those items feed prediction generation]

– weighted sum: raw scores for prediction generation

– regression-based: approximation based on linear regression

SLIDE 86 Item-Item Discussion

- Good quality, in sparse situations

- Promising for incremental model building

– Small quality degradation

- Nature of recommendations changes

– Big performance gain

SLIDE 87 Collaborative Filtering Algorithms

- Non-Personalized Summary Statistics

- K-Nearest Neighbor

- Dimensionality Reduction

– Singular Value Decomposition

– Factor Analysis

- Content + Collaborative Filtering

- Graph Techniques

- Clustering

- Classifier Learning

SLIDE 88 Dimensionality Reduction

– Used by the IR community

– Worked well with the vector space model

– Used Singular Value Decomposition (SVD)

– Term-document matching in feature space

– Captures latent association

– Reduced space is less noisy

SLIDE 89

SVD: Mathematical Background

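$$R_{m \times n} = U_{m \times r} \, S_{r \times r} \, V'_{r \times n}$$

$$R_k = U_k \, S_k \, V_k', \quad U_k \in \mathbb{R}^{m \times k}, \; S_k \in \mathbb{R}^{k \times k}, \; V_k' \in \mathbb{R}^{k \times n}$$

The reconstructed matrix $R_k = U_k S_k V_k'$ is the closest rank-k matrix to the original matrix R.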

SLIDE 90 SVD for Collaborative Filtering

- 1. Low dimensional representation (m x k and k x n matrices): O(m+n) storage requirement

- Prediction (m x n): product of the m x k and k x n matrices
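Once the ratings matrix has been reduced to an m x k user matrix and a k x n item matrix, a prediction is just a dot product of two k-vectors, and only (m+n)·k numbers need to be stored, which is O(m+n) for a fixed k. The sketch below assumes the factors are already available; the factor values, the function names, and storing the item matrix row-per-item are hypothetical choices for illustration.

```python
def predict(user_factors, item_factors, u, i):
    """Predicted rating = dot product of a user's and an item's k-vectors."""
    return sum(a * b for a, b in zip(user_factors[u], item_factors[i]))

def storage_cells(m, n, k):
    """Cells held by the two factor matrices versus the full m x n matrix."""
    return (m + n) * k, m * n

# Hypothetical k=2 factors: one row per user and one row per item.
user_factors = [[1.0, 0.5], [0.2, 1.5], [0.9, 0.1]]  # m = 3 users
item_factors = [[4.0, 1.0], [1.0, 2.0], [3.0, 0.0], [0.5, 2.5]]  # n = 4 items

p = predict(user_factors, item_factors, 0, 0)
reduced, full = storage_cells(m=1000, n=500, k=10)
```

With 1,000 users, 500 items, and k = 10, the factors take 15,000 cells instead of 500,000 for the dense matrix.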

SLIDE 91

Singular Value Decomposition

Reduce dimensionality of problem

– Results in small, fast model

– Richer Neighbor Network

Incremental Update

– Folding in

– Model Update

Trend

– Towards use of probabilistic LSI

SLIDE 92 Collaborative Filtering Algorithms

- Non-Personalized Summary Statistics

- K-Nearest Neighbor

- Dimensionality Reduction

- Content + Collaborative Filtering

- Graph Techniques

– Horting: Navigate Similarity Graph

- Clustering

- Classifier Learning

– Rule-Induction Learning

– Bayesian Belief Networks

SLIDE 93 Resources

– Recommender Systems: From Algorithms to User Experience (2012): http://www.grouplens.org/node/480

– Collaborative Filtering Recommender Systems (2011): http://www.grouplens.org/node/475

– Recommender Systems: An Introduction (2010) by Jannach et al.

– Recommender Systems Handbook (2010) by Ricci et al.

– LensKit: http://lenskit.grouplens.org

– MyMedia: http://www.mymediaproject.org

– Mahout: http://mahout.apache.org

SLIDE 94 ACM: The Learning Continues

- Questions about this webinar? learning@acm.org

- ACM Learning Center: http://learning.acm.org

- ACM SIGCHI: http://www.sigchi.org

- ACM Conference on Recommender Systems: http://recsys.acm.org