course overview

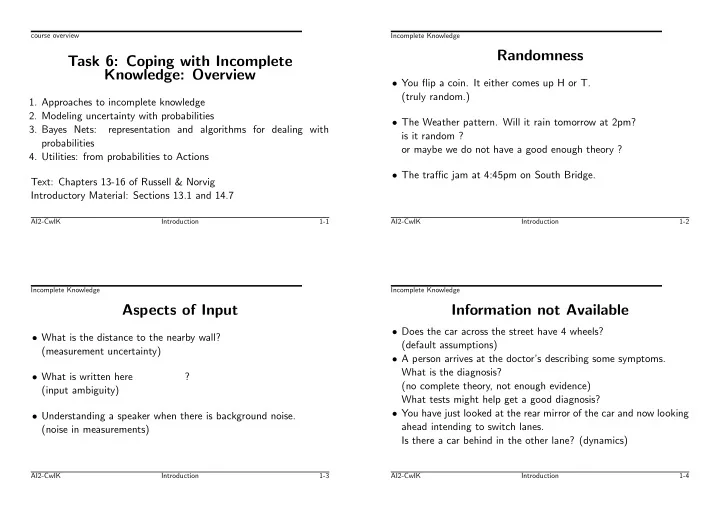

Task 6: Coping with Incomplete Knowledge: Overview

- 1. Approaches to incomplete knowledge

- 2. Modeling uncertainty with probabilities

- 3. Bayes nets: representation and algorithms for dealing with probabilities

- 4. Utilities: from probabilities to actions

Text: Chapters 13-16 of Russell & Norvig

Introductory material: Sections 13.1 and 14.7

AI2-CwIK Introduction 1-1

Incomplete Knowledge

Randomness

- You flip a coin. It comes up either H or T. (Truly random.)

- The weather pattern. Will it rain tomorrow at 2pm? Is it random? Or maybe we do not have a good enough theory?

- The traffic jam at 4:45pm on South Bridge.

Incomplete Knowledge

Aspects of Input

- What is the distance to the nearby wall? (measurement uncertainty)

- What is written here? (input ambiguity)

- Understanding a speaker when there is background noise. (noise in measurements)
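The measurement-uncertainty case above can be made concrete with a small simulation. This is a minimal sketch, not part of the original slides: the Gaussian noise model, the function names `read_distance` and `estimate_distance`, and the numbers (a wall 2.0 m away, 0.1 m noise) are all assumptions chosen for illustration. It shows that averaging repeated noisy readings gives an estimate together with a measure of how uncertain that estimate still is.

```python
import random
import statistics


def read_distance(true_distance_m, noise_std_m, rng):
    """Simulate one noisy range reading (assumed Gaussian sensor noise)."""
    return rng.gauss(true_distance_m, noise_std_m)


def estimate_distance(readings):
    """Return the sample mean and the standard error of that mean."""
    mean = statistics.fmean(readings)
    std_err = statistics.stdev(readings) / len(readings) ** 0.5
    return mean, std_err


# Hypothetical setup: the wall is 2.0 m away, the sensor noise is 0.1 m.
rng = random.Random(0)  # fixed seed so the run is repeatable
readings = [read_distance(2.0, 0.1, rng) for _ in range(100)]
mean, std_err = estimate_distance(readings)
print(f"estimated distance: {mean:.2f} m +/- {std_err:.2f} m")
```

The agent never observes the true distance; it only sees the readings, so the answer to "what is the distance?" is an estimate with an error bar rather than a single certain number.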

Incomplete Knowledge

Information not Available

- Does the car across the street have 4 wheels? (default assumptions)

- A person arrives at the doctor's describing some symptoms. What is the diagnosis? (no complete theory, not enough evidence) What tests might help get a good diagnosis?

- You have just looked in the car's rear-view mirror and are now looking ahead, intending to switch lanes. Is there a car behind in the other lane? (dynamics)
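The diagnosis example above hints at how probabilities, the topic of part 2, can represent partial evidence. The following is a minimal sketch under stated assumptions: the function name `posterior` and every number in it (a 1% prior, the symptom and test likelihoods) are hypothetical, not taken from the slides or from medical data. It also assumes the symptom and the test result are conditionally independent given the disease. It applies Bayes' rule twice to show how a symptom, and then a test, shift the belief in a diagnosis.

```python
def posterior(prior, p_evidence_given_h, p_evidence_given_not_h):
    """Bayes' rule for a binary hypothesis H after observing one piece of evidence."""
    numerator = p_evidence_given_h * prior
    evidence = numerator + p_evidence_given_not_h * (1.0 - prior)
    return numerator / evidence


# All numbers below are hypothetical, for illustration only.
prior = 0.01  # assumed probability of the disease before any evidence

# Symptom: assumed to occur in 90% of sick and 20% of healthy patients.
after_symptom = posterior(prior, 0.9, 0.2)

# Test: assumed 95% true-positive rate and 5% false-positive rate.
after_test = posterior(after_symptom, 0.95, 0.05)

print(f"prior:               {prior:.3f}")
print(f"after symptom:       {after_symptom:.3f}")
print(f"after positive test: {after_test:.3f}")
```

Under these assumed numbers the symptom alone leaves the diagnosis unlikely, which is one way to read "not enough evidence"; the test is useful because it moves the belief much further than the symptom does.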
