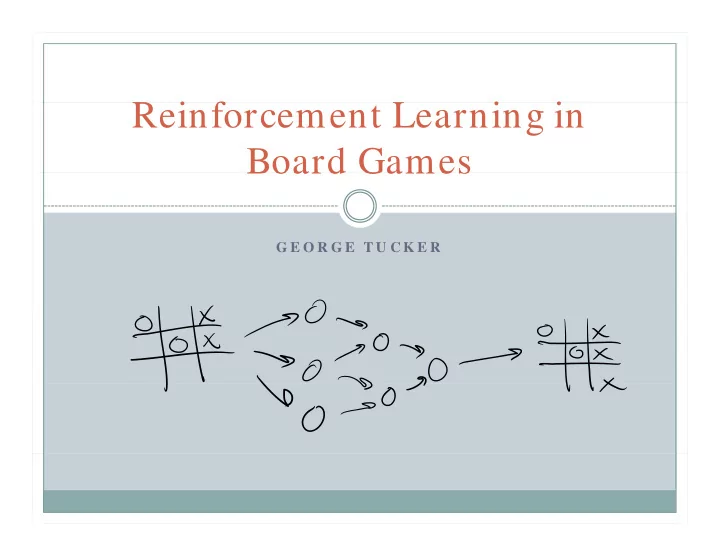

SLIDE 1 Reinforcement Learning in Board Games

George Tucker

SLIDE 2 Paper Background

Reinforcement learning in board games

Imran Ghory 2004

Surveys progress in last decade

Suggests improvements

Formalizes key game properties

Develops a TD-learning game system

SLIDE 3 Why board games?

Regarded as a sign of intelligence and learning

Chess

Games as simplified models

Battleship

Existing methods of comparison

Rating systems

SLIDE 4 What is reinforcement learning?

After a sequence of actions get a reward

Positive or negative

Temporal credit assignment problem

Determine credit for the reward

Temporal Difference Methods

TD-lambda
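
The credit-assignment idea can be illustrated with a minimal TD-lambda sketch. It assumes a linear value function over a feature vector per position and a single reward at the end of the game; the names `dot`, `td_lambda_episode`, `alpha`, and `lam` are illustrative and do not come from the paper.

```python
def dot(weights, features):
    """Linear value estimate: weighted sum of the position's features."""
    return sum(w * f for w, f in zip(weights, features))


def td_lambda_episode(weights, positions, reward, alpha=0.1, lam=0.7):
    """One TD(lambda) pass over a finished game (illustrative sketch).

    positions: list of feature vectors, one per position visited, in order.
    reward:    final outcome (e.g. +1 win, -1 loss), known only at the end.
    Assumes no discounting and a linear value function.
    """
    w = list(weights)
    trace = [0.0] * len(w)  # eligibility trace: decayed sum of past gradients
    for t, x in enumerate(positions):
        # For a linear value function the gradient is the feature vector itself.
        trace = [lam * e + xi for e, xi in zip(trace, x)]
        if t + 1 < len(positions):
            target = dot(w, positions[t + 1])  # bootstrap from the next position
        else:
            target = reward  # last position: the target is the reward
        delta = target - dot(w, x)
        w = [wi + alpha * delta * e for wi, e in zip(w, trace)]
    return w
```

With `lam=1.0` the final reward is credited equally to every earlier position; with `lam=0.0` only the last position is adjusted.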

SLIDE 5 History

Basics developed by Arthur Samuel

Checkers

Richard Sutton introduced TD-lambda

Gerald Tesauro creates TD-Gammon

Chess and Go

Worse than conventional AI

SLIDE 6 History

Othello

Contradictory results

Substantial growth since then

TD-lambda has potential to learn game variants

SLIDE 7 Conventional Strategies

Most methods use an evaluation function

Use minimax/alpha-beta search

Hand-designed feature detectors

Evaluation function is a weighted sum

So why TD learning?

Does not need hand coded features

Generalization
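
The conventional setup described on this slide can be sketched as a weighted-sum evaluation function plugged into alpha-beta search. The toy tree format (tuples of feature values as leaves, lists as internal nodes) and the names `evaluate` and `alphabeta` are assumptions made for illustration only.

```python
def evaluate(features, weights):
    """Evaluation function as a weighted sum of feature values."""
    return sum(w * f for w, f in zip(weights, features))


def alphabeta(node, weights, maximizing=True,
              alpha=float("-inf"), beta=float("inf")):
    """Alpha-beta search over a toy game tree.

    A node is either a tuple of feature values (a leaf position)
    or a list of child nodes. Players alternate at each level.
    """
    if isinstance(node, tuple):
        return evaluate(node, weights)
    best = float("-inf") if maximizing else float("inf")
    for child in node:
        score = alphabeta(child, weights, not maximizing, alpha, beta)
        if maximizing:
            best = max(best, score)
            alpha = max(alpha, best)
        else:
            best = min(best, score)
            beta = min(beta, best)
        if alpha >= beta:
            break  # the other player would never allow this line
    return best
```

In a conventional program both the features and the weights are chosen by hand; TD learning instead adjusts the weights (or a network replacing the sum) from game outcomes.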

SLIDE 8

Temporal Difference Learning

SLIDE 9

Temporal Difference Learning
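
For reference, the TD-lambda weight update in Sutton's formulation is commonly written as follows, where $V_w(s_t)$ is the evaluation of the position at move $t$, $\alpha$ is the learning rate, and $\lambda \in [0, 1]$ controls how far back credit is propagated. The notation here is an assumption and may differ from the symbols used in the paper.

```latex
\Delta w_t = \alpha \left( V_w(s_{t+1}) - V_w(s_t) \right)
             \sum_{k=1}^{t} \lambda^{t-k} \, \nabla_w V_w(s_k)
```

At the final move, $V_w(s_{t+1})$ is replaced by the game's reward.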

SLIDE 10 Disadvantage

Requires lots of training

Self-play

Short-term pathologies

Randomization

SLIDE 11 TD Algorithm Variants

TD-Leaf

Evaluation function search

TD-Directed

Minimax search

TD-Mu

Fixed opponent

Use evaluation function on opponent’s moves

SLIDE 12 Current State

Many improvements

Sparse and dubious validation

Hard to check

Tuning weights

Nonlinear combinations

Differentiate between effective and ineffective

Automated evolution method of feature generation

Turian

SLIDE 13 Important Game Properties

Board Smoothness

Capabilities tied to smoothness

Based on the board representation

Divergence rate

Measure how a single move changes the board

Backgammon and Chess – low to medium

Othello – high

Forced exploration

State space complexity

Longer training

Possibly the most important factor
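
The divergence rate bullet ("how a single move changes the board") can be made concrete with a small sketch. This is a simplification for illustration, not the paper's formal definition: it assumes each position is a flat sequence of squares and counts how many squares differ from one position to the next. The function name `divergence_rate` is hypothetical.

```python
def divergence_rate(positions):
    """Average number of squares that differ between consecutive positions.

    positions: list of equal-length sequences, one board encoding per move.
    Returns 0.0 when there are fewer than two positions.
    """
    if len(positions) < 2:
        return 0.0
    changes = [
        sum(a != b for a, b in zip(before, after))
        for before, after in zip(positions, positions[1:])
    ]
    return sum(changes) / len(changes)
```

A game where each move places one piece gives a rate of 1, while a game with captures that flip several pieces per move, as in Othello, gives a higher rate.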

SLIDE 14

Importance of State space complexity

SLIDE 15 Training Data

Random play

Limited use

Fixed opponent

Game environment and opponent are one

Database play

Speed

Self-play

No outside sources for data

Slow

Learns what works

Hybrid methods

SLIDE 16 Improvement: General

Reward size

Fixed value

Based on end board

Board encoding

When to learn?

Every move?

Random moves?

Repetitive learning

Board inversion

Batch learning

SLIDE 17 Improvement: Neural Network

Functions in Neural Network

Radial Basis Functions

Training algorithm

RPROP

Random weight initialization

Significance

SLIDE 18 Improvement: Self-play

Asymmetry

Game-tree + function approximator

Player handling

Tesauro adds an extra unit

Negate score (zero-sum game)

Reverse colors

Random moves

Algorithm

Informed final board evaluation
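
Two of the player-handling options listed above, negating the score and reversing colors, can be sketched with a single evaluator. The board encoding (+1 for our pieces, -1 for the opponent's, 0 for empty) and the function names are assumptions for illustration.

```python
def reverse_colors(board):
    """Swap the two players' pieces on a +1 / -1 / 0 encoded board."""
    return [-square for square in board]


def opponent_score_by_reversal(evaluate, board):
    """Score the position for the opponent by showing the evaluator
    the board with colors reversed."""
    return evaluate(reverse_colors(board))


def opponent_score_by_negation(evaluate, board):
    """Zero-sum shortcut: the opponent's score is minus our score."""
    return -evaluate(board)
```

For an evaluator that is a plain weighted sum with no bias term the two approaches give the same number; for a nonlinear network they can differ, so the choice is a real design decision.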

SLIDE 19 Evaluation

Tic-tac-toe and Connect 4

Amenable to TD-learning

Human board encoding is near optimal

Networks across multiple games

A general game player

Plays perfectly near end game

Randomly otherwise

Random-decay handicap

% of moves are random

Common system
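
The slide describes the handicap only as a percentage of random moves. One way such a handicapped player could pick its moves is sketched below; the function name `choose_move`, its parameters, and the idea of passing in a scoring function are assumptions, not details from the paper.

```python
import random


def choose_move(moves, score, random_fraction, rng=random):
    """Pick a uniformly random move with probability `random_fraction`,
    otherwise the move with the highest score.

    moves:           non-empty list of legal moves.
    score:           function mapping a move to a number (higher is better).
    random_fraction: value in [0, 1]; 0 means always play the best move.
    rng:             source of randomness (the random module or a random.Random).
    """
    if rng.random() < random_fraction:
        return rng.choice(moves)
    return max(moves, key=score)
```

Passing a seeded `random.Random` instance as `rng` makes test games reproducible.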

SLIDE 20

Random Initializations

Significant impact on learning

SLIDE 21

Inverted Board

Speeds up initial training

SLIDE 22

Random Move Selection

More sophisticated techniques are required

SLIDE 23

Reversed Color Evaluation

SLIDE 24

Batch Learning

Similar to control

SLIDE 25

Repetitive learning

No advantage

SLIDE 26

Informed Final Board Evaluation

Extremely significant

SLIDE 27

Conclusion

Inverted boards and reverse color evaluation

Initialization is important

Biased randomization techniques

Batch learning has promise

Informed final board evaluation is important
