SLIDE 1

Context

- Many researchers have tried to explain the predictions of convolutional neural networks (CNNs)

- Good overview: CVPR 2018 tutorial on Interpretable Machine Learning for Computer Vision

- https://interpretablevision.github.io/

- Studies show that existing gradient-based explanation methods are problematic

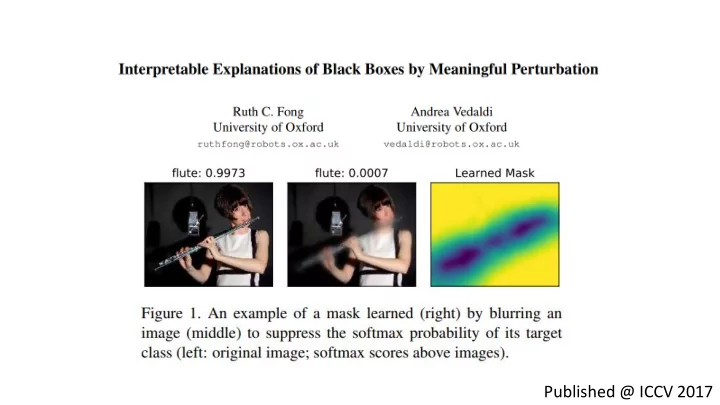

- Today: a 'good' explanation method
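For reference, the simplest kind of gradient-based explanation mentioned above is a vanilla gradient saliency map: the absolute derivative of the class score with respect to each input feature. The sketch below is a minimal illustration under stated assumptions, not the method presented today. The two-layer network `tiny_net` and the helper `gradient_saliency` are hypothetical stand-ins for a real CNN, and the gradient is derived by hand with the chain rule instead of using an autodiff framework.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def tiny_net(x, W1, w2):
    """Hypothetical two-layer network standing in for a CNN: score = w2 . relu(W1 @ x)."""
    return float(w2 @ relu(W1 @ x))

def gradient_saliency(x, W1, w2):
    """Vanilla gradient saliency: |d score / d x_i| for every input feature i."""
    pre = W1 @ x                    # hidden-layer pre-activations
    mask = (pre > 0).astype(float)  # ReLU derivative (taken as 0 at or below zero)
    grad = W1.T @ (w2 * mask)       # chain rule back to the input
    return np.abs(grad)             # magnitude only, as in a saliency map

# Random weights and input, purely for illustration.
rng = np.random.default_rng(0)
W1 = rng.normal(size=(8, 4))
w2 = rng.normal(size=8)
x = rng.normal(size=4)
print(gradient_saliency(x, W1, w2))
```

A real CNN would obtain the same quantity by backpropagating the class score to the input pixels; the hand-written chain rule here just keeps the sketch dependency-free beyond NumPy.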