
Chapter 11

Processes

The process architecture brings us closer to the system’s physical level. We focus on distribution and execution, and work with processes and objects as opposed to components and classes. We also deal with the physical devices that the system will be executed on and consider whether we need to coordinate shared resources. This process view complements the logical structuring expressed in the component architecture.

The process activity is structured according to two levels of abstraction. The first is the overall level, where we define the distribution of program components on the available system processors. The second level deals with the processes that structure collaboration among the objects present during execution.

The process activity is quickly complete if we are designing a stand-alone administrative system. However, the complexity of process-architecture design increases significantly for monitoring and control sys-

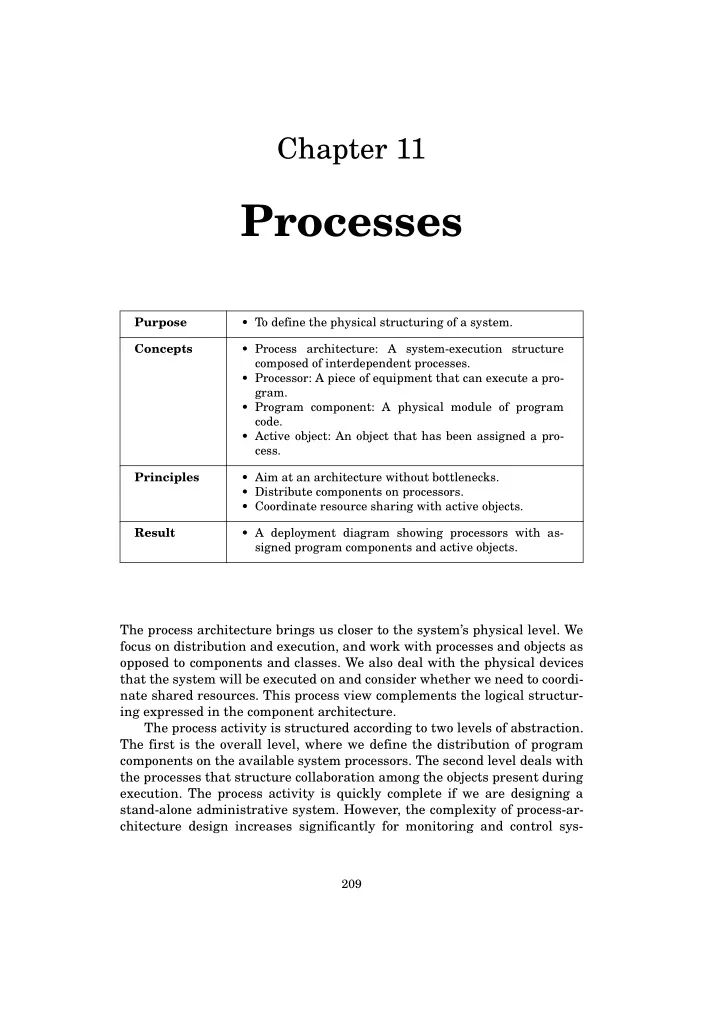

Purpose

- To define the physical structuring of a system.

Concepts

- Process architecture: A system-execution structure composed of interdependent processes.

- Processor: A piece of equipment that can execute a program.

- Program component: A physical module of program code.

- Active object: An object that has been assigned a process.

Principles

- Aim at an architecture without bottlenecks.

- Distribute components on processors.

- Coordinate resource sharing with active objects.

Result

- A deployment diagram showing processors with assigned program components and active objects.
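
The idea of an active object coordinating a shared resource can be illustrated with a small sketch. The Python code below is only one possible reading of the concept, not a method prescribed by this chapter: the names `ActiveCounter` and `demo` are hypothetical, a thread stands in for the "process" assigned to the object, and a simple counter stands in for the shared resource. Clients never touch the counter directly; they send requests that the active object handles one at a time.

```python
import queue
import threading


class ActiveCounter:
    """Hypothetical active object: an object that owns its own thread of control."""

    def __init__(self):
        self._requests = queue.Queue()  # incoming requests from client threads
        self._value = 0                 # shared resource, touched only by the worker
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def _run(self):
        # The worker handles one request at a time, so access is serialized.
        while True:
            amount, reply = self._requests.get()
            if amount is None:          # sentinel: report the final value and stop
                reply.put(self._value)
                return
            self._value += amount
            reply.put(self._value)

    def add(self, amount):
        """Ask the active object to add `amount`; block until it answers."""
        reply = queue.Queue(maxsize=1)
        self._requests.put((amount, reply))
        return reply.get()

    def stop(self):
        """Shut the worker down and return the final value."""
        reply = queue.Queue(maxsize=1)
        self._requests.put((None, reply))
        final = reply.get()
        self._worker.join()
        return final


def demo(clients=4, increments=100):
    """Run several client threads against one active object."""
    counter = ActiveCounter()

    def client():
        for _ in range(increments):
            counter.add(1)

    threads = [threading.Thread(target=client) for _ in range(clients)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.stop()


if __name__ == "__main__":
    print(demo())
```

Because every update passes through the single worker, the clients need no locks of their own. The trade-off is that the active object can itself become a bottleneck if too many clients depend on it, which is the concern the first principle raises.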