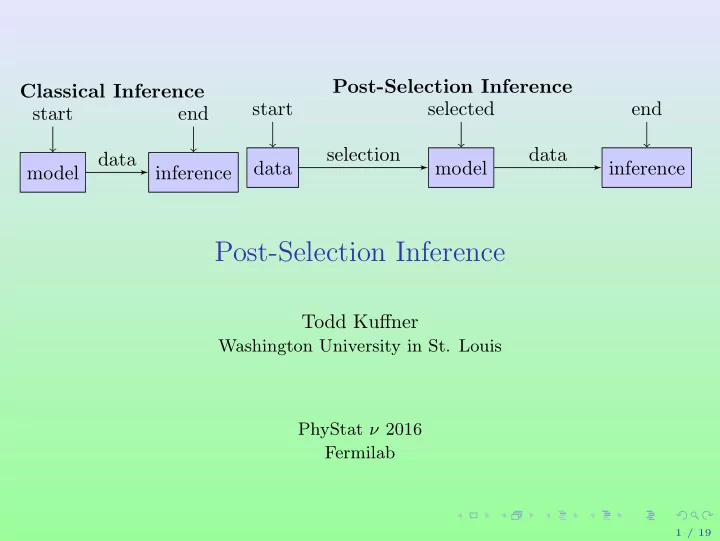

[Figure: two flow diagrams. Classical Inference, with nodes start, model, data, inference, end. Post-Selection Inference, with nodes start, data, selection, selected model, inference, end.]

Post-Selection Inference

Todd Kuffner

Washington University in St. Louis PhyStat ν 2016 Fermilab


$\inf_{0<\alpha<1}\{\alpha : S(X) \in R_\alpha\}$.
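
As a hedged illustration, the expression above can be read as the usual p-value definition: the smallest level α whose rejection region R_α contains the observed statistic S(X). The leading operator did not survive extraction, so the infimum reading is an assumption. The sketch below also assumes a one-sided z-test with R_α = {s : s > z_{1-α}}; the function name `p_value_by_infimum` and the grid search are illustrative choices, not taken from the slides.

```python
from statistics import NormalDist


def p_value_by_infimum(s, grid_size=100_000):
    """Smallest alpha on a grid whose rejection region {s > z_(1-alpha)} contains s.

    Assumes a one-sided z-test; the regions are nested, so the first alpha
    that rejects is the grid approximation of the infimum.
    """
    std_normal = NormalDist()
    for i in range(1, grid_size):
        alpha = i / grid_size
        if s > std_normal.inv_cdf(1 - alpha):
            return alpha
    return 1.0


s_obs = 1.7                               # hypothetical observed statistic
p_grid = p_value_by_infimum(s_obs)        # infimum over the alpha grid
p_exact = 1 - NormalDist().cdf(s_obs)     # closed-form upper-tail probability
print(p_grid, p_exact)
```

For this family of rejection regions the grid infimum agrees with the closed-form tail probability up to the grid resolution, which is why the two definitions are used interchangeably.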

0<α<1{α : ˜

i.i.d.

i=1 Xi

$\chi^2_1$ (under null)

$\chi^2_1$ variable, when $\beta_k = 0 \ \forall k = 1, \ldots, p$.

$\chi^2_1$ distribution; dotted line is the 0.95 quantile of $\chi^2_1$.

$\chi^2_1$ quantile (3.84) has an actual type I error probability of 0.39.
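
The inflated error rate quoted above (a nominal cutoff of 3.84 with an actual type I error near 0.39) can be illustrated with a small simulation. This is a minimal sketch and not the setup behind the slide, whose details did not survive extraction. It assumes n = 100 observations, p = 10 orthonormal predictors, known noise variance 1, and all coefficients equal to zero; the function name `naive_type_one_error` is hypothetical. Under these assumptions the statistic for the first variable chosen by forward selection is the maximum of p independent chi-squared(1) draws, so the rejection probability is 1 − 0.95^10 ≈ 0.40.

```python
import numpy as np


def naive_type_one_error(n=100, p=10, n_sim=20_000, cutoff=3.841, seed=0):
    """Monte Carlo rejection rate of the naive chi-squared(1) test applied
    to the first variable picked by forward selection under the global null.

    Assumptions (not from the slides): orthonormal design, known variance 1.
    """
    rng = np.random.default_rng(seed)
    # Orthonormal columns from the reduced QR factorisation of a Gaussian matrix.
    X, _ = np.linalg.qr(rng.standard_normal((n, p)))
    # Global null: every response vector is pure noise.
    Y = rng.standard_normal((n_sim, n))
    # With orthonormal columns, adding variable j lowers the RSS by (x_j^T y)^2;
    # the first forward-selection step takes the largest of these drops.
    drops = (Y @ X) ** 2
    first_step_stat = drops.max(axis=1)
    return float(np.mean(first_step_stat > cutoff))


rate = naive_type_one_error()
exact = 1 - 0.95 ** 10   # analytic value under the same assumptions
print(f"simulated: {rate:.3f}, analytic: {exact:.3f}")
```

The point of the sketch is that the cutoff is calibrated for a single pre-specified variable, while selection reports the best of p candidates, so the nominal 5% level no longer holds.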

1 n0

p

M
