Grammatical Inference 2005

c d l h

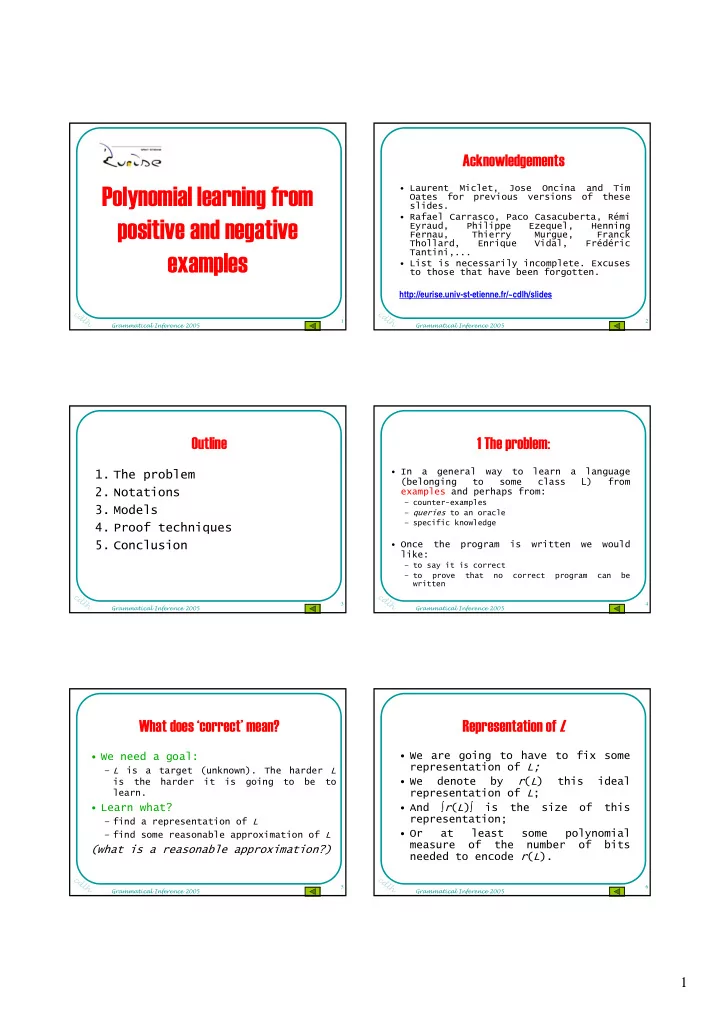

Polynomial learning from positive and negative examples

Acknowledgements

- Laurent Miclet, Jose Oncina and Tim Oates for previous versions of these slides.

- Rafael Carrasco, Paco Casacuberta, Rémi Eyraud, Philippe Ezequel, Henning Fernau, Thierry Murgue, Franck Thollard, Enrique Vidal, Frédéric Tantini,...

- The list is necessarily incomplete. Apologies to those who have been forgotten.

http://eurise.univ-st-etienne.fr/~cdlh/slides

Outline

- 1. The problem

- 2. Notations

- 3. Models

- 4. Proof techniques

- 5. Conclusion

1 The problem:

- In general, the task is to learn a language (belonging to some class L) from examples, and perhaps also from:

  – counter-examples

  – queries to an oracle

  – specific knowledge

- Once the program is written we would like:

  – to say it is correct

  – to prove that no correct program can be written
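To make "learning from examples and counter-examples" concrete, here is a minimal sketch of the weakest requirement one might place on a learner's output: consistency with the data. The sample, the two candidate hypotheses and the helper name `is_consistent` are all illustrative assumptions, not part of the original slides.

```python
def is_consistent(hypothesis, positives, negatives):
    """True if the hypothesis accepts every example and rejects every counter-example."""
    return (all(hypothesis(w) for w in positives)
            and not any(hypothesis(w) for w in negatives))


# Hypothetical sample over the alphabet {a, b}.
positives = ["", "aa", "abab"]   # examples: strings in the target language
negatives = ["a", "ab", "bba"]   # counter-examples: strings outside it


def even_number_of_a(word):
    # Candidate hypothesis: words with an even number of a's.
    return word.count("a") % 2 == 0


def accept_everything(word):
    # Trivial hypothesis: only counter-examples can rule it out.
    return True


print(is_consistent(even_number_of_a, positives, negatives))
print(is_consistent(accept_everything, positives, negatives))
```

Consistency alone does not make a learner correct: many different languages can agree with the same finite sample, which is why a precise notion of correctness is needed.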

What does ‘correct’ mean?

- We need a goal:

  – L is a target (unknown). The harder L is, the harder it is going to be to learn.

- Learn what?

  – find a representation of L

  – find some reasonable approximation of L (what is a reasonable approximation?)

Representation of L

- We are going to have to fix some representation of L;

- We denote by r(L) this ideal representation of L;

- And ‖r(L)‖ is the size of this representation;

- Or at least some polynomial measure of the number of bits needed to encode r(L).
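As an illustration of the size measure, the sketch below assumes that r(L) is a complete deterministic finite automaton (the slides above do not fix a particular representation) and compares two candidate values for ‖r(L)‖: the number of states, and the bit count of one simple encoding. The class `DFA` and both size functions are hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from math import ceil, log2


@dataclass(frozen=True)
class DFA:
    """A complete DFA with states numbered 0 .. n_states - 1 (assumed representation)."""
    n_states: int
    alphabet: tuple
    delta: dict            # maps (state, symbol) -> state
    accepting: frozenset
    start: int = 0

    def accepts(self, word):
        state = self.start
        for symbol in word:
            state = self.delta[(state, symbol)]
        return state in self.accepting


def size_in_states(dfa):
    # One common choice for ||r(L)||: the number of states.
    return dfa.n_states


def size_in_bits(dfa):
    # A simple encoding: one target state per (state, symbol) pair,
    # plus one accepting/rejecting bit per state.
    bits_per_state = max(1, ceil(log2(dfa.n_states)))
    transition_bits = dfa.n_states * len(dfa.alphabet) * bits_per_state
    accepting_bits = dfa.n_states
    return transition_bits + accepting_bits


# Example target: words over {a, b} with an even number of a's.
even_a = DFA(
    n_states=2,
    alphabet=("a", "b"),
    delta={(0, "a"): 1, (0, "b"): 0, (1, "a"): 0, (1, "b"): 1},
    accepting=frozenset({0}),
)

print(size_in_states(even_a), size_in_bits(even_a))
```

For a fixed alphabet, the bit count of this encoding grows as n log n in the number of states n, so the two measures are polynomially related and either can serve as ‖r(L)‖.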