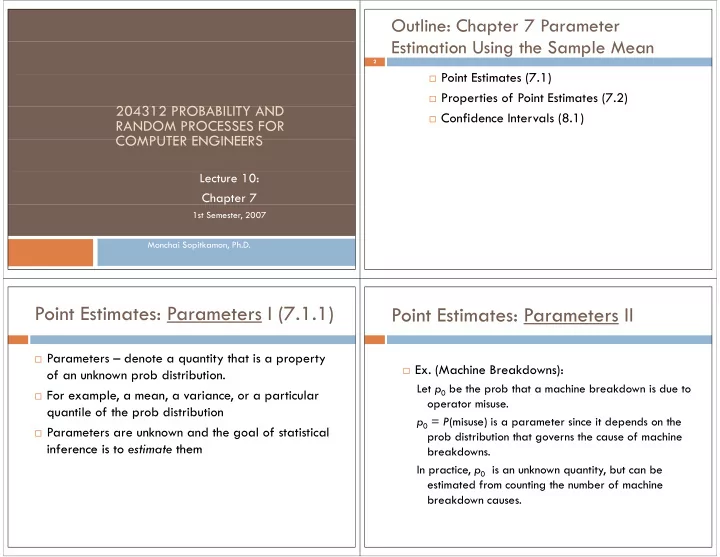

204312 PROBABILITY AND RANDOM PROCESSES FOR COMPUTER ENGINEERS

Lecture 10: Chapter 7

1st Semester, 2007 Monchai Sopitkamon, Ph.D.

Outline: Chapter 7 Parameter Estimation Using the Sample Mean

Point Estimates (7.1)

Properties of Point Estimates (7.2)

Confidence Intervals (8.1)

Point Estimates: Parameters I (7.1.1)

Parameters denote quantities that are properties of an unknown probability distribution.

For example, a mean, a variance, or a particular quantile of the probability distribution.

Parameters are unknown, and the goal of statistical inference is to estimate them.
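As a minimal sketch of what estimating a parameter means, the Python snippet below simulates data from a distribution whose mean and standard deviation are known only because we choose them, then computes sample-based estimates of the mean, the variance, and the median (the 0.5 quantile). The normal distribution, the values `TRUE_MEAN` and `TRUE_SD`, the sample size, and the helper name `point_estimates` are all illustrative assumptions, not part of the lecture.

```python
import random
import statistics


def point_estimates(sample):
    """Return sample-based estimates of the mean, variance, and median."""
    return {
        "mean": statistics.mean(sample),
        "variance": statistics.variance(sample),  # sample variance (n - 1 divisor)
        "median": statistics.median(sample),      # estimate of the 0.5 quantile
    }


# Hypothetical "true" parameters. In practice these are unknown;
# they are fixed here only so the estimates can be compared against them.
TRUE_MEAN, TRUE_SD = 10.0, 2.0

rng = random.Random(0)  # seeded for reproducibility
sample = [rng.gauss(TRUE_MEAN, TRUE_SD) for _ in range(5000)]

estimates = point_estimates(sample)
print(estimates)
```

With a sample this large, the printed estimates should land close to the assumed mean of 10, variance of 4, and median of 10, but they will not match exactly: each estimate is itself a random quantity that depends on the sample drawn.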

Point Estimates: Parameters II

- Ex. (Machine Breakdowns):

Let p0 be the probability that a machine breakdown is due to operator misuse.