Multithreaded Parallelism and Performance Measures

Marc Moreno Maza

University of Western Ontario, London, Ontario (Canada)

CS 4435 - CS 9624

(Moreno Maza) Multithreaded Parallelism and Performance Measures CS 4435 - CS 9624 1 / 62

Plan

1. Parallelism Complexity Measures
2. cilk_for Loops
3. Scheduling Theory and Implementation
4. Measuring Parallelism in Practice
5. Announcements


Parallelism Complexity Measures

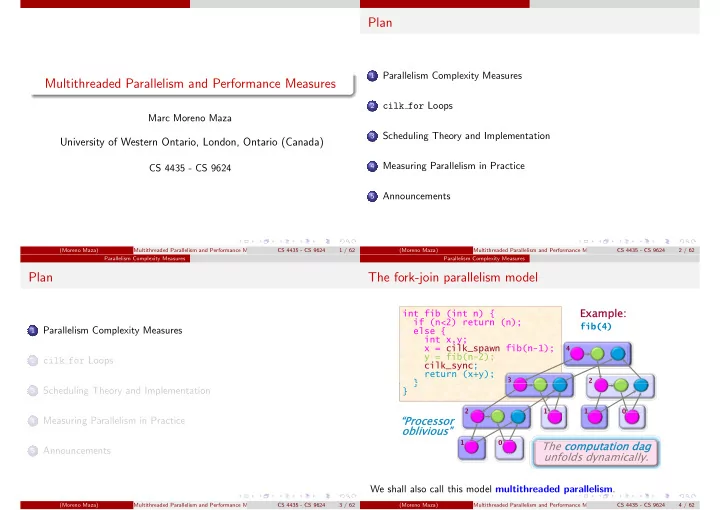

The fork-join parallelism model

Example: fib(4)

    int fib (int n) {
      if (n<2) return (n);
      else {
        int x,y;
        x = cilk_spawn fib(n-1);
        y = fib(n-2);
        cilk_sync;
        return (x+y);
      }
    }

"Processor oblivious"

[Figure: the computation dag for fib(4)]

The computation dag unfolds dynamically.

We shall also call this model multithreaded parallelism.
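
For readers without a Cilk compiler, the fork-join structure of fib can be approximated in standard C++. This is only a sketch under stated assumptions: `std::async` stands in for `cilk_spawn` and `std::future::get` stands in for `cilk_sync`. Unlike the Cilk runtime, `std::launch::async` starts a new thread for every spawned call, so this mirrors the shape of the computation dag and not the Cilk scheduler or its performance. Keep `n` small.

```cpp
#include <cassert>
#include <future>

// Fork-join Fibonacci in standard C++ (an illustrative stand-in, not Cilk).
// Assumption: std::async plays the role of cilk_spawn and
// future::get() plays the role of cilk_sync.
int fib(int n) {
    assert(n >= 0);          // the recursion is only defined for n >= 0
    if (n < 2) return n;     // base case, same as in the slide

    // "Spawn": the child computing fib(n-1) may run in parallel
    // with the rest of this function (the continuation).
    std::future<int> x = std::async(std::launch::async, fib, n - 1);

    // The parent itself computes fib(n-2) while the child runs.
    int y = fib(n - 2);

    // "Sync": wait for the spawned child before combining the results.
    return x.get() + y;
}
```

Calling `fib(4)` unfolds the same dag as the example above: one spawned child per call with n >= 2, joined before the return. On some toolchains the program must be built with thread support enabled (for example `-pthread` with GCC or Clang).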
