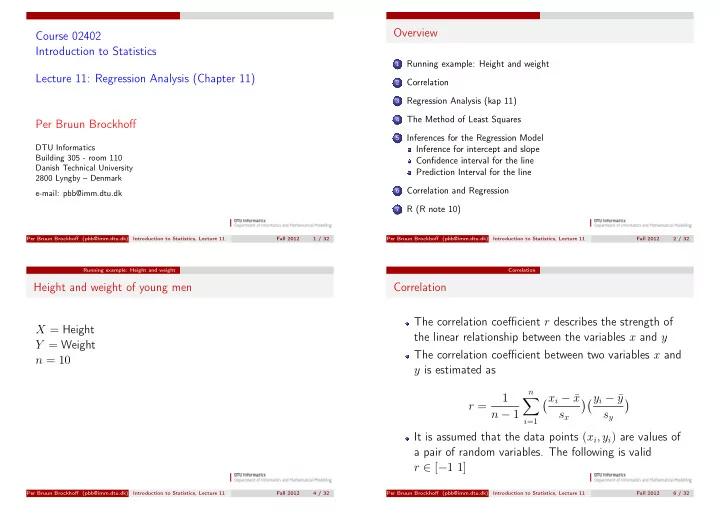

Course 02402 Introduction to Statistics
Lecture 11: Regression Analysis (Chapter 11)

Per Bruun Brockhoff
DTU Informatics, Building 305, room 110
Danish Technical University
2800 Lyngby, Denmark
e-mail: pbb@imm.dtu.dk

Per Bruun Brockhoff (pbb@imm.dtu.dk) Introduction to Statistics, Lecture 11 Fall 2012 1 / 32

Overview

1. Running example: Height and weight
2. Correlation
3. Regression Analysis (Chapter 11)
4. The Method of Least Squares
5. Inferences for the Regression Model
   - Inference for intercept and slope
   - Confidence interval for the line
   - Prediction interval for the line
6. Correlation and Regression
7. R (R note 10)

Running example: Height and weight

Height and weight of young men, with X = height, Y = weight and sample size n = 10.

Correlation

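The correlation coefficient r describes the strength of the linear relationship between the variables x and y. The correlation coefficient between two variables x and y is estimated as

$$r = \frac{1}{n-1}\sum_{i=1}^{n}\left(\frac{x_i-\bar{x}}{s_x}\right)\left(\frac{y_i-\bar{y}}{s_y}\right)$$

where $\bar{x}$ and $\bar{y}$ are the sample means and $s_x$ and $s_y$ are the sample standard deviations of x and y.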
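The formula can be sketched directly in code: standardize each observation with its sample mean and sample standard deviation (divisor n − 1), multiply the paired standardized values, sum, and divide by n − 1. This is a minimal illustration in Python (the course itself uses R); the function name `sample_correlation` and the data values are hypothetical, not the height/weight data from the lecture.

```python
import math


def sample_correlation(x, y):
    """r = 1/(n-1) * sum(((x_i - x_bar)/s_x) * ((y_i - y_bar)/s_y))."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length n >= 2")
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Sample standard deviations, using the n - 1 divisor
    s_x = math.sqrt(sum((xi - x_bar) ** 2 for xi in x) / (n - 1))
    s_y = math.sqrt(sum((yi - y_bar) ** 2 for yi in y) / (n - 1))
    if s_x == 0 or s_y == 0:
        # r is undefined when one of the variables is constant
        raise ValueError("r is undefined when x or y is constant")
    # Sum of products of the standardized values, divided by n - 1
    total = sum(((xi - x_bar) / s_x) * ((yi - y_bar) / s_y)
                for xi, yi in zip(x, y))
    return total / (n - 1)


# Hypothetical paired observations (not the lecture's data)
r = sample_correlation([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
print(r)
```

For these made-up values the deviation sums are $S_{xy} = 6$, $S_{xx} = 10$ and $S_{yy} = 6$, so $r = 6/\sqrt{60} \approx 0.775$, indicating a moderately strong positive linear relationship.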

- It is assumed that the data points $(x_i, y_i)$ are observed values of a pair of random variables. The coefficient always satisfies $r \in [-1, 1]$.
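The bound $r \in [-1, 1]$ follows from the Cauchy-Schwarz inequality, and $r = \pm 1$ exactly when all points lie on a straight line with positive or negative slope. The sketch below, in Python and assuming hypothetical data, checks both facts numerically. It uses the equivalent form $r = S_{xy}/\sqrt{S_{xx} S_{yy}}$, in which the $1/(n-1)$ factors from the standardized formula cancel.

```python
import math
import random


def correlation(x, y):
    """r computed as S_xy / sqrt(S_xx * S_yy); the 1/(n - 1) factors cancel."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    s_xy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    s_xx = sum((a - x_bar) ** 2 for a in x)
    s_yy = sum((b - y_bar) ** 2 for b in y)
    return s_xy / math.sqrt(s_xx * s_yy)


# Hypothetical x values; in each case y is an exact linear function of x
x = [1.0, 2.0, 3.0, 4.0, 5.0]
r_up = correlation(x, [2 * xi + 3 for xi in x])       # increasing line
r_down = correlation(x, [-0.5 * xi + 1 for xi in x])  # decreasing line
print(r_up, r_down)  # → 1.0 -1.0

# Simulated samples of two independent normal variables, n = 10 pairs each
random.seed(1)
rs = [
    correlation([random.gauss(0, 1) for _ in range(10)],
                [random.gauss(0, 1) for _ in range(10)])
    for _ in range(1000)
]
print(min(rs), max(rs))  # every simulated r lies inside [-1, 1]
```

The simulated values scatter around 0 because the two variables are independent, but none can leave the interval, whatever the data.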
