Feature Selection Feature Extraction

Reducing Dimensionality

Steven J Zeil

Old Dominion Univ.

Fall 2010


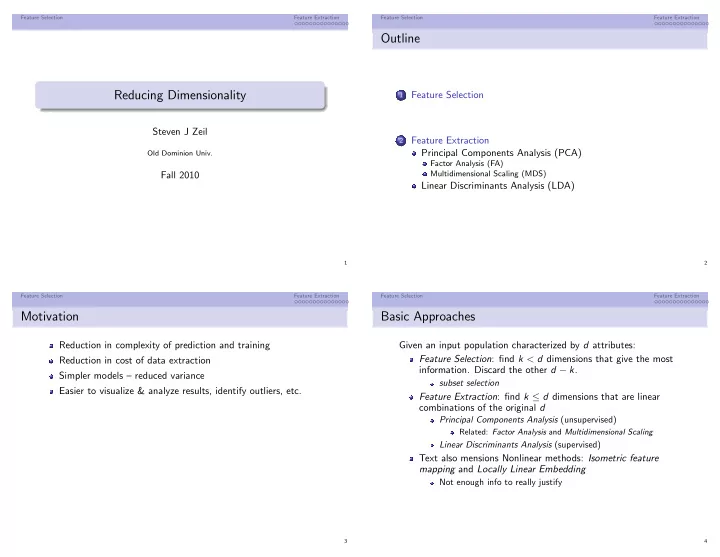

Outline

1. Feature Selection
2. Feature Extraction
   - Principal Components Analysis (PCA)
   - Factor Analysis (FA)
   - Multidimensional Scaling (MDS)
   - Linear Discriminant Analysis (LDA)


Motivation

- Reduction in complexity of prediction and training
- Reduction in cost of data extraction
- Simpler models – reduced variance
- Easier to visualize & analyze results, identify outliers, etc.


Basic Approaches

Given an input population characterized by d attributes:

- Feature Selection: find k < d dimensions that give the most information. Discard the other d − k.
   - subset selection
- Feature Extraction: find k ≤ d dimensions that are linear combinations of the original d.
   - Principal Components Analysis (unsupervised)
      - Related: Factor Analysis and Multidimensional Scaling
   - Linear Discriminant Analysis (supervised)
- The text also mentions nonlinear methods: Isometric feature mapping and Locally Linear Embedding.
   - Not enough information is given there to really justify them.
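The slide names subset selection but not a search strategy. One common form is sequential forward selection: start from no features and greedily add whichever remaining feature most lowers the error, stopping at k features or when nothing helps. The sketch below assumes a caller-supplied `error(subset)` function (hypothetical; in practice it would train and validate a model on those features), and the `toy_error` scores are made up purely for illustration.

```python
from typing import Callable, FrozenSet, List


def forward_selection(
    d: int,
    error: Callable[[FrozenSet[int]], float],
    k: int,
) -> List[int]:
    """Greedy sequential forward selection of at most k of the d features.

    `error(subset)` is a caller-supplied function (hypothetical here) that
    should train a model on the given feature indices and return its
    validation error; lower is better.
    """
    chosen: List[int] = []
    best_err = error(frozenset())  # baseline: model that uses no features
    while len(chosen) < k:
        candidates = [j for j in range(d) if j not in chosen]
        if not candidates:
            break
        # Score every one-feature extension of the current subset.
        errs = {j: error(frozenset(chosen + [j])) for j in candidates}
        j_best = min(errs, key=lambda j: errs[j])
        if errs[j_best] >= best_err:
            break  # no remaining feature lowers the error, so stop early
        chosen.append(j_best)
        best_err = errs[j_best]
    return chosen  # indices of the kept features; all others are discarded


# Toy error (made up for illustration): features 0 and 2 carry signal,
# and every feature used adds a 0.05 complexity penalty.
USEFUL = {0: 0.4, 2: 0.3}


def toy_error(subset: FrozenSet[int]) -> float:
    return 1.0 - sum(USEFUL.get(j, 0.0) for j in subset) + 0.05 * len(subset)


print(forward_selection(d=5, error=toy_error, k=3))  # prints [0, 2]
```

With this toy error the search keeps features 0 and 2 and stops before reaching k = 3, because any third feature only adds penalty. Greedy search evaluates O(d·k) subsets instead of all C(d, k), at the cost of possibly missing the best subset.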

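Feature extraction instead builds k new features z_i = w_iᵀ(x − m), each a linear combination of the original d. For PCA the w_i are the eigenvectors of the sample covariance matrix with the largest eigenvalues. The sketch below finds them with power iteration plus deflation, a simple stand-in chosen only to stay dependency-free; it assumes distinct leading eigenvalues, and a real implementation would call a library eigensolver or SVD. All function names are hypothetical.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def covariance(X: Matrix) -> Tuple[Matrix, Vector]:
    """Sample covariance matrix (divisor n - 1) and mean of the rows of X."""
    n, d = len(X), len(X[0])
    mean = [sum(row[j] for row in X) / n for j in range(d)]
    C = [[0.0] * d for _ in range(d)]
    for row in X:
        c = [row[j] - mean[j] for j in range(d)]  # centered sample
        for a in range(d):
            for b in range(d):
                C[a][b] += c[a] * c[b] / (n - 1)
    return C, mean


def mat_vec(C: Matrix, v: Vector) -> Vector:
    return [sum(C[a][b] * v[b] for b in range(len(v))) for a in range(len(C))]


def top_eigenpair(C: Matrix, rng: random.Random, iters: int = 500) -> Tuple[float, Vector]:
    """Largest eigenvalue/eigenvector of a symmetric PSD matrix by power iteration."""
    v = [rng.random() + 1e-3 for _ in range(len(C))]
    norm = math.sqrt(sum(x * x for x in v))
    v = [x / norm for x in v]
    for _ in range(iters):
        w = mat_vec(C, v)
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:  # C is (numerically) zero: any unit vector will do
            break
        v = [x / norm for x in w]
    lam = sum(a * b for a, b in zip(v, mat_vec(C, v)))  # Rayleigh quotient v^T C v
    return lam, v


def pca(X: Matrix, k: int, seed: int = 0) -> Tuple[List[float], List[Vector], Vector]:
    """Return the top-k eigenvalues, principal directions w_1..w_k, and the mean m."""
    C, mean = covariance(X)
    rng = random.Random(seed)
    lams: List[float] = []
    comps: List[Vector] = []
    for _ in range(k):
        lam, w = top_eigenpair(C, rng)
        lams.append(lam)
        comps.append(w)
        # Deflate: subtract lam * w w^T so the next pass finds the next component.
        C = [[C[a][b] - lam * w[a] * w[b] for b in range(len(w))] for a in range(len(w))]
    return lams, comps, mean


def project(x: Vector, comps: List[Vector], mean: Vector) -> Vector:
    """The k extracted features z_i = w_i . (x - m)."""
    return [sum(wj * (xj - mj) for wj, xj, mj in zip(w, x, mean)) for w in comps]


X = [[2.0, 0.0], [-2.0, 0.0], [0.0, 1.0], [0.0, -1.0]]
lams, comps, mean = pca(X, k=1)
print(lams, comps, [project(x, comps, mean) for x in X])
```

For this data the first eigenvalue should come out close to 8/3 (the sample variance along x) and the first direction close to ±(1, 0), so keeping k = 1 component retains the x coordinate. Unlike subset selection, each extracted feature may mix all d inputs.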