- The Impact Of Computer Architectures

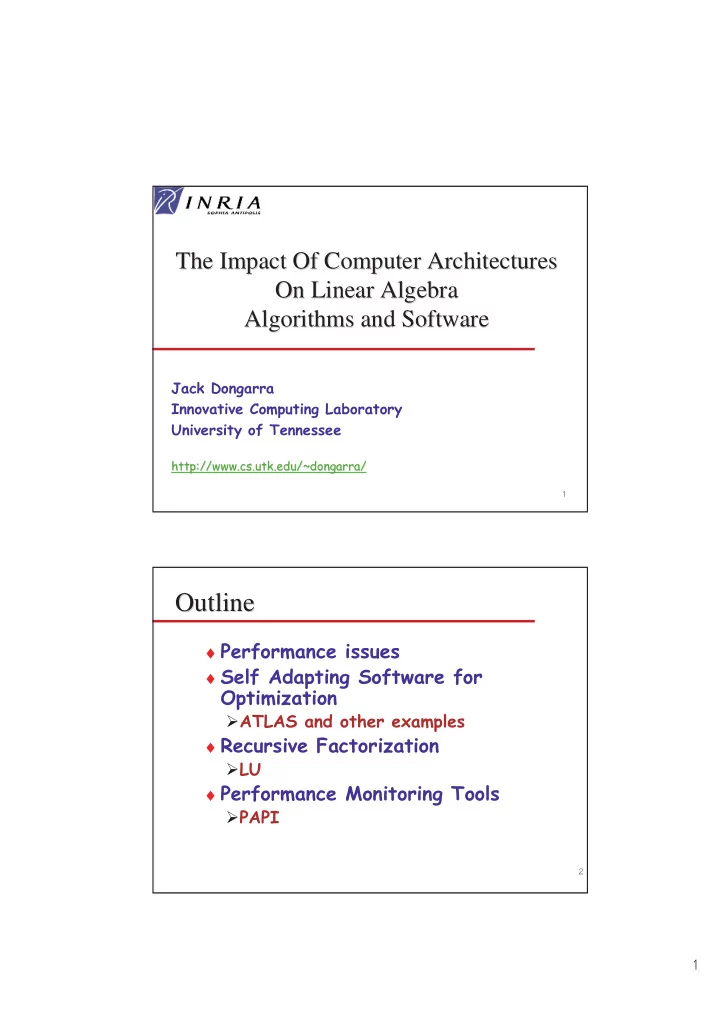

The Impact Of Computer Architectures On Linear Algebra Algorithms and Software

- Outline


♦ ()* +$+*,&!-.'-/ ♦ (+* +$+*0 &!-.1-/

23#4 # 54

♦ ( +$+*+)&!-.-/

6#444 4-

♦ (+* +$+*+7&!-.-/

''64-36 '4 # 44 $4'

[Figure: Processor-DRAM memory gap (latency), 1980 to 2000. CPU ("Moore's Law"): µProc 60%/yr. (2X/1.5 yr). DRAM: 9%/yr. (2X/10 yrs). Processor-memory performance gap: grows 50% / year.]
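The two growth rates quoted in the figure can be compounded to see where the roughly 50% per year comes from. The following is a minimal sketch, assuming constant annual rates; the function name `relative_gap` and the 20-year horizon (the span of the time axis) are illustrative choices, not part of the original slide.

```python
def relative_gap(cpu_rate=0.60, dram_rate=0.09, years=1):
    """Ratio of processor to DRAM improvement after `years` years,
    assuming both improve at a constant compound annual rate."""
    return ((1.0 + cpu_rate) / (1.0 + dram_rate)) ** years

# Annual growth of the gap: 1.60 / 1.09 - 1, a little under 50%.
annual_growth = relative_gap() - 1.0

# Compounded over the 1980-2000 span shown on the time axis.
gap_1980_2000 = relative_gap(years=20)

print(f"gap grows {annual_growth:.0%} per year")
print(f"gap after 20 years: about {gap_1980_2000:.0f}x")
```

Because the rates compound, even a modest yearly difference turns into a gap of three orders of magnitude over two decades.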

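♦ Computational optimizations

Theoretical peak: (# fpus) * (flops/cycle) * MHz

- PIII: (1 fpu) * (1 flop/cycle) * (850 MHz) = 850 Mflop/s
- P4: (1 fpu) * (2 flops/cycle) * (2.53 GHz) = 5060 Mflop/s
- Athlon: (2 fpus) * (1 flop/cycle) * (600 MHz) = 1200 Mflop/s
- Power3: (2 fpus) * (2 flops/cycle) * (375 MHz) = 1500 Mflop/s

♦ Operations like:

$\alpha = x^T y$: 2 operands (16 bytes) needed for 2 flops; at 850 Mflop/s this requires 1700 MW/s of bandwidth

$y = \alpha x + y$: 3 operands (24 bytes) needed for 2 flops; at 850 Mflop/s this requires 2550 MW/s of bandwidth

♦ Memory optimization

Theoretical peak: (bus width) * (bus speed)

- PIII: (32 bits) * (133 MHz) = 532 MB/s = 66.5 MW/s
- P4: (32 bits) * (533 MHz) = 2132 MB/s = 266 MW/s
- Athlon: (64 bits) * (133 MHz) = 1064 MB/s = 133 MW/s
- Power3: (128 bits) * (100 MHz) = 1600 MB/s = 200 MW/s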

♦ 5#8

# ## ###> ## #>

[Figure: the memory hierarchy. Components: processor (control, datapath, registers), on-chip cache, level 2 and 3 cache (SRAM), main memory (DRAM), distributed memory, remote cluster memory, secondary storage (disk), tertiary storage (disk/tape). Speed (ns): 1s, 10s, 100s, 100,000s (0.1s ms), 10,000,000s (10s ms), 10,000,000,000s (10s sec). Size (bytes): 100s, Ks, Ms, Gs, Ts.]

♦ +5

α α α α

$%& $%& %$ # %'$

♦ #

[Figure: Mflop/s versus order of vectors/matrices.]

    for _ = 1:n;
      for _ = 1:n;
        for _ = 1:n;
          C(i,j) ← C(i,j) + A(i,k) B(k,j)
        end
      end
    end

The blanks stand for the loop indices i, j, k, which can be nested in six orders: ijk, jik, jki, kji, kij, ikj.
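The triple loop can be run with its indices nested in any order without changing the result. The sketch below, in Python, makes the nesting order a parameter; the function name `matmul` and the `order` argument are illustrative, not from the slides.

```python
from itertools import permutations, product

def matmul(a, b, order="ijk"):
    """Compute C = A*B for square lists of lists.

    `order` is a permutation of "ijk"; its first letter is the outermost
    loop and its last letter the innermost loop.
    """
    n = len(a)
    c = [[0.0] * n for _ in range(n)]
    # product() varies its last position fastest, exactly like three nested loops.
    for values in product(range(n), repeat=3):
        idx = dict(zip(order, values))
        i, j, k = idx["i"], idx["j"], idx["k"]
        c[i][j] += a[i][k] * b[k][j]
    return c

a = [[1.0, 2.0], [3.0, 4.0]]
b = [[5.0, 6.0], [7.0, 8.0]]
results = {"".join(p): matmul(a, b, "".join(p)) for p in permutations("ijk")}
for name, c in sorted(results.items()):
    print(name, c)
```

All six orders produce the same C. What changes is the order in which the entries of A, B, and C are touched in memory, and that is the only difference between the variants being compared.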

)

(

For a Pentium III 933 MHz: L1 data cache 16 KB (also has an L1 instruction cache of 16 KB)

♦ L2 cache 256 KB: $\sqrt{256\,\mathrm{K}/8} = 179$

For a Pentium III 550 MHz: L1 data cache of 16 KB (it also has a 16 KB L1 instruction cache)

[Figure: Pentium III 933 MHz, f77 -O3. Mflop/s (100 to 800) versus order (1 to 586) for the loop orders ijk, jik, jki, kji, kij, ikj and for ATLAS.]

[Figure: f77 -O3. Same legend, orders 1 to 196.]

[Figure: f77 -O3. Mflop/s (50 to 400) versus order (1 to 581) for the loop orders ijk, jik, jki, kji, kij, ikj and for dgemm.]

[Figure: f77 -O3. Same legend, orders 1 to 253.]

          DO 30 J = 1, M
             DO 20 I = 1, M
                DO 10 K = 1, L
                   C(I,J) = C(I,J) + A(I,K)*B(K,J)
       10       CONTINUE
       20    CONTINUE
       30 CONTINUE

♦8 )4) 4 > H

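          DO 30 J = 1, M, 2
             DO 20 I = 1, M, 2
                T11 = C(I,  J  )
                T12 = C(I,  J+1)
                T21 = C(I+1,J  )
                T22 = C(I+1,J+1)
                DO 10 K = 1, L
                   T11 = T11 + A(I,  K)*B(K,J  )
                   T12 = T12 + A(I,  K)*B(K,J+1)
                   T21 = T21 + A(I+1,K)*B(K,J  )
                   T22 = T22 + A(I+1,K)*B(K,J+1)
       10       CONTINUE
                C(I,  J  ) = T11
                C(I,  J+1) = T12
                C(I+1,J  ) = T21
                C(I+1,J+1) = T22
       20    CONTINUE
       30 CONTINUE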

♦8 =4; 4 > %

+= * I J K J I

♦&0??'6"

0??'- 5)-?4# ## # )#+,F5)7,F5

♦H=

5# +#4#$ )#4#$ #

♦#4 ♦

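Consider A, B, C to be N-by-N matrices of b-by-b subblocks, where b = n/N is the block size.

    for i = 1 to N
      for j = 1 to N
        {read block C(i,j) into fast memory}
        for k = 1 to N
          {read block A(i,k) into fast memory}
          {read block B(k,j) into fast memory}
          C(i,j) = C(i,j) + A(i,k) * B(k,j)   {do a matrix multiply on blocks}
        {write block C(i,j) back to slow memory}

[Figure: C(i,j) = C(i,j) + A(i,k) * B(k,j) on blocks of size b.]

n is the size of the matrix, N blocks of size b; n = N*b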
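The block update C(i,j) = C(i,j) + A(i,k) * B(k,j) with n = N*b can be sketched as follows. This is an illustrative Python version on lists of lists, assuming n is an exact multiple of the block size; the names `blocked_matmul` and `block` are not from the slides.

```python
def blocked_matmul(a, b, block):
    """Compute C = A*B for n-by-n lists of lists, one block-by-block tile at a time.

    Assumes n is a multiple of `block`, i.e. n = N * block.
    """
    n = len(a)
    if n % block != 0:
        raise ValueError("n must be a multiple of the block size")
    nblocks = n // block                       # N in the text
    c = [[0.0] * n for _ in range(n)]
    for bi in range(nblocks):                  # block row of C
        for bj in range(nblocks):              # block column of C
            for bk in range(nblocks):          # C(i,j) += A(i,k) * B(k,j) on blocks
                for i in range(bi * block, (bi + 1) * block):
                    for j in range(bj * block, (bj + 1) * block):
                        s = c[i][j]
                        for k in range(bk * block, (bk + 1) * block):
                            s += a[i][k] * b[k][j]
                        c[i][j] = s
    return c

n = 4
a = [[float(i + j) for j in range(n)] for i in range(n)]
b = [[float(i * j + 1) for j in range(n)] for i in range(n)]
reference = [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
             for i in range(n)]
c_blocked = blocked_matmul(a, b, block=2)
print(c_blocked == reference)
```

The three innermost loops only touch three block-by-block tiles, so if those tiles fit in cache each loaded entry is reused about `block` times before it is evicted.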

♦ #

♦ !+#

♦ ##

!+

♦ M###E4

#B$#E#>

♦ !#5BE#-

!#

♦ 6 44 #

5"44 #4 44 #> ### E# >

♦ HB-"> ♦ E

)

♦

3 > C8

H5 5

♦ %# H

'4'#4 !4'44 5 4I4N

♦ +#

"8

5 +# & '# % # " ♦ ?*

>

♦ OH P##

# ">

*

♦ ##5

##E #>

[Figure: MFLOP/S (0 to 3500) by architecture for vendor BLAS, ATLAS BLAS, and F77 BLAS. Architectures: AMD Athlon, DEC ev56, DEC ev6, HP 9000/735/135, IBM PPC604, IBM Power2, IBM Power3, Intel P-III 933 MHz, Intel P4 1.5 GHz w/SSE2, SGI R10000 ip28, SGI R12000 ip30, Sun UltraSparc2.]

♦ 7**4***'5 ♦ '

& ♦ CN ♦ '55

1# 5"'5 ♦

♦ . >>-/.4C#/ ♦ 6. >>>-(-#/

.54424/

♦ $4.Q4 34IJ#/ ♦ ./ ♦ &&

&&C. > >/ C+000C"H %. >>>-(/ I$#4 6&& I$##4

♦ )>7?16"4=**'6"4+,F+3

)7,F)#4#)>7? 1-4##

♦ 'I$).I)/

##+== 'III

,=)R.7>*,1-/ ?)=R.+*>+)1-/

'+);E #> M# I)

[Figure: Mflop/s (2000 to 8000) versus size (100 to 1000) on an Intel P4 2.53 GHz, 32-bit SSE2 versus 64-bit SSE2.]

[Figure: Mflop/s (100 to 800) versus size for Intel BLAS and ATLAS on 1 and 2 processors.]

(

Intel Pentium II 400 MHz, Linux and IBM 1.1.8 JDK; Compaq Alpha 21264 500 MHz, Java 2 SDK 1.2.2 with Fast VM 1.2.2; IBM Power 3 375 MHz

☞ ✌✍☞✎☞ ✏✎☞✎☞ ✑✎☞✒☞ ✓ ☞✒☞ ✔ ☞✎☞ ✕✎☞✎☞ ✖ ☞✎☞ ✗✎☞✎☞ ✘✙✚ ✛✜ ✢✣♦ % +

♦ H

)

(

♦ ##5

# #E#>

[Figure: MFLOP/S (100 to 800) for the Level 3 BLAS routines DGEMM, DSYMM, DSYRK, DSYR2K, DTRMM, DTRSM: vendor BLAS versus ATLAS BLAS.]

)

(PII 8 Way Cluster with 100 Mb/s switched network)

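| Message size (bytes) | Optimal algorithm | Buffer size (bytes) |
|---|---|---|
| 8 | binomial | 8 |
| 16 | binomial | 16 |
| 32 | binary | 32 |
| 64 | binomial | 64 |
| 128 | binomial | 128 |
| 256 | binomial | 256 |
| 512 | binomial | 512 |
| 1K | sequential | 1K |
| 2K | binary | 2K |
| 4K | binary | 2K |
| 8K | binary | 2K |
| 16K | binary | 4K |
| 32K | binary | 4K |
| 64K | ring | 4K |
| 128K | ring | 4K |
| 256K | ring | 4K |
| 512K | ring | 4K |
| 1M | binary | 4K |

[Figure: broadcast from the root using the sequential, binary, binomial, and ring algorithms.]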
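A tuned table like this one is typically consulted at run time to pick an algorithm for a given message. The sketch below is one hypothetical way to do that in Python: it copies the measured rows and, as an assumption of this example, maps a message to the smallest tabulated size that is at least as large (sizes beyond 1M reuse the last row). It also assumes K = 1024 and M = 1024 * 1024; the name `choose_broadcast` is illustrative.

```python
from bisect import bisect_left

K = 1024  # assumption: the table's K and M are binary multiples

# (message size in bytes, optimal algorithm, buffer size in bytes)
BROADCAST_TABLE = [
    (8, "binomial", 8), (16, "binomial", 16), (32, "binary", 32),
    (64, "binomial", 64), (128, "binomial", 128), (256, "binomial", 256),
    (512, "binomial", 512), (1 * K, "sequential", 1 * K),
    (2 * K, "binary", 2 * K), (4 * K, "binary", 2 * K),
    (8 * K, "binary", 2 * K), (16 * K, "binary", 4 * K),
    (32 * K, "binary", 4 * K), (64 * K, "ring", 4 * K),
    (128 * K, "ring", 4 * K), (256 * K, "ring", 4 * K),
    (512 * K, "ring", 4 * K), (K * K, "binary", 4 * K),
]
SIZES = [row[0] for row in BROADCAST_TABLE]

def choose_broadcast(message_bytes):
    """Return (algorithm, buffer_bytes) for the nearest tabulated size at or above the message."""
    pos = min(bisect_left(SIZES, message_bytes), len(SIZES) - 1)
    _, algorithm, buffer_bytes = BROADCAST_TABLE[pos]
    return algorithm, buffer_bytes

print(choose_broadcast(100))        # falls in the 128-byte row
print(choose_broadcast(64 * K))     # exact match for the 64K row
```

The rounding rule between tabulated sizes is a design choice of this sketch; the table itself only reports the measured points.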

♦ I$#

♦ &## ♦ % ♦ %S?*T

new T T new T new new

♦

2

$ >

♦

♦

C#

>

♦

7*T ">


*

♦ &" ♦ #

♦

♦ '$ ♦ U%4U4%U4U

♦ &UU ♦ !#

./6

♦ &@@ B

♦ I %424' ♦ HF 44$4$ ♦ 5#+4)4?5

123$#"!%"%"##& 123$#"!%"%"##& 123$#"!%"%"##& 123$#"!%"%"##&+ + + +#&

#& #& #& %3-04#$"

%3-04#$" %3-04#$" %3-04#$" /%-"5 /%-"5 /%-"5 /%-"5

&$$$'4%3#'-0%$ &$$$'4%3#'-0%$ &$$$'4%3#'-0%$ %3# %3# %3# %3#3 3 3 3+

+ + + $'

$' $' $' 3 3 3 3++

++ ++ ++ #&23$#"6

#&23$#"6 #&23$#"6 #&23$#"6

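$$
\begin{pmatrix} A_{11} & a_j & A_{13} \\ a_j^T & a_{jj} & \alpha_j^T \\ A_{13}^T & \alpha_j & A_{33} \end{pmatrix}
=
\begin{pmatrix} U_{11}^T & 0 & 0 \\ u_j^T & u_{jj} & 0 \\ U_{13}^T & \mu_j & U_{33}^T \end{pmatrix}
\begin{pmatrix} U_{11} & u_j & U_{13} \\ 0 & u_{jj} & \mu_j^T \\ 0 & 0 & U_{33} \end{pmatrix}
$$

$$
a_j = U_{11}^T u_j, \qquad a_{jj} = u_j^T u_j + u_{jj}^2
$$

$$
U_{11}^T u_j = a_j, \qquad u_{jj}^2 = a_{jj} - u_j^T u_j
$$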

)

♦ 6##HF

          DO 30 J = 1, N
             INFO = J
             S = 0.0E0
             JM1 = J - 1
             IF( JM1.LT.1 ) GO TO 20
             DO 10 K = 1, JM1
                T = A( K, J ) - SDOT( K-1, A( 1, K ), 1, A( 1, J ), 1 )
                T = T / A( K, K )
                A( K, J ) = T
                S = S + T*T
       10    CONTINUE
       20    CONTINUE
             S = A( J, J ) - S
    C     ...EXIT
             IF( S.LE.0.0E0 ) GO TO 40
             A( J, J ) = SQRT( S )
       30 CONTINUE

*

          DO 10 J = 1, N
             CALL STRSV( 'Upper', 'Transpose', 'Non-Unit', J-1, A, LDA,
         $               A( 1, J ), 1 )
             S = A( J, J ) - SDOT( J-1, A( 1, J ), 1, A( 1, J ), 1 )
             IF( S.LE.ZERO ) GO TO 20
             A( J, J ) = SQRT( S )
       10 CONTINUE
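Both Fortran fragments compute column j of U from the columns already finished: a triangular solve gives the entries above the diagonal, and a dot product gives the diagonal entry. The following Python sketch mirrors that structure on plain lists instead of BLAS calls; the function names are hypothetical, and comments mark which step plays the role of STRSV and SDOT.

```python
import math

def solve_upper_transposed(u, rhs, m):
    """Solve U11^T x = rhs by forward substitution, where U11 is the
    leading m-by-m upper triangle of u."""
    x = []
    for r in range(m):
        s = rhs[r] - sum(u[i][r] * x[i] for i in range(r))
        x.append(s / u[r][r])
    return x

def cholesky_upper(a):
    """Return the upper triangular U with A = U^T U, one column at a time."""
    n = len(a)
    u = [[0.0] * n for _ in range(n)]
    for j in range(n):
        # Role of STRSV: solve U11^T u_j = a_j for the part above the diagonal.
        col = solve_upper_transposed(u, [a[r][j] for r in range(j)], j)
        for r in range(j):
            u[r][j] = col[r]
        # Role of SDOT: u_jj^2 = a_jj - u_j^T u_j.
        s = a[j][j] - sum(v * v for v in col)
        if s <= 0.0:
            raise ValueError(f"leading minor of order {j + 1} is not positive definite")
        u[j][j] = math.sqrt(s)
    return u

spd = [[4.0, 2.0, 2.0],
       [2.0, 5.0, 3.0],
       [2.0, 3.0, 6.0]]
u_factor = cholesky_upper(spd)
print(u_factor)
```

The early exit on a non-positive `s` corresponds to the `GO TO` branches in the Fortran, which leave the loop when the matrix is found not to be positive definite.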

♦ ##

♦ &)?;?+)'-$

♦ 6 +4@**'-> ♦ # >

123$#"!%"%"#%'4%,%3-04#$" 123$#"!%"%"#%'4%,%3-04#$" 123$#"!%"%"#%'4%,%3-04#$" 123$#"!%"%"#%'4%,%3-04#$" /%-"5 /%-"5 /%-"5 /%-"5

&$$$'4%3#'-0%$ &$$$'4%3#'-0%$ &$$$'4%3#'-0%$ %3#5 %3#5 %3#5 %3#5

$#&3#"#&0"!23$#" $#&3#"#&0"!23$#" $#&3#"#&0"!23$#" 4$%$##&3#"76 4$%$##&3#"76 4$%$##&3#"76 4$%$##&3#"76

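$$
\begin{pmatrix} A_{11} & A_{12} & A_{13} \\ A_{12}^T & A_{22} & A_{23} \\ A_{13}^T & A_{23}^T & A_{33} \end{pmatrix}
=
\begin{pmatrix} U_{11}^T & 0 & 0 \\ U_{12}^T & U_{22}^T & 0 \\ U_{13}^T & U_{23}^T & U_{33}^T \end{pmatrix}
\begin{pmatrix} U_{11} & U_{12} & U_{13} \\ 0 & U_{22} & U_{23} \\ 0 & 0 & U_{33} \end{pmatrix}
$$

$$
A_{12} = U_{11}^T U_{12}, \qquad A_{22} = U_{12}^T U_{12} + U_{22}^T U_{22}
$$

$$
U_{11}^T U_{12} = A_{12}, \qquad U_{22}^T U_{22} = A_{22} - U_{12}^T U_{12}
$$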

          DO 10 J = 1, N, NB
    C        JB is the width of the current block column (the last may be narrower)
             JB = MIN( NB, N-J+1 )
             CALL STRSM( 'Left', 'Upper', 'Transpose', 'Non-Unit', J-1, JB,
         $               ONE, A, LDA, A( 1, J ), LDA )
             CALL SSYRK( 'Upper', 'Transpose', JB, J-1, -ONE, A( 1, J ),
         $               LDA, ONE, A( J, J ), LDA )
             CALL SPOTF2( 'Upper', JB, A( J, J ), LDA, INFO )
             IF( INFO.NE.0 ) GO TO 20
       10 CONTINUE
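The three calls in the blocked loop each handle one piece of the current block column. The Python sketch below follows the same three steps on plain lists, purely to show the data flow; it is not how the BLAS routines are implemented, and the names `cholesky_blocked` and `factor_diagonal_block` are hypothetical.

```python
import math

def factor_diagonal_block(a, lo, hi):
    """Unblocked U^T U factorization of a[lo:hi][lo:hi], in place (role of SPOTF2)."""
    for j in range(lo, hi):
        for k in range(lo, j):
            t = a[k][j] - sum(a[i][k] * a[i][j] for i in range(lo, k))
            a[k][j] = t / a[k][k]
        s = a[j][j] - sum(a[i][j] ** 2 for i in range(lo, j))
        if s <= 0.0:
            raise ValueError("matrix is not positive definite")
        a[j][j] = math.sqrt(s)

def cholesky_blocked(matrix, nb):
    """Return upper triangular U with A = U^T U, working on block columns of width nb."""
    a = [row[:] for row in matrix]
    n = len(a)
    for j in range(0, n, nb):
        jb = min(nb, n - j)                 # the last block may be narrower
        cols = range(j, j + jb)
        # Role of STRSM: solve U11^T U12 = A12, one column of the block at a time.
        for c in cols:
            for r in range(j):
                t = a[r][c] - sum(a[i][r] * a[i][c] for i in range(r))
                a[r][c] = t / a[r][r]
        # Role of SSYRK: A22 <- A22 - U12^T U12 (upper triangle only).
        for r in cols:
            for c in range(r, j + jb):
                a[r][c] -= sum(a[i][r] * a[i][c] for i in range(j))
        # Role of SPOTF2: factor the updated diagonal block.
        factor_diagonal_block(a, j, j + jb)
    return [[a[r][c] if c >= r else 0.0 for c in range(n)] for r in range(n)]

spd = [[4.0, 2.0, 2.0],
       [2.0, 5.0, 3.0],
       [2.0, 3.0, 6.0]]
factors = {nb: cholesky_blocked(spd, nb) for nb in (1, 2, 3)}
print(factors[2])
```

The block width changes only how the work is grouped, not the result: every choice of `nb` yields the same factor.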

!=4?5B"+

%I$ =+>@16" H<7** +),)'- ?52 ?+)'- )52 )?;'- .+5/

♦ #HF

♦ 5#+4)?54

♦ F

*

x x x x

. . .

,%

&

x

. . .

%3& x

nb

% &4

$ $ $ $

$ $ $

8 8 8$ $ $ $

$ $ $

$ $ $

$ $ $

$ $ $

$ $ $

$ $ $

. . .

nb

%3

(

#&46&47 %#&8%9: 0%-50& 0%& 690#'-#0# &4&0'00#;0#7 0%-50&

0#

9:3#$ $'97'

*&4&% *<88=8>

♦ # ♦ ♦

+?5V

♦ I$$ ♦ 6# ♦ #J

#E HF #

♦ #.

#$# OP />

)

(

1I''31I%&% '#+16".(W++**/

[Figure: Pentium III 550 MHz dual processor, LU factorization: Mflop/s versus order (two plots, with orders up to 3000 and up to 5000).]

E

♦ ##8

✂✁☎✄✝✆✟✞✂✠ ✡☛✄✌☞✎✍ ✁☎✏ ✑☛✒ ✓✝✔☎✕ ✠ ✄ ☞✎✍ ✁☎✏ ✑✗✖✘✖✙✒✛✚ ☞ ✔ ✆✜✁✢☞ ✣✜✠ ✤ ✔ ✆✟✥✦✍ ✍ ✑✗✧✘✖ ← ✑✗✧✂✖✩★☎✪✬✫ ✖ ✏ ✑✗✖✘✖✙✒✛✚ ✭✟✮✰✯✲✱✴✳✌✏ ✒✵✡✦✄✌✁☎✶✷✶ ✔ ☞✸✞✂☞✎✠ ✥☛✄ ✕ ✁✷✍ ✥✦☞✸✣✜✁ ✓☎✹ ✥☎✞✂☞✎✠ ✭ ✑✗✖✘✧ ← ✺ ✖✘✫ ✖ ✏ ✑✗✖✘✖✙✒✰★✝✑✗✖✂✧✻✚✼✭✟✮✰✯✲✱✴✳✌✏ ✒✵✡✦✄✽✍ ✡☎✾ ✔ ☞✸✞✂☞✎✠ ✥✦✄ ✕ ✁✷✍ ✥✦☞✸✣✜✁ ✓☎✹ ✥☎✞✂☞✎✠ ✭ ✑✗✧✘✧ ← ✑✗✧✂✧✙✿✎✑✗✧✘✖✻★ ✑✗✖✘✧✻✚ ✭✜❀❂❁❃✳✌✳✌✏ ✒ ☞✎✍ ✁☎✏ ✑✗✧✘✧✙✒✛✚ ☞ ✔ ✆✜✁✢☞ ✣✜✠ ✤ ✔ ✆✟✥✦✍ ✍ ✔ ✄✝❄✦❅♦ %$%' $1I'' #

    RLU(A):
        RLU(A11)
        A21 := A21 U^-1(A11)
        A12 := L^-1(A11) A12
        A22 := A22 - A21 A12
        RLU(A22)
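The recursion splits the matrix into four blocks, factors the leading block, updates the two off-diagonal blocks with triangular solves, forms the Schur complement, and recurses on it. The following is a minimal Python sketch of that scheme, assuming a square matrix that needs no pivoting; the names `recursive_lu` and `_rlu` are hypothetical, and L (unit lower triangular) and U are stored packed in one matrix.

```python
def _rlu(a, lo, hi):
    """Factor a[lo:hi][lo:hi] in place as L*U (no pivoting), recursively."""
    n = hi - lo
    if n <= 1:
        return
    mid = lo + n // 2
    _rlu(a, lo, mid)                                   # RLU(A11)
    # A21 := A21 * U(A11)^-1, i.e. solve X * U11 = A21 row by row.
    for r in range(mid, hi):
        for c in range(lo, mid):
            s = a[r][c] - sum(a[r][k] * a[k][c] for k in range(lo, c))
            a[r][c] = s / a[c][c]
    # A12 := L(A11)^-1 * A12, a forward solve with the unit lower triangle.
    for c in range(mid, hi):
        for r in range(lo, mid):
            a[r][c] -= sum(a[r][k] * a[k][c] for k in range(lo, r))
    # A22 := A22 - A21 * A12
    for r in range(mid, hi):
        for c in range(mid, hi):
            a[r][c] -= sum(a[r][k] * a[k][c] for k in range(lo, mid))
    _rlu(a, mid, hi)                                   # RLU(A22)

def recursive_lu(matrix):
    """Return the packed LU factors: U on and above the diagonal, L below it."""
    a = [[float(v) for v in row] for row in matrix]
    _rlu(a, 0, len(a))
    return a

# A = L * U with L = [[1,0,0],[2,1,0],[1,2,1]] and U = [[4,3,2],[0,2,1],[0,0,5]].
packed = recursive_lu([[4, 3, 2], [8, 8, 5], [4, 7, 9]])
print(packed)
```

A production code would add pivoting for stability; it is omitted here so that the five steps of the recursion stay visible.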

" #4 >

*

+>

&"

)>

## E"

?>

#

H"

**

♦ AR>>

>

#8 B 8 #=*T'

# #+* Y 8E # #+**

4B4 44 4> ♦ I

#>Z%45#4 3'44)=+000[

+>@04#5 H>

| Name | N | nonzeros | SuperLU Time [s] | SuperLU FERR | SuperLU L+U | Recursion Time [s] | Recursion FERR | Recursion L+U |
|---|---|---|---|---|---|---|---|---|
| af23560 | 23560 | 460598 | 44.19 | 5.80E-14 | 132.2 | 31.34 | 1.80E-14 | 149.7 |
| ex11 | 16614 | 1096948 | 109.67 | 2.50E-05 | 210.2 | 55.3 | 1.30E-06 | 150.6 |
| goodwin | 7320 | 324772 | 6.49 | 1.20E-08 | 31.3 | 6.74 | 4.60E-06 | 35 |
| jpwh_991 | 991 | 6027 | 0.19 | 2.90E-15 | 1.4 | 0.25 | 2.60E-15 | 2.3 |
| mcfe | 765 | 24382 | 0.07 | 1.20E-13 | 0.9 | 0.22 | 9.10E-13 | 1.8 |
| memplus | 17758 | 126150 | 0.29 | 2.10E-12 | 5.9 | 12.67 | 6.60E-13 | 195.7 |
| | 16146 | 1015156 | 26.16 | 1.10E-06 | 83.9 | 22.1 | 3.70E-09 | 96.1 |
| | 2205 | 14133 | 0.46 | 1.30E-13 | 3.6 | 0.45 | 2.10E-13 | 3.9 |
| psmigr_1 | 3140 | 543162 | 110.79 | 7.90E-11 | 64.6 | 88.61 | 1.20E-05 | 78.4 |
| raefsky3 | 21200 | 1488768 | 62.07 | 1.40E-09 | 147.2 | 69.67 | 4.40E-13 | 193.9 |
| raefsky4 | 19779 | 1316789 | 82.45 | 2.30E-06 | 156.2 | 104.29 | 3.50E-06 | 234.4 |
| saylr4 | 3564 | 22316 | 0.85 | 3.20E-11 | 6 | 0.95 | 1.20E-11 | 7.2 |
| sherman3 | 5005 | 20033 | 0.61 | 6.00E-13 | 5 | 0.67 | 4.80E-13 | 7.3 |
| sherman5 | 3312 | 20793 | 0.28 | 1.40E-13 | 3 | 0.32 | 6.20E-15 | 3.1 |
| wang3 | 26064 | 177168 | 84.14 | 2.40E-14 | 116.7 | 79.18 | 1.60E-14 | 256.7 |

*

[Figure: Breakdown of Time Across Phases (0% to 100%) for different test matrices: af23560, ex11, goodwin, jpwh_991, mcfe, memplus, …, saylr4, sherman3, sherman5, wang3. Phases: numerical factorization, embedded blocking, recursive conversion, block conversion, symbolic factorization.]

5 # &#%&"

♦ F

.'3/'

♦ !##

#

♦ H #

#

♦ &!I4H&4% ♦ 5'46E$4&J4

HI44144H14'4N

3

)

*

♦ '&@@ #J ♦ 5 5 5

2'4'45'4%?44' #>

5 ♦ F $-B # H#

♦ !JE B# > $

443EE ♦ #$

♦ #5

♦ 5#55 ♦ $ 1IRRR.'4H4.4/44>>>/ 1IRRR.'4H4444I4>>>/

%$; < %$; < %$; < %$; <

; < ; < ; < ;=;.999 ;=;.999 ;=;.999 ;=;.999 ; ; ; ; :4$ %$

*

♦ + ♦ +E)E

(

♦ '$E3 ♦ )EE#

7$7$)$) )$)

♦ %-

>

♦ #$.

5454543'/##>

♦ C' ##

#)E ## #'# ###

♦ ##

##

♦ ##

O G H YP

H8#$# #4"#N

♦ 4##

)

(

♦ F

'#M # ##M ♦ ' ##

)

♦ ##

♦ F #

1

[Figure: Natural Data (A, b) → Middleware → Structured Data (A', b') → Application Library (e.g. LAPACK, ScaLAPACK, PETSc, …) → Structured Answer (x') → Middleware → Natural Answer (x).]

[Figure, built up over several slides. Labels: User, A, b, Library, Middle-ware, NWS, Autopilot, MDS, Resource Selection, Time function minimization, Stage data to disk, and a data layout 0,0 0,1 ….]

Uses Grid infrastructure, i.e. Globus/NWS, but doesn't have to.

*

♦

5 #---'-

). 4/?.44/

♦ 1

Bandwidth Latency Load CPU Performance Memory

FA<+ FA<Z?4?4;4;4;4;[ &A<Z)4?4=4,4;4;[

♦ &

## #

# > #$ #1

6

♦

1>

# >

♦ 84

#4U%4 4 H

♦ '#'

#'

'># ##

> ' #$ ## # 44> ## # #>

()

*

(

♦ ####> ♦ H###> ♦ #

'

♦ I

.#4I$ H/ &

;,)**)> J'>

♦ &

' 'E).!\/

♦ CF

I # >

(

(J. Plank, J. Dongarra)

♦ '#

$## 4##

♦ #

#B %##E #

♦

C''444U%4

(

(*

(

( (

)

(( ))

)

♦ #

#

M#

)

♦ ##

# >

♦ 4#

>

♦ !#4#

$4# >

♦ I$

B#I2,3,-@ 1'%+**** 5' %Q?I $C

E,= 6E% 6# &J HI

5 5

)*

)

♦ Y./ ♦ YY./ H# ♦ YY./ ♦ YY./ I #

%

♦ "

♦ U$ ♦ ."]+7/ ♦ ###

)

[Figure: PAPI architecture. Layers: PAPI High Level and PAPI Low Level interfaces; PAPI Machine Dependent Substrate; Kernel Extensions; Operating System; Hardware Performance Counter.]

*

♦ 444=44 $)>=4)>)4)>* # ♦ 5' ?4,*=4,*=. =/ &R=>? .=>?>=/ .^>>/ ♦ 443 )>; ♦ 1%R-' ♦ '# $)>= # ♦ ?I42+42) ♦ C )FR ♦ 8

#8-->>>-- CB# &44'5

♦ II-./

♦

♦ #

♦ > ♦

♦

♦ B4J$

" " #"

*

GUI Server Application

Application Application

(

♦ % E H

4$4

♦

>

#4

#>

+,4?)4,=4+);>

♦ %44 ♦

#>

*)

(

♦ 7**

I##45 6'4'#

♦

4F C#4F

♦ %"

""4F 2IJ#4F

♦

#5 4F F4F #'4F F#4F 4F

& N >>-7**- >>>-- >>>-- >>>-(- *% B