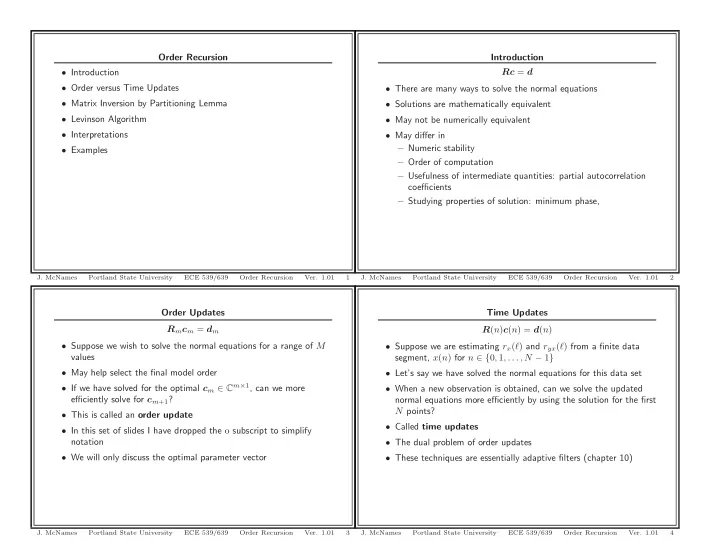

SLIDE 1

Order Updates: $R_m c_m = d_m$

- Suppose we wish to solve the normal equations for a range of $M$ values

- May help select the final model order

- If we have solved for the optimal $c_m \in \mathbb{C}^{m \times 1}$, can we more efficiently solve for $c_{m+1}$?

- This is called an order update

- In this set of slides I have dropped the $o$ subscript to simplify notation

- We will only discuss the optimal parameter vector
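As a rough illustration of what an order update could look like, the sketch below extends a known solution $c_m$ to $c_{m+1}$ by partitioning $R_{m+1}$ into $R_m$, a border column, and a corner element. It assumes $R$ is Hermitian and positive definite. The function name `order_update` and the test data are hypothetical, and the inner `np.linalg.solve` is kept only for clarity: an efficient method such as the Levinson algorithm would avoid that solve by exploiting Toeplitz structure.

```python
import numpy as np

def order_update(R_next, d_next, c_m):
    """Extend the order-m solution c_m to order m+1 (hypothetical helper).

    R_next is the (m+1)x(m+1) Hermitian matrix whose leading m x m block
    is R_m, and d_next is the length-(m+1) right-hand side.
    """
    m = c_m.shape[0]
    R_m = R_next[:m, :m]          # leading block, already "solved"
    r_b = R_next[:m, m]           # border column
    rho = R_next[m, m]            # corner element
    b = np.linalg.solve(R_m, -r_b)            # R_m b = -r_b
    alpha = rho + np.vdot(r_b, b)             # Schur complement: rho + r_b^H b
    k = (d_next[m] - np.vdot(r_b, c_m)) / alpha
    # New solution: old coefficients corrected by k*b, plus new last entry k
    return np.concatenate([c_m + k * b, [k]])

# Illustrative data (assumed): a random Hermitian positive definite system
rng = np.random.default_rng(0)
M = 5
A = rng.standard_normal((M, M)) + 1j * rng.standard_normal((M, M))
R_full = A @ A.conj().T + M * np.eye(M)
d_full = rng.standard_normal(M) + 1j * rng.standard_normal(M)

# Start at order 1 and step through every order up to M
c_rec = np.array([d_full[0] / R_full[0, 0]])
for m in range(1, M):
    c_rec = order_update(R_full[:m + 1, :m + 1], d_full[:m + 1], c_rec)

c_direct_order = np.linalg.solve(R_full, d_full)
print(np.allclose(c_rec, c_direct_order))
```

Each pass through the loop produces the optimal vector for one model order, so the intermediate solutions for every order up to $M$ are available for comparison.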

- J. McNames

Portland State University ECE 539/639 Order Recursion

- Ver. 1.01


Order Recursion

- Introduction

- Order versus Time Updates

- Matrix Inversion by Partitioning Lemma

- Levinson Algorithm

- Interpretations

- Examples


Time Updates: $R(n)c(n) = d(n)$

- Suppose we are estimating $r_x(\ell)$ and $r_{yx}(\ell)$ from a finite data segment, $x(n)$ for $n \in \{0, 1, \dots, N-1\}$

- Let’s say we have solved the normal equations for this data set

- When a new observation is obtained, can we solve the updated normal equations more efficiently by using the solution for the first N points?

- Called time updates

- The dual problem of order updates

- These techniques are essentially adaptive filters (chapter 10)
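One possible form of a time update is sketched below, under assumptions that go beyond the slide: real-valued data, unnormalized sums $R(n) = \sum_i x_i x_i^T$ and $d(n) = \sum_i x_i y_i$ in place of the estimated correlations, and a rank-one update of $R^{-1}$ through the Sherman-Morrison identity (the approach used in recursive least squares). The function name `time_update` and the random data are hypothetical.

```python
import numpy as np

def time_update(P, c, x_new, y_new):
    """Update P = R^{-1} and the solution c when one observation arrives.

    Assumes R(n+1) = R(n) + x_new x_new^T and d(n+1) = d(n) + x_new * y_new,
    with R symmetric (hypothetical helper, real-valued data only).
    """
    Px = P @ x_new
    g = Px / (1.0 + x_new @ Px)        # gain vector
    e = y_new - x_new @ c              # error of the old solution on new data
    c_new = c + g * e                  # corrected solution
    P_new = P - np.outer(g, x_new @ P) # Sherman-Morrison rank-one update
    return P_new, c_new

# Illustrative data (assumed): N observations, then one more
rng = np.random.default_rng(1)
N, M_taps = 50, 3
X = rng.standard_normal((N + 1, M_taps))
y = rng.standard_normal(N + 1)

# Solve the normal equations for the first N points
R_N = X[:N].T @ X[:N]
d_N = X[:N].T @ y[:N]
P_N = np.linalg.inv(R_N)
c_N = P_N @ d_N

# Update with the new observation instead of re-solving from scratch
P_next, c_next = time_update(P_N, c_N, X[N], y[N])

c_direct_time = np.linalg.solve(X.T @ X, X.T @ y)
print(np.allclose(c_next, c_direct_time))
```

The update costs on the order of $M^2$ operations per new observation, compared with roughly $M^3$ for re-solving the full system each time.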


Introduction: $Rc = d$

- There are many ways to solve the normal equations

- Solutions are mathematically equivalent

- May not be numerically equivalent

- May differ in

– Numeric stability

– Order of computation

– Usefulness of intermediate quantities: partial autocorrelation coefficients

– Studying properties of solution: minimum phase,
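To make the point about equivalent solutions concrete, the sketch below solves one small system $Rc = d$ in three ways: a general LU-based solve, a Cholesky factorization, and an explicit inverse. The test problem is an assumption chosen for illustration: a Toeplitz matrix built from $r(\ell) = 0.9^{|\ell|}$ with $d$ set to the one-step-ahead lags, for which the exact answer is $c = [0.9, 0, 0, 0]^T$.

```python
import numpy as np

# Assumed example: autocorrelation r(l) = a^|l| of a first-order process
a = 0.9
M_order = 4
lags = np.arange(M_order)
R_toep = a ** np.abs(lags[:, None] - lags[None, :])   # Toeplitz, symmetric
d_pred = a ** (lags + 1)                              # one-step prediction RHS

# 1) General solver (LU factorization with pivoting)
c_lu = np.linalg.solve(R_toep, d_pred)

# 2) Cholesky factorization R = L L^T, then two solves.
#    np.linalg.solve is a general solver; a dedicated triangular solver
#    would be used in practice to exploit the structure of L.
L = np.linalg.cholesky(R_toep)
z = np.linalg.solve(L, d_pred)
c_chol = np.linalg.solve(L.T, z)

# 3) Explicit inverse (usually the least attractive numerically)
c_inv = np.linalg.inv(R_toep) @ d_pred

for name, c in [("lu", c_lu), ("cholesky", c_chol), ("inverse", c_inv)]:
    # The difference from the exact answer reflects rounding error only
    print(name, np.max(np.abs(c - np.array([a, 0.0, 0.0, 0.0]))))
```

All three routes agree up to rounding error on this well-conditioned problem. Differences between methods tend to become visible as $R$ approaches singularity.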
