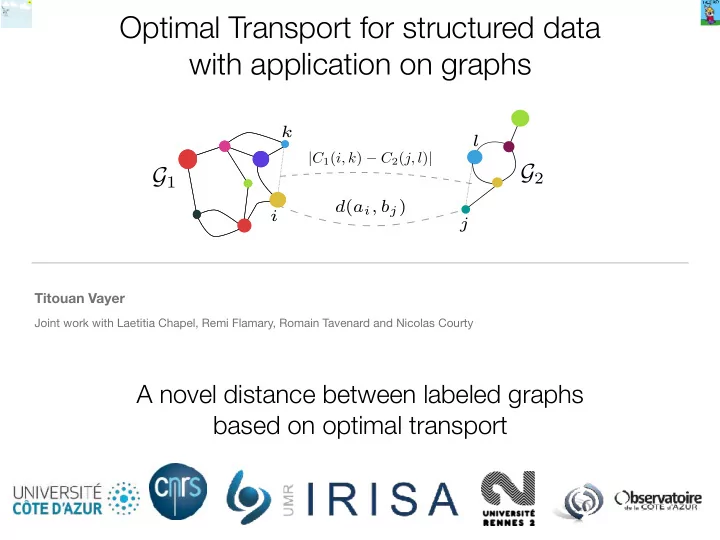

SLIDE 1 Optimal Transport for structured data with application on graphs

Titouan Vayer

Joint work with Laetitia Chapel, Remi Flamary, Romain Tavenard and Nicolas Courty

A novel distance between labeled graphs based on optimal transport

SLIDE 2 Contributions:

- Differentiable distance between labeled graphs.

Jointly considers the features and the structures

SLIDE 3 Contributions:

- Differentiable distance between labeled graphs.

Jointly considers the features and the structures

Optimal transport: soft assignment between the nodes

Distance = 1.41

SLIDE 4 Contributions:

Computing average

[Figure: graph = 1/2 ( graph + graph )]

- Differentiable distance between labeled graphs.

Jointly considers the features and the structures

SLIDE 5

Structured data as probability distribution

SLIDE 6

Structured data as probability distribution

Features $(a_i)_i$

SLIDE 7

Structured data as probability distribution

Features $(a_i)_i$

nodes in the metric space of the graph $(x_i)_i$

SLIDE 8

Structured data as probability distribution

Features $(a_i)_i$

nodes in the metric space of the graph $(x_i)_i$

weighted by their masses $(h_i)_i$
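As a rough illustration of this representation, the sketch below turns a small labeled graph into the triple (features, structure matrix, masses). It assumes that the "metric space of the graph" is given by shortest-path hop distances and that the masses are uniform; both are illustrative choices, and the helper names `shortest_path_matrix` and `graph_as_distribution` are hypothetical.

```python
from collections import deque


def shortest_path_matrix(adjacency):
    """All-pairs hop distances via BFS (assumed choice of graph metric).

    adjacency maps each node index 0..n-1 to a list of neighbour indices.
    """
    n = len(adjacency)
    dist = [[float("inf")] * n for _ in range(n)]
    for source in range(n):
        dist[source][source] = 0
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adjacency[u]:
                if dist[source][v] == float("inf"):
                    dist[source][v] = dist[source][u] + 1
                    queue.append(v)
    return dist


def graph_as_distribution(adjacency, features):
    """Return (a, C, h): node features, structure matrix, node masses."""
    n = len(adjacency)
    structure = shortest_path_matrix(adjacency)
    masses = [1.0 / n] * n  # uniform masses (assumption): they sum to 1
    return features, structure, masses


# Path graph 0 - 1 - 2 with one scalar feature per node.
adjacency = {0: [1], 1: [0, 2], 2: [1]}
features = [[0.0], [1.0], [0.0]]
a, C, h = graph_as_distribution(adjacency, features)
```

Here `a` plays the role of $(a_i)_i$, the rows of `C` encode where each node $x_i$ sits relative to the others, and `h` holds the masses $(h_i)_i$.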

SLIDE 9

Optimal transport in a nutshell

Compare two probability distributions by transporting one onto another

Wasserstein distance

Gromov-Wasserstein distance

[Figure labels: $\mu_X$, $\nu_Y$, $d(a_i, b_j)$, $\mu_A$, $\nu_B$]
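For reference, the two distances can be written in their standard discrete form. This is a sketch under assumptions: $\mu_A, \nu_B$ are taken to be the distributions over features and $\mu_X, \nu_Y$ those over structures, $\pi$ ranges over the set $\Pi$ of couplings whose marginals are the node masses of the two graphs, and $C_1, C_2$ are the pairwise structure matrices of the two graphs.

$$
W_q(\mu_A, \nu_B)^q = \min_{\pi \in \Pi} \sum_{i,j} d(a_i, b_j)^q \, \pi_{i,j}
\qquad
GW_q(\mu_X, \nu_Y)^q = \min_{\pi \in \Pi} \sum_{i,j,k,l} |C_1(i, k) - C_2(j, l)|^q \, \pi_{i,j} \, \pi_{k,l}
$$

The Wasserstein cost is linear in $\pi$ and compares features directly, while the Gromov-Wasserstein cost is quadratic in $\pi$ and compares pairwise distances within each graph.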

SLIDE 12 Fused Gromov-Wasserstein distance

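$$
\mathrm{FGW}_{q,\alpha}(\mu, \nu) = \min_{\pi \in \Pi(\mu, \nu)} \sum_{i,j,k,l} \Big( (1 - \alpha)\, d(a_i, b_j)^q + \alpha\, |C_1(i, k) - C_2(j, l)|^q \Big)\, \pi_{i,j}\, \pi_{k,l}
$$

where $\pi$ is the soft assignment matrix

$\alpha$ is a trade-off features/structures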
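To make the objective concrete, here is a minimal sketch that evaluates the sum inside the minimum for one fixed coupling $\pi$ (it does not perform the minimization). It assumes $d$ is the Euclidean distance between feature vectors; the function name `fgw_cost` and its arguments are hypothetical, and the quadruple loop mirrors the formula rather than aiming for speed.

```python
def fgw_cost(features_a, features_b, C1, C2, coupling, alpha=0.5, q=2):
    """Evaluate the FGW objective for a given coupling (no optimization)."""

    def d(x, y):
        # Euclidean distance between feature vectors (assumed choice of d).
        return sum((xi - yi) ** 2 for xi, yi in zip(x, y)) ** 0.5

    n, m = len(C1), len(C2)
    total = 0.0
    for i in range(n):
        for j in range(m):
            for k in range(n):
                for l in range(m):
                    feature_term = (1 - alpha) * d(features_a[i], features_b[j]) ** q
                    structure_term = alpha * abs(C1[i][k] - C2[j][l]) ** q
                    total += (feature_term + structure_term) * coupling[i][j] * coupling[k][l]
    return total


# Two copies of the same 2-node labeled graph.
feats = [[0.0], [1.0]]
C = [[0, 1], [1, 0]]
identity = [[0.5, 0.0], [0.0, 0.5]]  # matches each node to itself
swapped = [[0.0, 0.5], [0.5, 0.0]]   # matches each node to the other one

cost_identity = fgw_cost(feats, feats, C, C, identity)
cost_swapped = fgw_cost(feats, feats, C, C, swapped)
cost_swapped_structure_only = fgw_cost(feats, feats, C, C, swapped, alpha=1.0)
```

On this toy pair the identity coupling costs nothing, while the swapped coupling is penalized only through the feature term: with `alpha=1.0` the labels are ignored and the swap becomes free, because the structure is symmetric.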

SLIDE 13 Fused Gromov-Wasserstein distance

Properties

- Interpolates between the Wasserstein distance on the features and the Gromov-Wasserstein distance on the structures

- Distance on labeled graphs: vanishes iff the graphs have the same labels and weights at the same place up to a permutation

Optimization problem

- Non-convex Quadratic Program: hard!

- Conditional Gradient Descent (aka Frank-Wolfe)

- Suitable for entropic regularization + Sinkhorn iterations
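The Sinkhorn iterations mentioned in the last item can be sketched for the simpler case of a fixed cost matrix. This is only the inner building block, not a full FGW solver: an entropic solver for the quadratic problem would typically call such a routine repeatedly on a cost matrix derived from the current coupling. The function name `sinkhorn` and the parameters `epsilon` and `n_iters` are illustrative choices.

```python
import math


def sinkhorn(cost, a, b, epsilon=0.1, n_iters=200):
    """Entropy-regularized OT plan for a fixed cost matrix.

    cost: n x m list of lists; a, b: source and target masses.
    """
    n, m = len(a), len(b)
    # Gibbs kernel K = exp(-cost / epsilon).
    K = [[math.exp(-cost[i][j] / epsilon) for j in range(m)] for i in range(n)]
    u = [1.0] * n
    v = [1.0] * m
    for _ in range(n_iters):
        # Alternately rescale rows and columns to match the marginals.
        u = [a[i] / sum(K[i][j] * v[j] for j in range(m)) for i in range(n)]
        v = [b[j] / sum(K[i][j] * u[i] for i in range(n)) for j in range(m)]
    # Coupling diag(u) K diag(v).
    return [[u[i] * K[i][j] * v[j] for j in range(m)] for i in range(n)]


cost = [[0.0, 1.0], [1.0, 0.0]]
plan = sinkhorn(cost, [0.5, 0.5], [0.5, 0.5])
```

Smaller values of `epsilon` give a plan closer to the unregularized one but can cause numerical underflow in the kernel, so practical implementations usually work in the log domain.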

SLIDE 14 Applications

[Figure panels: Noiseless graph; Sample 1 to Sample 6; Bary n = 15; Bary n = 7]

Classification

Graph Barycenter + k-means clustering of graphs

SLIDE 15

Check out our poster at Pacific Ballroom #133!!