SLIDE 1

Open Source Bug Fixes: Characterization and Prediction

Vikash Balasubramanian, Kashif Khan

November 27, 2019

University of Waterloo

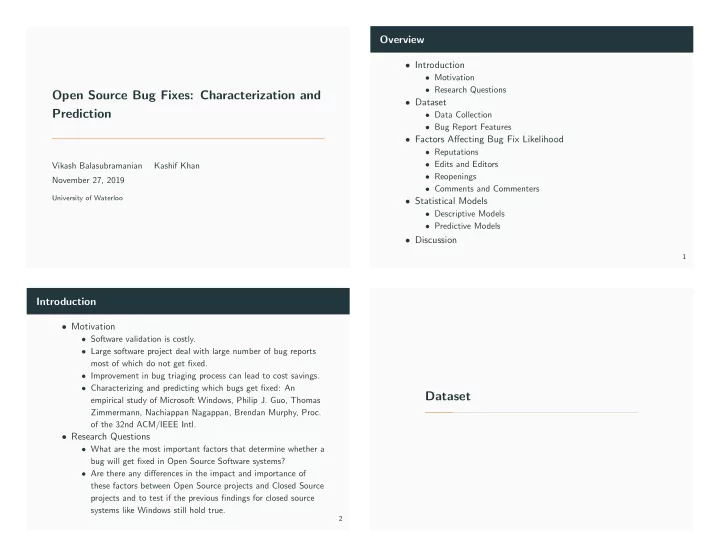

Overview

- Introduction

- Motivation

- Research Questions

- Dataset

- Data Collection

- Bug Report Features

- Factors Affecting Bug Fix Likelihood

- Reputations

- Edits and Editors

- Reopenings

- Comments and Commenters

- Statistical Models

- Descriptive Models

- Predictive Models

- Discussion

Introduction

- Motivation

- Software validation is costly.

- Large software projects deal with a large number of bug reports, most of which do not get fixed.

- Improving the bug triaging process can lead to cost savings.

- Characterizing and predicting which bugs get fixed: An empirical study of Microsoft Windows, Philip J. Guo, Thomas Zimmermann, Nachiappan Nagappan, Brendan Murphy, Proc. of the 32nd ACM/IEEE Intl.

- Research Questions

- What are the most important factors that determine whether a bug will get fixed in Open Source Software systems?

- Are there any differences in the impact and importance of