SLIDE 1

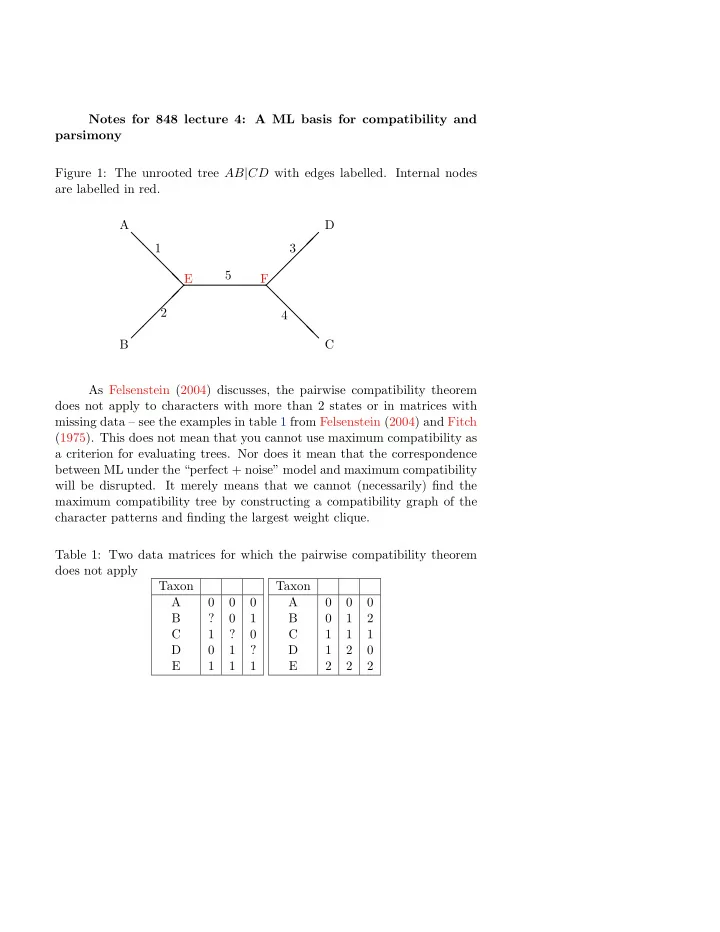

Notes for 848 lecture 4: A ML basis for compatibility and parsimony Figure 1: The unrooted tree AB|CD with edges labelled. Internal nodes are labelled in red. A B D C 1 2 3 4 5

❅ ❅ ❅ ❅ ❅

- E

F

- ❅

Notes for 848 lecture 4: A ML basis for compatibility and parsimony - - PDF document

Notes for 848 lecture 4: A ML basis for compatibility and parsimony Figure 1: The unrooted tree AB | CD with edges labelled. Internal nodes are labelled in red. A D 1 3 5 E F