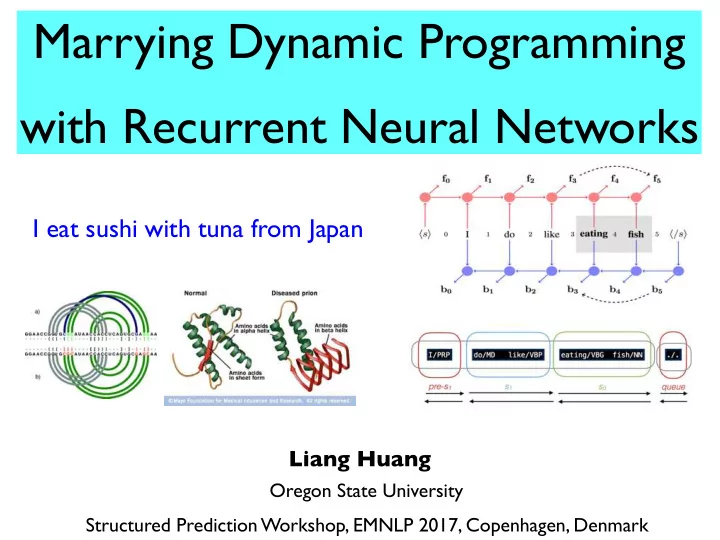

Marrying Dynamic Programming with Recurrent Neural Networks

I eat sushi with tuna from Japan

Liang Huang

Oregon State University Structured Prediction Workshop, EMNLP 2017, Copenhagen, Denmark


James Cross


Feedforward NNs (Chen+Manning 14)

Stack LSTM (Dyer+ 15)

biRNN dependency (Kiperwasser+Goldberg 16; Cross+Huang 16a)

biRNN span-based constituency (Cross+Huang 16b)

minimal span-based constituency (Stern+ ACL 17)

minimal dependency (Shi+ EMNLP 17)

edge-factored (McDonald+ 05a)

biRNN graph-based dependency (Kiperwasser+Goldberg 16; Wang+Chang 16)

DP incremental parsing (Huang+Sagae 10, Kuhlmann+ 11)

RNNG (Dyer+ 16)

DP impossible; enables slow DP; enables fast DP; fastest DP: O(n^3)

all tree info (summarize output y) vs. minimal or no tree info (summarize input x)

constituency; dependency; bottom-up


(Huang & Sagae, ACL 2010*; Kuhlmann et al., ACL 2011; Mi & Huang, ACL 2015)

* best paper nominee


Liang Huang (Oregon State)


Sentence: I eat sushi with tuna from Japan in a restaurant

| step | action | stack | queue |
|---|---|---|---|
| 0 | | | I eat sushi ... |
| 1 | shift | I | eat sushi with ... |
| 2 | shift | I eat | sushi with tuna ... |
| 3 | l-reduce | eat (child: I) | sushi with tuna ... |
| 4 | shift | eat (child: I) sushi | with tuna from ... |
| 5a | r-reduce | eat (children: I, sushi) | with tuna from ... |
| 5b | shift | eat (child: I) sushi with | tuna from Japan ... |

Steps 5a and 5b are alternatives: a shift-reduce conflict.
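The action sequence above can be replayed with a few lines of Python. This is only a minimal sketch: the function name `run_actions` and the arc representation (pairs of word indices, head first) are assumptions made for illustration, not taken from the slides.

```python
from typing import List, Tuple


def run_actions(words: List[str], actions: List[str]) -> Tuple[List[int], List[Tuple[int, int]]]:
    """Replay shift / l-reduce / r-reduce and return (stack, arcs).

    The stack holds word indices; arcs are (head, dependent) index pairs.
    """
    stack: List[int] = []
    queue = list(range(len(words)))
    arcs: List[Tuple[int, int]] = []
    for act in actions:
        if act == "shift":
            stack.append(queue.pop(0))
        elif act == "l-reduce":
            # The top of the stack becomes the head of the item below it.
            head = stack.pop()
            dep = stack.pop()
            arcs.append((head, dep))
            stack.append(head)
        elif act == "r-reduce":
            # The item below the top becomes the head of the top item.
            dep = stack.pop()
            head = stack[-1]
            arcs.append((head, dep))
        else:
            raise ValueError(f"unknown action: {act}")
    return stack, arcs


words = "I eat sushi with tuna from Japan in a restaurant".split()
prefix = ["shift", "shift", "l-reduce", "shift"]

# The two alternatives at step 5 (the shift-reduce conflict).
stack_5a, arcs_5a = run_actions(words, prefix + ["r-reduce"])
stack_5b, arcs_5b = run_actions(words, prefix + ["shift"])
```

After step 5a the stack holds only "eat" with both "I" and "sushi" attached; after step 5b "sushi" is still on the stack and "with" has been shifted, so later words can still attach to "sushi".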


each DP state corresponds to exponentially many non-DP states

(Huang and Sagae, 2010)

graph-structured stack

(Tomita, 1986)

[Plot: x-axis is sentence length (0 to 70); y-axis is on a log scale from 10^0 to 10^10. Curves: "DP: exponential" and "non-DP beam search".]


[Figure: stacks "I sushi", "I eat sushi" and "eat sushi"; states "... eat sushi" and "... I sushi".]

(Huang and Sagae, 2010)

psycholinguistic evidence (eye-tracking experiments): delayed disambiguation

"John and Mary had 2 papers each" vs. "John and Mary had 2 papers together"

Frazier and Rayner (1990), Frazier (1999)

[Plot: parsing time (secs, 0.2 to 1.4) against sentence length (0 to 70) for Charniak, Berkeley, MST, and this work, annotated with O(n^2), O(n), O(n^2.4) and O(n^2.5).]


← stack: ... s2 s1 s0 | queue: q0 q1 ... →

Example: stack "... feed cats" (with "I" and "nearby" drawn below as dependents), queue "in the garden ..."

features: (s0.w, s0.rc, q0, ...) = (cats, nearby, in, ...)

(Huang+Sagae, 2010)
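As a rough illustration of why a small feature window matters for DP, the sketch below groups parser states by a feature signature, so that states with identical features can share one DP item. It uses only the three features shown on the slide (s0.w, s0.rc, q0); the slide's feature list is truncated, so a real system would compare more. The `State` type, the helper names and the example sentence are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Optional, Tuple

Signature = Tuple[Optional[str], Optional[str], Optional[str]]


class State(NamedTuple):
    stack: Tuple[str, ...]   # head words on the stack, bottom to top
    s0_rc: Optional[str]     # rightmost child of the stack top, if any
    queue_pos: int           # index of q0 in the sentence


def signature(state: State, words: List[str]) -> Signature:
    """Return (s0.w, s0.rc, q0) for a state."""
    s0_w = state.stack[-1] if state.stack else None
    q0 = words[state.queue_pos] if state.queue_pos < len(words) else None
    return (s0_w, state.s0_rc, q0)


def merge(states: List[State], words: List[str]) -> Dict[Signature, List[State]]:
    """Group states that are indistinguishable under the signature."""
    groups: Dict[Signature, List[State]] = defaultdict(list)
    for st in states:
        groups[signature(st, words)].append(st)
    return dict(groups)


# Hypothetical sentence built around the words visible on the slide.
words = "I feed cats nearby in the garden".split()
a = State(stack=("feed", "cats"), s0_rc="nearby", queue_pos=4)
b = State(stack=("cats",), s0_rc="nearby", queue_pos=4)
c = State(stack=("feed", "cats"), s0_rc=None, queue_pos=3)
merged = merge([a, b, c], words)
```

Here `a` and `b` differ deeper in the stack but look identical to the feature function, so they fall into the same group, while `c` stays separate.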


(Chen+Manning 2014): manually designed atomic features on the stack

stack LSTM / RNNG (Dyer+ 15, 16): rules out DP! :(

biLSTM dependency parsing (Kiperwasser+Goldberg 16, Cross+Huang 16a) and biLSTM constituency parsing (Cross+Huang 16b): enables DP! :)


, e.g. graph-based, but also incremental!

these developments led to state-of-the-art results in dependency parsing

(Cross and Huang, ACL 2016) (Kiperwasser and Goldberg 2016)

[Figure: two stack/queue configurations for "I/PRP do/MD like/VBP eating/VBG fish/NN": one marked with span boundaries (1, 3, 4, 5), and one labeled "previous work" with partial trees (VP', NP) on the stack.]

(Cross and Huang, EMNLP 2016)


Sentence with positions: 0 I/PRP 1 do/MD 2 like/VBP 3 eating/VBG 4 fish/NN 5

Tree: (S (NP (PRP I)) (VP (MD do) (VBP like) (S (VP (VBG eating) (NP (NN fish))))))

Structural (even step): Shift, Combine

Label (odd step): Label-X, No-Label

| action | current brackets t |
|---|---|
| (start) | {} |
| Shift | |
| Label-NP | {0NP1} |
| Shift | |
| No-Label | {0NP1} |
| Shift | |
| No-Label | {0NP1} |

(Cross and Huang, EMNLP 2016)


| action | current brackets t |
|---|---|
| (start) | {0NP1} |
| Combine | |
| No-Label | {0NP1} |
| Shift | |
| No-Label | {0NP1} |
| Shift | |
| Label-NP | {0NP1, 4NP5} |

(Cross and Huang, EMNLP 2016)


| action | current brackets t |
|---|---|
| (start) | {0NP1, 4NP5} |
| Combine | |
| Label-S-VP | {0NP1, 4NP5, 3S5, 3VP5} |
| Combine | |
| Label-VP | {0NP1, 4NP5, 3S5, 3VP5, 1VP5} |
| Combine | |
| Label-S | {0NP1, 4NP5, 3S5, 3VP5, 1VP5, 0S5} |

(Cross and Huang, EMNLP 2016)
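The full action sequence for "I do like eating fish" can be replayed mechanically. The sketch below assumes the stack is stored as a list of span boundaries (adjacent boundaries delimit one span), which matches the numbered positions on the slides; the function name `replay` and the bracket encoding `(i, label, j)` are illustrative choices, not the authors' code.

```python
from typing import List, Set, Tuple

Bracket = Tuple[int, str, int]  # (left boundary, label, right boundary)


def replay(n: int, actions: List[str]) -> Tuple[List[int], Set[Bracket]]:
    """Replay alternating structural (even step) and label (odd step) actions."""
    stack = [0]                    # span boundaries; starts before the first word
    brackets: Set[Bracket] = set()
    for step, act in enumerate(actions):
        if step % 2 == 0:          # structural action
            if act == "Shift":
                assert stack[-1] < n, "queue is empty"
                stack.append(stack[-1] + 1)
            elif act == "Combine":
                assert len(stack) >= 3, "need two spans to combine"
                del stack[-2]      # merge the top two spans into one
            else:
                raise ValueError(f"bad structural action: {act}")
        else:                      # label action on the top span
            if act != "No-Label":
                i, j = stack[-2], stack[-1]
                # "Label-S-VP" adds a unary chain: both S and VP over (i, j).
                for label in act[len("Label-"):].split("-"):
                    brackets.add((i, label, j))
    return stack, brackets


actions = [
    "Shift", "Label-NP", "Shift", "No-Label", "Shift", "No-Label",
    "Combine", "No-Label", "Shift", "No-Label", "Shift", "Label-NP",
    "Combine", "Label-S-VP", "Combine", "Label-VP", "Combine", "Label-S",
]
final_stack, t = replay(5, actions)
```

A sentence of n words always takes 2(2n - 1) steps in this system (18 for n = 5), since every Shift or Combine is followed by exactly one label decision.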

[Figure: bidirectional LSTM over "<s> I do like eating fish </s>", producing forward and backward outputs f0, b0, f1, b1, ..., f5, b5 at positions 0 to 5.]

(Cross and Huang, EMNLP 2016)
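The slide only shows the per-position outputs f and b. One common way to turn them into a span representation, and to my understanding the one used in Cross and Huang (2016), is to subtract boundary vectors: span (i, j) is represented by f_j - f_i concatenated with b_i - b_j. The sketch below illustrates that idea with plain Python lists; the vectors are made-up toy values and `span_features` is a hypothetical name.

```python
from typing import List

Vector = List[float]


def span_features(f: List[Vector], b: List[Vector], i: int, j: int) -> Vector:
    """Represent span (i, j) as [f_j - f_i ; b_i - b_j] (assumed form)."""
    forward = [fj - fi for fj, fi in zip(f[j], f[i])]
    backward = [bi - bj for bi, bj in zip(b[i], b[j])]
    return forward + backward


# Toy outputs for positions 0..5 (not real LSTM states).
f = [[float(t), 2.0 * t] for t in range(6)]
b = [[float(5 - t)] for t in range(6)]

features_1_3 = span_features(f, b, 1, 3)   # span covering "do like"
```

Because the representation depends only on the boundaries i and j, it carries no information about the tree built inside the span, which is the property that makes DP possible.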

Structural action: 4 spans (pre-s1, s1, s0, queue), e.g. pre-s1: I/PRP; s1: do/MD like/VBP; s0: eating/VBG fish/NN; queue: ./.

Label action: 3 spans (pre-s0, s0, queue), e.g. pre-s0: I/PRP; s0: do/MD like/VBP eating/VBG fish/NN; queue: ./.

| Parser | Search | Recall | Prec. | F1 |
|---|---|---|---|---|
| Carreras et al. (2008) | cubic | 90.7 | 91.4 | 91.1 |
| Shindo et al. (2012) | cubic | | | 91.1 |
| Thang et al. (2015) | ~cubic | | | 91.1 |
| Watanabe et al. (2015) | beam | | | 90.7 |
| Static Oracle | greedy | 90.7 | 91.4 | 91.0 |
| Dynamic + Exploration | greedy | 90.5 | 92.1 | 91.3 |

small embeddings trained from scratch

(Cross and Huang, EMNLP 2016)

(Kai and Huang, EMNLP 2017)

RST discourse tree + PTB

[Figure: joint tree with a discourse-level part and a syntax-level part.]


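(Cross+Huang, EMNLP16)

structural action: $\mathrm{score}_{\mathrm{action}}(i, k, j)$, where s1 spans $(i, k)$ and s0 spans $(k, j)$

pre-s1: I/PRP; s1: do/MD like/VBP; s0: eating/VBG fish/NN; queue: ./.

label action: $\mathrm{score}_{\mathrm{label}}(i, j)$, where s0 spans $(i, j)$

pre-s0: I/PRP; s0: do/MD like/VBP eating/VBG fish/NN; queue: ./.

(Stern+, ACL 2017)

$$\mathrm{best}(i, j) = \max_{\mathit{label}} \mathrm{score}_{\mathrm{label}}(i, j) + \max_{k} \big[ \mathrm{best}(i, k) + \mathrm{best}(k, j) \big]$$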
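The recurrence can be evaluated bottom-up in O(n^3) time with a memoized chart. The sketch below is a minimal illustration, not the authors' implementation. It assumes label scores are given as a dictionary from span to per-label scores (in the parser these would come from the network), and it assumes that single-word spans, which the slide does not spell out, are scored by their best label alone.

```python
from functools import lru_cache
from typing import Dict, Tuple

LabelScores = Dict[Tuple[int, int], Dict[str, float]]


def best_tree_score(n: int, label_scores: LabelScores) -> float:
    """Score of the best binary tree over positions 0..n under the recurrence."""

    @lru_cache(maxsize=None)
    def best(i: int, j: int) -> float:
        label_part = max(label_scores[(i, j)].values())
        if j == i + 1:
            # Assumed base case: a single-word span has no split point.
            return label_part
        split_part = max(best(i, k) + best(k, j) for k in range(i + 1, j))
        return label_part + split_part

    return best(0, n)


# Toy scores for a 3-word sentence (made-up numbers).
toy_scores: LabelScores = {
    (0, 1): {"NP": 2.0, "nolabel": 0.0},
    (1, 2): {"VP": -1.0, "nolabel": 0.0},
    (2, 3): {"NP": 1.0, "nolabel": 0.0},
    (0, 2): {"S": -2.0, "nolabel": 0.0},
    (1, 3): {"VP": 3.0, "nolabel": 0.0},
    (0, 3): {"S": 4.0, "nolabel": 0.0},
}
toy_best = best_tree_score(3, toy_scores)
```

With these toy numbers the best tree splits (0, 3) at k = 1, because the VP over (1, 3) scores well. The label choice and the split choice are maximized independently, which is what keeps the chart small.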

want $s(t^*) - s(t) \ge \Delta(t, t^*)$ for all trees $t$, i.e. a larger margin for worse trees

loss-augmented decoding in training: find the most-violated tree, i.e., a bad tree with a good score, $\hat{t} = \arg\max_t \big[ s(t) + \Delta(t, t^*) \big]$

loss-augmented decoding for Hamming loss (approximating F1): simply replace $\mathrm{score}_{\mathrm{label}}(i, j)$ with $\mathrm{score}_{\mathrm{label}}(i, j) + \mathbf{1}(\mathit{label} \ne \mathit{label}^*_{ij})$, where $\mathit{label}^*_{ij}$ is the gold tree label for span $(i, j)$ (could be "nolabel")

(Stern+, ACL 2017)
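Because the Hamming loss decomposes over spans, loss augmentation only changes the label scores and the same decoder can be reused unchanged. The sketch below shows that replacement step under stated assumptions: scores are stored as a dictionary from span to per-label scores, spans missing from the gold dictionary are treated as "nolabel", and the name `loss_augment` is hypothetical.

```python
from typing import Dict, Tuple

Span = Tuple[int, int]
LabelScores = Dict[Span, Dict[str, float]]


def loss_augment(label_scores: LabelScores, gold_labels: Dict[Span, str]) -> LabelScores:
    """Add 1 to the score of every label that disagrees with the gold label."""
    augmented: LabelScores = {}
    for span, scores in label_scores.items():
        # Assumption: spans absent from the gold tree carry "nolabel".
        gold = gold_labels.get(span, "nolabel")
        augmented[span] = {
            label: score + (1.0 if label != gold else 0.0)
            for label, score in scores.items()
        }
    return augmented


scores: LabelScores = {
    (0, 1): {"NP": 2.0, "nolabel": 0.0},
    (0, 2): {"S": 1.0, "nolabel": 0.5},
}
gold = {(0, 1): "NP"}
augmented_scores = loss_augment(scores, gold)
```

Decoding with the augmented scores returns the tree that maximizes score plus loss, i.e. the most-violated tree used for the margin update.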

| Parser | F1 Score |
|---|---|
| Hall et al. (2014) | 89.2 |
| Vinyals et al. (2015) | 88.3 |
| Cross and Huang (2016b) | 91.3 |
| Dyer et al. (2016) corrected | 91.7 |
| Liu and Zhang (2017) | 91.7 |
| Chart Parser | 91.7 |
| +refinement | 91.8 |

(Stern+, ACL 2017)


(Cross and Huang, ACL 2016) arc-standard

(Kiperwasser and Goldberg 2016) arc-eager

(Shi, Huang, Lee, EMNLP 2017) Saturday talk!

arc-hybrid and arc-eager

works for both greedy and O(n^3) DP


Thank you very much! (fēi cháng gǎn xiè)

James Cross
