
Lower Bounds

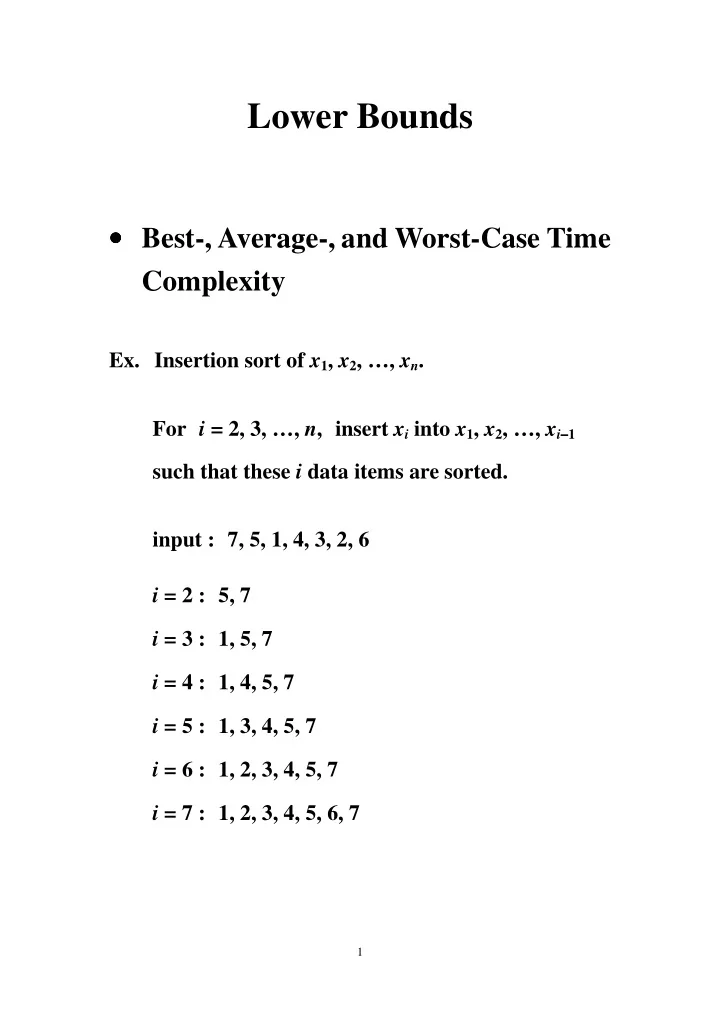

- Best-, Average-, and Worst-Case Time Complexity

- Ex. Insertion sort of $x_1, x_2, \ldots, x_n$.

For $i = 2, 3, \ldots, n$, insert $x_i$ into $x_1, x_2, \ldots, x_{i-1}$ such that these $i$ data items are sorted.
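The insertion step described above can be sketched as follows. This is a minimal Python sketch, not taken from the slides: the function name `insertion_sort` and the shift counter are illustrative assumptions, and indices are 0-based, so the loop variable `i` in the code corresponds to $i - 1$ in the slide's notation.

```python
def insertion_sort(xs):
    """Return a sorted copy of xs and the number of element shifts performed.

    Hypothetical helper for illustration; the shift counter is only there
    to make the best-case and worst-case behaviour visible.
    """
    a = list(xs)
    shifts = 0
    for i in range(1, len(a)):          # slide notation: i = 2, 3, ..., n
        key = a[i]                      # the item x_i being inserted
        j = i - 1
        # Move larger items of the sorted prefix one position to the right.
        while j >= 0 and a[j] > key:
            a[j + 1] = a[j]
            shifts += 1
            j -= 1
        a[j + 1] = key                  # the first i + 1 items are now sorted
    return a, shifts


if __name__ == "__main__":
    print(insertion_sort([1, 2, 3, 4, 5]))
    print(insertion_sort([5, 4, 3, 2, 1]))
```

On an already sorted input the inner loop never runs (0 shifts). On a reverse-sorted input of length $n$ it performs $1 + 2 + \cdots + (n - 1) = n(n-1)/2$ shifts.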

$\sum_{i=2}^{n} (\,\ldots\,) = O(n^2)$.

3

i

−1

( ) i k i

− =

1 1

= n

×

$\sum_{i=1}^{k} i\,2^{i-1} = 2^{k}(k - 1) + 1$
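Assuming the formula above is the standard identity $\sum_{i=1}^{k} i\,2^{i-1} = 2^{k}(k-1) + 1$ (the summand is not fully recoverable from the extracted text, so this reading is an assumption), it can be checked numerically. The helper names `lhs` and `rhs` are illustrative only.

```python
def lhs(k):
    """Left-hand side: sum of i * 2^(i-1) for i = 1..k."""
    return sum(i * 2 ** (i - 1) for i in range(1, k + 1))


def rhs(k):
    """Right-hand side of the assumed closed form: 2^k * (k - 1) + 1."""
    return 2 ** k * (k - 1) + 1


if __name__ == "__main__":
    # Compare both sides for a range of small k.
    for k in range(1, 11):
        print(k, lhs(k), rhs(k), lhs(k) == rhs(k))
```

The two sides agree for every $k$ tried here. That supports the assumed closed form but is not a proof, and induction on $k$ gives one.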

[Figure: comparison trees for searching for $x$ in $A$. Node labels: $x : A(1)$, $x : A(2)$, $x : A(n)$, $x : A(\frac{n+1}{2})$, $x : A(\frac{n+1}{4})$, $x : A(\frac{3(n+1)}{4})$, $x : A(\frac{n+1}{2} - 1)$, $x : A(\frac{n+1}{2} + 1)$. Leaf labels: Failure.]


min

12

− − −1 (x2 is even)

−

− − −1)

−

− − −1)h

min

= Ω


C C C C A A B D H H E H G E F

[Figure: $H_1$, $H_2$, $H_3$, with labels 1; 00, 01, 10, 11; and 000, 001, 010, 011, 100, 101, 110, 111.]

− − −1 : the (n −

$10^{n-1}$, $0^{n}$, $01^{n-1}$


− − −1 is n + 1.

− − −1 ≥

− − −1 ≤

− − −1 = n + 1.

Let $(k, k(+), k(-), k(\pm))$ be a state, where

$k(-)$ : the number of $a_i$'s that have lost but never won

$k(\pm)$ : the number of $a_i$'s that have both won and lost

… $k(-)$, $k(\pm)$) to one of the following states:

… + 1, $k(-)$ + 1, $k(\pm)$);

… $k(-)$ + 1, $k(\pm)$) or ($k$ − …

… $k(-)$, $k(\pm)$)

… $k(-)$, $k(\pm)$ + 1);

… $k(-)$, $k(\pm)$ + 1);

… $k(-)$ − …

… $k(\pm)$ + 1),

… $k(-)$;

≥

… $k(-)$ ≥

… $k(-)$ are

… $k(\pm)$

come from $k(+)$

… $k(-)$,

… − 2 (0, 1, 1, 2p − …

… − 2

$T_{\propto}$ : the reduction time.

$T_{\propto}$ : O(1).

$T_{\propto}$ : O(n + m).

… $T_{\propto}$ + $L_2$ + T

… $T_{\propto}$, T are known and $T_{\propto}$ ≤ …

$T_{\propto}$ : O(n).

$(x_1, x_1^2), (x_2, x_2^2), \ldots, (x_n, x_n^2)$

[Figure: the points $(x_4, x_4^2)$, $(x_3, x_3^2)$, $(x_1, x_1^2)$, $(x_2, x_2^2)$.]
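The points above place each $x_i$ on the parabola $y = x^2$, which takes O(n) time for $n$ numbers. The sketch below shows that mapping and checks one property of it: for distinct inputs, every three lifted points taken in increasing $x$ order make a strict left turn, so no lifted point lies inside the hull of the others. A common use of this construction is reducing sorting to convex hull, but that the slide intends exactly that reduction is an assumption, since the extracted text does not name the target problem. The names `lift`, `cross`, and `all_left_turns` are illustrative.

```python
def lift(xs):
    """Map each number x to the point (x, x^2); one pass, O(n)."""
    return [(x, x * x) for x in xs]


def cross(o, a, b):
    """Cross product of vectors o->a and o->b; positive means a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def all_left_turns(points):
    """Check that consecutive triples, in increasing x order, turn left.

    Sorting is used here only to verify the property, not as part of the
    reduction itself.
    """
    pts = sorted(points)
    return all(cross(pts[i], pts[i + 1], pts[i + 2]) > 0
               for i in range(len(pts) - 2))


if __name__ == "__main__":
    pts = lift([4, 3, 1, 2])
    print(pts)
    print(all_left_turns(pts))
```

For three lifted points with $a < b < c$, the cross product equals $(b - a)(c - a)(c - b)$, which is positive, so the check succeeds for any distinct inputs.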

$T_{\propto}$ : O(n).

[Figure: the points $(x_1, 0), (x_2, 0), (x_3, 0), \ldots, (x_{n-1}, 0), (x_n, 0)$.]