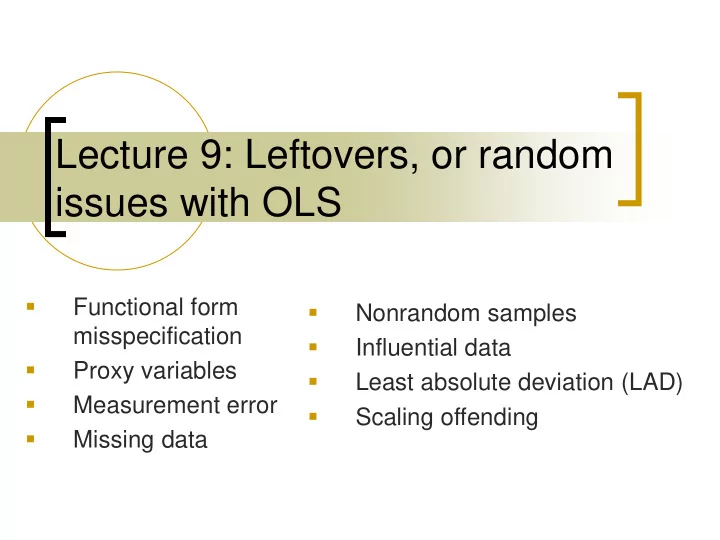

Lecture 9: Leftovers, or random issues with OLS

- Functional form misspecification

- Proxy variables

- Measurement error

- Missing data

- Nonrandom samples

- Influential data

- Least absolute deviation (LAD)

- Scaling offending



doesn’t bias the estimates for the represented age cohort.
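The fragment above appears to concern selecting the sample on an explanatory variable (age). Assuming that reading, a small simulation can illustrate the standard result: restricting the sample on a regressor leaves the OLS slope unbiased for the represented range, whereas restricting on the outcome biases it. The data, coefficients, and the 18-24 age window are all hypothetical.

```python
import numpy as np

def ols_slope(x, y):
    """Slope from a simple OLS regression of y on x (intercept included)."""
    xc = x - x.mean()
    return float(np.dot(xc, y - y.mean()) / np.dot(xc, xc))

def selection_demo(n=200_000, beta0=1.0, beta1=0.5, seed=0):
    rng = np.random.default_rng(seed)
    # Hypothetical 'age' regressor and an outcome generated by a linear model
    age = rng.uniform(12, 30, size=n)
    y = beta0 + beta1 * age + rng.normal(0, 2, size=n)

    full = ols_slope(age, y)
    # Selection on the explanatory variable: keep only ages 18-24
    on_x = (age >= 18) & (age <= 24)
    sel_x = ols_slope(age[on_x], y[on_x])
    # Selection on the outcome: keep only cases with y below its median
    on_y = y < np.median(y)
    sel_y = ols_slope(age[on_y], y[on_y])
    return full, sel_x, sel_y

full, sel_x, sel_y = selection_demo()
print(f"full sample:     {full:.3f}")
print(f"selected on age: {sel_x:.3f}")
print(f"selected on y:   {sel_y:.3f}")
```

Under these assumptions the first two slopes land near the true value of 0.5, while the outcome-selected slope is noticeably attenuated; exact digits depend on the seed.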

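```stata
* Scatterplot matrix of the homicide rate and candidate predictors (lower half only)
graph matrix homrate poverty IQ het gradrate fem_hh, half

* Homicide rate against poverty, with each point labeled by state name
scatter homrate poverty, mlabel(state)
```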

[Figure: scatterplot matrix (lower half) of poverty, IQ, het, gradrate, fem_hh, and homrate]

[Figure: homrate plotted against poverty, points labeled by state]
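The plots above screen for influential cases visually. As a numeric complement (a swapped-in technique, not necessarily the one this lecture uses), leverage and Cook's distance can be computed directly from the OLS fit; in Stata the same quantities are available after `regress` via `predict` with the `leverage` and `cooksd` options. The NumPy sketch below uses hypothetical data, and the 4/n cutoff is one common rule of thumb, not a fixed standard.

```python
import numpy as np

def influence_measures(X, y):
    """Leverage and Cook's distance for an OLS fit of y on X.

    X is an (n, k) array of regressors WITHOUT a constant; an intercept is added here.
    """
    n = X.shape[0]
    Z = np.column_stack([np.ones(n), X])         # design matrix with intercept
    p = Z.shape[1]                                # number of estimated coefficients
    beta, *_ = np.linalg.lstsq(Z, y, rcond=None)  # OLS coefficients
    resid = y - Z @ beta
    s2 = resid @ resid / (n - p)                  # residual variance estimate
    # Diagonal of the hat matrix H = Z (Z'Z)^{-1} Z'
    ZtZ_inv = np.linalg.inv(Z.T @ Z)
    h = np.einsum("ij,jk,ik->i", Z, ZtZ_inv, Z)
    # Cook's distance: D_i = e_i^2 h_ii / (p s^2 (1 - h_ii)^2)
    cooks_d = resid**2 * h / (p * s2 * (1 - h) ** 2)
    return h, cooks_d

# Hypothetical data: 20 points near a line plus one high-leverage point far off it
rng = np.random.default_rng(1)
x = np.linspace(5, 15, 20)
y = 2.0 + 0.8 * x + rng.normal(0, 0.5, size=20)
x = np.append(x, 25.0)
y = np.append(y, 5.0)

h, d = influence_measures(x.reshape(-1, 1), y)
flagged = np.where(d > 4 / len(y))[0]
print("flagged observations:", flagged)
```

The appended observation (index 20) has both the largest leverage and the largest Cook's distance, which is the pattern a single extreme state would produce in the scatterplots.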


[Figure: histogram of Variety of Offenses; bar frequencies 6160, 1199, 434, 200, 89, 45, 25]

[Figure: histogram of latent criminality; bar frequencies 6399, 731, 320, 281, 209, 128, 73, 37, 15, 11, 5]
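The first histogram summarizes a variety score. Assuming variety means the number of distinct offense types a respondent reports (its usual definition), the score and the frequencies behind such a histogram can be computed as below. The response matrix is hypothetical; the latent criminality scale in the second histogram comes from a latent-variable measurement model and is not reproduced here.

```python
import numpy as np

# Hypothetical self-report data: rows are respondents, columns are offense types;
# 1 = respondent reports committing that type at least once, 0 = never.
offenses = np.array([
    [0, 0, 0, 0, 0, 0],
    [1, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [1, 1, 1, 0, 1, 0],
    [0, 1, 0, 0, 0, 0],
])

# Variety score: number of distinct offense types each respondent reports
variety = offenses.sum(axis=1)

# Frequency of each observed variety value, i.e. the bar heights of a histogram
values, counts = np.unique(variety, return_counts=True)
for v, c in zip(values, counts):
    print(v, c)
```

Because the count gives every offense type equal weight, a respondent reporting one minor and one serious offense receives the same score as one reporting two minor offenses; that equal weighting is one motivation for scaling offending with a latent-variable model instead.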

Recent work estimates the model in a single step (Osgood & Schreck, 2007).