Orthogonal Matrices

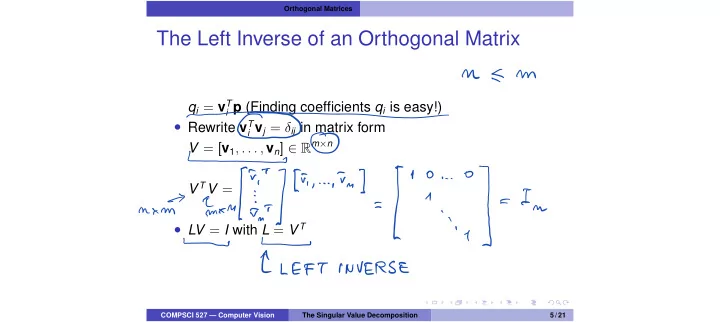

The Left Inverse of an Orthogonal Matrix

$q_i = v_i^T p$ (Finding coefficients $q_i$ is easy!)

- Rewrite $v_i^T v_j = \delta_{ij}$ in matrix form:

  $V = [v_1, \ldots, v_n] \in \mathbb{R}^{m \times n}, \qquad V^T V = I$

- $LV = I$ with $L = V^T$
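The left-inverse property can be checked numerically. The sketch below is illustrative only: it assumes that $p$ is a linear combination of the columns of $V$, and the matrix `V`, the coefficients `q_true`, and the helpers `transpose` and `matmul` are made-up names and values, not part of the slides. It uses plain Python lists so that it runs without extra libraries.

```python
import math

def transpose(A):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(row) for row in zip(*A)]

def matmul(A, B):
    """Return the matrix product A @ B for matrices stored as lists of rows."""
    Bt = transpose(B)
    return [[sum(a * b for a, b in zip(row, col)) for col in Bt] for row in A]

s = 1.0 / math.sqrt(2.0)

# Hypothetical example: V is 3x2 (m = 3, n = 2) with orthonormal columns v1, v2.
V = [[s,   0.0],
     [s,   0.0],
     [0.0, 1.0]]

L = transpose(V)    # candidate left inverse, L = V^T (2x3)
LV = matmul(L, V)   # 2x2, equals the identity up to rounding

# Build p = V q_true, then recover the coefficients as q = V^T p.
q_true = [[2.0], [-3.0]]
p = matmul(V, q_true)
q = matmul(L, p)

# For comparison: V V^T is 3x3 and is not the identity here, since m > n.
VL = matmul(V, L)

print("L V   =", LV)
print("q     =", q)
print("V L   =", VL)
```

In this example $V^T$ undoes multiplication by $V$ from the left, which is what makes the coefficients $q_i$ cheap to compute, while $V V^T$ differs from the identity because $V$ has more rows than columns.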

COMPSCI 527 — Computer Vision The Singular Value Decomposition 5 / 21
