Introduction to Machine Learning: k-Nearest Neighbors Regression

Learning goals

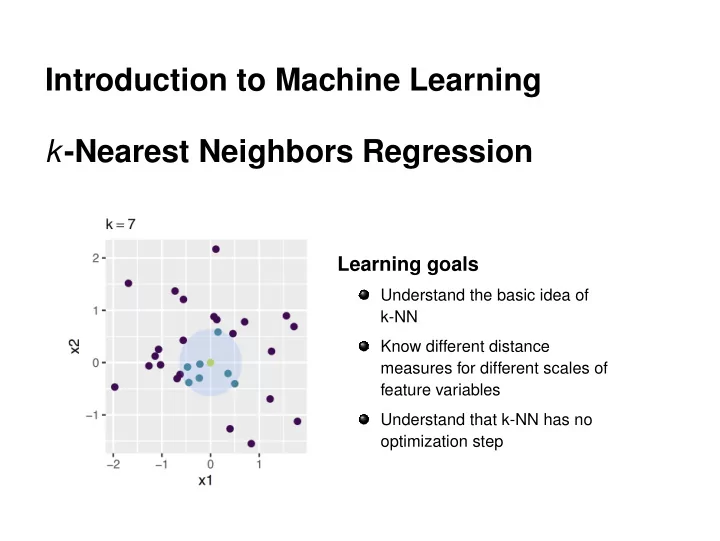

- Understand the basic idea of k-NN
- Know different distance measures for different scales of feature variables
- Understand that k-NN has no optimization step

[Figure: three panels over the features x1 and x2, for k = 15, k = 7, and k = 3]
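To make the basic idea concrete, here is a minimal sketch of k-NN regression in plain Python. It assumes Euclidean distance and an unweighted mean of the neighbors' targets; the function name `knn_regress` and the toy data are hypothetical and not taken from the slides. Note that there is no training or optimization step: the prediction is computed directly from the stored data.

```python
import math

def knn_regress(train_x, train_y, query, k):
    """Predict a target by averaging the targets of the k nearest training points."""
    # Euclidean distance from the query to every stored training point
    dists = [math.dist(x, query) for x in train_x]
    # Indices of the k training points with the smallest distances
    nearest = sorted(range(len(train_x)), key=lambda i: dists[i])[:k]
    # Unweighted mean of the neighbors' targets (an assumption of this sketch)
    return sum(train_y[i] for i in nearest) / k

# Made-up toy data with two features (x1, x2)
train_x = [(-1.0, -2.0), (0.0, 0.0), (1.0, 1.0), (2.0, 1.0), (-1.0, 1.0)]
train_y = [1.0, 2.0, 3.0, 4.0, 5.0]
query = (0.4, 0.4)

for k in (1, 3, 5):
    print(k, knn_regress(train_x, train_y, query, k))
```

Larger k averages over more neighbors and gives smoother predictions; with k equal to the number of training points, the prediction is simply the overall mean of the targets.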

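[Figure: two points x and x̃ plotted against Dimension 1 and Dimension 2, comparing the Manhattan and Euclidean distances between them]

Manhattan: $d(x, \tilde{x}) = |5 - 1| + |4 - 1| = 7$

Euclidean: $d(x, \tilde{x}) = \sqrt{(5 - 1)^2 + (4 - 1)^2} = 5$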
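The two distances can be checked with a short Python sketch. The coordinates (5, 4) and (1, 1) are read off the differences in the calculation; which of the two points is x and which is x̃ is an assumption, and it does not affect the result because both distances are symmetric.

```python
import math

def manhattan(a, b):
    """Sum of absolute coordinate differences."""
    return sum(abs(ai - bi) for ai, bi in zip(a, b))

def euclidean(a, b):
    """Square root of the sum of squared coordinate differences."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

# Assumed assignment of the two points from the example
x = (5, 4)
x_tilde = (1, 1)

print(manhattan(x, x_tilde))  # 7
print(euclidean(x, x_tilde))  # 5.0
```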

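Gower distance:

$$d_{gower}(x, \tilde{x}) = \frac{\sum_{j=1}^{p} \delta_{x_j, \tilde{x}_j} \cdot d_{gower}(x_j, \tilde{x}_j)}{\sum_{j=1}^{p} \delta_{x_j, \tilde{x}_j}}$$

$\delta_{x_j, \tilde{x}_j}$ is 0 or 1. It becomes 0 when the j-th variable is missing in at least one of the two observations.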

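Example: the Gower distance computed for three pairs of observations.

$$\frac{1 \cdot 1 + 1 \cdot \frac{|2340 - 2100|}{|2680 - 2100|}}{1 + 1} = \frac{1 + \frac{240}{580}}{2} \approx 0.707$$

$$\frac{0 \cdot 1 + 1 \cdot \frac{|2340 - 2680|}{|2680 - 2100|}}{0 + 1} = \frac{0 + \frac{340}{580}}{1} \approx 0.586$$

$$\frac{0 \cdot 1 + 1 \cdot \frac{|2100 - 2680|}{|2680 - 2100|}}{0 + 1} = \frac{0 + \frac{580}{580}}{1} = 1$$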
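The three calculations above can be reproduced with a small Python sketch of the Gower distance. Several things here are assumptions rather than content from the slides: the first feature is treated as nominal with the placeholder labels "a" and "b", a missing value is represented by `None`, nominal features contribute 0 if equal and 1 otherwise, and numeric features contribute the absolute difference divided by the feature's range. The special handling of asymmetric binary variables is left out.

```python
def gower_distance(a, b, kinds, ranges):
    """Gower distance between observations a and b; None marks a missing value."""
    num, den = 0.0, 0.0
    for aj, bj, kind, rng in zip(a, b, kinds, ranges):
        if aj is None or bj is None:
            continue  # weight is 0: the feature is missing in at least one observation
        if kind == "nominal":
            d = 0.0 if aj == bj else 1.0  # assumed rule for nominal features
        else:
            d = abs(aj - bj) / rng  # absolute difference scaled by the feature range
        num += d
        den += 1.0  # weight is 1 for every feature that is used
    if den == 0.0:
        raise ValueError("no feature is observed in both observations")
    return num / den

# Hypothetical observations: (nominal feature, numeric feature)
observations = [("a", 2340), ("b", 2100), (None, 2680)]
kinds = ("nominal", "numeric")
ranges = (None, 2680 - 2100)  # range of the numeric feature is 580

d12 = gower_distance(observations[0], observations[1], kinds, ranges)
d13 = gower_distance(observations[0], observations[2], kinds, ranges)
d23 = gower_distance(observations[1], observations[2], kinds, ranges)
print(round(d12, 3), round(d13, 3), round(d23, 3))
```

Skipping a feature with a missing value removes it from both the numerator and the denominator, which is why the last two distances are divided by 1 rather than 2.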

$d_{Euclidean}(x, \tilde{x})$

$\frac{1}{d(x^{(i)}, x)}$
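The fraction 1/d(x(i), x) reads like an inverse-distance weight for the i-th neighbor. Assuming that interpretation, a weighted k-NN prediction could be sketched as below. The normalized weighted mean, the function name `weighted_knn_predict`, and the handling of a zero distance are choices of this sketch, not taken from the slides.

```python
def weighted_knn_predict(distances, targets):
    """Weighted mean of neighbor targets, with weight 1 / distance for each neighbor."""
    # An exact match (distance 0) would give an infinite weight,
    # so this sketch returns that neighbor's target directly.
    for d, y in zip(distances, targets):
        if d == 0:
            return y
    weights = [1.0 / d for d in distances]
    # Normalize by the sum of weights so the result is a weighted average
    return sum(w * y for w, y in zip(weights, targets)) / sum(weights)

# Made-up distances and targets of the k = 3 nearest neighbors
distances = [1.0, 2.0, 4.0]
targets = [10.0, 20.0, 40.0]
print(weighted_knn_predict(distances, targets))
```

With these weights, closer neighbors pull the prediction toward their own target more strongly than distant ones, in contrast to the unweighted mean, where every neighbor counts equally.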
