SLIDE 1

LABELS VS PROBABILITIES

In classification we predict:

1

Class labels → ˆ h(x) = ˆ y

2

Class probabilities → ˆ

πk(x) → We evaluate based on those

c

- Introduction to Machine Learning – 1 / 9

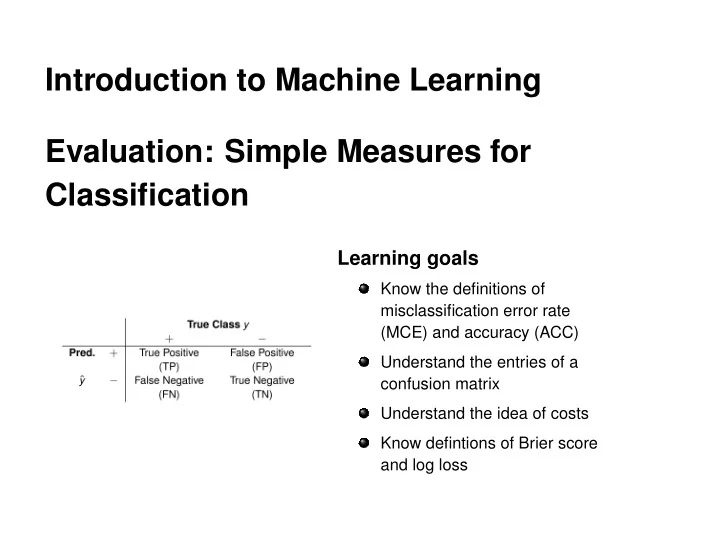

Introduction to Machine Learning Evaluation: Simple Measures for - - PowerPoint PPT Presentation

Introduction to Machine Learning Evaluation: Simple Measures for Classification Learning goals Know the definitions of misclassification error rate (MCE) and accuracy (ACC) Understand the entries of a confusion matrix Understand the idea of

1

2

c

n

n

c

c

c

n

http: //www.oslobilder.no/OMU/OB.%C3%9864/2902 c

n

c

n

right wrong wrong right

0.00 0.25 0.50 0.75 1.00 0.00 0.25 0.50 0.75 1.00

π ^(x) BS true.label

1 c

n

g

k

k

c

n

wrong wrong right

1 2 3 4 0.00 0.25 0.50 0.75 1.00

π ^(x) LL true.label

1

n n

g

k log(ˆ

c