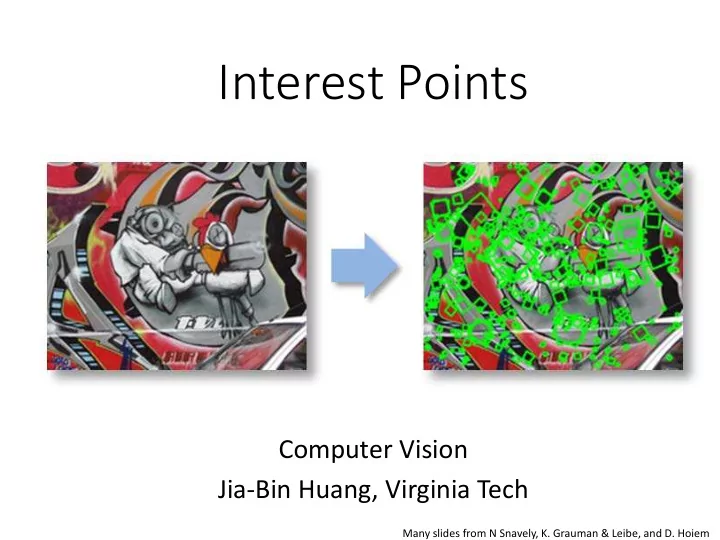

Interest Points

Computer Vision Jia-Bin Huang, Virginia Tech

Many slides from N Snavely, K. Grauman & Leibe, and D. Hoiem


Administrative Stuffs

HW 1 posted, due 11:59 PM Sept 25

Submission through Canvas

Frequently Asked


– What an image records

multi-scale detection

threshold -> link

Slide credit: Derek Hoiem


Takeo Kanade (CMU)

Credit: Matt Brown

Slide from Silvio Savarese


[Figure: tracked feature points (marked x) at frame 0, frame 22, and frame 49]

Step 1: extract features

Step 2: match features

Step 3: align images

by Diva Sian by swashford

by Diva Sian by scgbt

NASA Mars Rover images with SIFT feature matches

[Figure: regions A and B with descriptors $f_A$ and $f_B$; candidate regions A1, A2, A3]

Match criterion: $d(f_A, f_B) < T$
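The match criterion $d(f_A, f_B) < T$ can be sketched as nearest-neighbor matching with a distance threshold. This is a minimal illustration, assuming Euclidean distance for d and descriptors stored as rows of a NumPy array; the function name `match_features` and the toy descriptors are hypothetical.

```python
import numpy as np

def match_features(desc_a, desc_b, threshold):
    """Match each descriptor in desc_a to its nearest neighbor in desc_b.

    A pair (i, j) is kept only if d(f_A, f_B) < T, with d the Euclidean distance.
    """
    desc_a = np.asarray(desc_a, dtype=float)
    desc_b = np.asarray(desc_b, dtype=float)
    # Pairwise distances: dists[i, j] = ||desc_a[i] - desc_b[j]||
    dists = np.linalg.norm(desc_a[:, None, :] - desc_b[None, :, :], axis=2)
    nearest = np.argmin(dists, axis=1)
    return [(i, int(j), float(dists[i, j]))
            for i, j in enumerate(nearest) if dists[i, j] < threshold]

# Toy 2-D "descriptors" (hypothetical values, for illustration only).
features_a = [[0, 0], [10, 0], [0, 100]]
features_b = [[0, 3], [10, 4], [60, 100]]
strict = match_features(features_a, features_b, threshold=50)
loose = match_features(features_a, features_b, threshold=75)
```

A larger threshold T accepts more pairs, including pairs whose descriptors are far apart and therefore more likely to be wrong.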

– Find distinctive keypoints

– Define a region around each keypoint

– Normalize the region content

– Compute a descriptor from the normalized region

– Match descriptors

– More Repeatable: robust detection, precise localization

– More Points: robust to occlusion, works with less texture

– More Distinctive: minimize wrong matches

– More Flexible: robust to expected variations, maximize correct matches

– “flat” region: no change in all directions

– “edge”: no change along the edge direction

– “corner”: significant change in all directions

Credit: S. Seitz, D. Frolova, D. Simakov

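Consider shifting the window W by (u, v) and summing up the squared differences (SSD):

$$E(u,v) = \sum_{(x,y) \in W} \left[ I(x+u, y+v) - I(x,y) \right]^2$$

Taylor Series expansion of I: if the motion (u,v) is small, then the first-order approximation is good:

$$I(x+u, y+v) \approx I(x,y) + I_x u + I_y v$$

(Shorthand: $I_x = \partial I / \partial x$, $I_y = \partial I / \partial y$)

Plugging this into the formula on the previous slide…

$$E(u,v) \approx \sum_{(x,y) \in W} \left[ I_x u + I_y v \right]^2 = \begin{bmatrix} u & v \end{bmatrix} H \begin{bmatrix} u \\ v \end{bmatrix}, \qquad H = \sum_{(x,y) \in W} \begin{bmatrix} I_x^2 & I_x I_y \\ I_x I_y & I_y^2 \end{bmatrix}$$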

The surface E(u,v) is locally approximated by a quadratic form.
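To make the SSD error E(u,v) concrete, here is a small sketch that evaluates it directly on three synthetic images (flat, edge, corner). The image sizes, window position, and the helper name `ssd_error` are illustrative assumptions, not part of the slides.

```python
import numpy as np

def ssd_error(image, top, left, size, u, v):
    """E(u, v): sum of squared differences between window W and W shifted by (u, v).

    u shifts along columns (x), v shifts along rows (y).
    """
    window = image[top:top + size, left:left + size]
    shifted = image[top + v:top + v + size, left + u:left + u + size]
    return float(np.sum((shifted - window) ** 2))

flat = np.zeros((20, 20))

edge = np.zeros((20, 20))
edge[:, 10:] = 1.0            # vertical step edge

corner = np.zeros((20, 20))
corner[10:, 10:] = 1.0        # bright quadrant with its corner at (10, 10)

# Window covering rows 6..13 and columns 6..13, shifted by one pixel.
for name, img in [("flat", flat), ("edge", edge), ("corner", corner)]:
    e_x = ssd_error(img, 6, 6, 8, u=1, v=0)
    e_y = ssd_error(img, 6, 6, 8, u=0, v=1)
    print(f"{name}: E(1,0)={e_x}, E(0,1)={e_y}")
```

The flat window gives zero error for both shifts, the edge window changes only when shifted across the edge, and the corner window changes for both shifts.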

Let’s try to understand its shape.

[Figure: surface plots of E(u,v) over (u, v) for a horizontal edge and for a vertical edge]

The shape of H tells us something about the distribution of gradients around a pixel.

We can visualize H as an ellipse with axis lengths determined by the eigenvalues of H and orientation determined by the eigenvectors of H

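Ellipse equation:

$$\begin{bmatrix} u & v \end{bmatrix} H \begin{bmatrix} u \\ v \end{bmatrix} = \text{const}$$

λmax, λmin: eigenvalues of H

The axis of length $(\lambda_{max})^{-1/2}$ lies along the direction of the fastest change; the axis of length $(\lambda_{min})^{-1/2}$ lies along the direction of the slowest change.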

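The eigenvectors of a matrix A are the vectors x that satisfy:

$$A x = \lambda x$$

The scalar λ is the eigenvalue corresponding to x. The eigenvalues are found by solving $\det(A - \lambda \mathbb{1}) = 0$, where $\mathbb{1}$ is the identity matrix.

Once you know λ, you find x by solving

$$(A - \lambda \mathbb{1})\, x = 0$$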

Eigenvalues and eigenvectors of H

xmin xmax

How are λmax, xmax, λmin, and xmin relevant for feature detection?


Want E(u,v) to be large for small shifts in all directions

Classification of image points using eigenvalues of M (the second-moment matrix, written H above), with eigenvalues λ1 and λ2:

– “Corner”: λ1 and λ2 are large, λ1 ~ λ2; E increases in all directions

– “Edge”: λ1 >> λ2 or λ2 >> λ1

– “Flat” region: λ1 and λ2 are small; E is almost constant in all directions
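The eigenvalue-based classification can be sketched for a single patch as follows. This is an illustration only: the thresholds `small` and `ratio` are hypothetical values chosen for the toy patches, and gradients are taken with `np.gradient` rather than any particular filter from the slides.

```python
import numpy as np

def second_moment_matrix(patch):
    """Sum the gradient outer products over the patch (the matrix H / M)."""
    iy, ix = np.gradient(patch.astype(float))   # derivatives along rows (y) and columns (x)
    return np.array([[np.sum(ix * ix), np.sum(ix * iy)],
                     [np.sum(ix * iy), np.sum(iy * iy)]])

def classify_patch(patch, small=1e-3, ratio=10.0):
    """Label a patch as 'flat', 'edge', or 'corner' from its two eigenvalues.

    small and ratio are hypothetical thresholds for this sketch.
    """
    lam_min, lam_max = np.linalg.eigvalsh(second_moment_matrix(patch))  # ascending order
    if lam_max < small:
        return "flat"            # both eigenvalues small
    if lam_min < lam_max / ratio:
        return "edge"            # one eigenvalue dominates
    return "corner"              # both large and comparable

flat_patch = np.ones((9, 9))
edge_patch = np.zeros((9, 9))
edge_patch[:, 5:] = 1.0
corner_patch = np.zeros((9, 9))
corner_patch[5:, 5:] = 1.0

labels = [classify_patch(p) for p in (flat_patch, edge_patch, corner_patch)]
```

For the corner patch the two eigenvalues come out as 1.75 and 2.25, which are comparable; for the edge patch one eigenvalue is exactly zero.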

Here’s what you do


λmin is a variant of the “Harris operator” for feature detection

Harris

Effect: A very precise corner detector.
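A per-pixel version of both responses can be sketched as below. Two assumptions are worth flagging: the Harris response is written in the common Harris-Stephens form det(H) − k·trace(H)² with k = 0.05 (the slides may use a different variant), and the window is a plain box window of radius 2 instead of a Gaussian. The helper names are hypothetical.

```python
import numpy as np

def box_sum(a, radius):
    """Sum of a over a (2r+1) x (2r+1) window centered on each pixel (zero padded)."""
    padded = np.pad(a, radius)
    out = np.zeros_like(a)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out += padded[dy:dy + a.shape[0], dx:dx + a.shape[1]]
    return out

def corner_responses(image, radius=2, k=0.05):
    """Return (lambda_min, harris) response maps for the image."""
    iy, ix = np.gradient(image.astype(float))
    a = box_sum(ix * ix, radius)      # H[0, 0] at every pixel
    b = box_sum(ix * iy, radius)      # H[0, 1] = H[1, 0]
    c = box_sum(iy * iy, radius)      # H[1, 1]
    # Smaller eigenvalue of the symmetric 2x2 matrix [[a, b], [b, c]].
    lam_min = (a + c) / 2 - np.sqrt(((a - c) / 2) ** 2 + b ** 2)
    harris = (a * c - b * b) - k * (a + c) ** 2
    return lam_min, harris

# Synthetic test image: a bright quadrant whose corner sits at (10, 10).
image = np.zeros((21, 21))
image[10:, 10:] = 1.0
lam_min, harris = corner_responses(image)
peak_row, peak_col = np.unravel_index(np.argmax(lam_min), lam_min.shape)
```

On this image the λmin response peaks next to the true corner, while points along the straight edges get λmin = 0 and a negative Harris response. A full detector would follow this with thresholding and non-maximum suppression.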

Ellipse rotates but its shape (i.e. eigenvalues) remains the same.

Corner response is invariant to image rotation.

Partially invariant to affine intensity change:

– Only derivatives are used => invariance to intensity shift I → I + b

– Intensity scale: I → a I

[Figure: corner response R plotted against x (image coordinate) with a fixed threshold, before and after intensity scaling]
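These invariance claims can be checked numerically. The sketch below assumes the Harris-Stephens response det − k·trace² with a box window (both assumptions of this example) and uses a 90-degree rotation so that no resampling is needed; the test image is random noise generated for illustration.

```python
import numpy as np

def box_sum(a, radius):
    """Sum of a over a (2r+1) x (2r+1) window centered on each pixel (zero padded)."""
    padded = np.pad(a, radius)
    out = np.zeros_like(a)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out += padded[dy:dy + a.shape[0], dx:dx + a.shape[1]]
    return out

def harris_response(image, radius=2, k=0.05):
    """Corner response R = det(H) - k * trace(H)^2 at every pixel."""
    iy, ix = np.gradient(image.astype(float))
    a, b, c = (box_sum(p, radius) for p in (ix * ix, ix * iy, iy * iy))
    return (a * c - b * b) - k * (a + c) ** 2

rng = np.random.default_rng(0)
image = rng.random((32, 32))
R = harris_response(image)

# Rotation: the response map rotates with the image.
rotation_ok = np.allclose(harris_response(np.rot90(image)), np.rot90(R))

# Intensity shift I -> I + b: derivatives, and hence R, are unchanged.
shift_ok = np.allclose(harris_response(image + 50.0), R)

# Intensity scale I -> a I: gradients scale by a, so R scales by a^4.
scale_ok = np.allclose(harris_response(3.0 * image), 3.0 ** 4 * R)

# With a fixed threshold, scaling therefore changes which points are detected.
threshold = 0.5 * R.max()
count_before = int(np.sum(R > threshold))
count_after = int(np.sum(harris_response(3.0 * image) > threshold))
```

The last two lines show why the invariance is only partial: the response itself is rescaled, so a fixed threshold selects a different set of points.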

Not invariant to scaling

[Figure: a structure detected as a corner at one scale; when the same structure is magnified, all points along it will be classified as edges]

Suppose you’re looking for corners.

Key idea: find scale that gives local maximum of f

$$f(I_{i_1 \ldots i_m}(x, \sigma)) = f(I_{i_1 \ldots i_m}(x', \sigma'))$$

How to find corresponding patch sizes?

[Figure sequence: the signature function $f(I_{i_1 \ldots i_m}(x, \sigma))$ plotted against scale σ for a patch in each of the two images, evaluated at increasing patch sizes; the corresponding patch in the second image is found at $(x', \sigma')$]

(sometimes need to create in-between levels, e.g. a ¾-size image)

Original image at scale σ, followed by levels σ², σ³, σ⁴

Sampling with step $\sigma^4 = 2$, i.e. $\sigma = 2^{1/4}$

$$L_{xx}(\sigma) + L_{yy}(\sigma)$$

Scales σ, σ², σ³, σ⁴, σ⁵ ⇒ List of (x, y, s)
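Scale selection with the Laplacian $L_{xx}(\sigma) + L_{yy}(\sigma)$ can be sketched on a synthetic blob. This example assumes the usual σ² scale normalization of the Laplacian (not spelled out in the slide text), a 5-point finite-difference Laplacian, and a hand-rolled separable Gaussian blur; the blob size and the candidate scales are arbitrary illustrative choices.

```python
import numpy as np

def gaussian_blur(image, sigma):
    """Separable Gaussian blur with a kernel truncated at 4 sigma (zero padded)."""
    radius = int(4 * sigma)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x ** 2 / (2 * sigma ** 2))
    kernel /= kernel.sum()
    rows = np.apply_along_axis(np.convolve, 1, image, kernel, mode="same")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="same")

def laplacian(image):
    """5-point finite-difference approximation of L_xx + L_yy (interior pixels only)."""
    lap = np.zeros_like(image)
    lap[1:-1, 1:-1] = (image[2:, 1:-1] + image[:-2, 1:-1] +
                       image[1:-1, 2:] + image[1:-1, :-2] -
                       4 * image[1:-1, 1:-1])
    return lap

def characteristic_scale(image, row, col, sigmas):
    """Pick the sigma that maximizes the scale-normalized |Laplacian| at (row, col)."""
    responses = [sigma ** 2 * abs(laplacian(gaussian_blur(image, sigma))[row, col])
                 for sigma in sigmas]
    return sigmas[int(np.argmax(responses))], responses

# Synthetic Gaussian blob with standard deviation 4, centered in a 65 x 65 image.
yy, xx = np.mgrid[0:65, 0:65]
blob = np.exp(-((yy - 32) ** 2 + (xx - 32) ** 2) / (2 * 4.0 ** 2))

best_sigma, responses = characteristic_scale(blob, 32, 32, [2.0, 3.0, 4.0, 5.0, 6.0])
```

For a Gaussian blob the normalized response is largest when σ matches the blob's own standard deviation, so the selected scale tracks the size of the structure. Running the same search on a rescaled image shifts the peak by the same factor, which is what makes the patch sizes correspond.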

2π

[Lowe, SIFT, 1999]


– http://www.robots.ox.ac.uk/~vgg/research/affine – http://www.cs.ubc.ca/~lowe/keypoints/ – http://www.vision.ee.ethz.ch/~surf

– Robust – Distinctive – Compact – Efficient

– Capture texture information – Color rarely used

Basic idea:

Adapted from slide by David Lowe

2π angle histogram

Full version


[Lowe, ICCV 1999]

Histogram of oriented gradients

information

affine deformations

– Find maxima in location/scale space

– Remove edge points

– Bin orientations into 36 bin histogram

– Return orientations within 0.8 of peak
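The orientation step can be sketched as a magnitude-weighted histogram over gradient angles. This is a simplification under stated assumptions: it returns every bin whose weight is at least 0.8 of the peak, and it omits the Gaussian weighting and peak interpolation used in the full method. The function name is hypothetical.

```python
import numpy as np

def dominant_orientations(patch, num_bins=36, peak_ratio=0.8):
    """Return bin-center angles (radians) whose histogram weight is >= peak_ratio * peak."""
    iy, ix = np.gradient(patch.astype(float))
    magnitude = np.hypot(ix, iy)
    angle = np.arctan2(iy, ix) % (2 * np.pi)          # map to [0, 2*pi)
    hist, edges = np.histogram(angle, bins=num_bins,
                               range=(0, 2 * np.pi), weights=magnitude)
    centers = (edges[:-1] + edges[1:]) / 2
    return centers[hist >= peak_ratio * hist.max()], hist

# A 16 x 16 intensity ramp increasing along both x and y: gradient direction is 45 degrees.
ramp = np.add.outer(np.arange(16.0), np.arange(16.0))
orientations, hist = dominant_orientations(ramp)
```

With 36 bins each bin spans 10 degrees, so the ramp's gradients all fall into the bin centered on 45 degrees.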

– Sample 16x16 gradient mag. and rel. orientation

– Bin 4x4 samples into 4x4 histograms

– Threshold values to max of 0.2, divide by L2 norm

– Final descriptor: 4x4x8 normalized histograms
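The descriptor steps above can be sketched as follows. This is a simplified illustration, not Lowe's implementation: it assumes the patch is already rotated to its dominant orientation, uses hard binning, and skips the Gaussian weighting and interpolation between bins. The normalization follows the usual order (normalize, clip at 0.2, normalize again); the function name is hypothetical.

```python
import numpy as np

def sift_like_descriptor(patch, clip=0.2):
    """Build a 4 x 4 x 8 = 128-dimensional gradient-orientation descriptor."""
    assert patch.shape == (16, 16)
    iy, ix = np.gradient(patch.astype(float))
    magnitude = np.hypot(ix, iy)
    angle = np.arctan2(iy, ix) % (2 * np.pi)
    # Quantize each orientation into one of 8 bins (guard against angle == 2*pi).
    bins = np.minimum((angle / (2 * np.pi) * 8).astype(int), 7)

    desc = np.zeros((4, 4, 8))
    for r in range(16):
        for c in range(16):
            # Each 4 x 4 block of samples feeds one 8-bin histogram.
            desc[r // 4, c // 4, bins[r, c]] += magnitude[r, c]

    desc = desc.ravel()
    norm = np.linalg.norm(desc)
    if norm > 0:
        desc = desc / norm            # unit length
    desc = np.minimum(desc, clip)     # threshold values to a max of 0.2
    norm = np.linalg.norm(desc)
    if norm > 0:
        desc = desc / norm            # renormalize after clipping
    return desc

rng = np.random.default_rng(0)
descriptor = sift_like_descriptor(rng.random((16, 16)))
```

Clipping limits the influence of a few very strong gradients, and the final normalization makes the descriptor insensitive to overall contrast changes.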

Lowe IJCV 2004

sift

868 SIFT features

[Figure: feature matches between images I1 and I2]

51 matches

58 matches

How can we measure the performance of a feature matcher?

The distance threshold affects performance

[Figure: candidate matches at feature distances 50, 75, and 200, each labeled as a true match or a false match]

ROC curve (“Receiver Operating Characteristic”): true positive rate plotted against false positive rate as the distance threshold varies.

true positive rate (“recall”) = # true positives / # correctly matched features (positives)

false positive rate = # false positives / # incorrectly matched features (negatives). The slides label this 1 - “precision”; strictly, this ratio is 1 - specificity.

[Figure: ROC curve with both axes running from 0 to 1; the marked operating point has false positive rate 0.1 and true positive rate 0.7]
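The ROC points can be computed by sweeping the distance threshold over a set of candidate matches with known ground truth. The distances and labels below are made-up values for illustration (they are not measurements from the slides), and `roc_points` is a hypothetical helper name.

```python
import numpy as np

def roc_points(distances, is_correct, thresholds):
    """Return (false positive rate, true positive rate) for each distance threshold."""
    distances = np.asarray(distances, dtype=float)
    is_correct = np.asarray(is_correct, dtype=bool)
    positives = int(is_correct.sum())        # candidate matches that are truly correct
    negatives = int((~is_correct).sum())     # candidate matches that are truly wrong
    points = []
    for t in thresholds:
        accepted = distances < t             # matches the matcher would report
        tp = int(np.sum(accepted & is_correct))
        fp = int(np.sum(accepted & ~is_correct))
        points.append((fp / negatives, tp / positives))
    return points

# Hypothetical feature distances with ground-truth labels.
distances = [20, 30, 40, 60, 70, 90, 120, 150, 180, 220]
is_correct = [True, True, True, False, True, True, False, False, True, False]

points = roc_points(distances, is_correct, thresholds=[50, 75, 200])
```

Raising the threshold moves the operating point up and to the right: more correct matches are recovered, at the cost of accepting more wrong ones.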

Lowe IJCV 2004


integral images ⇒ 6 times faster than SIFT

identification

[Bay, ECCV’06], [Cornelis, CVGPU’08]

(detector + descriptor, 640×480 img)
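The speed-up from integral images comes from evaluating any box filter with four lookups, whatever the box size. A minimal sketch of that idea (not SURF itself; the helper names and the test image are illustrative):

```python
import numpy as np

def integral_image(image):
    """ii[r, c] holds the sum of image[:r, :c]; the extra zero row/column simplifies lookups."""
    h, w = image.shape
    ii = np.zeros((h + 1, w + 1))
    ii[1:, 1:] = image.cumsum(axis=0).cumsum(axis=1)
    return ii

def box_sum(ii, top, left, bottom, right):
    """Sum of image[top:bottom, left:right] using four lookups in the integral image."""
    return ii[bottom, right] - ii[top, right] - ii[bottom, left] + ii[top, left]

image = np.arange(30, dtype=float).reshape(5, 6)
ii = integral_image(image)
fast = box_sum(ii, 1, 2, 4, 5)
direct = image[1:4, 2:5].sum()
```

After the one-time cumulative sum, the cost of a box sum no longer depends on the box area, which is why large box filters stay cheap.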

Many other efficient descriptors are also available

Count the number of points inside each bin, e.g.: Count = 4, Count = 10, ...

Log-polar binning: more precision for nearby points, more flexibility for farther points.

Belongie & Malik, ICCV 2001
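Log-polar binning can be sketched as a 2-D histogram over log-spaced radii and uniform angles. Several choices here are assumptions of this example: 5 radial and 12 angular bins, radii normalized by the mean distance from the reference point, radial limits of 0.125 and 2.0, and points outside those limits being ignored. The function name is hypothetical.

```python
import numpy as np

def shape_context(points, index, num_radial=5, num_angular=12, r_min=0.125, r_max=2.0):
    """Count the other points in log-polar bins centered on points[index]."""
    points = np.asarray(points, dtype=float)
    offsets = np.delete(points, index, axis=0) - points[index]
    r = np.hypot(offsets[:, 0], offsets[:, 1])
    theta = np.arctan2(offsets[:, 1], offsets[:, 0]) % (2 * np.pi)
    r = r / r.mean()                                   # normalize for scale
    r_edges = np.logspace(np.log10(r_min), np.log10(r_max), num_radial + 1)
    theta_edges = np.linspace(0, 2 * np.pi, num_angular + 1)
    hist, _, _ = np.histogram2d(r, theta, bins=[r_edges, theta_edges])
    return hist

# Reference point at the origin plus four points at unit distance.
angles = np.deg2rad([15, 105, 195, 285])
points = np.vstack([[0.0, 0.0], np.column_stack([np.cos(angles), np.sin(angles)])])
hist = shape_context(points, index=0)
```

Because the radial edges are log-spaced, the inner rings are narrow and the outer rings are wide, which gives the finer resolution for nearby points described above.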

Example descriptor

Compute edges at four

Extract a patch in each channel

Apply spatially varying blur and sub-sample

(Idealized signal)

Berg & Malik, CVPR 2001

– Precise localization in x-y: Harris – Good localization in scale: Difference of Gaussian – Flexible region shape: MSER

– Harris-/Hessian-Laplace/DoG work well for many natural categories – MSER works well for buildings and printed things

– Get more points with more detectors

– [Mikolajczyk et al., IJCV’05, PAMI’05] – All detectors/descriptors shown here work well

Tuytelaars Mikolajczyk 2008

Features from Accelerated Segment Test, ECCV 06

Binary feature descriptors