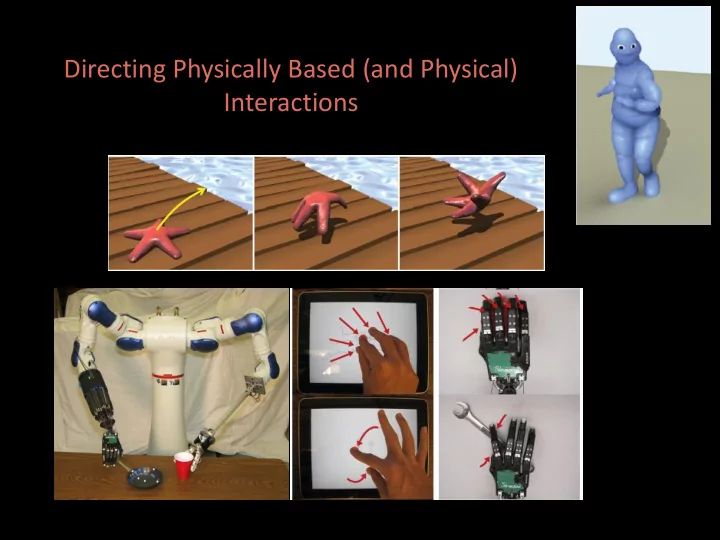

Directing Physically Based (and Physical) Interactions

Animating Dexterous Motions

How can we easily animate the starfish’s escape?

- Appearance of intelligent motion

- Believable physical interaction with the glass box

- Dynamic, fun actions

- Animation tools accessible to anyone

Animating Dexterous Motions

Videos created by two novice users using our system.

Background: Classical Approaches

- Motion Capture

  - Not available for leaping starfish!

- Traditional Keyframing

  - Keyframing complex dynamic interactions is hard

- Physically based simulation

  - Great for passive objects

  - Difficult to create “intelligent” motions

- Physically based controllers / Physically based optimization

  - How do we build a controller or objective function for this task?

  - No reference motions are available

Factors that appear to contribute to human motion selection

Lillian Y. Chang and Nancy S. Pollard, “Pre-Grasp Interaction for Object Acquisition in Difficult Tasks,” forthcoming book chapter

Background: Better Alternatives

- Operational space / task space control

  - Great concept, and we will use it

- Direct control of physically based systems

  - Goal: a more general animation system, motivated by demonstrations like these!

  - We found that a variety of control modalities are needed and can be incorporated easily

Sentis and Khatib; Laszlo, van de Panne, and Fiume; van de Panne

Ski Stunt Simulator

What Control Modes are Intuitive?

User Interface Example

Interface Modes

Manipulate Bones

- Drag a bone to control its motion

  - direct control of head position

- Constrain a bone to a fixed position / orientation

  - constrain base to orientation shown

Interface Modes

Manipulate Center of Gravity

- Drag the CG of the lamp in a tightly controlled manner to keep it balanced

- Drag the CG of the starfish abruptly to create a jump

- Drag the CG of the donut in a free-form manner to create the desired animation

Interface Modes

Manipulate Character Root Orientation

- Drag a special rotation widget for 3D rotational motions

Interface Modes

Manipulate Joints

- Keyframe a leaping action for the worm

- Set and maintain joint limits

- Run a passive controller for a soft landing

  - How? Set a single desired configuration and low stiffness
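
The soft-landing idea can be illustrated with a minimal sketch, assuming a spring-damper (PD) law at each joint. The slides do not state the control law, so the function name, the gains, and the sample values below are all hypothetical.

```python
import numpy as np

def passive_joint_torques(q, qdot, q_desired, stiffness, damping):
    """Spring-damper torque pulling every joint toward one desired configuration.

    Assumed PD form (not taken from the slides):
        tau = stiffness * (q_desired - q) - damping * qdot
    """
    q = np.asarray(q, dtype=float)
    qdot = np.asarray(qdot, dtype=float)
    q_desired = np.asarray(q_desired, dtype=float)
    return stiffness * (q_desired - q) - damping * qdot

# Same joint error under two hypothetical stiffness settings.
q, qdot, q_des = [0.5, -0.2], [0.0, 0.0], [0.0, 0.0]
soft = passive_joint_torques(q, qdot, q_des, stiffness=2.0, damping=0.5)
stiff = passive_joint_torques(q, qdot, q_des, stiffness=200.0, damping=0.5)
```

With low stiffness the restoring torques stay small, so contact forces at landing can bend the joints and the character gives way instead of holding its pose rigidly.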

Interface Modes

Previewing

- Observe the effect of maintaining the current command for a given period of time

Interface Modes

Speed up, slow down, advance, back up the simulation

- Trial and error to learn the character dynamics and achieve desired result

Animating Dexterous Motions

Our observation: Different control modes are needed at different times to create animations sophisticated enough to tell a story

Our solution: Put a variety of control modes into the animator’s hands and make them as intuitive as possible

Overview of Our System

Character model:

- Coarse volumetric model -> fast simulation

- Fine surface detail for appearance, contacts and collision

User Interface:

- Real-time, trial and error (e.g., “Jump like this!”)

Results:

- Compute muscle forces for the character to best achieve the user’s goals

Junggon Kim and Nancy S. Pollard, “Direct Control of Simulated Non-Human Characters,” IEEE CG&A, 2011

Interface Modes Under the Hood

- The user is placing a variety of constraints on the character’s motion

- How do we determine how the character should behave, in a physically realistic manner, to best meet those constraints?

- Our only “lever” is accelerations or torques that must be applied at the character’s joints to advance the simulation

- Algebra on the equations of motion?

- Complex, local-minimum prone, prioritized optimization?

Interface Modes Under the Hood

Most quantities we care to measure or control have a locally linear relationship to joint accelerations and joint torques

Evangelos Kokkevis, “Practical Physics for Articulated Characters,” Game Developers Conference, 2004.

Example: Bone Constraints

Express bone constraint as a linear function of joint accelerations:

- Straightforward differentiation of equations of motion

- Equation terms: desired bone accelerations; bone accelerations when joint accelerations are zero

Obtaining desired bone accelerations:
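
The equations on this slide did not survive extraction. One common way to write the relationship is sketched here. The symbols are assumptions for illustration, not taken from the slides: $q$ is the vector of joint coordinates, $x$ the bone position, $J = \partial x / \partial q$ the bone Jacobian, and $k_p$, $k_d$ are hypothetical gains of a spring-damper law toward a target $x^{*}$.

```latex
% Differentiating the bone velocity gives an expression linear in \ddot{q}:
\dot{x} = J\,\dot{q}
\quad\Longrightarrow\quad
\ddot{x} = J\,\ddot{q} + \dot{J}\,\dot{q}
         = J\,\ddot{q} + \ddot{x}_0,
\qquad
\ddot{x}_0 = \dot{J}\,\dot{q}
\;\;\text{(bone acceleration when } \ddot{q} = 0\text{)}

% Bone constraint as a linear equation in the joint accelerations:
J\,\ddot{q} = \ddot{x}_d - \ddot{x}_0

% One possible choice of desired bone acceleration (assumed PD law):
\ddot{x}_d = k_p\,(x^{*} - x) + k_d\,(\dot{x}^{*} - \dot{x})
```

Under these assumptions, dragging a bone only changes the target $x^{*}$, while the constraint itself stays linear in $\ddot{q}$.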

Interface Modes Under the Hood

(1) Express all constraints as a linear function of joint accelerations

(2) Solve a Quadratic Program to obtain joint accelerations

(3) Use these accelerations for the next timestep to advance the simulation
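
The three steps above can be sketched in a few lines, under strong simplifying assumptions. The sketch handles only equality constraints `A @ qddot = b` with a quadratic objective `0.5 * qddot.T @ H @ qddot`, solved through the KKT linear system. The system described in the slides also deals with priorities and limits, which would need a general QP solver. All names and sample values are hypothetical.

```python
import numpy as np

def solve_equality_qp(H, A, b):
    """Minimize 0.5 * x^T H x subject to A x = b via the KKT system.

    Assumes H is positive definite and A has full row rank.
    """
    n, m = H.shape[0], A.shape[0]
    kkt = np.block([[H, A.T], [A, np.zeros((m, m))]])
    rhs = np.concatenate([np.zeros(n), b])
    solution = np.linalg.solve(kkt, rhs)
    return solution[:n]  # drop the Lagrange multipliers

def advance(q, qdot, qddot, dt):
    """Semi-implicit Euler step using the solved joint accelerations."""
    qdot_next = qdot + dt * qddot
    q_next = q + dt * qdot_next
    return q_next, qdot_next

# (1) One linear constraint on three joint accelerations (made-up numbers).
A = np.array([[1.0, 1.0, 0.0]])
b = np.array([2.0])
# (2) Objective: minimize joint accelerations, so H is the identity.
qddot = solve_equality_qp(np.eye(3), A, b)
# (3) Advance the simulation state by one timestep.
q_next, qdot_next = advance(np.zeros(3), np.zeros(3), qddot, dt=0.01)
```

Choosing `H` as the identity corresponds to the "minimize joint accelerations" objective; other weightings would favor different joints.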

Final Demos

Realistic Physical Behavior?

http://www.youtube.com/watch?v=a-1AiExU3Vk

Huai-Ti Lin, Tufts Biomimetic Devices Laboratory

Notes

- Constraint priorities: Mouse drags are satisfied after everything else

- Contact modeling: “hallucinate” constraints to account for pushoff forces

- Objective functions: minimize joint accelerations, torques, or velocities

- Speed: Simulations are real-time or better; users preferred 3X-8X slower

- Ease of use: Starfish escape animations created by novices in minutes

What Control Modes are Intuitive?

References

Junggon Kim and Nancy S. Pollard, “Direct Control of Simulated Non-Human Characters,” IEEE CG&A, 2011

Junggon Kim and Nancy S. Pollard, “Fast Simulation of Skeleton-Driven Deformable Body Characters,” ACM ToG, 2011

http://www.cs.cmu.edu/~junggon/

Sticky Finger Manipulation With a Multi-Touch Interface

Ken Toh MS Thesis

Motivation

- User interaction is a key feature in most graphical and robotic applications.

Manipulating virtual cloth; teleoperating a robot with a multi-fingered hand

Motivation

- Traditional user input devices are effective for many simple high-level interaction tasks...

Common user input devices with simple command spaces

On/off; up, down, left, right

Motivation

- Dexterous manipulation of simulated/real-world objects with high DOFs can, however, be quite awkward to achieve with these existing input devices

Realistic cloth tearing requires more than a single cursor to execute

A panel of buttons is not the most intuitive interface for dexterous tele-manipulation

Motivation

- Key Question:

Can we design an intuitive user interface that allows us to feel natural when manipulating objects by proxy, almost as though we are interacting with them directly?

Cloth Manipulation: Modes

- Creation Mode

- Sticky-Finger Mode

- Cut Mode

Sticky Fingers for Cloth Manipulation

Underlying cloth particles within a radius of each active fingertip center are stuck to that finger and move with it
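
The sticking rule can be sketched as follows, assuming particles are stored as rows of a NumPy array and each stuck particle keeps its offset from the fingertip center. The function names and sample coordinates are hypothetical, and the thesis may store this differently.

```python
import numpy as np

def stick_particles(positions, finger_center, radius):
    """Return indices of particles within `radius` of the fingertip center,
    together with their offsets from that center at the moment of contact."""
    offsets = positions - finger_center
    distances = np.linalg.norm(offsets, axis=1)
    stuck = np.flatnonzero(distances <= radius)
    return stuck, offsets[stuck]

def move_stuck_particles(positions, stuck, offsets, new_finger_center):
    """Kinematically place stuck particles so they follow the finger (in place)."""
    positions[stuck] = new_finger_center + offsets
    return positions

# Three particles on a line; the finger touches down at the origin.
cloth = np.array([[0.0, 0.0, 0.0], [0.5, 0.0, 0.0], [2.0, 0.0, 0.0]])
stuck, offsets = stick_particles(cloth, np.array([0.0, 0.0, 0.0]), radius=1.0)
# The finger then slides to a new position and the stuck particles follow.
cloth = move_stuck_particles(cloth, stuck, offsets, np.array([1.0, 0.0, 1.0]))
```

The third particle lies outside the radius, so it is left for the simulation to move.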

Sticky-finger Lifting

- User activates a toggle that changes the plane of control from the x-z plane to the x-y plane.
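
A minimal sketch of the plane toggle, assuming y is the vertical axis and the touch surface's two axes map directly onto world axes without scaling. The function name and the axis conventions are assumptions, not taken from the thesis.

```python
def touch_delta_to_world(du, dv, lifting):
    """Map a 2D finger displacement (du, dv) to a 3D world displacement.

    lifting=False: control in the x-z plane (sliding along the surface).
    lifting=True:  control in the x-y plane (dv now raises or lowers).
    """
    if lifting:
        return (du, dv, 0.0)
    return (du, 0.0, dv)

slide = touch_delta_to_world(1.0, 2.0, lifting=False)
lift = touch_delta_to_world(1.0, 2.0, lifting=True)
```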

Pinch-lifting

- Automatic detection of pinch event when two finger touches are close together.
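
Pinch detection can be sketched as a pairwise distance test on the active touch points. The threshold value and the function name are hypothetical; the slides only say the touches must be "close together".

```python
import math

def detect_pinch(touches, threshold):
    """Return the first pair of touch indices closer than `threshold`,
    or None when no two touches are that close."""
    for i in range(len(touches)):
        for j in range(i + 1, len(touches)):
            if math.dist(touches[i], touches[j]) < threshold:
                return (i, j)
    return None

# Touches in screen coordinates (made-up values).
pinch = detect_pinch([(0.0, 0.0), (10.0, 0.0), (10.5, 0.0)], threshold=1.0)
no_pinch = detect_pinch([(0.0, 0.0), (5.0, 5.0)], threshold=1.0)
```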

Cloth Simulation Model

A mesh of particles connected by bend, shear and stretch constraints

Verlet Integration

- Key: Position-based dynamics are essential because we need to stick particles kinematically to fingers (i.e., modify positions directly)
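
A minimal sketch of position Verlet shows why it suits this purpose: velocity is implicit in the difference between the current and previous positions, so a position can be overwritten directly. The damping parameter and the sample values are assumptions for illustration.

```python
import numpy as np

def verlet_step(positions, previous_positions, accelerations, dt, damping=0.0):
    """One position-Verlet step.

    x_next = x + (1 - damping) * (x - x_prev) + a * dt^2
    Returns the new positions and the positions to use as 'previous' next step.
    """
    velocity_term = (1.0 - damping) * (positions - previous_positions)
    new_positions = positions + velocity_term + accelerations * dt ** 2
    return new_positions, positions.copy()

# One particle starting at rest, falling under gravity (y is up).
x = np.array([[0.0, 1.0, 0.0]])
x_prev = x.copy()
gravity = np.array([0.0, -10.0, 0.0])
x1, x_prev1 = verlet_step(x, x_prev, gravity, dt=0.1)
x2, x_prev2 = verlet_step(x1, x_prev1, gravity, dt=0.1)
# A stuck particle would simply have its row overwritten with the finger-driven
# position after each step; no velocity bookkeeping is needed.
```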

Iterative Constraint Satisfaction

- Must handle cases with stuck fingers

Diagram: particles x1 and x2 joined by a constraint, labeled r, with the correction vector.

- Case (a): x1 and x2 not stuck

- Case (b): x1 stuck, x2 not stuck

- Case (c): if both are stuck, both corrections = 0
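
The three cases can be sketched for a single distance constraint, assuming equal particle masses and a standard position-based correction. The exact formulas from the slide's figure were not recovered, so this is an illustration, not the thesis implementation. The mirrored case (x2 stuck, x1 free) is added here for symmetry.

```python
import numpy as np

def satisfy_distance_constraint(x1, x2, rest_length, stuck1, stuck2):
    """Project two particles toward their rest separation, respecting stuck flags."""
    delta = x2 - x1
    dist = np.linalg.norm(delta)
    if dist == 0.0 or (stuck1 and stuck2):
        return x1, x2                      # case (c): both corrections are zero
    # Full correction vector: moving x1 by +correction restores the rest length.
    correction = (dist - rest_length) / dist * delta
    if stuck1:
        return x1, x2 - correction         # case (b): only x2 moves
    if stuck2:
        return x1 + correction, x2         # mirrored case: only x1 moves
    # case (a): split the correction equally between the two particles
    return x1 + 0.5 * correction, x2 - 0.5 * correction

a = np.array([0.0, 0.0, 0.0])
b = np.array([2.0, 0.0, 0.0])
free_a, free_b = satisfy_distance_constraint(a, b, 1.0, False, False)
pin_a, pin_b = satisfy_distance_constraint(a, b, 1.0, True, False)
both_a, both_b = satisfy_distance_constraint(a, b, 1.0, True, True)
```

In a full solver this projection would be repeated over all constraints for several iterations per frame.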

Tearing

- Sticky finger pins down relevant particles and constraints, allowing unconstrained regions to elongate and eventually tear. Finger size matters too.
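
One simple way to model tearing is to delete any constraint stretched beyond a multiple of its rest length. The slides do not state the tearing criterion, so the `tear_ratio` threshold, the constraint tuple layout, and the function name are all assumptions.

```python
import numpy as np

def tear_overstretched(positions, constraints, tear_ratio):
    """Keep only constraints whose current length is within tear_ratio * rest.

    `constraints` is a list of (i, j, rest_length) tuples (hypothetical layout).
    """
    kept = []
    for i, j, rest in constraints:
        length = np.linalg.norm(positions[j] - positions[i])
        if length <= tear_ratio * rest:
            kept.append((i, j, rest))
    return kept

# Particle 2 has been dragged far from particle 1, so that link should tear.
points = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [4.0, 0.0, 0.0]])
links = [(0, 1, 1.0), (1, 2, 1.0)]
remaining = tear_overstretched(points, links, tear_ratio=2.0)
```

Pinned particles cannot relax toward each other, which is why stretch accumulates in the links between two fingers that move apart.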

Cutting

Similar to tearing but in a more controlled fashion

Direct Cloth Manipulation

Robotic Telemanipulation

Goal: intuitive interactive control of dexterous manipulation for a robot arm / hand system

- Remote dexterous manipulation

- Scripting new behaviors

- Learning from demonstration

What is available?

http://www.youtube.com/watch?v=x9Bjs99A0k0

Master-slave systems: Origami with the DaVinci surgical robot

What is available?

http://www.youtube.com/watch?v=jOnp2M5qibs&feature=player_detailpage

TVO: Doing the Dirty Work: Robots for Hire on the NASA Robonaut

Glove interfaces for dexterous hand control: Cyberglove interface

What is available?

http://www.youtube.com/watch?v=_R40j64C7t8

Video from Shadow Robot Company

Glove interfaces for dexterous hand control: Cyberglove interface

Robotic Telemanipulation

Our observations:

- Manipulation operations often depend on precise fingertip motions

- Existing interfaces control them only indirectly

Our solution:

- An inexpensive interface based on maintaining relative fingertip positions and trajectories

Multi-touch Sticky Finger Teleoperation

Multi-touch for teleoperation of manipulation tasks

- Portable

- Intuitive

- Accessible

- Affordable

- Capable of dexterous actions

Yue Peng Toh and Nancy S. Pollard, “Sticky-Finger Teleoperation with A Multi-Touch Interface”, submitting to ICRA 2012.

Multi-touch Sticky Finger Teleoperation

Pose Control

Jacobian pseudoinverse control with a nullspace constraint to reduce roll of the palm

- Primary objective: control fingertip velocities

- Secondary objective: minimize palm roll

- Solution: joint velocities for the hand and arm
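
The two-level scheme can be sketched with the standard nullspace projection, `qdot = J⁺ v + (I - J⁺ J) z`. Here `z` stands in for a joint-velocity preference that would reduce palm roll; how the paper computes it is not given in the slides, so `z`, the toy Jacobian, and the function name are assumptions.

```python
import numpy as np

def pose_control_step(J, fingertip_velocity, secondary_velocity):
    """Joint velocities that track the fingertip velocity (primary objective)
    and follow `secondary_velocity` only within the Jacobian's nullspace."""
    J_pinv = np.linalg.pinv(J)
    nullspace_projector = np.eye(J.shape[1]) - J_pinv @ J
    return J_pinv @ fingertip_velocity + nullspace_projector @ secondary_velocity

# Toy system: 3 joints, 2 task dimensions; joint 3 does not affect the task.
J = np.array([[1.0, 0.0, 0.0],
              [0.0, 1.0, 0.0]])
v = np.array([0.1, -0.2])          # desired fingertip velocity
z = np.array([5.0, 5.0, -0.3])     # hypothetical roll-reducing preference
qdot = pose_control_step(J, v, z)
```

Only the third component of `z` survives the projection, so the secondary objective never disturbs the commanded fingertip velocity.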

Interface modes: Horizontal Scrolling

Interface modes: Vertical Scrolling

Demo! (3X speed)

Reference

Yue Peng Toh and Nancy S. Pollard, “Sticky-Finger Teleoperation with A Multi-Touch Interface”, submitting to ICRA 2012.

What Control Modes are Intuitive?
