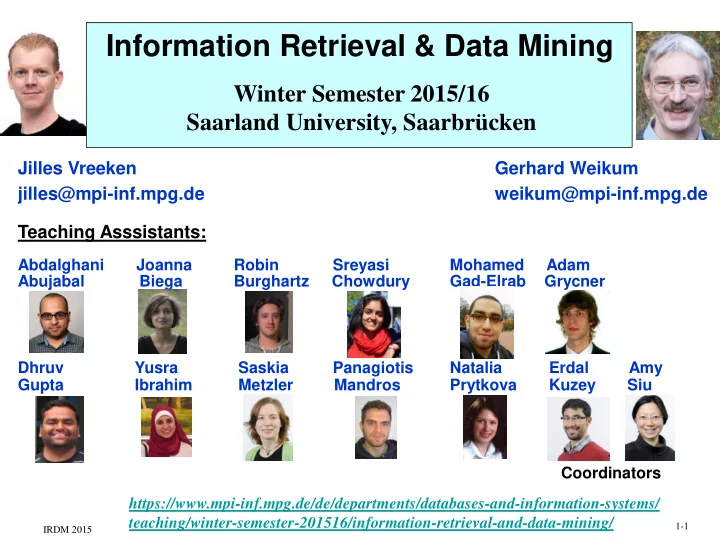

Information Retrieval & Data Mining

Winter Semester 2015/16 Saarland University, Saarbrücken

https://www.mpi-inf.mpg.de/de/departments/databases-and-information-systems/teaching/winter-semester-201516/information-retrieval-and-data-mining/

Jilles Vreeken (jilles@mpi-inf.mpg.de), Gerhard Weikum (weikum@mpi-inf.mpg.de)

Teaching Assistants:

Abdalghani Abujabal, Joanna Biega, Robin Burghartz, Sreyasi Chowdury, Mohamed Gad-Elrab, Adam Grycner, Dhruv Gupta, Yusra Ibrahim, Saskia Metzler, Panagiotis Mandros, Natalia Prytkova

Coordinators: Erdal Kuzey, Amy Siu

IRDM 2015 1-1