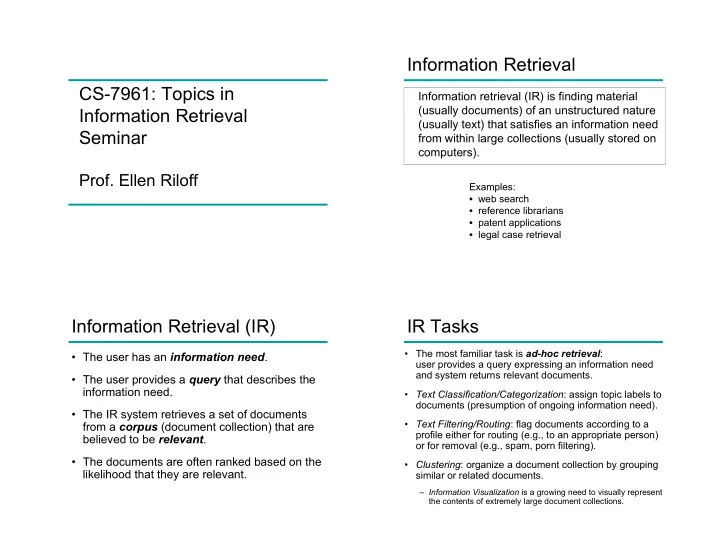

CS-7961: Topics in Information Retrieval Seminar

- Prof. Ellen Riloff

Information Retrieval

Information retrieval (IR) is finding material (usually documents) of an unstructured nature (usually text) that satisfies an information need from within large collections (usually stored on computers).

Examples:

- web search

- reference librarians

- patent applications

- legal case retrieval

Information Retrieval (IR)

- The user has an information need.

- The user provides a query that describes the information need.

- The IR system retrieves a set of documents from a corpus (document collection) that are believed to be relevant.

- The documents are often ranked based on the likelihood that they are relevant.
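The query → retrieved set → ranking pipeline above can be sketched as follows. This is a minimal illustration, not the course's method: it assumes whitespace tokenization and uses TF-IDF term weighting as a stand-in for "likelihood of relevance". The function names `tokenize` and `rank` and the toy corpus are hypothetical.

```python
import math
from collections import Counter


def tokenize(text: str) -> list:
    # Simplifying assumption: lowercase and split on whitespace.
    # Real IR systems also strip punctuation, remove stopwords, stem, etc.
    return text.lower().split()


def rank(query: str, corpus: dict) -> list:
    """Return (doc_id, score) pairs for documents with a positive TF-IDF
    score against the query, sorted from highest to lowest score."""
    n_docs = len(corpus)
    doc_tokens = {doc_id: tokenize(text) for doc_id, text in corpus.items()}

    # Document frequency: number of documents each term appears in.
    df = Counter(term for tokens in doc_tokens.values() for term in set(tokens))

    query_terms = set(tokenize(query))
    scores = {}
    for doc_id, tokens in doc_tokens.items():
        tf = Counter(tokens)  # term frequency within this document
        # Sum tf * idf over query terms; idf = log(N / df) down-weights common terms.
        scores[doc_id] = sum(
            tf[t] * math.log(n_docs / df[t]) for t in query_terms if t in tf
        )

    # The "retrieved set" is every document with a positive score, ranked best first.
    retrieved = [(doc_id, s) for doc_id, s in scores.items() if s > 0]
    return sorted(retrieved, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy corpus for illustration.
corpus = {
    "d1": "patent application for a search engine",
    "d2": "legal case retrieval with search",
    "d3": "cooking recipes for pasta",
}
print(rank("legal search", corpus))
```

Here `d2` ranks above `d1` because it matches both query terms, and the rarer term "legal" carries a higher idf weight than "search"; `d3` shares no query terms and is not retrieved.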

IR Tasks

- The most familiar task is ad hoc retrieval: the user provides a query expressing an information need, and the system returns relevant documents.

- Text Classification/Categorization: assign topic labels to documents (this presumes an ongoing information need).

- Text Filtering/Routing: flag documents according to a profile, either for routing (e.g., to an appropriate person) or for removal (e.g., spam, porn filtering).

- Clustering: organize a document collection by grouping similar or related documents.

- Information Visualization: there is a growing need to visually represent the contents of extremely large document collections.
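Profile-based filtering/routing, as described in the task list above, can be sketched as follows. This is a toy sketch under loud assumptions: each profile is a hypothetical set of keywords, a document is flagged with the profile it overlaps most, and the threshold of 2 shared terms is arbitrary. The names `route`, `PROFILES`, `"legal-team"` and `"spam"` are illustrative only.

```python
from typing import Dict, Optional, Set


def tokenize(text: str) -> Set[str]:
    # Simplifying assumption: lowercase, whitespace-split, deduplicated terms.
    return set(text.lower().split())


# Hypothetical profiles: each destination is described by a set of indicative terms.
PROFILES: Dict[str, Set[str]] = {
    "legal-team": {"contract", "lawsuit", "court"},
    "spam": {"winner", "free", "prize"},
}


def route(document: str, profiles: Dict[str, Set[str]], threshold: int = 2) -> Optional[str]:
    """Flag a document with the profile it overlaps most, or None if no profile
    shares at least `threshold` terms with it."""
    terms = tokenize(document)
    best_name, best_overlap = None, 0
    for name, profile_terms in profiles.items():
        overlap = len(terms & profile_terms)
        if overlap > best_overlap:  # ties go to the earlier profile
            best_name, best_overlap = name, overlap
    return best_name if best_overlap >= threshold else None


for doc in [
    "you are a winner claim your free prize",
    "the court ruled on the contract",
    "lunch menu for friday",
]:
    print(route(doc, PROFILES), "<-", doc)
```

A document flagged `spam` would be removed, one flagged `legal-team` would be routed to that person, and `None` means no profile matched strongly enough. Practical filters typically use weighted terms or trained classifiers rather than raw keyword overlap; the set intersection is used here only to keep the profile idea visible.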