Eugene Agichtein, Emory University 11 July 2010 AAAI 2010 Tutorial: Inferring Searcher Intent 1

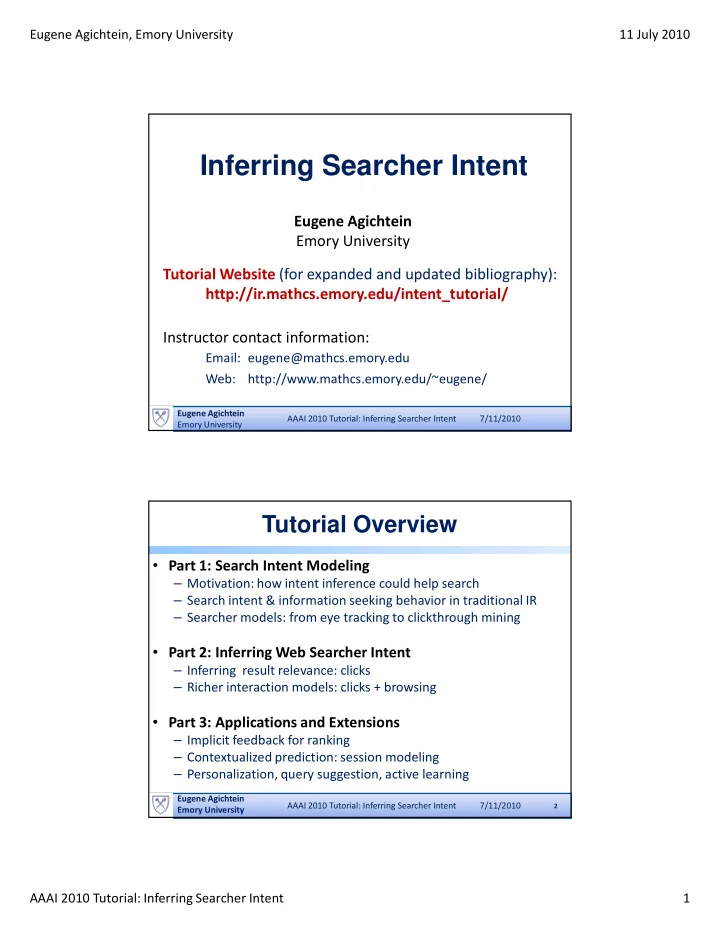

Inferring Searcher Intent

Eugene Agichtein Emory University

Tutorial Website (for expanded and updated bibliography): http://ir.mathcs.emory.edu/intent_tutorial/

Instructor contact information:

Email: eugene@mathcs.emory.edu
Web: http://www.mathcs.emory.edu/~eugene/

Tutorial Overview

- Part 1: Search Intent Modeling

  – Motivation: how intent inference could help search
  – Search intent & information seeking behavior in traditional IR
  – Searcher models: from eye tracking to clickthrough mining

- Part 2: Inferring Web Searcher Intent

  – Inferring result relevance: clicks
  – Richer interaction models: clicks + browsing

- Part 3: Applications and Extensions

  – Implicit feedback for ranking
  – Contextualized prediction: session modeling
  – Personalization, query suggestion, active learning
