Inductive Visual Localisation: Factorised Training for Superior Generalisation

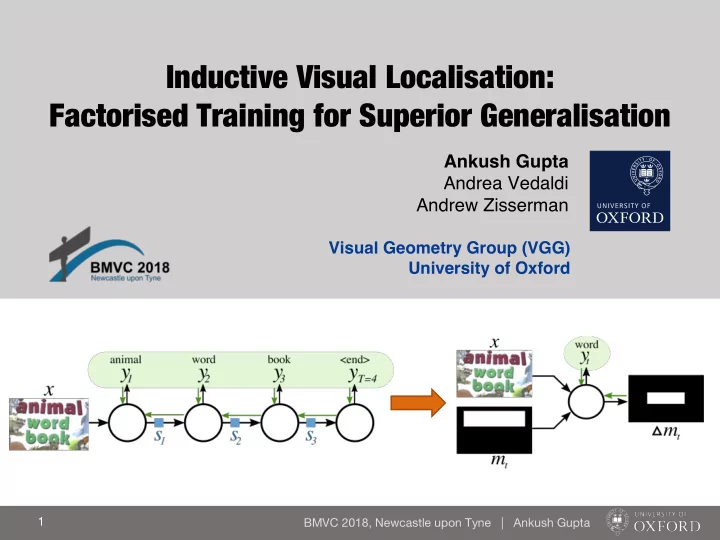

Ankush Gupta, Andrea Vedaldi, Andrew Zisserman

Visual Geometry Group (VGG), University of Oxford

BMVC 2018, Newcastle upon Tyne

RNNs have a problem: poor generalisation to sequence lengths beyond those in the training set.

[Figure panels: Training, Testing]

Example: Enumerative Counting

Counting objects one-by-one. Total count = 3

[Figure: training example, with "Stop?" output]

Example: Enumerative Counting

Failure when tested on inputs of length greater than 3. Total count = 6

[Figure: testing example, with "Stop?" output]

Why? A non-interpretable recurrent state (s_t), trained end-to-end, may not learn the correct loop invariant.

Our Solution

1. Train for one-step inductive updates (not end-to-end).

2. Restrict the recurrent state to a spatial-memory map, which tracks the progress made so far.
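The two points above can be illustrated with a toy counting loop. This is only a sketch under simplifying assumptions: each object occupies a single non-zero pixel, and `one_step` is a hand-written stand-in for what would be a learned network in the actual method. The names `one_step` and `count_objects` are hypothetical and do not come from the slides.

```python
import numpy as np

def one_step(image, memory):
    """Hypothetical one-step update (stand-in for a learned model).

    image  : 2D array, non-zero where an object is present (toy: one pixel per object)
    memory : 2D boolean array, True where an object has already been counted
    Returns (updated_memory, stop).
    """
    # Objects not yet recorded in the memory map, in raster (row-major) order.
    remaining = np.argwhere((image != 0) & ~memory)
    if len(remaining) == 0:
        return memory, True           # nothing left to count: signal stop
    r, c = remaining[0]
    updated = memory.copy()
    updated[r, c] = True              # mark this object as counted
    return updated, False

def count_objects(image):
    """Apply the one-step update repeatedly until it signals stop."""
    memory = np.zeros(image.shape, dtype=bool)  # empty memory: no progress yet
    count = 0
    while True:
        memory, stop = one_step(image, memory)
        if stop:
            return count
        count += 1
```

Because the memory map records exactly which objects have been counted so far, the same update applies unchanged at every step, however many objects the image contains.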

Inductive Training

[Figure: one-step model. Labels: input image, spatial memory map, "Stop?", updated memory]

Train for one-step updates, not end-to-end.
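One way to picture training for one-step updates is to split each annotated sequence into independent per-step examples. The sketch below assumes a toy setting where each object is a single pixel and the annotation gives the objects in the order they should be visited. The function name `one_step_training_pairs` and the tuple layout are hypothetical, not taken from the slides.

```python
import numpy as np

def one_step_training_pairs(object_positions, shape):
    """Build one (memory_in, memory_out, stop) target per step.

    object_positions : list of (row, col) tuples in visiting order
    shape            : (height, width) of the spatial memory map
    """
    pairs = []
    memory = np.zeros(shape, dtype=bool)
    for r, c in object_positions:
        target = memory.copy()
        target[r, c] = True                  # memory after visiting this object
        pairs.append((memory, target, False))
        memory = target
    # Final step: every object is already in memory, so the target is "stop".
    pairs.append((memory, memory.copy(), True))
    return pairs
```

Each tuple would be paired with the same input image and supervised on its own, so no gradient needs to flow through the whole sequence.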

Results: Enumerative Counting

Coloured Shapes & DOTA Airplanes

train on 3-5 objects, test on >5 objects

Multi-line Text Recognition

Read one line at each step

Results: Multi-line Text Recognition

Synth Text Blocks

train on 1-4 lines, test on up to 10 lines

Results: Multi-line Text Recognition

- Vs. state-of-the-art on ICDAR 2013 Blocks

- Outperform (in terms of recall and F-score)
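For reference, recall and F-score can be computed from match counts as sketched below. This uses the standard definitions with the balanced F-score (F1). The slides do not state the exact ICDAR 2013 evaluation protocol, so the matching rule that produces the counts is left out, and the function name is hypothetical.

```python
def precision_recall_f(true_pos, false_pos, false_neg):
    """Standard precision, recall and balanced F-score from match counts."""
    predicted = true_pos + false_pos      # everything the system output
    actual = true_pos + false_neg         # everything in the ground truth
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    denom = precision + recall
    f_score = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f_score
```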

Poster #111