
Implementing DNNs on Embedded GPU-based Platforms

Alessandro Biondi

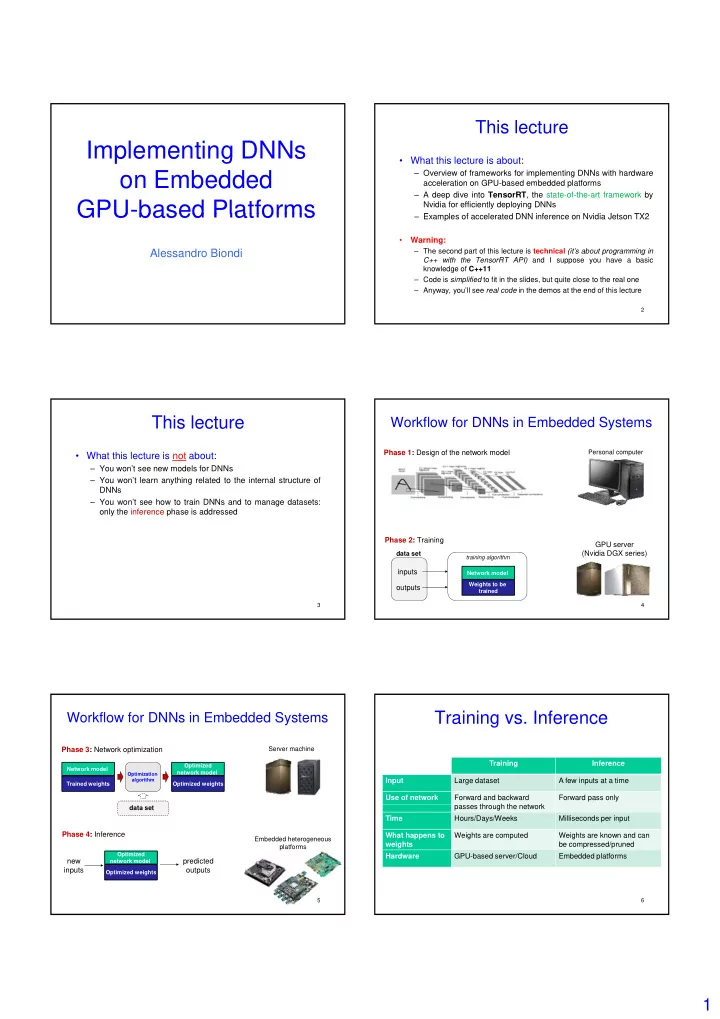

This lecture

- What this lecture is about:

– Overview of frameworks for implementing DNNs with hardware acceleration on GPU-based embedded platforms

– A deep dive into TensorRT, the state-of-the-art framework by Nvidia for efficiently deploying DNNs

– Examples of accelerated DNN inference on Nvidia Jetson TX2

- Warning:

– The second part of this lecture is technical (it’s about programming in C++ with the TensorRT API), and I assume you have a basic knowledge of C++11

– Code is simplified to fit in the slides, but it is quite close to the real one

– Anyway, you’ll see real code in the demos at the end of this lecture

This lecture

- What this lecture is not about:

– You won’t see new models for DNNs

– You won’t learn anything related to the internal structure of DNNs

– You won’t see how to train DNNs or manage datasets: only the inference phase is addressed

Workflow for DNNs in Embedded Systems

Phase 1: Design of the network model (personal computer)

– The network model maps inputs to outputs; its weights are still to be trained

Phase 2: Training (GPU server, Nvidia DGX series)

– A training algorithm uses the data set to compute the trained weights of the network model

Phase 3: Network optimization (server machine)

– An optimization algorithm turns the network model and trained weights into an optimized network model and optimized weights

Workflow for DNNs in Embedded Systems

Phase 4: Inference (embedded heterogeneous platforms)

– The optimized network model and optimized weights map new inputs to predicted outputs
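To make the inference phase concrete, here is a minimal sketch in plain C++11 of what "new inputs in, predicted outputs out" means for a single fully connected layer with fixed weights. This is not TensorRT code: the `DenseLayer` struct, the `forward` function, and the ReLU activation are hypothetical choices made only for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical layer type (not part of any framework): the weights are
// already trained and optimized, so inference only reads them.
struct DenseLayer {
    std::size_t inputs;
    std::size_t outputs;
    std::vector<float> weights;  // row-major, outputs x inputs
    std::vector<float> biases;   // one bias per output
};

// Inference for one layer: y = ReLU(W x + b).
// Nothing is updated and no gradients are stored.
std::vector<float> forward(const DenseLayer& layer, const std::vector<float>& x) {
    assert(x.size() == layer.inputs);
    assert(layer.weights.size() == layer.inputs * layer.outputs);
    assert(layer.biases.size() == layer.outputs);

    std::vector<float> y(layer.outputs);
    for (std::size_t o = 0; o < layer.outputs; ++o) {
        float acc = layer.biases[o];
        for (std::size_t i = 0; i < layer.inputs; ++i) {
            acc += layer.weights[o * layer.inputs + i] * x[i];
        }
        y[o] = acc > 0.0f ? acc : 0.0f;  // ReLU activation
    }
    return y;
}
```

Calling `forward(layer, x)` on each new input yields the predicted outputs. A framework such as TensorRT runs the same kind of computation on the GPU, for entire networks rather than a single layer.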

Training vs. Inference

| | Training | Inference |
|---|---|---|
| Input | Large dataset | A few inputs at a time |
| Use of network | Forward and backward passes through the network | Forward pass only |
| Time | Hours/Days/Weeks | Milliseconds per input |
| What happens to weights | Weights are computed | Weights are known and can be compressed/pruned |
| Hardware | GPU-based server/Cloud | Embedded platforms |
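Because the weights are known at inference time, they can be pruned before deployment. The sketch below shows one simple and common approach, magnitude-based pruning, in plain C++11. It is an assumption chosen for illustration, not necessarily the technique used by TensorRT or in this lecture, and the function name `prune_by_magnitude` and the threshold value are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Illustrative magnitude-based pruning: set every weight whose absolute
// value is below `threshold` to zero and return how many were pruned.
std::size_t prune_by_magnitude(std::vector<float>& weights, float threshold) {
    assert(threshold >= 0.0f);
    std::size_t pruned = 0;
    for (float& w : weights) {
        if (w != 0.0f && std::fabs(w) < threshold) {
            w = 0.0f;
            ++pruned;
        }
    }
    return pruned;
}
```

For example, pruning `{0.8, -0.01, 0.05, -0.6, 0.0}` with a threshold of `0.1` zeroes the second and third weights and leaves the rest untouched. In practice, the accuracy of the pruned network would have to be re-checked on validation data.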