3/18/2020

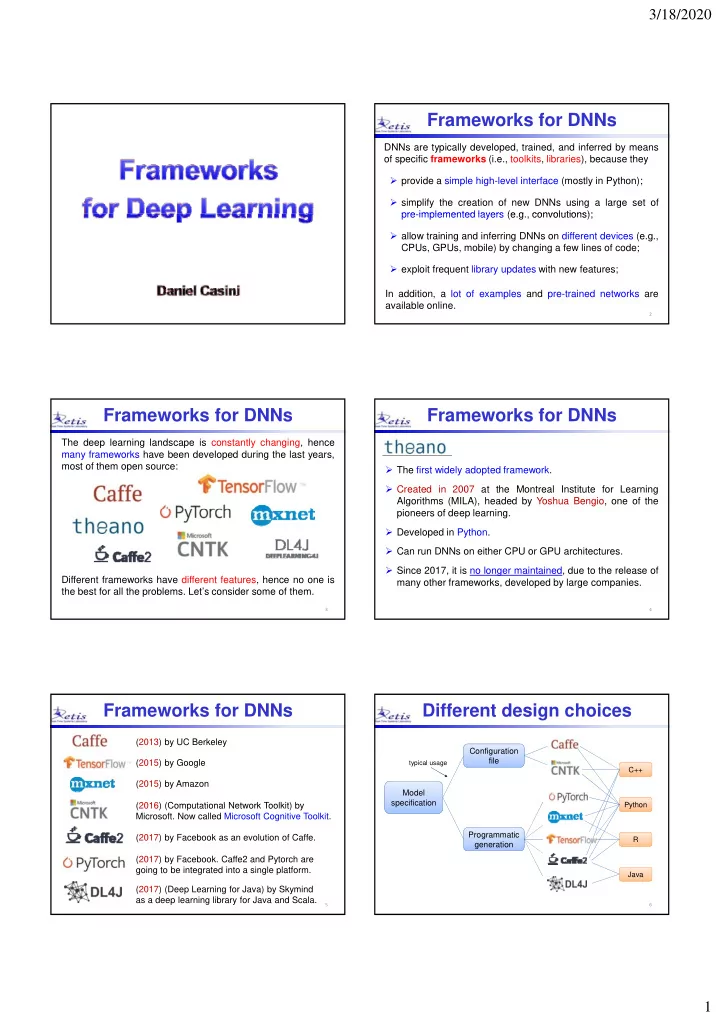

Frameworks for DNNs

DNNs are typically developed, trained, and run for inference by means of specific frameworks (i.e., toolkits, libraries), because they:

- provide a simple high-level interface (mostly in Python);

- simplify the creation of new DNNs using a large set of pre-implemented layers (e.g., convolutions);

- allow training and running DNNs on different devices (e.g., CPUs, GPUs, mobile) by changing a few lines of code;

- receive frequent library updates with new features.

In addition, a lot of examples and pre-trained networks are available online.


The deep learning landscape is constantly changing, so many frameworks have been developed in recent years, most of them open source. Different frameworks have different features, so no single one is best for every problem. Let’s consider some of them.

Theano

- The first widely adopted framework.

- Created in 2007 at the Montreal Institute for Learning Algorithms (MILA), headed by Yoshua Bengio, one of the pioneers of deep learning.

- Developed in Python.

- Can run DNNs on either CPU or GPU architectures.

- Since 2017, it is no longer maintained, due to the release of many other frameworks developed by large companies.


- Caffe (2013) by UC Berkeley.

- TensorFlow (2015) by Google.

- MXNet (2015) by Amazon.

- CNTK (2016) (Computational Network Toolkit) by Microsoft. Now called Microsoft Cognitive Toolkit.

- Caffe2 (2017) by Facebook as an evolution of Caffe.

- PyTorch (2017) by Facebook. Caffe2 and PyTorch are going to be integrated into a single platform.

- DL4J (2017) (Deep Learning for Java) by Skymind as a deep learning library for Java and Scala.


Different design choices

[Figure: frameworks compared by design choice]

- Model specification: configuration file vs. programmatic generation

- Typical usage language: Python, C++, Java, R
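The two model-specification styles can be contrasted with a minimal, framework-agnostic sketch. It uses only the Python standard library; the layer dictionaries, the JSON layout, and the function names `model_from_config` and `model_from_code` are hypothetical, chosen for illustration, and do not correspond to the format of any real framework.

```python
import json

# Hypothetical configuration text: the network is declared as plain data.
CONFIG_TEXT = """
{
  "layers": [
    {"type": "conv", "filters": 16, "kernel": 3},
    {"type": "relu"},
    {"type": "dense", "units": 10}
  ]
}
"""


def model_from_config(text):
    """Configuration-file style: parse a declarative description."""
    spec = json.loads(text)
    return [dict(layer) for layer in spec["layers"]]


def model_from_code(num_blocks, filters=16, num_classes=10):
    """Programmatic style: ordinary control flow generates the layers."""
    layers = []
    for i in range(num_blocks):
        # Double the number of filters at each successive block.
        layers.append({"type": "conv", "filters": filters * 2 ** i, "kernel": 3})
        layers.append({"type": "relu"})
    layers.append({"type": "dense", "units": num_classes})
    return layers


declared = model_from_config(CONFIG_TEXT)
generated = model_from_code(num_blocks=1)
```

With one block, both routes describe the same three-layer network. The difference shows when the architecture changes: making the network deeper means editing the configuration text by hand, whereas the programmatic version only needs a different `num_blocks` argument.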