SLIDE 8 NO FALSE POSITIVES


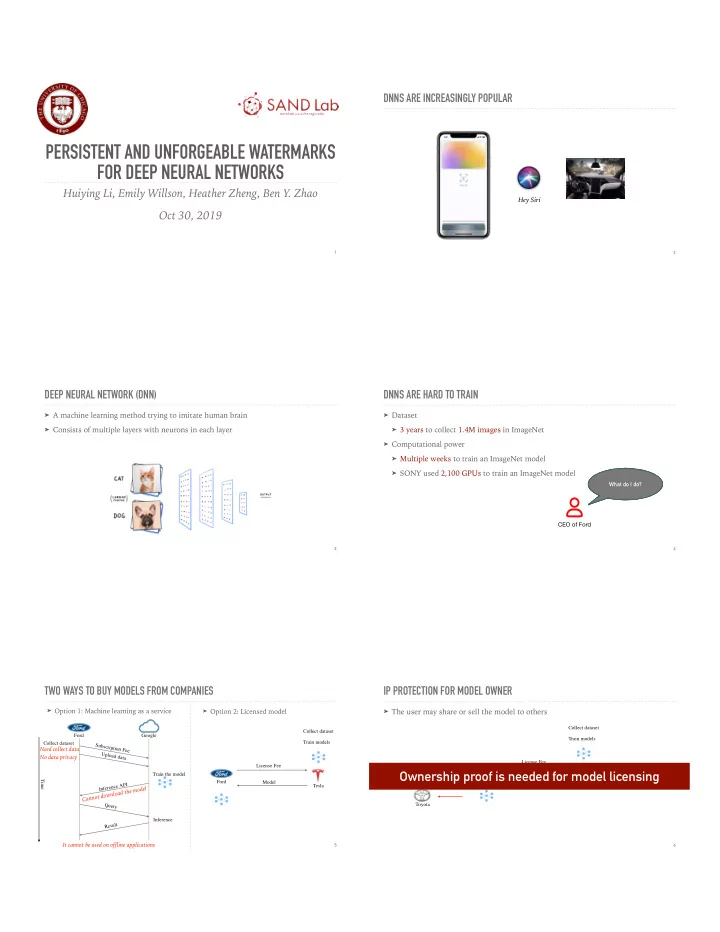

Theorem 1: Any model $F_\theta$ not containing a watermark $\mathbb{W} = \langle W, y_W \rangle$ will fail the verification process with $T_{acc} \gg \Pr(\text{random guess})$.

We verify the presence of a watermark in a model using the following equation:

$$\text{Verify}(sig, F_\theta, O_{pub}) = \text{Yes} \quad \text{if} \quad acc(F_\theta, W, y_W) \ge T_{acc}$$

| Task | Normal and null embedding accuracy | Random guess | Overall false positive rate, $\Pr(\min(\mathcal{A}_{x \oplus W}, \mathcal{A}_{x \oplus W^-}) > T_{acc})$ |
|---|---|---|---|
| Digit | 9.97% / 10.07% | 10% | 0% |
| Traffic | 2.59% / 3.05% | 2.33% | 0% |
| Face | 0.16% / 0.08% | 0.08% | 0% |

A non-watermarked model decisively fails the watermark verification test with $T_{acc} = 0.8$.
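The threshold part of the verification rule can be sketched in a few lines. This is only an illustration, not the authors' implementation: the function name `passes_watermark_check` is hypothetical, the signature check on `sig` is left out entirely, and the accuracies are the values reported on this slide for non-watermarked models, written as fractions.

```python
def passes_watermark_check(acc_a: float, acc_b: float, t_acc: float = 0.8) -> bool:
    """Hypothetical helper: accept only if BOTH embedding accuracies reach t_acc.

    acc_a and acc_b are the normal and null embedding accuracies; the check
    uses their minimum, so the order of the two arguments does not matter.
    """
    return min(acc_a, acc_b) >= t_acc


# Embedding accuracies reported on the slide for non-watermarked models.
reported = {
    "Digit": (0.0997, 0.1007),
    "Traffic": (0.0259, 0.0305),
    "Face": (0.0016, 0.0008),
}

results = {task: passes_watermark_check(a, b) for task, (a, b) in reported.items()}
print(results)  # every task maps to False
```

Because the reported accuracies sit near random guess, far below 0.8, every task is rejected, which is consistent with the 0% false positive rate in the table.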

AUTHENTICATION


The watermark $\mathbb{W}$ can inherently be authenticated, since it is constructed using a cryptographic signature.

PIRACY RESISTANCE


As an adversary attempts to train a new watermark into the model, the original watermark accuracy remains high.

[Figure: accuracy (0.2 to 1) vs. number of epochs (20 to 100). Series: Normal Classification, Normal - W, Null - W, Normal - WA, Null - WA.]

Meanwhile, the attacker’s watermark cannot be successfully added, even after 100 training epochs.

Theorem 2: Once a model $F_\theta$ is trained and includes a watermark $\mathbb{W}$, it is impossible to add the null embedding of a different watermark $\mathbb{W}_A$ into the model.

[Figure: accuracy (0.2 to 1) vs. number of epochs (20 to 100). Series: Normal Classification, Normal - W, Null - W.]

PERSISTENCE


If the watermark is unknown, the attacker cannot remove the watermark by fine-tuning or pruning the model.

Fine-tuning

For all three tasks, the watermark is not degraded by fine-tuning.

[Figure: accuracy (0.85 to 1) vs. number of epochs (20 to 100). Series: Normal Classification ($\mathcal{A}_x$), Normal Embedding ($\mathcal{A}_{x \oplus W}$), Null Embedding ($\mathcal{A}_{x \oplus W^-}$).]

Neuron Pruning

For all three tasks, neuron pruning reduces normal classification accuracy before it reduces watermark accuracy.

[Figure: accuracy (0.2 to 1) vs. ratio of neurons pruned (0 to 0.9). Series: Normal Classification ($\mathcal{A}_x$), Normal Embedding ($\mathcal{A}_{x \oplus W}$), Null Embedding ($\mathcal{A}_{x \oplus W^-}$).]

PERSISTENCE


An attacker cannot corrupt our watermark if they do not know it. But how easily could they find it? And could they corrupt it if they did?

PERSISTENCE

Theorem 3: Given a model $F_\theta$ containing watermark $\mathbb{W}$, in order to identify $W$ and $y_W$, an attacker cannot apply any loss or gradient-based optimization to reduce the cost of querying $F_\theta$. Instead, the attacker needs to randomly query $F_\theta$.

Theorem 4: The probability that a single random guess can reveal the watermark $\mathbb{W}$ embedded in the model is:

$$\Pr(W = W_A) = \frac{1}{m \cdot Y \cdot 2^{n^2}}$$

where

$$m = (\text{height}(x) - n + 1) \times (\text{width}(x) - n + 1)$$

| Task | $\Pr(W = W_A)$ |
|---|---|
| Digit | $2.75 \times 10^{-15}$ |
| Traffic | $1.83 \times 10^{-16}$ |
| Face | $5.40 \times 10^{-18}$ |

| Task | Query time (in years, assuming 1 s per verification) |
|---|---|
| Digit | $6.92 \times 10^{7}$ |
| Traffic | $2.42 \times 10^{8}$ |
| Face | $2.75 \times 10^{8}$ |
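The Theorem 4 formula can be evaluated directly. The sketch below is illustrative only: the function name is hypothetical, and the inputs (a 28 × 28 image, a pattern size of n = 6, and Y = 10 read as the number of output labels) are assumptions that do not appear on the slide. They were chosen because they are plausible for a ten-class digit task.

```python
def guess_probability(height: int, width: int, n: int, num_labels: int) -> float:
    """Evaluate Pr(W = W_A) = 1 / (m * Y * 2^(n^2)) from Theorem 4.

    m counts the positions an n-by-n pattern can occupy in a height-by-width
    input; num_labels stands in for Y (assumed here to be the label count).
    """
    m = (height - n + 1) * (width - n + 1)
    return 1.0 / (m * num_labels * 2 ** (n * n))


# Assumed inputs, not taken from the slide: 28x28 image, n = 6, Y = 10.
p = guess_probability(height=28, width=28, n=6, num_labels=10)
print(f"{p:.2e}")  # 2.75e-15
```

Under these assumed inputs the result agrees with the Digit row of the probability table, which suggests the reading of m, Y, and n used here is at least consistent with the reported numbers.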