Int. Secure Systems Lab

Vienna University of Technology

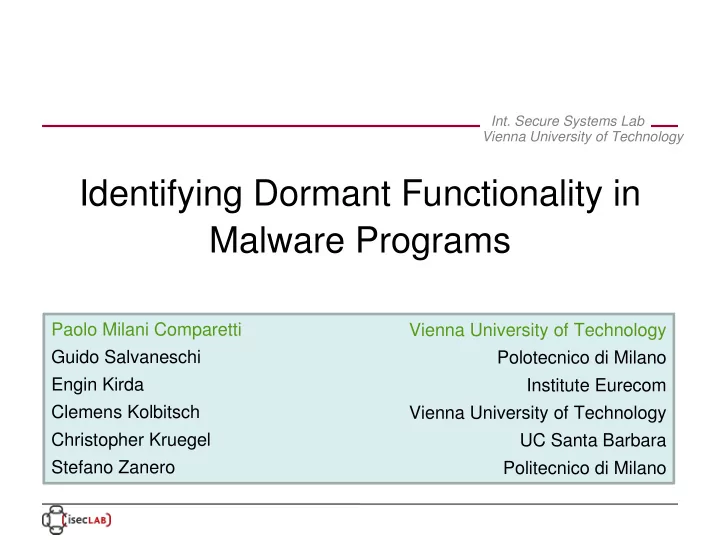

Identifying Dormant Functionality in Malware Programs

Paolo Milani Comparetti, Vienna University of Technology

Guido Salvaneschi, Politecnico di Milano

Engin Kirda, Institute Eurecom

Vienna University of Technology, IEEE Symposium on Security & Privacy, May 17, 2010

B: the system/API call instances output by the behavior identification phase

B: the relevant system/API calls

– nodes colored based on instruction classes

[1] "Polymorphic Worm Detection Using Structural Information of Executables", RAID 2005
