Jian Pei: CMPT 459/741 Clustering (4) 1

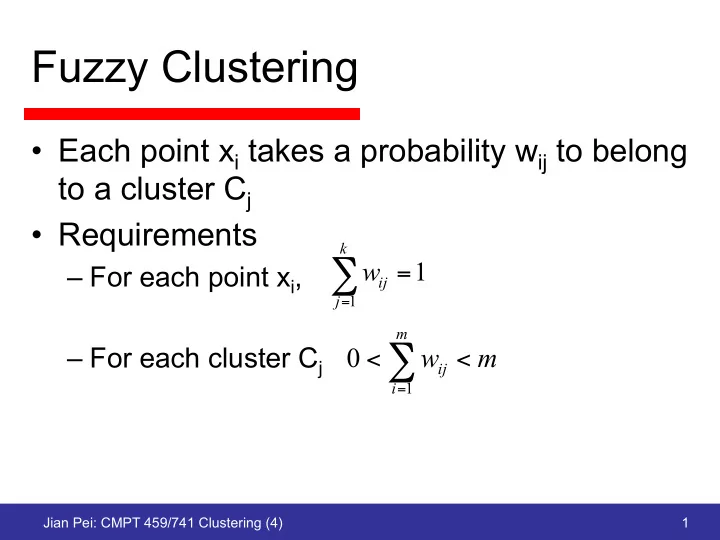

Fuzzy Clustering

- Each point $x_i$ takes a probability $w_{ij}$ of belonging to cluster $C_j$

- Requirements
  – For each point $x_i$: $\sum_{j=1}^{k} w_{ij} = 1$
  – For each cluster $C_j$: $0 < \sum_{i=1}^{m} w_{ij} < m$
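The two requirements can be checked directly on a membership matrix. The sketch below is illustrative only. It assumes the weights are stored as an $m \times k$ list of lists `w` (rows are points $x_i$, columns are clusters $C_j$), and the function name `is_fuzzy_pseudo_partition` is hypothetical. Because each $w_{ij}$ is described as a probability, it also checks $0 \le w_{ij} \le 1$.

```python
def is_fuzzy_pseudo_partition(w, tol=1e-9):
    """Check the fuzzy-clustering requirements on an m x k membership matrix.

    Hypothetical helper: w[i][j] is the membership of point x_i in cluster C_j.
    """
    m = len(w)
    k = len(w[0]) if m else 0
    # Each w_ij is a probability, so it must lie in [0, 1].
    if any(not (0.0 <= wij <= 1.0) for row in w for wij in row):
        return False
    # Requirement 1: for each point, the weights over the k clusters sum to 1.
    for row in w:
        if abs(sum(row) - 1.0) > tol:
            return False
    # Requirement 2: for each cluster, the total weight is strictly between 0 and m.
    for j in range(k):
        col_sum = sum(w[i][j] for i in range(m))
        if not (0.0 < col_sum < m):
            return False
    return True
```

A cluster whose column sums to 0 is empty, and one whose column sums to $m$ absorbs every point completely, so both are rejected.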


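Sum of the squared error (SSE) to minimize, with exponent $p > 1$:

$$SSE(C_1, \ldots, C_k) = \sum_{j=1}^{k} \sum_{i=1}^{m} w_{ij}^{p} \, dist(x_i, c_j)^2$$

Computing the centroid of each cluster:

$$c_j = \frac{\sum_{i=1}^{m} w_{ij}^{p} \, x_i}{\sum_{i=1}^{m} w_{ij}^{p}}$$

Updating the memberships:

$$w_{ij} = \frac{\left(1 / dist(x_i, c_j)^2\right)^{\frac{1}{p-1}}}{\sum_{q=1}^{k} \left(1 / dist(x_i, c_q)^2\right)^{\frac{1}{p-1}}}$$

When $p = 2$:

$$w_{ij} = \frac{1 / dist(x_i, c_j)^2}{\sum_{q=1}^{k} 1 / dist(x_i, c_q)^2}$$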
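A compact fuzzy c-means loop that alternates the centroid and membership updates, in plain Python. This is a sketch under stated assumptions, not a reference implementation: the names `fuzzy_c_means`, `max_iter`, `tol` and `seed` are hypothetical, the initial memberships are random, iteration stops when no centroid moves by more than `tol`, and a point that lands exactly on a centroid is given full membership in that cluster (the update formula divides by zero there).

```python
import math
import random


def fuzzy_c_means(points, k, p=2.0, max_iter=100, tol=1e-6, seed=0):
    """Hypothetical fuzzy c-means sketch on a list of equal-length numeric tuples.

    Returns (centroids, w) where w[i][j] is the membership of point i in cluster j.
    """
    rng = random.Random(seed)
    m, d = len(points), len(points[0])
    # Initial fuzzy pseudo-partition: random positive weights, each row normalised to sum to 1.
    w = []
    for _ in range(m):
        row = [rng.random() + 1e-3 for _ in range(k)]
        total = sum(row)
        w.append([v / total for v in row])
    centroids = None
    for _ in range(max_iter):
        # Centroid update: c_j = sum_i w_ij^p x_i / sum_i w_ij^p
        new_centroids = []
        for j in range(k):
            wp = [w[i][j] ** p for i in range(m)]
            denom = sum(wp)
            new_centroids.append(
                [sum(wp[i] * points[i][t] for i in range(m)) / denom for t in range(d)]
            )
        # Membership update: w_ij proportional to (1 / dist(x_i, c_j)^2) ** (1 / (p - 1))
        for i in range(m):
            d2 = [sum((points[i][t] - c[t]) ** 2 for t in range(d)) for c in new_centroids]
            if 0.0 in d2:
                # x_i coincides with a centroid (assumed convention): full weight there.
                hits = [1.0 if v == 0.0 else 0.0 for v in d2]
                w[i] = [h / sum(hits) for h in hits]
                continue
            scores = [(1.0 / v) ** (1.0 / (p - 1.0)) for v in d2]
            total = sum(scores)
            w[i] = [s / total for s in scores]
        # Stop once no centroid moved by more than tol since the previous pass.
        converged = centroids is not None and all(
            math.dist(a, b) <= tol for a, b in zip(centroids, new_centroids)
        )
        centroids = new_centroids
        if converged:
            break
    return centroids, w
```

For example, `fuzzy_c_means([(0.0,), (1.0,), (2.0,), (10.0,), (11.0,), (12.0,)], k=2)` should place one centroid near each group, while every point keeps some weight in both clusters.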


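Mixture model with $k$ components, where component $j$ has weight $w_j$ and density $p_j(\cdot \mid \theta_j)$, and $\Theta = \{\theta_1, \ldots, \theta_k\}$:

$$prob(x \mid \Theta) = \sum_{j=1}^{k} w_j \, p_j(x \mid \theta_j)$$

$$prob(X \mid \Theta) = \prod_{i=1}^{m} prob(x_i \mid \Theta) = \prod_{i=1}^{m} \sum_{j=1}^{k} w_j \, p_j(x_i \mid \theta_j)$$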
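The mixture likelihood can be evaluated directly. A minimal sketch, assuming one-dimensional Gaussian components with $\theta_j = (\mu_j, \sigma_j)$; the function names are hypothetical. Summing logs instead of multiplying probabilities gives the same value as the product over points but avoids numerical underflow when $m$ is large.

```python
import math


def gaussian_pdf(x, mu, sigma):
    # 1-D normal density with mean mu and standard deviation sigma
    return math.exp(-(x - mu) ** 2 / (2 * sigma ** 2)) / (math.sqrt(2 * math.pi) * sigma)


def mixture_prob(x, weights, params):
    # prob(x | Theta) = sum_j w_j * p_j(x | theta_j); Gaussian p_j is an assumption of this sketch
    return sum(w * gaussian_pdf(x, mu, s) for w, (mu, s) in zip(weights, params))


def log_likelihood(data, weights, params):
    # log prob(X | Theta) = sum_i log prob(x_i | Theta): the log turns the product into a sum
    return sum(math.log(mixture_prob(x, weights, params)) for x in data)
```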


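Gaussian density with parameters $\Theta = (\mu, \sigma)$:

$$prob(x_i \mid \Theta) = \frac{1}{\sqrt{2\pi}\,\sigma} \, e^{-\frac{(x_i - \mu)^2}{2\sigma^2}}$$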

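Example: two equally weighted Gaussian components with $\sigma = 2$ (so $2\sigma^2 = 8$), centered at $-4$ and $4$:

$$prob(x \mid \Theta) \propto e^{-\frac{(x+4)^2}{8}} + e^{-\frac{(x-4)^2}{8}}$$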
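The two-component example can be checked numerically. A sketch assuming equal weights of 0.5, means $-4$ and $4$, and a shared $\sigma = 2$ (the function name `mixture_density` is hypothetical). It evaluates the normalized density and confirms that it is bimodal, symmetric about 0, and integrates to about 1.

```python
import math


def mixture_density(x, weights=(0.5, 0.5), means=(-4.0, 4.0), sigma=2.0):
    # Assumed example parameters: both components share sigma = 2, so 2 * sigma^2 = 8.
    coef = 1.0 / (math.sqrt(2 * math.pi) * sigma)
    return sum(w * coef * math.exp(-(x - mu) ** 2 / (2 * sigma ** 2))
               for w, mu in zip(weights, means))


# Midpoint Riemann sum over [-20, 20]; the tails beyond are negligible.
step = 0.01
area = sum(mixture_density(-20 + (i + 0.5) * step) * step for i in range(4000))
```

The density at $x = 0$ is lower than at $x = \pm 4$, which is what makes the two components visible as two modes.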


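Log-likelihood of $m$ points under a single Gaussian:

$$\log prob(X \mid \Theta) = -\sum_{i=1}^{m} \frac{(x_i - \mu)^2}{2\sigma^2} - \frac{m}{2} \log 2\pi - m \log \sigma$$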
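A sketch that evaluates this closed form and the parameters that maximize it for a single Gaussian: $\hat\mu = \frac{1}{m}\sum_i x_i$ and $\hat\sigma^2 = \frac{1}{m}\sum_i (x_i - \hat\mu)^2$ (note the $1/m$, unlike the $n - 1$ of the sample variance). The function names are hypothetical.

```python
import math


def log_likelihood_gaussian(xs, mu, sigma):
    # Closed form: -sum (x_i - mu)^2 / (2 sigma^2) - (m/2) log(2 pi) - m log(sigma)
    m = len(xs)
    return (-sum((x - mu) ** 2 for x in xs) / (2 * sigma ** 2)
            - 0.5 * m * math.log(2 * math.pi) - m * math.log(sigma))


def mle_gaussian(xs):
    # Maximum-likelihood estimates: sample mean and the 1/m standard deviation
    m = len(xs)
    mu = sum(xs) / m
    sigma = math.sqrt(sum((x - mu) ** 2 for x in xs) / m)
    return mu, sigma
```

Perturbing either estimate away from `mle_gaussian(xs)` should lower `log_likelihood_gaussian`.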


[Figure: two 2-D views of the same data over age (20 to 60): Salary (×10,000) vs. age, and Vacation (weeks) vs. age]


$$s = \sqrt{\frac{\sum_{i=1}^{n} (X_i - \bar{X})^2}{n - 1}}$$

$$s^2 = \frac{\sum_{i=1}^{n} (X_i - \bar{X})^2}{n - 1}$$

$$cov(X, Y) = \frac{\sum_{i=1}^{n} (X_i - \bar{X})(Y_i - \bar{Y})}{n - 1}$$
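The three formulas translate directly into code. A minimal sketch with hypothetical function names; all three use the $n - 1$ denominator shown above.

```python
import math


def sample_variance(xs):
    # s^2 = sum (X_i - mean)^2 / (n - 1)
    n = len(xs)
    mean = sum(xs) / n
    return sum((x - mean) ** 2 for x in xs) / (n - 1)


def sample_std(xs):
    # s = sqrt(s^2)
    return math.sqrt(sample_variance(xs))


def sample_cov(xs, ys):
    # cov(X, Y) = sum (X_i - mean_X)(Y_i - mean_Y) / (n - 1)
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)
```

Note that `sample_cov(xs, xs)` equals `sample_variance(xs)`: variance is the covariance of a dimension with itself.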


Art work and example from http://csnet.otago.ac.nz/cosc453/student_tutorials/principal_components.pdf


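Covariance matrix of $n$-dimensional data, with $c_{ij} = cov(Dim_i, Dim_j)$:

$$C = \begin{pmatrix} c_{11} & c_{12} & \cdots & c_{1n} \\ c_{21} & c_{22} & \cdots & c_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ c_{n1} & c_{n2} & \cdots & c_{nn} \end{pmatrix}$$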
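A sketch that builds the $n \times n$ covariance matrix from a list of records. It assumes each record is an $n$-tuple, uses $m$ for the number of records, and reuses the $m - 1$ denominator of the sample covariance; the name `covariance_matrix` is hypothetical.

```python
def covariance_matrix(data):
    # Hypothetical helper: data is a list of m records, each an n-dimensional tuple.
    m, n = len(data), len(data[0])
    means = [sum(row[a] for row in data) / m for a in range(n)]
    # c_ab = cov(Dim_a, Dim_b) with an (m - 1) denominator; the diagonal holds the variances.
    return [[sum((row[a] - means[a]) * (row[b] - means[b]) for row in data) / (m - 1)
             for b in range(n)] for a in range(n)]
```

The result is symmetric because $cov(X, Y) = cov(Y, X)$.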


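NewData = RowFeatureVector × RowDataAdjust

Here RowFeatureVector holds the selected eigenvectors as rows (most significant first), and RowDataAdjust is the mean-adjusted data with one dimension per row and one data item per column. Only the first principal component is used.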
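For 2-D data the whole pipeline fits in a few lines: subtract the means, form the 2 × 2 covariance matrix, take its leading eigenvector, and project. This sketch is limited to two dimensions so the eigenvector can be computed in closed form; the function name `first_pc_projection` is hypothetical.

```python
import math


def first_pc_projection(points):
    """Hypothetical sketch: project 2-D points onto their first principal component."""
    m = len(points)
    mx = sum(p[0] for p in points) / m
    my = sum(p[1] for p in points) / m
    # DataAdjust: subtract the mean of each dimension.
    adj = [(x - mx, y - my) for x, y in points]
    a = sum(dx * dx for dx, _ in adj) / (m - 1)   # var(x)
    c = sum(dy * dy for _, dy in adj) / (m - 1)   # var(y)
    b = sum(dx * dy for dx, dy in adj) / (m - 1)  # cov(x, y)
    # Largest eigenvalue of the symmetric covariance matrix [[a, b], [b, c]].
    lam = (a + c) / 2 + math.sqrt(((a - c) / 2) ** 2 + b ** 2)
    # Matching eigenvector (b, lam - a); fall back to a coordinate axis when b == 0.
    if b != 0:
        vx, vy = b, lam - a
    else:
        vx, vy = (1.0, 0.0) if a >= c else (0.0, 1.0)
    norm = math.hypot(vx, vy)
    vx, vy = vx / norm, vy / norm
    # NewData = RowFeatureVector x RowDataAdjust with a single eigenvector as the feature vector.
    return [vx * dx + vy * dy for dx, dy in adj], lam
```

The sample variance of the projected values equals the largest eigenvalue `lam`, which is why the first principal component keeps the most variance of any single direction.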


[Figure: spectral clustering pipeline. Data → affinity matrix $W = [w_{ij}]$ → $A = f(W)$ → computing the leading $k$ eigenvectors of $A$ ($Av = \lambda v$) → clustering in the new space → projecting back to cluster the original data]


$$W_{ij} = e^{-\frac{dist(o_i, o_j)}{\sigma_w}}$$
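A sketch of the affinity matrix, assuming the affinity has the form $W_{ij} = e^{-dist(o_i, o_j)/\sigma_w}$ with Euclidean distance and a user-chosen scale `sigma_w` (the function name is hypothetical). It applies the formula to every pair, including $i = j$, which gives a diagonal of 1.

```python
import math


def affinity_matrix(points, sigma_w):
    # Hypothetical helper: W_ij = exp(-dist(o_i, o_j) / sigma_w) with Euclidean dist.
    m = len(points)
    return [[math.exp(-math.dist(points[i], points[j]) / sigma_w) for j in range(m)]
            for i in range(m)]
```

Close objects get affinities near 1 and distant ones near 0; `sigma_w` controls how fast the affinity decays with distance.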


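$D$ is a diagonal matrix with $D_{ii} = \sum_{j=1}^{n} W_{ij}$, and

$$A = D^{-\frac{1}{2}} \, W \, D^{-\frac{1}{2}}$$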
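Because $D$ is diagonal, $A = D^{-1/2} W D^{-1/2}$ can be computed entrywise as $A_{ij} = W_{ij} / \sqrt{D_{ii} D_{jj}}$. A minimal sketch with a hypothetical function name, taking an already computed affinity matrix `W` as a list of lists.

```python
import math


def normalized_affinity(W):
    # Hypothetical helper: D_ii = sum_j W_ij, then A_ij = W_ij / sqrt(D_ii * D_jj).
    n = len(W)
    d = [sum(row) for row in W]
    A = [[W[i][j] / math.sqrt(d[i] * d[j]) for j in range(n)] for i in range(n)]
    return A, d
```

One quick sanity check: the vector with entries $\sqrt{D_{ii}}$ is an eigenvector of $A$ with eigenvalue 1, since $A D^{1/2}\mathbf{1} = D^{-1/2} W \mathbf{1} = D^{1/2}\mathbf{1}$.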


For each sampled point $p_i$:

$$x_i = \min_{v \in D}\{dist(p_i, v)\}$$


For each sampled data point $q_i \in D$:

$$y_i = \min_{v \in D,\, v \neq q_i}\{dist(q_i, v)\}$$


Hopkins statistic:

$$H = \frac{\sum_{i=1}^{n} y_i}{\sum_{i=1}^{n} x_i + \sum_{i=1}^{n} y_i}$$
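A sketch of the Hopkins statistic in plain Python. Both sampling choices are assumptions of this sketch: each $p_i$ is drawn uniformly at random from the axis-aligned bounding box of $D$ (if $p_i$ were taken from $D$ itself, every $x_i$ would be 0), and the $q_i$ are $n$ distinct points drawn from $D$. The names `hopkins` and `seed` are hypothetical.

```python
import math
import random


def hopkins(D, n, seed=0):
    """Hypothetical sketch: Hopkins statistic H for a list D of equal-length tuples."""
    rng = random.Random(seed)
    dims = len(D[0])
    lo = [min(p[t] for p in D) for t in range(dims)]
    hi = [max(p[t] for p in D) for t in range(dims)]
    # x_i: distance from a uniform random point p_i in the bounding box to its nearest neighbour in D.
    xs = []
    for _ in range(n):
        p = [rng.uniform(lo[t], hi[t]) for t in range(dims)]
        xs.append(min(math.dist(p, v) for v in D))
    # y_i: distance from a sampled data point q_i to its nearest neighbour in D other than q_i.
    ys = []
    for idx in rng.sample(range(len(D)), n):
        q = D[idx]
        ys.append(min(math.dist(q, v) for k, v in enumerate(D) if k != idx))
    return sum(ys) / (sum(xs) + sum(ys))
```

Under this setup, roughly uniform data gives $H$ near 0.5, while strongly clustered data makes the $y_i$ much smaller than the $x_i$ and pushes $H$ toward 0.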


Rule of thumb for the number of clusters in a data set of $n$ points: $k = \sqrt{n/2}$
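The rule of thumb as a one-liner. Rounding to the nearest integer and the floor of 1 are choices of this sketch, and the function name is hypothetical.

```python
import math


def rule_of_thumb_k(n):
    # k ~ sqrt(n / 2), rounded to the nearest integer and never below 1 (assumed conventions)
    return max(1, round(math.sqrt(n / 2)))
```

For instance, a data set of 200 points gives $\sqrt{100} = 10$ clusters.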


$$b(o) = \min_{C_j : o \notin C_j} \left\{ \frac{\sum_{o' \in C_j} dist(o, o')}{|C_j|} \right\}$$
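The fragment reads as the $b(o)$ term of the silhouette coefficient. Assuming that, here is a sketch of the full coefficient for one object: $a(o)$ is the mean distance from $o$ to the other members of its own cluster, $b(o)$ is the minimum, over clusters not containing $o$, of the mean distance from $o$ to that cluster's members, and $s(o) = (b(o) - a(o)) / \max\{a(o), b(o)\}$. Euclidean distance, the name `silhouette`, and returning 0 for a singleton cluster are assumptions; at least two clusters are required.

```python
import math


def silhouette(o_idx, points, labels):
    """Hypothetical sketch: silhouette s(o) of points[o_idx]; labels[i] is the cluster of points[i]."""
    o, own = points[o_idx], labels[o_idx]
    # Group the distances from o to every other object by cluster label.
    by_cluster = {}
    for i, (p, lab) in enumerate(zip(points, labels)):
        if i != o_idx:
            by_cluster.setdefault(lab, []).append(math.dist(o, p))
    same = by_cluster.get(own, [])
    if not same:
        return 0.0  # singleton cluster: assumed convention
    a = sum(same) / len(same)  # a(o): divides by |C_i| - 1
    # b(o): minimum over clusters C_j with o not in C_j of the mean distance to C_j
    b = min(sum(ds) / len(ds) for lab, ds in by_cluster.items() if lab != own)
    return (b - a) / max(a, b)
```

Values near 1 mean $o$ sits well inside its own cluster; negative values mean $o$ is, on average, closer to another cluster than to its own.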
