School of Computer Science

[Figure: example network with nodes Receptor A, Receptor B, Kinase C, Kinase D, Kinase E, TF F, Gene G, Gene H, and the corresponding variables X1 through X8]

Probabilistic Graphical Models (10-708)

Lecture 5, Sep 31, 2007

Eric Xing

The Belief Propagation (Sum-Product) Algorithm

Reading: J-Chap 4

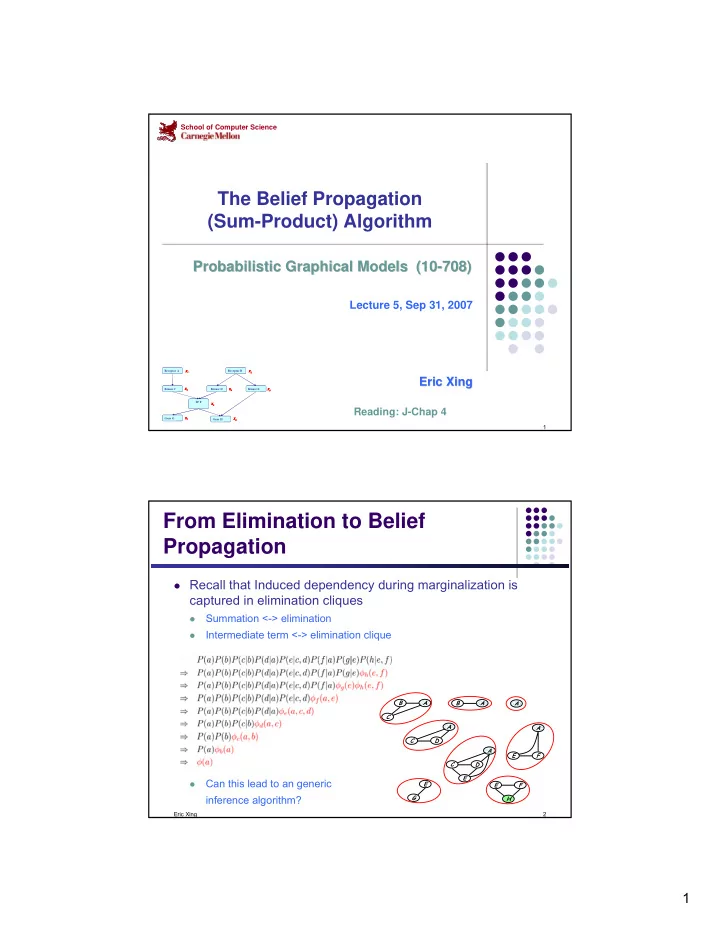

From Elimination to Belief Propagation

Recall that the dependencies induced during marginalization are captured in elimination cliques:

- Summation <-> elimination

- Intermediate term <-> elimination clique

- Can this lead to a generic inference algorithm?
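The correspondence above can be sketched in a few lines of Python. This is only an illustrative sketch, not the lecture's own code: the three-variable chain A -> B -> C, its probability tables, and the helper names `multiply`, `sum_out`, and `eliminate` are all assumptions made for the example. Each call to `eliminate` performs one summation, returns the intermediate term it produces, and reports the set of variables that appeared together in that step (the elimination clique).

```python
from itertools import product

# A factor is a pair (scope, table): scope is a tuple of variable names,
# table maps each binary assignment tuple (in scope order) to a number.

def multiply(f, g):
    """Pointwise product of two factors over the union of their scopes."""
    (fs, ft), (gs, gt) = f, g
    scope = fs + tuple(v for v in gs if v not in fs)
    table = {}
    for vals in product((0, 1), repeat=len(scope)):
        a = dict(zip(scope, vals))
        table[vals] = ft[tuple(a[v] for v in fs)] * gt[tuple(a[v] for v in gs)]
    return scope, table

def sum_out(f, var):
    """Sum a factor over one variable, dropping it from the scope."""
    scope, table = f
    keep = tuple(v for v in scope if v != var)
    out = {}
    for vals, p in table.items():
        key = tuple(x for v, x in zip(scope, vals) if v != var)
        out[key] = out.get(key, 0.0) + p
    return keep, out

def eliminate(factors, var):
    """One elimination step: multiply the factors mentioning var, sum var out.

    Returns the updated factor list and the elimination clique, i.e. the
    set of variables in the product just before the summation.
    """
    touching = [f for f in factors if var in f[0]]
    rest = [f for f in factors if var not in f[0]]
    prod = touching[0]
    for f in touching[1:]:
        prod = multiply(prod, f)
    clique = set(prod[0])
    return rest + [sum_out(prod, var)], clique

# Hypothetical chain A -> B -> C with made-up conditional probability tables.
p_a = (("A",), {(0,): 0.6, (1,): 0.4})
p_b_given_a = (("A", "B"), {(0, 0): 0.7, (0, 1): 0.3, (1, 0): 0.2, (1, 1): 0.8})
p_c_given_b = (("B", "C"), {(0, 0): 0.9, (0, 1): 0.1, (1, 0): 0.5, (1, 1): 0.5})

factors = [p_a, p_b_given_a, p_c_given_b]
cliques = []
for var in ("A", "B"):  # elimination order; C is the query variable
    factors, clique = eliminate(factors, var)
    cliques.append(clique)

marginal = factors[0]  # the single remaining factor is P(C)
print("elimination cliques:", cliques)
print("P(C):", marginal[1])
```

In this sketch the intermediate term created by eliminating A is a function of B alone, and it is exactly what the next step consumes, which is the pattern the question in the last bullet points toward.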

[Figure: elimination cliques over the variables A through H]