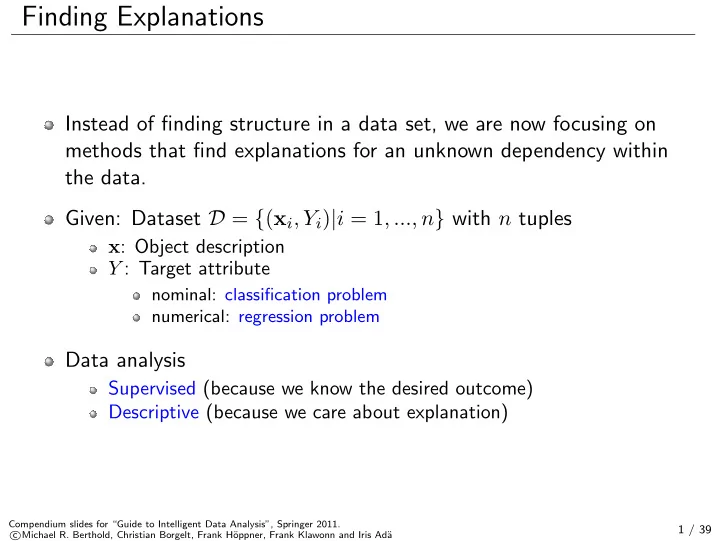

Finding Explanations

Instead of finding structure in a data set, we now focus on methods that find explanations for an unknown dependency within the data.

Given: dataset $D = \{(x_i, Y_i) \mid i = 1, \ldots, n\}$ with $n$ tuples

x: object description

Y: target attribute

- nominal: classification problem
- numerical: regression problem
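The distinction between the two problem types can be illustrated with a minimal Python sketch. It represents the dataset as a list of (x, Y) tuples and picks the problem type from the type of the target values. The function name `problem_type` and the toy values are hypothetical and serve only as illustration; they are not taken from the slides.

```python
from numbers import Number


def problem_type(dataset):
    """Guess the problem type from the target values Y of (x, Y) tuples."""
    targets = [y for _, y in dataset]
    # Assumption: a target is "numerical" if every Y is a number.
    # bool is a Number subclass in Python, so it is excluded and treated as nominal.
    if all(isinstance(y, Number) and not isinstance(y, bool) for y in targets):
        return "regression"
    return "classification"


# Made-up toy data: x is the object description, Y the target attribute.
nominal_data = [((5.1, 3.5), "yes"), ((6.2, 2.9), "no")]
numerical_data = [((50.0, 2), 120.5), ((80.0, 3), 199.0)]

print(problem_type(nominal_data))
print(problem_type(numerical_data))
```

Note that this check is only a heuristic: class labels that are coded as numbers (for example 0 and 1) would be treated as numerical, so in practice the attribute type has to come from knowledge about the data.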

Data analysis

- Supervised (because we know the desired outcome)
- Descriptive (because we care about explanation)

Compendium slides for “Guide to Intelligent Data Analysis”, Springer 2011. © Michael R. Berthold, Christian Borgelt, Frank Höppner, Frank Klawonn and Iris Adä
