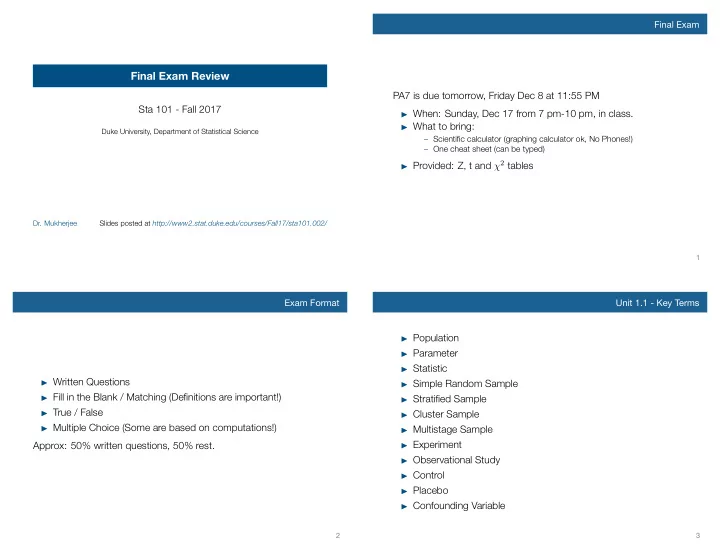

Final Exam Review

Sta 101 - Fall 2017

Duke University, Department of Statistical Science

- Dr. Mukherjee

PA7 is due tomorrow, Friday Dec 8 at 11:55 PM.

Final Exam

- When: Sunday, Dec 17 from 7 pm-10 pm, in class.
- What to bring: Scientific calculator

Course roadmap

- Design
- Exploratory data analysis: numerical, categorical
- Probability
- Bayesian inference
- Frequentist inference (CLT & simulation): two means & medians, many means, two proportions, many proportions
- Modeling (numerical response): 1 explanatory, many explanatory

Probability tree (twins):

- identical, 0.3
  - males, 0.5: 0.3*0.5 = 0.15
  - females, 0.5: 0.3*0.5 = 0.15
  - male&female, 0.0: 0.3*0 = 0
- fraternal, 0.7
  - males, 0.25: 0.7*0.25 = 0.175
  - females, 0.25: 0.7*0.25 = 0.175
  - male&female, 0.50: 0.7*0.5 = 0.35
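The joint probabilities in the tree above can be turned into a posterior with Bayes' rule. The sketch below is illustrative only: the slide does not state which question was asked, so the choice to condition on "females" is an assumption, and the names `prior`, `likelihood`, and `posterior` are hypothetical.

```python
# Branch probabilities copied from the probability tree.
prior = {"identical": 0.3, "fraternal": 0.7}
likelihood = {
    "identical": {"males": 0.5, "females": 0.5, "male&female": 0.0},
    "fraternal": {"males": 0.25, "females": 0.25, "male&female": 0.50},
}

def posterior(observed):
    """Return P(twin type | observed sexes) using Bayes' rule."""
    # Joint probability of each twin type with the observed outcome.
    joint = {t: prior[t] * likelihood[t][observed] for t in prior}
    # Marginal probability of the observed outcome.
    total = sum(joint.values())
    return {t: joint[t] / total for t in joint}

# Assumed example question: given two girls, how likely are identical twins?
post_females = posterior("females")
print(post_females)
```

For "females" this divides the 0.15 branch by the sum of the two "females" branches (0.15 + 0.175).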

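Standard errors:

$$SE = \sqrt{\frac{s_1^2}{n_1} + \frac{s_2^2}{n_2}}$$

$$SE = \sqrt{\frac{\hat{p}(1-\hat{p})}{n}}$$

$$SE = \sqrt{\frac{p_0(1-p_0)}{n}}$$

$$SE = \sqrt{\frac{\hat{p}_1(1-\hat{p}_1)}{n_1} + \frac{\hat{p}_2(1-\hat{p}_2)}{n_2}}$$

$$SE = \sqrt{\frac{\hat{p}_{pool}(1-\hat{p}_{pool})}{n_1} + \frac{\hat{p}_{pool}(1-\hat{p}_{pool})}{n_2}}$$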
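As a quick check on the standard-error expressions above, here is a minimal Python sketch. The function names are hypothetical. The pooled proportion is computed as total successes over total sample size, which is the usual definition but is not spelled out on the slide.

```python
from math import sqrt

def se_diff_means(s1, n1, s2, n2):
    """SE for a difference of two independent means."""
    return sqrt(s1**2 / n1 + s2**2 / n2)

def se_prop(p, n):
    """SE for one proportion.

    Pass the sample proportion for a confidence interval,
    or the null value p0 for a hypothesis test.
    """
    return sqrt(p * (1 - p) / n)

def se_diff_props(p1, n1, p2, n2):
    """SE for a difference of two proportions (unpooled)."""
    return sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)

def se_diff_props_pooled(x1, n1, x2, n2):
    """SE for a difference of two proportions using the pooled proportion.

    x1 and x2 are success counts (assumed inputs, not from the slide).
    """
    p_pool = (x1 + x2) / (n1 + n2)
    return sqrt(p_pool * (1 - p_pool) / n1 + p_pool * (1 - p_pool) / n2)

# Made-up numbers, for illustration only.
print(se_prop(0.5, 100))
print(se_diff_means(3, 9, 4, 16))
```

The pooled version is typically used when the null hypothesis states that the two proportions are equal, so both groups share one estimate.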

[Figure: scatterplot of % in poverty and annual murders per million]

[Figure: scatterplot of % white and % female householder, with Hawaii and DC labeled]
