SLIDE 1 (LMCS, p. 133) III.1

Equational Logic

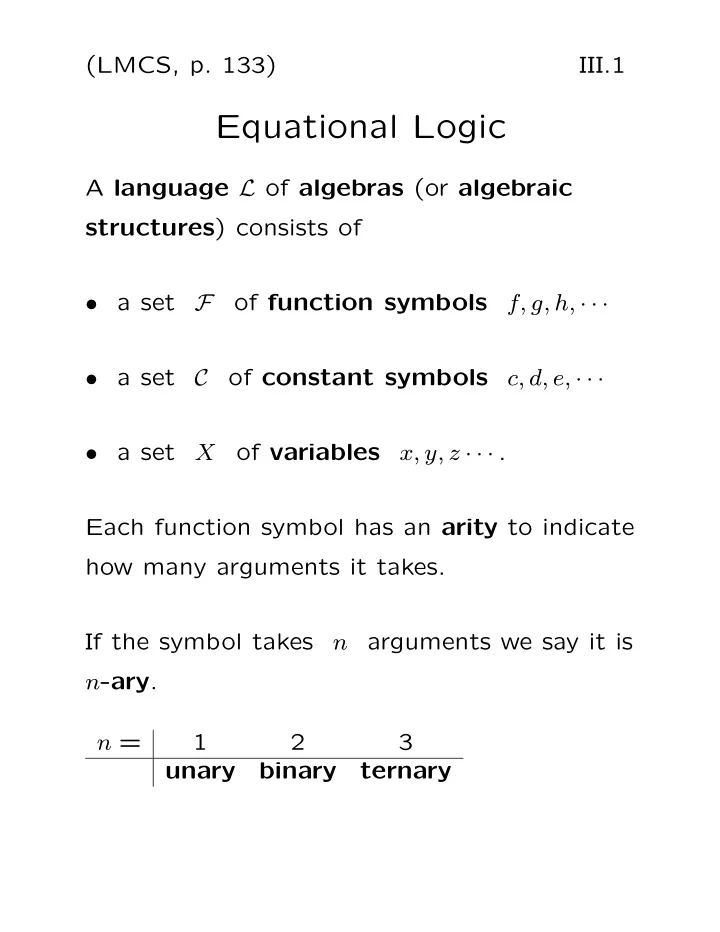

A language L of algebras (or algebraic structures) consists of

- a set F of function symbols f, g, h, · · ·
- a set C of constant symbols c, d, e, · · ·
- a set X of variables x, y, z, · · ·

Each function symbol has an arity to indicate how many arguments it takes. If the symbol takes n arguments we say it is n-ary.

n = 1: unary
n = 2: binary
n = 3: ternary

SLIDE 2 (LMCS, p. 133) III.2 Example

The language L_BA has F = {∨, ∧, ′} and C = {0, 1}. ∨ and ∧ are binary function symbols; ′ is a unary function symbol.

symbol   name
∨        join
∧        meet
′        complement

The constants are just called by the usual names zero and one.

SLIDE 3 (LMCS, p. 134) III.3 The meaning of the symbols Given a set A:

- Function symbols are interpreted as functions on the set.
- Constant symbols are interpreted as elements of the set.

A function f that maps n-tuples of elements of A to A is called an n-ary function on A. We write f : A^n → A to say f is n-ary on A.

SLIDE 4 (LMCS, p. 134) III.4 We can describe a single unary function on a small set either with a table, e.g., f 1 1 2 3 3 3

or with a directed graph representation:

1 2 3

SLIDE 5 (LMCS, p. 134) III.5 Cayley Tables

We can also describe binary functions on a small set A using a table, called a Cayley table. To describe the integers mod 4, with the binary operation of multiplication mod 4, we have the Cayley table:

·   0  1  2  3
0   0  0  0  0
1   0  1  2  3
2   0  2  0  2
3   0  3  2  1
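As a hedged illustration (not part of the text), a Cayley table for a small set can be generated mechanically. The helper name `cayley_table` and the list `Z4` below are assumptions made for this sketch.

```python
def cayley_table(elements, op):
    """Return the Cayley table of a binary operation as a dict of dicts."""
    return {a: {b: op(a, b) for b in elements} for a in elements}

Z4 = [0, 1, 2, 3]
table = cayley_table(Z4, lambda a, b: (a * b) % 4)

# Print a header row, then one row per left operand.
print("· | " + " ".join(str(b) for b in Z4))
for a in Z4:
    print(f"{a} | " + " ".join(str(table[a][b]) for b in Z4))
```

The entry in row a and column b is the value a · b mod 4.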

SLIDE 6 (LMCS, p. 135) III.6 Function Tables

We can also describe functions on a small set A using a table that is similar to the truth tables used to describe the connectives. To describe the ternary function f(x, y, z) = 1 + xyz on {0, 1}, computed mod 2, we could use

x  y  z  f
0  0  0  1
0  0  1  1
0  1  0  1
0  1  1  1
1  0  0  1
1  0  1  1
1  1  0  1
1  1  1  0

SLIDE 7 (LMCS, p. 135) III.7 Interpretations

An interpretation I of L on a nonempty set A assigns to each symbol from L a function or constant as follows:

- I(c) is an element of A for each constant symbol c in C.
- I(f) is an n–ary function on A for each n–ary function symbol f in F.

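SLIDE 8 (LMCS, p. 136) III.8 Visualizing an interpretation I on A:

[Figure: under I, each constant symbol c is sent to an element I(c) = c^A of A, and each n-ary function symbol f is sent to an n-ary function I(f) = f^A with inputs x1, . . . , xn from A.]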

SLIDE 9 (LMCS, p. 135) III.9 Algebras

An L-algebra (or L-structure) A is a pair (A, I) where I is an interpretation of L on A. Given an algebra A:

- the interpretations of the constant symbols are called the constants of the algebra;
- the interpretations of the function symbols are called the fundamental operations of the algebra.

SLIDE 10 (LMCS, p. 136) III.10 Notation

We prefer to write c^A for I(c) and f^A for I(f), or even just c for I(c) and f for I(f). Also, rather than writing (A, I) we prefer to write (A, F, C), or to simply list the symbols in F and C, e.g., the algebra (R, +, ·, 0, 1).

SLIDE 11

(LMCS, p. 137) III.11 Example

Let L = L_BA = {∨, ∧, ′, 0, 1}. Let Su(U) be the collection of all subsets of a given set U (U is called the universe). Interpret

- join as union (∪)
- meet as intersection (∩)
- complement as complement (′) in U
- 0 as the empty set (Ø)
- 1 as the universe (U).

Then Su(U) = (Su(U), ∪, ∩, ′, Ø, U) is the Boolean algebra of subsets of U.

SLIDE 12

(LMCS, p. 138) III.12 Example

Let L = L_BA = {∨, ∧, ′, 0, 1}. Let A = {0, 1}, and let the function symbols be interpreted as follows:

∨   0  1
0   0  1
1   1  1

∧   0  1
0   0  0
1   0  1

x   x′
0   1
1   0

and 0, 1 are interpreted in the obvious manner (as 0, 1). This is the best known of all the Boolean algebras.

SLIDE 13

(LMCS, p. 140) III.13 Terms are used to make the sides of equations. Examples of terms using familiar infix notation for the language {+, ·, −, 0, 1}:

1, x, y, −0, −1, −x, −y, 1 + 0, x · y, −(−x), x + 1, x · (y + z), (−x) · (−y), 1 + (0 + 1)

SLIDE 14 (LMCS, p. 140) III.14 Examples of terms using familiar infix notation for the language of Boolean algebra {∨, ∧, ′, 0, 1}:

1, x, y, 0′, 1′, x′, y′, 1 ∨ 0, x ∧ y, x′′, x ∨ 1, x ∧ (y ∨ z), (x′) ∧ (y′), 1 ∨ (0 ∨ 1)

SLIDE 15 (LMCS, p. 140) III.15 Examples of terms using prefix notation.

- If f is a unary function symbol then x, fx, ffx are terms.
- If c is a constant symbol then c, fc, ffc are terms.
- If g is a binary function symbol then gcx, gyy, ggfzcgcx are terms.
- If h is a ternary function symbol then hxyz, hccx, hfgxcgxcggxyfc are terms.

SLIDE 16 (LMCS, p. 140) III.16 Terms

The L-terms over X are (inductively) defined by the following:

- Every variable x in X is an L–term.
- Every constant symbol c in C is an L–term.
- If t1, . . . , tn are L–terms and f is an n–ary function symbol in F, then ft1 · · · tn is an L–term.

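SLIDE 17 (LMCS, p. 141) III.17 A Parsing Algorithm (for terms in prefix form)

Define γ on the symbols of a string s = fs1 · · · sn, reading from left to right, by:

- The value of γ at the first symbol f is 0.
- Increase γ by one on variables and constants.
- Decrease γ by arity − 1 on the remaining function symbols.

That is: at the first symbol f, γ = 0; at a variable x or a constant c, γ becomes γ + 1; at a later function symbol g, γ becomes γ − arity(g) + 1.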

SLIDE 18

(LMCS, p. 141) III.18 Then s is a term iff the value of γ is always less than arity(f) except at the last symbol, where γ has the value arity(f). If s is a term then s = ft1 · · · tk where k = arity(f). The end of ti is the first symbol where γ is i.

SLIDE 19

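(LMCS, p. 141) III.19 Example

Let L = {f, g, c} with f unary, g binary, and c a constant. Determine if s = ggcxfz is a term. If so, find the subterms t1, t2 such that gt1t2 = ggcxfz. Here is the computation of γ:

i     0   1   2   3   4   5
s_i   g   g   c   x   f   z
γ_i   0  −1   0   1   1   2

Since γ stays below 2 = arity(g) until the last symbol, where it equals 2, s is a term. The value 1 is first reached at x and the value 2 at z, so t1 = gcx and t2 = fz.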
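The parsing rule can be sketched in Python as follows. This is an illustrative sketch, not code from the text: terms are assumed to be strings of one-character symbols, `arity` is an assumed dictionary of function symbols, and every symbol missing from it is treated as a variable or constant.

```python
def gamma_values(s, arity):
    """Compute γ at every symbol of the prefix string s."""
    values = [0]                                  # γ is 0 at the first symbol
    for sym in s[1:]:
        if sym in arity:                          # function symbol: γ - (arity - 1)
            values.append(values[-1] - (arity[sym] - 1))
        else:                                     # variable or constant: γ + 1
            values.append(values[-1] + 1)
    return values

def parse_term(s, arity):
    """Return the immediate subterms [t1, ..., tk] if s is a term, else None."""
    if not s:
        return None
    if s[0] not in arity:                         # a lone variable or constant
        return [] if len(s) == 1 else None
    k = arity[s[0]]
    g = gamma_values(s, arity)
    if g[-1] != k or any(v >= k for v in g[:-1]):
        return None
    subterms, start = [], 1
    for i in range(1, k + 1):
        end = g.index(i)                          # first symbol where γ is i
        subterms.append(s[start:end + 1])
        start = end + 1
    return subterms

print(gamma_values("ggcxfz", {"f": 1, "g": 2}))
print(parse_term("ggcxfz", {"f": 1, "g": 2}))
```

For the string ggcxfz this reproduces the γ row of the example and splits the term into gcx and fz.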

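SLIDE 20 (LMCS, p. 142-143) III.20 Examples

[Figure: term trees. For fxgxyz (f is ternary, g binary), the root f has children x, gxy, and z, and the node g has children x and y. For ((x + y) · (y + z)) + 1, the root + has children (x + y) · (y + z) and 1.]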

SLIDE 21

(LMCS, p. 143) III.21 Looking at the tree of a term we see that it is built up in stages called subterms.

Example Using infix notation, the subterms of ((x + y) · (y + z)) + 1 are

x, y, z, 1, x + y, y + z, (x + y) · (y + z), ((x + y) · (y + z)) + 1

[Figure: the tree of ((x + y) · (y + z)) + 1.]

SLIDE 22

(LMCS, p. 143) III.22 Example Using prefix notation, the subterms of fxgxyz, where f is ternary and g is binary, are

x, y, z, gxy, fxgxyz

[Figure: the tree of fxgxyz.]

SLIDE 23 (LMCS, p. 143) III.23 Subterms

The subterms of a term t are defined inductively:

- The only subterm of a variable x is the variable x itself.
- The only subterm of a constant symbol c is the symbol c itself.
- The subterms of the term ft1 · · · tn are ft1 · · · tn itself and all the subterms of the ti, for 1 ≤ i ≤ n.

SLIDE 24

(LMCS, p. 145) III.24 Term Functions

We interpret terms in an algebra as functions: terms t(x1, . . . , xn) define functions t^A : A^n → A.

Example Using the usual language for the natural numbers, consider the term t(x, y, z) given by (x · (y + 1)) + z. The corresponding term function t^N : N^3 → N maps the triple (1, 0, 2) to 3, as

t^N(1, 0, 2) = (1 · (0 + 1)) + 2 = 3

SLIDE 25 (LMCS, p. 145) III.25 Term functions t^A for terms t(x1, . . . , xn) are the functions on the algebra A defined inductively by the following:

- If t is xi then t^A(a1, . . . , an) = ai.
- If t is c ∈ C then t^A(a1, . . . , an) = c^A.
- If t is ft1 · · · tk then t^A = f^A(t1^A, . . . , tk^A), that is, t^A(a1, . . . , an) = f^A(t1^A(a1, . . . , an), . . . , tk^A(a1, . . . , an)).
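The inductive definition translates directly into a recursive evaluator. The sketch below is illustrative only and rests on an assumed representation: a variable is a string, a constant is a one-element tuple such as `("1",)`, and a compound term is a tuple `(symbol, subterm, ...)`. The names `evaluate`, `interp`, and `env` are hypothetical.

```python
def evaluate(term, interp, env):
    """Compute the value of the term function at the assignment env.

    interp maps constant symbols to elements and function symbols to callables;
    env maps variable names to elements of the algebra.
    """
    if isinstance(term, str):              # t is a variable x_i
        return env[term]
    symbol, *args = term
    if not args:                           # t is a constant symbol c
        return interp[symbol]
    # t is f t1 ... tk: evaluate the subterms, then apply f^A
    return interp[symbol](*(evaluate(a, interp, env) for a in args))

# The natural numbers with +, · and the constant 1.
N = {"+": lambda a, b: a + b, "*": lambda a, b: a * b, "1": 1}

# t(x, y, z) = (x · (y + 1)) + z
t = ("+", ("*", "x", ("+", "y", ("1",))), "z")
print(evaluate(t, N, {"x": 1, "y": 0, "z": 2}))
```

With the assignment (1, 0, 2) this computes (1 · (0 + 1)) + 2.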

SLIDE 26 (LMCS, p. 145) III.26 Evaluation Tables

Example Let A be the two–element Boolean algebra on {0, 1}, and let t(x, y, z) be the term x ∨ (y ∧ z′). Then the term function t^A can be described by:

x  y  z  t
0  0  0  0
0  0  1  0
0  1  0  1
0  1  1  0
1  0  0  1
1  0  1  1
1  1  0  1
1  1  1  1

or, in more detail:

x  y  z  z′  y ∧ z′  t
0  0  0  1   0       0
0  0  1  0   0       0
0  1  0  1   1       1
0  1  1  0   0       0
1  0  0  1   0       1
1  0  1  0   0       1
1  1  0  1   1       1
1  1  1  0   0       1

SLIDE 27

(LMCS, p. 149) III.27 Equations An L-equation is an expression of the form s ≈ t where s and t are L–terms. For example: x + y ≈ y + x. We think of this as saying for all x and y, x + y = y + x. = simply means “identical to”. ≈ expresses equality in formulas of our logics.

SLIDE 28

(LMCS, p. 149) III.28 The Basic Question: When does an equation follow from other equations? From x + y ≈ y + x we can conclude x + (y + z) ≈ (z + y) + x, but not x + x ≈ x + y.

SLIDE 29 (LMCS, p. 149) III.29 Two vantage points:

- (semantic) a notion of when an equation is true in an algebra;
- (syntactic) axioms and rules of inference for deriving equational consequences of equations.

When you see the word “syntactic” think strings of symbols. Axioms are strings of symbols. Rules of Inference tell you how to take strings of symbols and make new strings of symbols.

SLIDE 30

(LMCS, p. 149) III.30 The semantic and syntactic notions of equational logic are equivalent! This equivalence is the soundness and completeness of equational logic. Soundness means that one can only derive equations that correspond to valid arguments. Completeness means that one can derive all equations that correspond to valid arguments.

SLIDE 31 (LMCS, p. 149) III.31 Semantics

Suppose L is a language of algebras. If A is an L–algebra and s ≈ t an L–equation, we write A ⊨ s ≈ t and say A satisfies s ≈ t, or s ≈ t holds in A, if s^A = t^A, that is, s and t define the same term function on A.

SLIDE 32 (LMCS, p. 149) III.32

If A is an L–algebra and S is a set of L–equations, then we write A ⊨ S and say A satisfies S, or S holds in A, if A ⊨ s ≈ t for every equation s ≈ t in S.

SLIDE 33 (LMCS, p. 149) III.33

If S is a set of L–equations and s ≈ t is an L–equation, then we write S ⊨ s ≈ t and say s ≈ t is a consequence of S, or s ≈ t follows from S, if A ⊨ s ≈ t whenever A ⊨ S. For satisfies we also use the word models, and we say A is a model of an equation or a set of equations.

SLIDE 34

(LMCS, p. 149) III.34 Laws of Binary Algebras A binary algebra A = (A, ·) is associative if it satisfies the associative law x · (y · z) ≈ (x · y) · z In such case it is also called a semigroup . It is commutative if it satisfies the commutative law x · y ≈ y · x And it is idempotent if it satisfies the idempotent law x · x ≈ x.

SLIDE 35 (LMCS, p. 150) III.35 The idempotent law is easy to check: one looks down the main diagonal of the table of the operation to see that x · x always has the value x.

·   a  b  c
a   a
b      b
c         c

SLIDE 36

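(LMCS, p. 150) III.36 The commutative law is also easy to check: look at the table of the operation to see if it is symmetric about the main diagonal.

[Figure: a Cayley table on {a, b, c} in which two mirror-image entries across the main diagonal both have the value d.]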

SLIDE 37

(LMCS, p. 151) III.37 Example

The binary algebra A given by

·   a  b
a   a  a
b   a  b

is idempotent, commutative, and associative:

A ⊨ x · x ≈ x
A ⊨ x · y ≈ y · x
A ⊨ x · (y · z) ≈ (x · y) · z.

The first two properties follow by the preceding remarks and diagrams. To check the associative law we construct an evaluation table for the terms x · (y · z) and (x · y) · z to show the term functions are equal:

SLIDE 38 (LMCS, p. 151) III.38 From the binary algebra given by

·   a  b
a   a  a
b   a  b

we have the following table, where s is x · (y · z) and t is (x · y) · z:

     x  y  z  y · z  s  x · y  t
1.   a  a  a  a      a  a      a
2.   a  a  b  a      a  a      a
3.   a  b  a  a      a  a      a
4.   a  b  b  b      a  a      a
5.   b  a  a  a      a  a      a
6.   b  a  b  a      a  a      a
7.   b  b  a  a      a  b      a
8.   b  b  b  b      b  b      b

Since the columns for x · (y · z) and (x · y) · z are the same, the associative law holds.
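For a finite binary algebra the evaluation table can be checked by brute force. The following is a minimal sketch under the assumption that the operation is stored as a dictionary keyed by pairs; the names `associativity_failures` and `first` are illustrative, not from the text.

```python
from itertools import product

def associativity_failures(elements, op):
    """List the triples (x, y, z) where x·(y·z) and (x·y)·z differ."""
    return [(x, y, z)
            for x, y, z in product(elements, repeat=3)
            if op[x, op[y, z]] != op[op[x, y], z]]

# Cayley table of this slide: a·a = a, a·b = a, b·a = a, b·b = b.
first = {("a", "a"): "a", ("a", "b"): "a",
         ("b", "a"): "a", ("b", "b"): "b"}

print(associativity_failures("ab", first))
```

An empty list means the two term functions agree on all eight rows, so the associative law holds in the algebra.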

SLIDE 39 (LMCS, pp. 151-152) III.39 Example

The binary algebra A given by

·   a  b
a   b  a
b   b  b

is not idempotent nor commutative nor associative. To see the failure of the associative law we use an evaluation table, where s is x · (y · z) and t is (x · y) · z:

     x  y  z  y · z  s  x · y  t
1.   a  a  a  b      a  b      b
2.   a  a  b  a      b  b      b
3.   a  b  a  b      a  a      b
4.   a  b  b  b      a  a      a
5.   b  a  a  b      b  b      b
6.   b  a  b  a      b  b      b
7.   b  b  a  b      b  b      b
8.   b  b  b  b      b  b      b

Indeed, associativity fails precisely on lines 1 and 3.

SLIDE 40 (LMCS, pp. 151-152) III.40 However, this binary algebra

·   a  b
a   b  a
b   b  b

does satisfy an interesting equation, namely,

A ⊨ (x · y) · z ≈ (x · z) · y.

We can see this by using an evaluation table, where s is (x · y) · z and t is (x · z) · y:

x  y  z  x · y  s  x · z  t
a  a  a  b      b  b      b
a  a  b  b      b  a      b
a  b  a  a      b  b      b
a  b  b  a      a  a      a
b  a  a  b      b  b      b
b  a  b  b      b  b      b
b  b  a  b      b  b      b
b  b  b  b      b  b      b

SLIDE 41 (LMCS, p. 153) III.41 Many interesting classes of algebras are defined by equations. The text gives examples of:

- Rings

- Boolean Algebras

- Semigroups

- Monoids

- Groups

You will NOT be required to memorize the equations defining these classes. They are for reference only.

SLIDE 42

(LMCS, p. 154) III.42 Rings

The language L_R is {+, ·, −, 0, 1}. R is the following set of equations in this language:

R1. x + 0 ≈ x                          additive identity
R2. x + (−x) ≈ 0                       additive inverse
R3. x + y ≈ y + x                      + is commutative
R4. x + (y + z) ≈ (x + y) + z          + is associative
R5. x · 1 ≈ x                          right mult. identity
R6. 1 · x ≈ x                          left mult. identity
R7. x · (y · z) ≈ (x · y) · z          · is associative
R8. x · (y + z) ≈ (x · y) + (x · z)    left distributive
R9. (x + y) · z ≈ (x · z) + (y · z)    right distributive

All algebras R = (R, +, ·, −, 0, 1) that satisfy R are called rings. R is called a set of axioms or a set of defining equations for rings.

SLIDE 43

(LMCS, p. 154-155) III.43 Boolean Algebras

We choose BA to be the following set of equations in the language L_BA:

B1. x ∨ y ≈ y ∨ x                          commutative
B2. x ∧ y ≈ y ∧ x                          commutative
B3. x ∨ (y ∨ z) ≈ (x ∨ y) ∨ z              associative
B4. x ∧ (y ∧ z) ≈ (x ∧ y) ∧ z              associative
B5. x ∧ (x ∨ y) ≈ x                        absorption
B6. x ∨ (x ∧ y) ≈ x                        absorption
B7. x ∧ (y ∨ z) ≈ (x ∧ y) ∨ (x ∧ z)        distributive
B8. x ∨ x′ ≈ 1
B9. x ∧ x′ ≈ 0
B10. x ∨ 1 ≈ 1
B11. x ∧ 0 ≈ 0

All algebras B = (B, ∨, ∧, ′, 0, 1) that satisfy BA are called Boolean algebras. BA is called a set of axioms or a set of defining equations for Boolean algebra.
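As a sanity check, one can verify by exhaustive evaluation that the two–element algebra on {0, 1} is a model of BA. The sketch below is illustrative; encoding join, meet, and complement with Python integer operations is an assumption of the sketch, as are the names `join`, `meet`, `comp`, and `axioms`.

```python
from itertools import product

# Assumed encoding of the two-element Boolean algebra on {0, 1}.
join = lambda x, y: x | y
meet = lambda x, y: x & y
comp = lambda x: 1 - x

axioms = {
    "B1": lambda x, y, z: join(x, y) == join(y, x),
    "B2": lambda x, y, z: meet(x, y) == meet(y, x),
    "B3": lambda x, y, z: join(x, join(y, z)) == join(join(x, y), z),
    "B4": lambda x, y, z: meet(x, meet(y, z)) == meet(meet(x, y), z),
    "B5": lambda x, y, z: meet(x, join(x, y)) == x,
    "B6": lambda x, y, z: join(x, meet(x, y)) == x,
    "B7": lambda x, y, z: meet(x, join(y, z)) == join(meet(x, y), meet(x, z)),
    "B8": lambda x, y, z: join(x, comp(x)) == 1,
    "B9": lambda x, y, z: meet(x, comp(x)) == 0,
    "B10": lambda x, y, z: join(x, 1) == 1,
    "B11": lambda x, y, z: meet(x, 0) == 0,
}

# An axiom fails if some assignment of x, y, z in {0, 1} violates it.
failures = [name for name, law in axioms.items()
            if not all(law(x, y, z) for x, y, z in product((0, 1), repeat=3))]
print(failures)
```

An empty list of failures means every equation of BA holds under all eight assignments.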

SLIDE 44

(LMCS, p. 156) III.44 Semigroups The language LSG = {·} consists of a single binary operation. SG has only one equation, the associative law: SG1 : (x · y) · z ≈ x · (y · z). Models of SG are called semigroups. SG axiomatizes or defines the class of semigroups.

SLIDE 45

(LMCS, p. 156-157) III.45 Monoids LM is {·, 1}, where · is binary and 1 is a constant symbol. M consists of: MO1 : x · 1 ≈ x MO2 : 1 · x ≈ x MO3 : (x · y) · z ≈ x · (y · z) . Any algebra A that satisfies M is called a monoid. M is a set of axioms or defining equations for monoids.

SLIDE 46

(LMCS, p. 157-158) III.46 Groups

L_G = {·, ⁻¹, 1}, where · is binary, ⁻¹ is unary, and 1 is a constant symbol. G is the set of equations:

G1 : x · 1 ≈ x
G2 : x · x⁻¹ ≈ 1
G3 : (x · y) · z ≈ x · (y · z).

There is also an additive notation for groups, with the language {+, −, 0}; it is usually reserved for groups that are commutative:

G1′ : x + 0 ≈ x
G2′ : x + (−x) ≈ 0
G3′ : (x + y) + z ≈ x + (y + z)
G4′ : x + y ≈ y + x.

SLIDE 47 (LMCS, p. 158-159) III.47 Three Very Basic Properties of Equations (≈ behaves like an equivalence relation)

- A ⊨ s ≈ s
- A ⊨ s ≈ t implies A ⊨ t ≈ s
- A ⊨ s1 ≈ s2 and A ⊨ s2 ≈ s3 implies A ⊨ s1 ≈ s3.

SLIDE 48 (LMCS, p. 158-159) III.48 Similar results hold for consequences. (Recall the definition of S ⊨ s ≈ t from slide III.33, namely that any algebra that satisfies S will also satisfy s ≈ t.)

- S ⊨ s ≈ s
- S ⊨ s ≈ t implies S ⊨ t ≈ s
- S ⊨ s1 ≈ s2 and S ⊨ s2 ≈ s3 implies S ⊨ s1 ≈ s3.

SLIDE 49 (LMCS, p. 161) III.49 Arguments that are Valid in A

Given an algebra A, an argument

s1 ≈ t1, · · · , sn ≈ tn ∴ s ≈ t

is valid in A (or correct in A) provided either

- some premiss si ≈ ti does not hold in A, or
- the conclusion s ≈ t holds in A,

i.e., if all the premisses hold in A, then so does the conclusion.

SLIDE 50

(LMCS, p. 163) III.50 Let A be the two–element Boolean algebra. To check the validity of the argument

x ∨ y ≈ y ∨ x, x ∧ (y ∨ x) ≈ x ∴ x ∨ x′ ≈ x ∧ x′

we form an evaluation table:

      s1     t1     s2           t2  s       t
x  y  x ∨ y  y ∨ x  x ∧ (y ∨ x)  x   x ∨ x′  x ∧ x′
0  0  0      0      0            0   1       0
0  1  1      1      0            0   1       0
1  0  1      1      1            1   1       0
1  1  1      1      1            1   1       0

Both premisses hold in A (the s1 and t1 columns agree, as do the s2 and t2 columns), but the s and t columns differ. Thus this is not a valid argument in A.

SLIDE 51

(LMCS, p. 163) III.51 Valid Equational Arguments An argument s1 ≈ t1, · · · , sn ≈ tn

∴ s ≈ t,

is valid (or correct) provided it is valid in every algebra A. Of course this becomes impossible to check using evaluation tables because there are an infinite number of algebras to examine.

SLIDE 52

(LMCS, p. 163) III.52 So how do we ever verify that an equational argument is valid? There are two ways. One is to use a proof system that we will soon study. The other is to study abstract algebra to learn special methods that can aid in a semantic analysis of validity.

SLIDE 53 (LMCS, p. 164) III.53 Refuting an Equational Argument An equational argument s1 ≈ t1, · · · , sn ≈ tn

∴ s ≈ t

is not valid iff one can find an algebra A such that

- all the premisses hold in A,

- but the conclusion does not hold.

Such an A is called a counterexample to the argument.

SLIDE 54 (LMCS, p. 164) III.54 One-Element Algebras

We can never use a one–element algebra to refute an equational argument because in a one–element algebra all equations are true (there is only one value for the term functions to take). So to find a counterexample to an equational argument one must look to algebras with more than one element.

SLIDE 55

(LMCS, p. 164) III.55 Example Show that the argument

x · y ≈ y · x ∴ x · x ≈ x

is not valid. We need to find a binary algebra that is commutative but not idempotent. The following two–element binary algebra does the job:

·   a  b
a   a  a
b   a  a

SLIDE 56

(LMCS, p. 164-165) III.56 Example Show that the argument

fffx ≈ fx ∴ ffx ≈ fx

is not valid. The following two–element monounary algebra provides a counterexample:

x   f(x)
a   b
b   a

Such examples we usually discover by drawing pictures such as the following:

[Figure: directed graph of f on {a, b}, with an arrow from a to b and an arrow from b to a.]
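Small counterexamples of this kind can also be found by exhaustive search. The sketch below is an illustration, not the method of the text: it enumerates every unary operation on a two-element set and keeps those that satisfy the premiss fffx ≈ fx but not the conclusion ffx ≈ fx. The names `elements` and `counterexamples` are assumptions of the sketch.

```python
from itertools import product

elements = ("a", "b")
counterexamples = []
for images in product(elements, repeat=len(elements)):
    f = dict(zip(elements, images))      # one unary operation on {a, b}
    premiss = all(f[f[f[x]]] == f[x] for x in elements)
    conclusion = all(f[f[x]] == f[x] for x in elements)
    if premiss and not conclusion:
        counterexamples.append(f)

print(counterexamples)
```

Among the four unary operations on {a, b}, only the one swapping a and b satisfies the premiss while violating the conclusion.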

SLIDE 57 (LMCS, p. 168) III.57 What is Substitution?

The word substitution encompasses two quite distinct kinds of substitution. One is uniform substitution for variables. For example, from

x + y ≈ y + x (*)

we can deduce

(x · y) + (z + z) ≈ (z + z) + (x · y)

by substituting in (*) as follows:

- the term x · y for every occurrence of x
- the term z + z for every occurrence of y.

SLIDE 58 (LMCS, p. 168) III.58 The other use of substitution is to substitute one term for an occurrence of another term. For example, from

x + y ≈ y + x

we can also deduce

(u · (x + y)) + (z + z) ≈ (u · (y + x)) + (z + z)

Notice that we replaced the term x + y on the left with the term y + x on the right.

SLIDE 59 (LMCS, p. 168) III.59 Since these two kinds of substitution are so different we will use the word substitution only for the first kind, that is, for the uniform substitution for variables; and call the second kind of substitution replacement.

SLIDE 60

(LMCS, p. 168) III.60 Substitution

Given a term s(x1, . . . , xn) and terms t1, . . . , tn, the expression s(t1, . . . , tn) denotes the result of simultaneously substituting ti for xi in s. The notation we use for the substitution is

( x1 ← t1, . . . , xn ← tn ).

SLIDE 61 (LMCS, p. 168) III.61 Example

Let s(x, y) be x + y. Applying the substitution

( x ← x · z, y ← x + y )

to s(x, y) gives s(x · z, x + y), which is (x · z) + (x + y).
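Simultaneous substitution is a single pass over the term tree. A minimal sketch, assuming terms are nested tuples `(symbol, subterm, ...)` with variables as strings and a substitution as a dictionary; the function name `substitute` is hypothetical.

```python
def substitute(term, sigma):
    """Apply the substitution sigma (variable -> term) simultaneously to term."""
    if isinstance(term, str):                  # a variable: replace it if sigma says so
        return sigma.get(term, term)
    symbol, *args = term
    return (symbol, *(substitute(a, sigma) for a in args))

s = ("+", "x", "y")                            # s(x, y) = x + y
sigma = {"x": ("*", "x", "z"),                 # x ← x · z
         "y": ("+", "x", "y")}                 # y ← x + y
print(substitute(s, sigma))
```

Because the inserted terms are not traversed again, the x inside x · z is left alone. This is what makes the substitution simultaneous rather than sequential.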

SLIDE 62

(LMCS, p. 168-169) III.62 We can illustrate the substitution procedure with a picture.

[Figure: the tree of s(x, y) = x + y beside the tree of s(x · z, x + y) = (x · z) + (x + y), in which the leaves x and y have been replaced by the trees of x · z and x + y.]

As you can see, substitution makes the tree grow downwards from the bottom. Substitution can never decrease the tree size.

SLIDE 63

(LMCS, p. 169) III.63 Substitution Theorems

- A ⊨ p(x1, . . . , xn) ≈ q(x1, . . . , xn) implies A ⊨ p(t1, . . . , tn) ≈ q(t1, . . . , tn).
- S ⊨ p(x1, . . . , xn) ≈ q(x1, . . . , xn) implies S ⊨ p(t1, . . . , tn) ≈ q(t1, . . . , tn).

SLIDE 64 (LMCS, p. 171-172) III.64 Replacement

Starting with a term p(· · · s · · · ) with an occurrence of a subterm s in it, we replace s with another term t and obtain p(· · · t · · · ).

Example Replacing the second occurrence (from the left) of x + y in

((x + y) · y) + (y + (x + y))

with the term u · v gives the term

((x + y) · y) + (y + (u · v))

SLIDE 65

(LMCS, p. 171-172) III.65 We can visualize this by the use of trees:

[Figure: the tree of ((x + y) · y) + (y + (x + y)) and the tree of ((x + y) · y) + (y + (u · v)); the subtree for the second occurrence of x + y has been replaced by the subtree for u · v.]

Replacement has the effect of replacing one part of the tree. The replacement part can be larger or smaller than the original. Replacement can increase or decrease the size of the tree.

SLIDE 66

(LMCS, p. 173-174) III.66 Replacement Theorems

- A ⊨ s ≈ t implies A ⊨ p(· · · s · · · ) ≈ p(· · · t · · · ).
- S ⊨ s ≈ t implies S ⊨ p(· · · s · · · ) ≈ p(· · · t · · · ).

SLIDE 67

(LMCS, p. 175) III.67 The Syntactic Viewpoint: Birkhoff’s Rules

Each rule is written in the form premisses ∴ conclusion.

- Reflexive: ∴ s ≈ s. Example: ∴ x + y ≈ x + y.
- Symmetric: s ≈ t ∴ t ≈ s. Example: x ≈ x · x ∴ x · x ≈ x.
- Transitive: r ≈ s, s ≈ t ∴ r ≈ t. Example: x ≈ x · x, x · x ≈ 1 ∴ x ≈ 1.
- Substitution: r(x1, . . . , xn) ≈ s(x1, . . . , xn) ∴ r(t1, . . . , tn) ≈ s(t1, . . . , tn). Example: x · 1 ≈ x ∴ (x · x) · 1 ≈ x · x.
- Replacement: s ≈ t ∴ r(· · · s · · · ) ≈ r(· · · t · · · ). Example: x · x ≈ x ∴ (x · x) + x ≈ x + x.

SLIDE 68 (LMCS, p. 175) III.68 Derivation of Equations

A derivation of an equation s ≈ t from S is a sequence s1 ≈ t1, . . . , sn ≈ tn of equations such that each equation is either (1) a member of S, or (2) the result of applying a rule of inference to previous members of the sequence, and the last equation is s ≈ t. We write S ⊢ s ≈ t if such a derivation exists.

SLIDE 69

(LMCS, p. 176) III.69 Example A derivation to witness fffx ≈ fy ⊢ fx ≈ fy is given by

1. fffx ≈ fy    given
2. fffx ≈ fx    subs 1
3. fx ≈ fffx    symm 2
4. fx ≈ fy      trans 1,3

SLIDE 70

(LMCS, p. 176) III.70 Example Show fgx ≈ x, ggx ≈ x ⊢ fx ≈ gx.

1. fgx ≈ x      given
2. ggx ≈ x      given
3. fggx ≈ gx    subs 1
4. fggx ≈ fx    repl 2
5. fx ≈ fggx    symm 4
6. fx ≈ gx      trans 3,5

SLIDE 71 (LMCS, p. 176-177) III.71 Example Show R ⊢ x · 0 ≈ 0.

1. x + 0 ≈ x    given (R1)
2. 0 + 0 ≈ 0    subs 1
3. x · (0 + 0) ≈ x · 0    repl 2
4. x · (y + z) ≈ x · y + x · z    given (R8)
5. x · (0 + 0) ≈ x · 0 + x · 0    subs 4
6. x · 0 + x · 0 ≈ x · (0 + 0)    symm 5
7. x · 0 + x · 0 ≈ x · 0    trans 3,6
8. (x · 0 + x · 0) + (−(x · 0)) ≈ x · 0 + (−(x · 0))    repl 7
9. x + (−x) ≈ 0    given (R2)
10. x · 0 + (−(x · 0)) ≈ 0    subs 9
11. (x · 0 + x · 0) + (−(x · 0)) ≈ 0    trans 8,10
12. x + (y + z) ≈ (x + y) + z    given (R4)
13. x · 0 + (x · 0 + (−(x · 0))) ≈ (x · 0 + x · 0) + (−(x · 0))    subs 12
14. x · 0 + (x · 0 + (−(x · 0))) ≈ 0    trans 11,13
15. x · 0 + (−(x · 0)) ≈ 0    subs 9
16. x · 0 + (x · 0 + (−(x · 0))) ≈ (x · 0) + 0    repl 15
17. (x · 0) + 0 ≈ x · 0    subs 1
18. x · 0 + (x · 0 + (−(x · 0))) ≈ x · 0    trans 16,17
19. x · 0 ≈ x · 0 + (x · 0 + (−(x · 0)))    symm 18
20. x · 0 ≈ 0    trans 14,19

SLIDE 72 (LMCS, p. 183-185) III.72 Soundness and Completeness

Birkhoff’s Rules are

- sound: S ⊢ s ≈ t implies S ⊨ s ≈ t
- complete: S ⊨ s ≈ t implies S ⊢ s ≈ t

Propositional logic has many competing proof systems. In equational logic we prefer Birkhoff’s rules.

SLIDE 73

(LMCS, p. 183-185) III.73 Determining Validity In the propositional logic we have (often very slow) methods to check the validity of an argument. Equational logic has no such algorithm! This does not mean that we have not yet found an algorithm, but rather that it simply does not exist.

SLIDE 74 (LMCS, p. 185-186) III.74 Summary of Our Methods for Analyzing Arguments

s1 ≈ t1, · · · , sn ≈ tn

∴ s ≈ t

is valid iff there is a derivation of the conclusion from the premisses, that is, iff s1 ≈ t1, · · · , sn ≈ tn ⊢ s ≈ t. Thus we look for a derivation to show an argument is valid.

SLIDE 75 (LMCS, p. 185-186) III.75

s1 ≈ t1, · · · , sn ≈ tn

∴ s ≈ t

is invalid iff there is a counterexample A, that is, iff some A makes the premisses true and the conclusion false. Thus we can show an argument is not valid by finding a counterexample.

SLIDE 76 (LMCS, p. 190) III.76 Unification

One of the most popular and powerful tools of automated theorem proving is an algorithm to find most general unifiers. Let s(x1, . . . , xn) and s′(x1, . . . , xn) be two terms. A unifier of s and s′ is a substitution

( x1 ← t1, . . . , xn ← tn )

such that s(t1, . . . , tn) = s′(t1, . . . , tn). After the substitution has been carried out, the two terms have become the same term. If a unifier can be found for s and s′, we say they can be unified, or they are unifiable.

SLIDE 77 (LMCS, p. 190) III.77 Example

Let s(x, y) = (x + y) · x and t(x, y) = (y + x) · y. There are many unifiers of s and t, e.g.,

( x ← 1, y ← 1 ) and ( x ← u · u, y ← u · u ).

However the substitution

( x ← y, y ← y )

is a unifier that is more general than the preceding two examples.

SLIDE 78 (LMCS, p. 191) III.78 Most General Unifiers If two terms s, t have a unifier µ such that every unifier of s, t is an instance of µ then we say µ is a most general unifier of s, t. Theorem (Robinson, 1965)

- If two terms are unifiable then they have a

most general unifier.

- The most general unifier of two terms is

(essentially) unique.

- There is an algorithm to determine if two

terms are unifiable, and if so the algorithm produces the most general unifier.

SLIDE 79

(LMCS, p. 192) III.79 Critical Subterms

The critical subterms of s, t are the first subterms where s, t disagree.

Example For the terms

s = (x · y) + ((x + y) · x)
t = (x · y) + ((y · (x + y)) · y)

the critical subterms are

s′ = x + y
t′ = y · (x + y).

SLIDE 80 (LMCS, p. 192-193) III.80 Critical Subterm Condition (CSC)

The critical subterm condition (CSC) is satisfied by s and t if their critical subterms s′ and t′ consist of:

- a variable, say x, and
- another term, say r, that has no occurrence of x in it.

SLIDE 81

(LMCS, p. 192-193) III.81 Unification Algorithm:

let µ be the identity substitution
WHILE s ≠ t
    find the critical subterms s′, t′
    if the CSC fails, return NOT UNIFIABLE
    else with {s′, t′} = {x, r}
        apply (x ← r) to both s and t
        apply (x ← r) to µ
ENDWHILE
return (µ)
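The loop can be sketched in Python as below. This is an illustrative reading of the pseudocode rather than a reference implementation: terms are assumed to be nested tuples `(symbol, subterm, ...)` with variables as strings, µ is kept as a dictionary, and all function names are hypothetical. The function returns `None` where the pseudocode returns NOT UNIFIABLE.

```python
def substitute(term, sigma):
    """Apply the substitution sigma (variable -> term) simultaneously."""
    if isinstance(term, str):
        return sigma.get(term, term)
    return (term[0], *(substitute(a, sigma) for a in term[1:]))

def occurs(x, term):
    """Does the variable x occur in term?"""
    if isinstance(term, str):
        return term == x
    return any(occurs(x, a) for a in term[1:])

def critical_subterms(s, t):
    """Return the first pair of subterms where s and t disagree (None if s == t)."""
    if s == t:
        return None
    if isinstance(s, str) or isinstance(t, str) or s[0] != t[0] or len(s) != len(t):
        return s, t
    for a, b in zip(s[1:], t[1:]):
        pair = critical_subterms(a, b)
        if pair is not None:
            return pair

def unify(s, t):
    mu = {}                                    # the identity substitution
    while s != t:
        x, r = critical_subterms(s, t)
        if isinstance(r, str) and not isinstance(x, str):
            x, r = r, x                        # put the variable first
        if not isinstance(x, str) or occurs(x, r):
            return None                        # the CSC fails
        step = {x: r}
        s, t = substitute(s, step), substitute(t, step)
        mu = {v: substitute(u, step) for v, u in mu.items()}
        mu[x] = r                              # mu becomes (x ← r)mu
    return mu

# x + (y · x) and (y · y) + z
s = ("+", "x", ("*", "y", "x"))
t = ("+", ("*", "y", "y"), "z")
print(unify(s, t))
```

Variables that µ leaves fixed are simply absent from the dictionary, so the identity substitution is the empty dictionary.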

SLIDE 82

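(LMCS, p. 193) III.82 A picture of a possible single step of the unification algorithm when the CSC holds:

[Figure: the trees of s and t with critical subterms x and r; applying (x ← r) replaces every occurrence of x in both trees by r.]

In this single step the substitution µ changes to (x ← r)µ.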

SLIDE 83

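(LMCS, p. 195) III.83 Example Apply the unification algorithm to x + (y · x) and (y · y) + z:

Start: s = x + (y · x), t = (y · y) + z, µ = identity.

Step 1: the critical subterms are x and y · y. Applying (x ← y · y) gives s = (y · y) + (y · (y · y)), t = (y · y) + z, µ = (x ← y · y).

Step 2: the critical subterms are y · (y · y) and z. Applying (z ← y · (y · y)) gives s = t = (y · y) + (y · (y · y)).

Result: µ = ( x ← y · y, z ← y · (y · y) ).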

SLIDE 84

(LMCS, p. 198-199) III.84 Composition of Substitutions

Given two substitutions σ, σ′, the composition of the two is defined by (σ′σ)(t) = σ′(σ(t)).

Example

σ = ( x ← x + y, y ← x · y ) and σ′ = ( x ← y + x, y ← x + x )

leads to

σ′σ = ( x ← (y + x) + (x + x), y ← (y + x) · (x + x) ).
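Composition can be computed by applying σ′ to every right-hand side of σ. A minimal sketch, assuming substitutions are dictionaries from variable names to tuple-encoded terms; `substitute` and `compose` are hypothetical names.

```python
def substitute(term, sigma):
    """Apply the substitution sigma (variable -> term) simultaneously."""
    if isinstance(term, str):
        return sigma.get(term, term)
    return (term[0], *(substitute(a, sigma) for a in term[1:]))

def compose(sigma2, sigma1):
    """Return the substitution that sends t to sigma2(sigma1(t))."""
    result = {v: substitute(r, sigma2) for v, r in sigma1.items()}
    for v, r in sigma2.items():
        result.setdefault(v, r)          # variables that sigma1 leaves alone
    return result

sigma = {"x": ("+", "x", "y"), "y": ("*", "x", "y")}        # σ
sigma_prime = {"x": ("+", "y", "x"), "y": ("+", "x", "x")}  # σ′
print(compose(sigma_prime, sigma))
```

Applied to the example above, the result maps x to (y + x) + (x + x) and y to (y + x) · (x + x).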

SLIDE 85 (LMCS, p. 208) III.85 Term Rewrite Systems (TRS’s)

A term rewrite (rule) is an expression s → t, sometimes called a directed equation, where s and t are terms. A term rewrite system, abbreviated TRS, is a set R of term rewrite rules.

Example A simple system R of term rewrite rules used for monoids is

R = { (x · y) · z → x · (y · z), 1 · x → x, x · 1 → x }.

SLIDE 86 (LMCS, p. 208) III.86 Elementary Rewrites

An elementary rewrite obtained from s → t is a rewrite of the form

r(· · · σs · · · ) → r(· · · σt · · · ),

where σ is a substitution, r is a term, and one occurrence of σs is replaced by σt.

Example 1 · (x · (y · z)) → x · (y · z) is an elementary rewrite obtained from 1 · x → x by using the substitution (x ← x · (y · z)).

SLIDE 87 (LMCS, p. 208-209) III.87 If R is a set of term rewrite rules then s →_R t means s → t is an elementary rewrite of some term rewrite p → q in R. We write s →_R^+ t if there is a finite sequence of elementary rewrites such that

s = t1 →_R t2 →_R · · · →_R tn = t.

The notation s →_R^* t means s = t or s →_R^+ t holds.

SLIDE 88

(LMCS, p. 208-209) III.88 Example Using the monoid rules R we have

((x · 1) · (1 · y)) · (z · 1) →_R^+ x · (y · z),

since

((x · 1) · (1 · y)) · (z · 1) →_R (x · (1 · y)) · (z · 1)
(x · (1 · y)) · (z · 1) →_R (x · y) · (z · 1)
(x · y) · (z · 1) →_R (x · y) · z
(x · y) · z →_R x · (y · z)
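Repeated elementary rewriting can be sketched as follows. The tuple encoding of terms, the rule list `R`, and the strategy of trying the root before the subterms are all assumptions of this sketch, so it may pass through different intermediate terms than the sequence shown above.

```python
def match(pattern, term, binding):
    """Extend binding so that the instantiated pattern equals term, else None."""
    if isinstance(pattern, str):                       # a rule variable
        if pattern in binding:
            return binding if binding[pattern] == term else None
        return {**binding, pattern: term}
    if isinstance(term, str) or pattern[0] != term[0] or len(pattern) != len(term):
        return None
    for p, a in zip(pattern[1:], term[1:]):
        binding = match(p, a, binding)
        if binding is None:
            return None
    return binding

def substitute(term, sigma):
    if isinstance(term, str):
        return sigma.get(term, term)
    return (term[0], *(substitute(a, sigma) for a in term[1:]))

def rewrite_once(term, rules):
    """Perform one elementary rewrite, or return None if term is terminal."""
    for lhs, rhs in rules:                             # try the whole term first
        binding = match(lhs, term, {})
        if binding is not None:
            return substitute(rhs, binding)
    if not isinstance(term, str):                      # then the subterms, left to right
        for i in range(1, len(term)):
            new = rewrite_once(term[i], rules)
            if new is not None:
                return term[:i] + (new,) + term[i + 1:]
    return None

def normalize(term, rules):
    """Rewrite until no rule applies (assumes the rules are terminating)."""
    while (new := rewrite_once(term, rules)) is not None:
        term = new
    return term

ONE = ("1",)
mul = lambda a, b: ("*", a, b)
R = [(mul(mul("x", "y"), "z"), mul("x", mul("y", "z"))),
     (mul(ONE, "x"), "x"),
     (mul("x", ONE), "x")]

start = mul(mul(mul("x", ONE), mul(ONE, "y")), mul("z", ONE))
print(normalize(start, R))
```

For the monoid rules the loop stops because every rewrite sequence of this system is finite. For an arbitrary rule set `normalize` may not terminate.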

SLIDE 89 (LMCS, p. 209) III.89 Terminating TRS’s

- A TRS R is terminating if every sequence of elementary rewrites s1 →_R s2 →_R · · · eventually stops.
- A term s is terminal if no elementary rewrite s →_R t is possible.

SLIDE 90 (LMCS, p. 209) III.90 Example The monoid example R is terminating. The terminal terms are either 1 or terms of the form x · (y · (· · · (z · w) · · · )). For example we have the terminating sequence

((x · y) · u) · v →_R (x · y) · (u · v) →_R x · (y · (u · v))

SLIDE 91 (LMCS, p. 209) III.91 Two Necessary Conditions for Terminating

- No rule in R is of the form x → t. For otherwise this rule could be applied to any term s, so no term would be terminal.
- If s → t is in R then the variables of t are also variables of s. For otherwise a substitution into s → t would give an elementary rewrite of the form s → t′ that has s as a subterm of t′, and this would permit an infinitely long sequence of rewrites.

SLIDE 92 (LMCS, p. 210) III.92 The Length of a Term

|t| is the length of t (the number of symbols in the prefix form of t). |t|_x is the x–length of t, the number of times the variable x occurs in t. Thus for t = (x · y) + (x · z) we have |t| = 7 and |t|_x = 2.

SLIDE 93 (LMCS, p. 210) III.93 Theorem Let R be a term rewrite system with the property that for each s → t ∈ R we have

|s| > |t| and |s|_x ≥ |t|_x for every variable x.

Then R is a terminating TRS. (In this situation all of the elementary rewrites s →_R t are length-reducing.)
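The length test is easy to automate. The sketch below is illustrative and assumes the condition is that each rule s → t has |s| > |t| and at least as many occurrences of every variable on the left as on the right. Terms are nested tuples with variables as strings, and all function names are hypothetical.

```python
def length(term):
    """|t|: the number of symbols in the prefix form of the term."""
    if isinstance(term, str):
        return 1
    return 1 + sum(length(a) for a in term[1:])

def x_length(term, x):
    """|t|_x: the number of occurrences of the variable x in the term."""
    if isinstance(term, str):
        return int(term == x)
    return sum(x_length(a, x) for a in term[1:])

def variables(term):
    if isinstance(term, str):
        return {term}
    return set().union(*(variables(a) for a in term[1:]))

def length_reducing(rules):
    """Check |s| > |t| and |s|_x >= |t|_x for every rule s → t and variable x."""
    return all(
        length(s) > length(t)
        and all(x_length(s, x) >= x_length(t, x)
                for x in variables(s) | variables(t))
        for s, t in rules
    )

t = ("+", ("*", "x", "y"), ("*", "x", "z"))     # (x · y) + (x · z)
print(length(t), x_length(t, "x"))

ONE = ("1",)
print(length_reducing([(("*", "x", ONE), "x"), (("*", ONE, "x"), "x")]))
```

The test is only a sufficient condition. The associativity rule (x · y) · z → x · (y · z) keeps the length at 5, so it fails the test even though the monoid system is terminating.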

SLIDE 94 (LMCS, p. 211) III.94 Applications The following TRS’s are terminating:

→ 0}

→ x, 1 · x − → x}

→ x}

→ x ∧ y}

→ x ∨ x′}

→ gfx, fgx − → fx}.

SLIDE 95

(LMCS, p. 211) III.95 Normal Form TRS’s

A normal form TRS is a uniquely terminating TRS. For such a TRS the terminating form for any given term s is called the normal form of s, and written n_R(s). A normal form TRS R is a normal form TRS for a set E of equations if

E ⊨ s ≈ t iff n_R(s) = n_R(t).

SLIDE 96

(LMCS, p. 211) III.96 Example R = {ffx → x} is a normal form TRS. The normal form of fffx is fx.

Example

R = { (x · y) · z → x · (y · z), x · 1 → x, 1 · x → x }

is a normal form TRS (for monoids). The normal form of ((x · 1) · (1 · y)) · z is x · (y · z).

SLIDE 97

(LMCS, p. 212-213) III.97 A Normal Form TRS for Groups (Knuth and Bendix, 1967)

E:
1 · x ≈ x
x⁻¹ · x ≈ 1
(x · y) · z ≈ x · (y · z)

R:
1⁻¹ → 1
x · 1 → x
1 · x → x
(x⁻¹)⁻¹ → x
x⁻¹ · x → 1
x · x⁻¹ → 1
x⁻¹ · (x · y) → y
x · (x⁻¹ · y) → y
(x · y)⁻¹ → y⁻¹ · x⁻¹
(x · y) · z → x · (y · z)

In this case it is not immediate that the system R is terminating, much less a normal form TRS.

SLIDE 98

(LMCS, p. 213) III.98 Converting R into an equational theory

Let E(R) = {s ≈ t : s → t ∈ R}.

Proposition If R is a normal form TRS, then it is a normal form TRS for E(R).

Thus, for example, once we show that R = {(x · y) · z → x · (y · z)} is a normal form TRS, then it follows that it is a normal form TRS for semigroups.

SLIDE 99 (LMCS, p. 215) III.99 Critical Pairs

Given s1 → t1 and s2 → t2. Rename the variables in one of them (if necessary) so that s1 and s2 have no variables in common. Choose an occurrence of a nonvariable subterm s′1 of s1 such that s′1, s2 are unifiable. Find the most general unifier µ of s′1 and s2. Two rewrites of µs1 give a critical pair.

SLIDE 100

(LMCS, p. 216) III.100 Given: two rewrite rules with disjoint variables,

s1 → t1 and s2 → t2.

Find µ, the most general unifier of s′1 and s2. Then apply it to both rules:

µs1 → µt1 and µs2 → µt2.

[Figure: the trees of s1 (containing the subterm s′1) and s2 before and after applying µ; afterwards µs′1 = µs2.]

SLIDE 101

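(LMCS, p. 216) III.101 Creating the Critical Pair

[Figure: starting from µs1, using the first rule rewrites the whole term to µt1, while using the second rule rewrites the occurrence of µs′1 = µs2 inside µs1 to µt2. The two resulting terms are a critical pair.]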

SLIDE 102

(LMCS, p. 216) III.102 Example: Find the critical pair for

y + (f(x) + y) → f(x + y)
x + g(y) → g(x + y)

Changing variables we have

y + (f(x) + y) → f(x + y)
u + g(v) → g(u + v)

A most general unifier of the subterm f(x) + y and u + g(v):

µ = ( u ← f(x), y ← g(v) ).

Apply this to the original rewrites:

g(v) + (f(x) + g(v)) → f(x + g(v))
f(x) + g(v) → g(f(x) + v).

Thus the critical pair is ( f(x + g(v)), g(v) + g(f(x) + v) ).

SLIDE 103

(LMCS, p. 223-224) III.103 A TRS R is confluent on critical pairs if, given any critical pair (s, t), there is a term r such that s →_R^* r and t →_R^* r.

Critical Pairs Lemma (Knuth and Bendix) Suppose R is a terminating TRS. Then R is a normal form TRS iff R is confluent on critical pairs.

SLIDE 104 (LMCS, p. 223-224) III.104 Corollary Let R be a term rewrite system with the property that

- for each s → t ∈ R we have |s| > |t| and |s|_x ≥ |t|_x for every variable x, and
- R is confluent on critical pairs.

Then R is a normal form TRS.

SLIDE 105

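(LMCS, p. 224) III.105 Example

Consider R = {fgfx → gx}, a terminating TRS. Renaming the variables in a second copy of the rule gives

fgfx → gx
fgfu → gu.

The subterm fx of fgfx unifies with fgfu, with most general unifier µ = (x ← gfu). Applying µ:

fgfgfu → ggfu
fgfu → gu

The critical pair is (ggfu, fggu). Neither term contains fgf, so both are terminal, and they are distinct. We do not have confluence for this critical pair, so the system R is not a normal form TRS.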