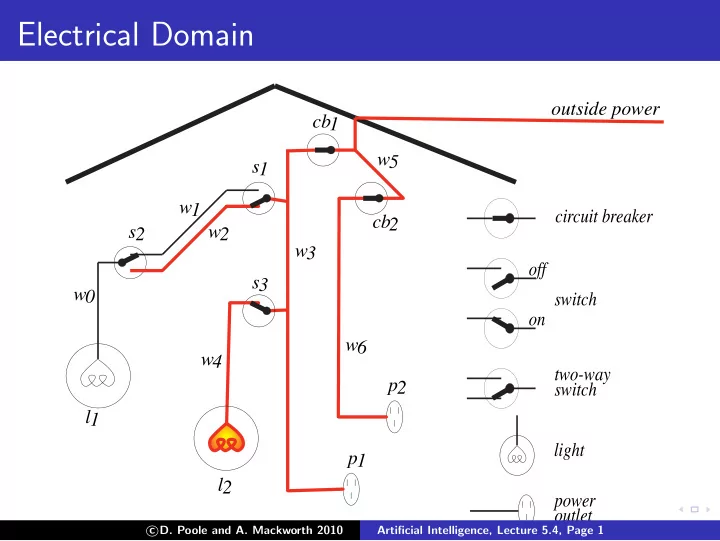

Electrical Domain

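Figure: the electrical domain. Legend: light, switch, two-way switch (with on and off positions), power outlet, circuit breaker, outside power. Labeled components: circuit breakers cb1 and cb2; switches s1, s2 and s3; wires w0 to w6; lights l1 and l2; power outlets p1 and p2.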

© D. Poole and A. Mackworth 2010

Artificial Intelligence, Lecture 5.4, Page 1

◮ They don’t know what information is needed.
◮ They don’t know what vocabulary to use.

◮ Goals for which the user isn’t expected to know the answer.
◮ Askable atoms that may be useful in the proof.
◮ Askable atoms that the user has already provided information about.
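
The goals listed above can be handled in a top-down (goal-directed) prover roughly as follows. This is a minimal Python sketch, not the lecture's procedure: it assumes definite clauses stored as a dict from each atom to a list of clause bodies (a fact has an empty body), an acyclic knowledge base, and askable atoms that have no clauses of their own. The atom names and the `ask_user` function, which answers from a fixed table instead of prompting a person, are hypothetical.

```python
from typing import Callable

# Hypothetical representation: atom -> list of clause bodies; a fact has body [].
Clauses = dict[str, list[list[str]]]


class AskingProver:
    """Top-down prover for definite clauses that asks about askable atoms
    and remembers each answer, so no atom is asked about twice."""

    def __init__(self, clauses: Clauses, askables: set[str],
                 ask: Callable[[str], bool]) -> None:
        self.clauses = clauses
        self.askables = askables
        self.ask = ask
        self.answers: dict[str, bool] = {}  # askable atoms already answered

    def prove(self, goal: str) -> bool:
        if goal in self.askables:
            if goal not in self.answers:
                # Askable atom not yet answered: ask and record the reply.
                self.answers[goal] = self.ask(goal)
            # Askable atom already answered: reuse the recorded reply.
            return self.answers[goal]
        # Goal the user isn't expected to know: try each clause in order,
        # proving body atoms left to right (assumes no cycles in the clauses).
        return any(all(self.prove(a) for a in body)
                   for body in self.clauses.get(goal, []))


# Hypothetical fragment loosely modeled on the electrical-domain figure.
clauses: Clauses = {
    "lit_l1": [["light_l1", "live_w0", "ok_l1"]],
    "live_w0": [["live_w1", "up_s2"], ["live_w2", "down_s2"]],
    "live_w1": [["live_w3", "up_s1"]],
    "live_w2": [["live_w3", "down_s1"]],
    "live_w3": [["live_w5", "ok_cb1"]],
    "live_w5": [["live_outside"]],
    "light_l1": [[]], "ok_l1": [[]], "ok_cb1": [[]], "live_outside": [[]],
}
askables = {"up_s1", "down_s1", "up_s2", "down_s2"}

observations = {"up_s1": True, "up_s2": True}  # hypothetical user replies
asked: list[str] = []


def ask_user(atom: str) -> bool:
    """Stand-in for prompting a person: logs the query, answers from a table."""
    asked.append(atom)
    return observations.get(atom, False)


prover = AskingProver(clauses, askables, ask_user)
print(prover.prove("lit_l1"))          # True
print(asked)                           # ['up_s1', 'up_s2']
print(prover.prove("live_w0"), asked)  # True ['up_s1', 'up_s2']
```

Because `answers` is consulted before `ask`, the second query reuses the earlier replies rather than asking again. A cyclic knowledge base would additionally need loop detection, which this sketch omits.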

◮ One of the $a_i$ is false in the intended interpretation, or
◮ All of the $a_i$ are true in the intended interpretation.
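
If a derived atom is false in the intended interpretation, the clause used to derive it has body atoms $a_i$ that fall into one of the two cases above, which suggests a recursive search: follow any body atom the user says is false, and stop at a clause whose body atoms are all true. A minimal Python sketch, assuming a hypothetical dict-of-bodies representation, an acyclic knowledge base, and an `is_true` function standing in for the user's answers about the intended interpretation; the knowledge base and its bug are made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical representation: atom -> list of clause bodies; a fact has body [].
Clauses = dict[str, list[list[str]]]


@dataclass
class Proof:
    atom: str                 # head of the clause used
    body: list[str]           # body of that clause
    subproofs: list["Proof"]  # one proof per body atom, in order


def prove(goal: str, clauses: Clauses) -> Optional[Proof]:
    """Top-down search returning a proof tree, or None (assumes no cycles)."""
    for body in clauses.get(goal, []):
        subproofs = []
        for atom in body:
            sub = prove(atom, clauses)
            if sub is None:
                break
            subproofs.append(sub)
        else:  # every body atom was proved
            return Proof(goal, body, subproofs)
    return None


def find_buggy_clause(proof: Proof,
                      is_true: Callable[[str], bool]) -> tuple[str, list[str]]:
    """Given a proof of an atom that is false in the intended interpretation,
    return a clause (head, body) that is false in that interpretation."""
    for sub in proof.subproofs:
        if not is_true(sub.atom):
            # Case 1: a body atom is false, so debug its proof instead.
            return find_buggy_clause(sub, is_true)
    # Case 2: all body atoms are true but the head is false: this clause is wrong.
    return proof.atom, proof.body


# Hypothetical buggy KB: live_w1 should need up_s1 but was written with down_s1.
clauses: Clauses = {
    "lit_l1": [["light_l1", "live_w0", "ok_l1"]],
    "live_w0": [["live_w1", "up_s2"]],
    "live_w1": [["live_w3", "down_s1"]],
    "live_w3": [["live_outside", "ok_cb1"]],
    "light_l1": [[]], "ok_l1": [[]], "ok_cb1": [[]], "live_outside": [[]],
    "down_s1": [[]], "up_s2": [[]],
}
# Atoms true in the (hypothetical) intended interpretation; s1 is down.
true_atoms = {"light_l1", "ok_l1", "ok_cb1", "live_outside",
              "down_s1", "up_s2", "live_w3"}

proof = prove("lit_l1", clauses)  # lit_l1 is derived, but it is false
print(find_buggy_clause(proof, lambda a: a in true_atoms))
# ('live_w1', ['live_w3', 'down_s1'])
```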

Figure: the electrical domain (repeated).

◮ One of the $a_i$ is true in the interpretation and could not be proved.
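
For a missing answer the search runs the other way. Starting from an atom that is true in the intended interpretation but could not be proved, look for a clause whose body atoms are all true; since that clause still failed, one of its body atoms is true but unprovable, so recurse on it. If no clause for the atom has an all-true body, the sketch reports that atom as lacking an appropriate clause. A minimal Python sketch, assuming a hypothetical dict-of-bodies representation, an acyclic knowledge base, and an `is_true` function standing in for the user; the example knowledge base is made up.

```python
from typing import Callable

# Hypothetical representation: atom -> list of clause bodies; a fact has body [].
Clauses = dict[str, list[list[str]]]


def provable(goal: str, clauses: Clauses) -> bool:
    """Top-down check that goal follows from the clauses (assumes no cycles)."""
    return any(all(provable(a, clauses) for a in body)
               for body in clauses.get(goal, []))


def find_missing(goal: str, clauses: Clauses,
                 is_true: Callable[[str], bool]) -> str:
    """goal is true in the intended interpretation but not provable.
    Return an atom that lacks an appropriate clause."""
    for body in clauses.get(goal, []):
        if all(is_true(a) for a in body):
            # This clause should have proved goal, so one of its body atoms
            # is true but could not be proved: debug that atom instead.
            for a in body:
                if not provable(a, clauses):
                    return find_missing(a, clauses, is_true)
    # No clause for goal has an all-true body: a clause for goal is missing.
    return goal


# Hypothetical KB in which the clause live_w5 <- live_outside was left out.
clauses: Clauses = {
    "lit_l1": [["light_l1", "live_w0", "ok_l1"]],
    "live_w0": [["live_w1", "up_s2"], ["live_w2", "down_s2"]],
    "live_w1": [["live_w3", "up_s1"]],
    "live_w2": [["live_w3", "down_s1"]],
    "live_w3": [["live_w5", "ok_cb1"]],
    "light_l1": [[]], "ok_l1": [[]], "ok_cb1": [[]], "live_outside": [[]],
    "up_s1": [[]], "up_s2": [[]],
}
# Atoms true in the (hypothetical) intended interpretation.
true_atoms = {"lit_l1", "light_l1", "ok_l1", "live_w0", "live_w1", "live_w3",
              "live_w5", "live_outside", "ok_cb1", "up_s1", "up_s2"}

print(provable("lit_l1", clauses))                                # False
print(find_missing("lit_l1", clauses, lambda a: a in true_atoms))  # live_w5
```

The returned atom only locates where a clause is needed; deciding what the missing clause should say is still up to the knowledge-base author.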
