SLIDE 1

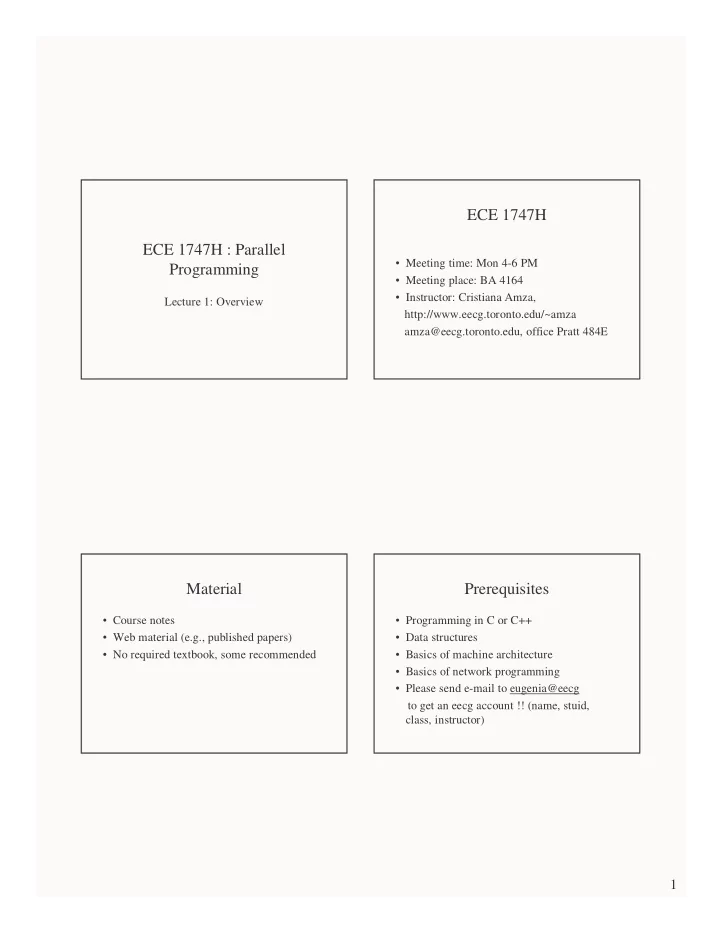

ECE 1747H: Parallel Programming

Lecture 1: Overview

ECE 1747H

- Meeting time: Mon 4-6 PM

- Meeting place: BA 4164

- Instructor: Cristiana Amza, http://www.eecg.toronto.edu/~amza, amza@eecg.toronto.edu, office Pratt 484E

Material

- Course notes

- Web material (e.g., published papers)

- No required textbook; some recommended texts

Prerequisites

- Programming in C or C++

- Data structures

- Basics of machine architecture

- Basics of network programming

- Please send e-mail to eugenia@eecg