Jian Pei: CMPT 459/741 Clustering (3) 1

Distance-based Methods: Drawbacks

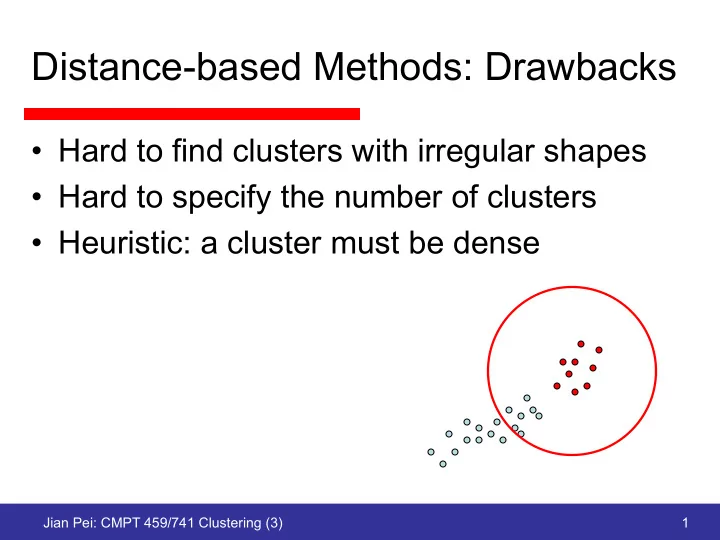

- Hard to find clusters with irregular shapes

- Hard to specify the number of clusters

- Heuristic: a cluster must be dense
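The "dense" heuristic can be made concrete in several ways. The sketch below is one possibility, not the slides' definition: it assumes a radius `eps` and a minimum neighbor count `min_pts` (both hypothetical parameters) and calls a point dense when at least `min_pts` points, itself included, lie within `eps` of it.

```python
import math

def is_dense(point, points, eps, min_pts):
    """Return True if at least min_pts points (the point itself included)
    lie within Euclidean distance eps of `point`.
    eps and min_pts are illustrative parameters, not taken from the slides."""
    count = sum(1 for q in points if math.dist(point, q) <= eps)
    return count >= min_pts

# Four points forming a small square plus one far-away point.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (10, 10)]
dense_flags = [is_dense(p, points, eps=1.5, min_pts=4) for p in points]
print(dense_flags)
```

With these toy values the four square corners count as dense and the isolated point does not, which matches the intuition that a cluster is a region where points are packed together.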

Multi-attribute hyperspace

Jian Pei: Big Data Analytics -- Clustering 24

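$$
\begin{pmatrix}
w_{11} & w_{12} & \cdots & w_{1m} \\
w_{21} & w_{22} & \cdots & w_{2m} \\
w_{31} & w_{32} & \cdots & w_{3m} \\
\vdots & \vdots & \ddots & \vdots \\
w_{n1} & w_{n2} & \cdots & w_{nm}
\end{pmatrix}
$$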

|     | ··· | b6  | ··· | b12 | ··· | b36 | ··· | b99 | ··· |
|-----|-----|-----|-----|-----|-----|-----|-----|-----|-----|
| a1  | ··· | 60  | ··· | 60  | ··· | 60  | ··· | 60  | ··· |
| ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· |
| a33 | ··· | 60  | ··· | 60  | ··· | 60  | ··· | 60  | ··· |
| ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· |
| a86 | ··· | 60  | ··· | 60  | ··· | 60  | ··· | 60  | ··· |
| ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· | ··· |

[Equation: terms subscripted ux, uy, vx, vy, i.e. the values of objects u and v on attributes x and y]

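| Attribute \ Objects | a  | b  | c  | d | e  | f  | g  | h  |
|---------------------|----|----|----|---|----|----|----|----|
| x                   | 13 | 11 | 9  | 7 | 9  | 13 | 2  | 15 |
| y                   | 7  | 4  | 10 | 1 | 12 | 3  | 4  | 7  |
| x - y               | 6  | 7  | -1 | 6 | -3 | 10 | -2 | 8  |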
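The x - y row can be recomputed directly from the x and y values in the table. The sketch below does that and then, as an illustration only, sorts the objects by their difference and lists the maximal runs whose differences span at most a threshold `delta`. The threshold and the helper name `maximal_windows` are assumptions made for this example; they do not come from the slides.

```python
objects = ["a", "b", "c", "d", "e", "f", "g", "h"]
x = [13, 11, 9, 7, 9, 13, 2, 15]
y = [7, 4, 10, 1, 12, 3, 4, 7]

# Difference x - y for every object, as in the last row of the table.
diff = {o: xi - yi for o, xi, yi in zip(objects, x, y)}

def maximal_windows(diff, delta):
    """List maximal groups of objects whose x - y values differ by at most delta.
    delta is a hypothetical similarity threshold chosen for illustration."""
    items = sorted(diff.items(), key=lambda kv: kv[1])
    windows, start = [], 0
    for end in range(len(items)):
        # Shrink from the left until the window spans at most delta.
        while items[end][1] - items[start][1] > delta:
            start += 1
        # Keep the window only if it cannot be extended to the right.
        is_last = end == len(items) - 1
        if is_last or items[end + 1][1] - items[start][1] > delta:
            windows.append([name for name, _ in items[start:end + 1]])
    return windows

windows = maximal_windows(diff, delta=2)
print(diff)
print(windows)
```

Objects that land in the same window rise and fall together on x and y up to a shift, even when their raw values are far apart.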

– For each attribute subset, find the maximal subsets of objects that form a pCluster

– Check whether (R, D) is maximal

– # of objects >> # attributes
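Before a candidate (R, D) can be tested for maximality, it first has to be a valid pCluster. The sketch below shows one way to run that validity test. It assumes the pairwise score commonly used in the pCluster literature, |(d_ux - d_uy) - (d_vx - d_vy)| ≤ δ for every pair of objects u, v in R and every pair of attributes x, y in D. That definition, the threshold `delta`, and the function name `is_pcluster` are assumptions here, since the slides' own formula did not survive extraction.

```python
from itertools import combinations

def is_pcluster(data, objects, attributes, delta):
    """Check the assumed pCluster condition on every 2x2 submatrix of (R, D).
    data maps object -> {attribute -> value}; delta is a hypothetical threshold."""
    for u, v in combinations(objects, 2):
        for x, y in combinations(attributes, 2):
            score = abs((data[u][x] - data[u][y]) - (data[v][x] - data[v][y]))
            if score > delta:
                return False
    return True

# Values for four objects over attributes x and y (illustrative subset).
data = {
    "a": {"x": 13, "y": 7},
    "b": {"x": 11, "y": 4},
    "d": {"x": 7, "y": 1},
    "f": {"x": 13, "y": 3},
}

ok_small = is_pcluster(data, ["a", "b", "d"], ["x", "y"], delta=2)
ok_with_f = is_pcluster(data, ["a", "b", "d", "f"], ["x", "y"], delta=2)
print(ok_small, ok_with_f)
```

A maximality check would then ask whether any further object or attribute can be added while this test still passes.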

[Figure: three-dimensional gene-sample-time data, where each cell is the expression level of gene i on sample j at time k. Its two-dimensional views are the Gene-Time Matrix, the Gene-Sample Matrix, and the Sample-Time Matrix.]

[Figure: informative genes vs. non-informative genes; genes listed in the order gene1, gene6, gene7, gene2, gene4, gene5, gene3, with axis ticks 1 to 7.]
