Random walks and branching processes

Bo Friis Nielsen

DTU Informatics

02407 Stochastic Processes 2, September 6 2016


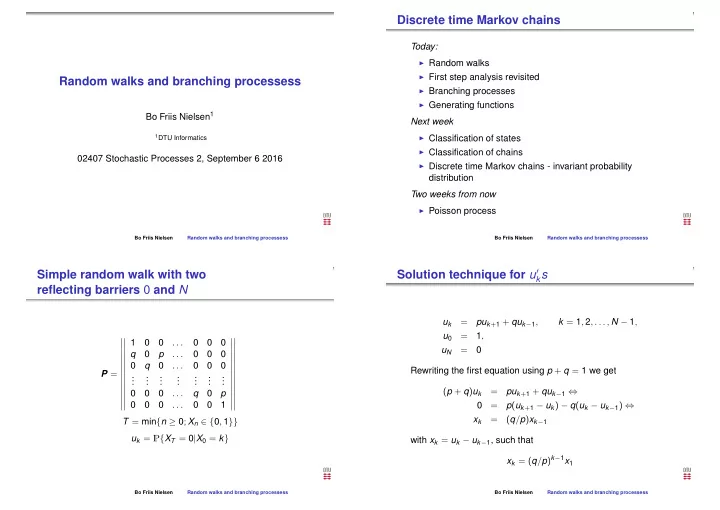

Discrete time Markov chains

Today:

◮ Random walks
◮ First step analysis revisited
◮ Branching processes
◮ Generating functions

Next week:

◮ Classification of states
◮ Classification of chains
◮ Discrete time Markov chains - invariant probability distribution

Two weeks from now:

◮ Poisson process


Simple random walk with two absorbing barriers 0 and N

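$$
P = \begin{pmatrix}
1 & 0 & 0 & \cdots & 0 & 0 & 0 \\
q & 0 & p & \cdots & 0 & 0 & 0 \\
\vdots & \vdots & \vdots & \ddots & \vdots & \vdots & \vdots \\
0 & 0 & 0 & \cdots & q & 0 & p \\
0 & 0 & 0 & \cdots & 0 & 0 & 1
\end{pmatrix}
$$

$$T = \min\{n \ge 0;\ X_n \in \{0, N\}\}$$

$$u_k = P\{X_T = 0 \mid X_0 = k\}$$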
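As a rough numerical illustration of these definitions, the sketch below builds the transition matrix for a small N and approximates the probabilities of ending in state 0 by repeatedly applying P to the indicator vector of state 0. After n applications, entry k equals P{X_n = 0 | X_0 = k}, which approaches u_k because the corner entries of P keep the chain in state 0 once it arrives there. The function names, the choice N = 4, p = 0.5, and the number of iterations are assumptions made for illustration and are not part of the slides.

```python
def absorbing_walk_matrix(N, p):
    """Transition matrix of the simple random walk on {0, ..., N}.

    States 0 and N keep the chain in place (the 1's in the corners of P);
    from an interior state k the walk moves to k + 1 with probability p
    and to k - 1 with probability q = 1 - p.
    """
    q = 1.0 - p
    P = [[0.0] * (N + 1) for _ in range(N + 1)]
    P[0][0] = 1.0
    P[N][N] = 1.0
    for k in range(1, N):
        P[k][k - 1] = q
        P[k][k + 1] = p
    return P


def absorption_at_zero(P, steps=2000):
    """Approximate u_k = P{X_T = 0 | X_0 = k} by iterating u <- P u.

    Starting from the indicator of state 0, the vector after n iterations
    holds P{X_n = 0 | X_0 = k}; the iteration count is an illustrative choice.
    """
    n = len(P)
    u = [1.0] + [0.0] * (n - 1)
    for _ in range(steps):
        u = [sum(P[i][j] * u[j] for j in range(n)) for i in range(n)]
    return u


P = absorbing_walk_matrix(N=4, p=0.5)
u = absorption_at_zero(P)
for k, uk in enumerate(u):
    print(k, round(uk, 6))
```

Iterating P is chosen here only because it mirrors the definition of u_k directly; for larger N, solving the linear equations from first step analysis would be the more economical route.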


Solution technique for the $u_k$'s

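$$u_k = p u_{k+1} + q u_{k-1}, \quad k = 1, 2, \dots, N-1, \qquad u_0 = 1, \quad u_N = 0$$

Rewriting the first equation using $p + q = 1$ we get

$$(p + q) u_k = p u_{k+1} + q u_{k-1} \;\Leftrightarrow\; 0 = p(u_{k+1} - u_k) - q(u_k - u_{k-1}) \;\Leftrightarrow\; x_{k+1} = \frac{q}{p}\, x_k$$

with $x_k = u_k - u_{k-1}$, that is, $x_k = (q/p)\, x_{k-1}$ for $k = 2, \dots, N$, such that

$$x_k = \left(\frac{q}{p}\right)^{k-1} x_1$$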
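The recursion for the increments can be checked numerically. The sketch below adds one step that is not spelled out in this excerpt: since the increments telescope, x_1 + ... + x_N = u_N - u_0 = -1, which fixes x_1 once the ratios (q/p)^(k-1) are known. The function name `ruin_probabilities` and the parameter values N = 5, p = 0.6 are hypothetical choices for illustration, and the code assumes p > 0.

```python
def ruin_probabilities(N, p):
    """Return [u_0, ..., u_N] built from x_k = (q/p)**(k-1) * x_1.

    Assumes 0 < p <= 1. The boundary conditions u_0 = 1 and u_N = 0
    give x_1 + ... + x_N = -1, which determines x_1.
    """
    q = 1.0 - p
    r = q / p
    ratios = [r ** (k - 1) for k in range(1, N + 1)]  # x_k / x_1
    x1 = -1.0 / sum(ratios)
    u = [1.0]  # u_0 = 1
    for ratio in ratios:
        u.append(u[-1] + ratio * x1)  # u_k = u_{k-1} + x_k
    return u


p = 0.6
u = ruin_probabilities(N=5, p=p)

# Largest violation of u_k = p*u_{k+1} + q*u_{k-1} over the interior states.
residual = max(
    abs(u[k] - (p * u[k + 1] + (1 - p) * u[k - 1])) for k in range(1, 5)
)
print(u)
print(residual)
```

The printed residual should be at the level of floating point rounding, which confirms that the geometric increments reproduce the first step equations together with both boundary conditions.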
