Dependency Grammars

Data structures and algorithms for Computational Linguistics III Çağrı Çöltekin ccoltekin@sfs.uni-tuebingen.de

University of Tübingen Seminar für Sprachwissenschaft

Winter Semester 2019–2020

Where were we? Constituency overview Dependency grammars Closing remarks

So far …

(second part of the course)

- Preliminaries: (formal) languages, grammars and automata

  – Chomsky hierarchy of language classes

  – Expressivity and computational complexity

  – Learnability

- Finite state automata, regular languages, regular grammars and regular expressions

  – DFA, NFA, determinization

  – Closure properties of regular languages

  – Minimization

- Finite state transducers and their applications in CL

- Constituency parsing (CKY, Earley)

Ç. Çöltekin, SfS / University of Tübingen WS 19–20

Next …

- Dependency grammars, and dependency treebanks

- Dependency parsing

  – Transition based dependency parsing (with a short introduction to classification)

  – Graph based dependency parsing


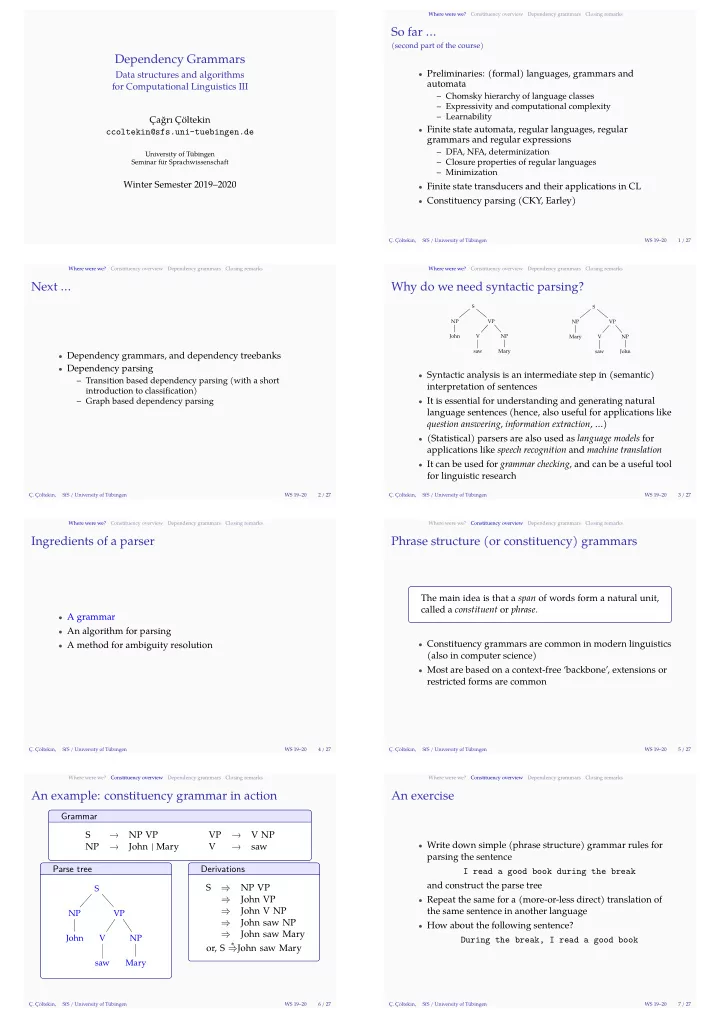

Why do we need syntactic parsing?

[S [NP John] [VP [V saw] [NP Mary]]]

[S [NP Mary] [VP [V saw] [NP John]]]

- Syntactic analysis is an intermediate step in (semantic) interpretation of sentences

- It is essential for understanding and generating natural language sentences (hence, also useful for applications like question answering, information extraction, …)

- (Statistical) parsers are also used as language models for applications like speech recognition and machine translation

- It can be used for grammar checking, and can be a useful tool for linguistic research
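The two trees for "John saw Mary" and "Mary saw John" contain the same words but assign them different roles. The sketch below illustrates how a parse tree can serve as an intermediate step towards interpretation. It assumes a nested-tuple tree encoding and a hypothetical helper `who_did_what`, neither of which comes from the lecture, and it only handles trees of the shape S → NP VP, VP → V NP.

```python
# Trees as nested tuples: (label, child, ...); leaves are plain strings.
# This encoding is an assumption made for illustration.
tree1 = ("S", ("NP", "John"), ("VP", ("V", "saw"), ("NP", "Mary")))
tree2 = ("S", ("NP", "Mary"), ("VP", ("V", "saw"), ("NP", "John")))


def words(tree):
    """Return the words under a (sub)tree, left to right."""
    if isinstance(tree, str):
        return [tree]
    result = []
    for child in tree[1:]:
        result.extend(words(child))
    return result


def who_did_what(tree):
    """Read (subject, verb, object) off a tree of shape S -> NP VP, VP -> V NP."""
    _, subject_np, vp = tree
    _, verb, object_np = vp
    return (
        " ".join(words(subject_np)),
        " ".join(words(verb)),
        " ".join(words(object_np)),
    )


print(who_did_what(tree1))  # ('John', 'saw', 'Mary')
print(who_did_what(tree2))  # ('Mary', 'saw', 'John')
```

The position of each NP in the tree, not the words alone, determines who saw whom.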


Ingredients of a parser

- A grammar

- An algorithm for parsing

- A method for ambiguity resolution


Phrase structure (or constituency) grammars

The main idea is that a span of words forms a natural unit, called a constituent or phrase.

- Constituency grammars are common in modern linguistics (also in computer science)

- Most are based on a context-free ‘backbone’; extensions or restricted forms are common
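To make the idea of a constituent as a span of words concrete, here is a minimal sketch that lists every constituent of a tree together with the word positions it covers. The nested-tuple encoding and the function name `constituents` are assumptions for illustration. Spans are half-open: `(label, start, end)` covers words `start` up to, but not including, `end`.

```python
# A tree as nested tuples: (label, child, ...); leaves are plain strings.
tree = ("S", ("NP", "John"), ("VP", ("V", "saw"), ("NP", "Mary")))


def constituents(tree, start=0):
    """Return (end, spans) for the subtree whose first word is at index `start`.

    `spans` is a list of (label, start, end) triples, with `end` exclusive,
    listed in pre-order (a parent before its children).
    """
    if isinstance(tree, str):
        # A single word occupies one position and is not itself a phrase.
        return start + 1, []
    label, children = tree[0], tree[1:]
    position = start
    spans = []
    for child in children:
        position, child_spans = constituents(child, position)
        spans.extend(child_spans)
    return position, [(label, start, position)] + spans


length, spans = constituents(tree)
for label, begin, end in spans:
    print(label, begin, end)
```

For this tree the VP covers positions 1 to 3, that is, the words "saw Mary".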


An example: constituency grammar in action

Grammar

- S → NP VP

- VP → V NP

- NP → John | Mary

- V → saw

Parse tree

[S [NP John] [VP [V saw] [NP Mary]]]

Derivations

S ⇒ NP VP ⇒ John VP ⇒ John V NP ⇒ John saw NP ⇒ John saw Mary

or, S ⇒* John saw Mary
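The derivation above always rewrites the leftmost nonterminal. A minimal sketch of that process follows, assuming a dictionary encoding of the grammar and a hypothetical function `leftmost_derivation`. The list `choices` selects which alternative of the rule to use at each step.

```python
# The example grammar: each nonterminal maps to a list of alternative
# right-hand sides. Symbols that are not keys are terminals.
GRAMMAR = {
    "S": [["NP", "VP"]],
    "VP": [["V", "NP"]],
    "NP": [["John"], ["Mary"]],
    "V": [["saw"]],
}


def leftmost_derivation(choices, start="S"):
    """Rewrite the leftmost nonterminal once per entry in `choices`.

    choices[i] is the index of the alternative used at step i.
    Returns the list of sentential forms, starting with [start].
    """
    form = [start]
    steps = [form]
    for choice in choices:
        # Find the leftmost symbol that can still be rewritten.
        index = next(i for i, symbol in enumerate(form) if symbol in GRAMMAR)
        rhs = GRAMMAR[form[index]][choice]
        form = form[:index] + rhs + form[index + 1:]
        steps.append(form)
    return steps


steps = leftmost_derivation([0, 0, 0, 0, 1])
print(" => ".join(" ".join(form) for form in steps))
# S => NP VP => John VP => John V NP => John saw NP => John saw Mary
```

Changing the choices to `[0, 1, 0, 0, 0]` derives "Mary saw John" instead, using the same rules in the same order.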


An exercise

- Write down simple (phrase structure) grammar rules for parsing the sentence "I read a good book during the break" and construct the parse tree

- Repeat the same for a (more-or-less direct) translation of the same sentence in another language

- How about the following sentence? "During the break, I read a good book"
