09/23/2020 Introduction to Data Mining, 2nd Edition 1

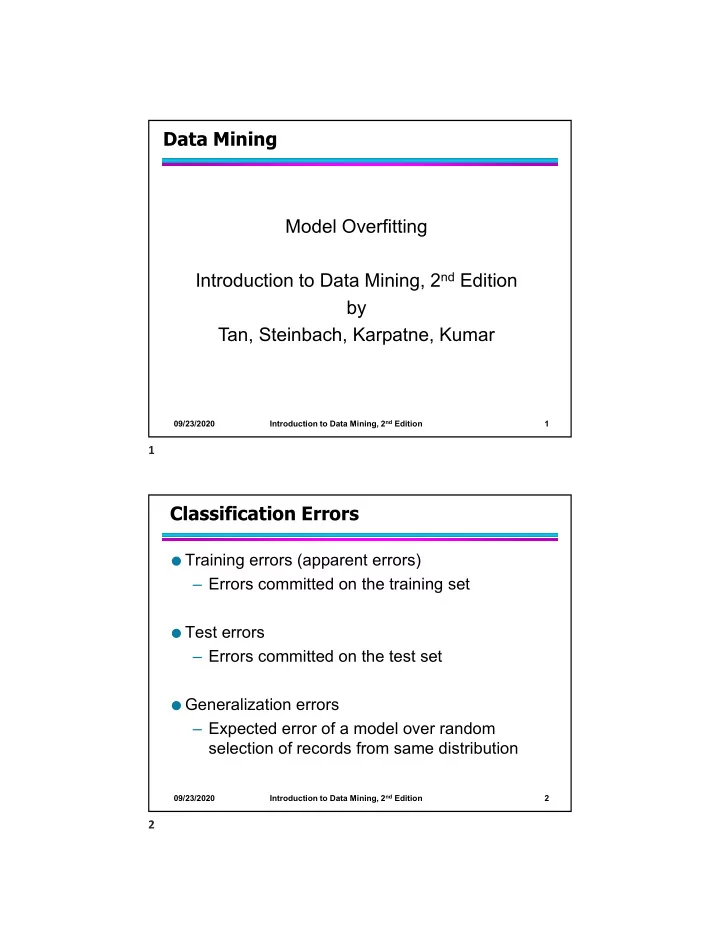

Data Mining: Model Overfitting

Introduction to Data Mining, 2nd Edition
by Tan, Steinbach, Karpatne, Kumar


Classification Errors

Training errors (apparent errors): errors committed on the training set.
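Training error is the error rate measured on the same records that were used to fit the model. The Python sketch below computes it. The helper name `error_rate` and the labels are hypothetical. Applying the same function to held-out records gives the test error instead.

```python
from typing import Sequence


def error_rate(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Fraction of records whose predicted label differs from the true label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("cannot compute an error rate on an empty set")
    mistakes = sum(1 for t, p in zip(y_true, y_pred) if t != p)
    return mistakes / len(y_true)


# Hypothetical labels: a model's predictions on its own training records.
train_true = ["+", "+", "o", "o", "+"]
train_pred = ["+", "o", "o", "o", "+"]
print(error_rate(train_true, train_pred))  # 0.2
```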


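Example data set (two-class problem): one class is generated from a Gaussian centered at (10,10), and the other is generated from a uniform distribution.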
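The example data set draws points from a Gaussian centered at (10,10). The sketch below generates a two-class toy set of this kind using only Python's standard library. Several details are assumptions made for illustration: the second class is uniform, the Gaussian has unit standard deviation, the uniform class lies in the [0, 20] x [0, 20] square, and the function name is made up.

```python
import random


def make_example_data(n_gauss: int, n_uniform: int, seed: int = 0):
    """Return shuffled (x, y, label) tuples for a two-class toy problem.

    Assumptions (illustrative): class "+" ~ Gaussian centered at (10, 10) with
    standard deviation 1 per coordinate; class "o" ~ uniform on [0, 20] x [0, 20].
    """
    rng = random.Random(seed)
    data = []
    for _ in range(n_gauss):
        # Class "+": Gaussian centered at (10, 10).
        data.append((rng.gauss(10, 1), rng.gauss(10, 1), "+"))
    for _ in range(n_uniform):
        # Class "o": uniform over the assumed bounding square.
        data.append((rng.uniform(0, 20), rng.uniform(0, 20), "o"))
    rng.shuffle(data)
    return data


data = make_example_data(n_gauss=500, n_uniform=500, seed=42)
plus = [(x, y) for x, y, c in data if c == "+"]
mean_x = sum(x for x, _ in plus) / len(plus)
print(len(data), round(mean_x))  # 1000 10
```

Seeding a private `random.Random` instance keeps the data reproducible without touching the global generator.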


Figure: decision tree and its decision boundaries on the training data.


Figure: decision tree and its decision boundaries on the training data.


Figure panels: decision tree with 4 nodes; decision tree with 50 nodes.

Which tree is better?


As the model becomes more complex, test error can start increasing even though training error may be decreasing.
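The gap between training and test error can be seen in an extreme case. A 1-nearest-neighbor classifier memorizes its training set, so its training error is zero. On data with label noise, however, its test error stays well above zero. The sketch below uses 1-NN in place of the decision trees in the slides. The data generator, the 20% label-noise rate, and the helper names are hypothetical.

```python
import random


def nearest_neighbor_predict(train, point):
    """1-NN: return the label of the closest training record (squared Euclidean)."""
    best = min(train, key=lambda r: (r[0] - point[0]) ** 2 + (r[1] - point[1]) ** 2)
    return best[2]


def error(model_data, records):
    """Error rate of the 1-NN model built on model_data when applied to records."""
    wrong = sum(1 for x, y, c in records if nearest_neighbor_predict(model_data, (x, y)) != c)
    return wrong / len(records)


rng = random.Random(1)


def sample(n):
    # Hypothetical data: true label is "+" when x + y > 1, with 20% of labels flipped.
    out = []
    for _ in range(n):
        x, y = rng.random(), rng.random()
        label = "+" if x + y > 1 else "o"
        if rng.random() < 0.2:
            label = "o" if label == "+" else "+"
        out.append((x, y, label))
    return out


train, test = sample(200), sample(1000)
print("training error:", error(train, train))  # 0.0: each record is its own nearest neighbor
print("test error:", error(train, test))
```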


Using twice the number of data instances


Figure: two decision trees with 50 nodes each, illustrating the effect of using twice the number of data instances.


Using only X and Y as attributes, versus using an additional 100 noisy variables, generated from a uniform distribution, along with X and Y as attributes. Use 30% of the data for training and 70% for testing.
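The setup above can be sketched in Python. Each record gets 100 extra noise attributes drawn from a uniform distribution, and the rows are then split 30% / 70% into training and test sets. The noise range U(0, 1), the placeholder base records, and the helper names are all assumptions. In the slides, the base records would be the (X, Y) example data.

```python
import random


def add_noise_attributes(records, n_noise, rng):
    """Append n_noise attributes drawn from U(0, 1) (assumed range) to each record.

    Input: (x, y, label) tuples. Output: (features, label) pairs where
    features = [x, y, noise_1, ..., noise_n].
    """
    out = []
    for x, y, label in records:
        noise = [rng.random() for _ in range(n_noise)]
        out.append(([x, y] + noise, label))
    return out


def train_test_split(rows, train_fraction, rng):
    """Shuffle a copy of rows and split it into (train, test)."""
    rows = list(rows)
    rng.shuffle(rows)
    cut = int(round(train_fraction * len(rows)))
    return rows[:cut], rows[cut:]


rng = random.Random(7)
# Hypothetical base records standing in for the (X, Y) example data.
base = [(rng.uniform(0, 20), rng.uniform(0, 20), rng.choice("+o")) for _ in range(1000)]
rows = add_noise_attributes(base, n_noise=100, rng=rng)
train, test = train_test_split(rows, train_fraction=0.3, rng=rng)
print(len(rows[0][0]), len(train), len(test))  # 102 300 700
```

With 102 attributes and only 300 training records, a tree learner has many chances to find a noise attribute that happens to separate the training records well.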


Relative cost of adding a leaf node
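The relative cost of adding a leaf node is the penalty term in a pessimistic estimate of a decision tree's generalization error. One common form, assumed here, is e_gen(T) = e(T) + Ω · k / N_train. In this formula, e(T) is the training error rate, k is the number of leaf nodes, N_train is the number of training records, and Ω is the per-leaf cost. The sketch below evaluates it with exact fractions. The two trees and their counts are hypothetical.

```python
from fractions import Fraction


def pessimistic_error(train_errors: int, n_leaves: int, n_train: int, omega: Fraction) -> Fraction:
    """Estimate e(T) + omega * k / N_train.

    e(T) = train_errors / n_train is the training error rate, k = n_leaves,
    and omega is the cost charged for each leaf node.
    """
    return Fraction(train_errors, n_train) + omega * Fraction(n_leaves, n_train)


# Hypothetical trees, both trained on 24 records, with omega = 1:
small = pessimistic_error(train_errors=6, n_leaves=4, n_train=24, omega=Fraction(1))
large = pessimistic_error(train_errors=4, n_leaves=7, n_train=24, omega=Fraction(1))
print(small, large)  # 5/12 11/24
```

The smaller tree has the higher training error but the lower penalized estimate, so it would be preferred under this criterion.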


Figure: decision tree with test nodes A?, B?, and C?; branch labels Yes/No, B1/B2, and C1/C2.


Pre-pruning (early stopping) conditions:

Stop if all instances belong to the same class.

Stop if all the attribute values are the same.

Stop if the number of instances is less than some user-specified threshold.

Stop if the class distribution of instances is independent of the available features.

Stop if expanding the current node does not improve impurity.

Stop if the estimated generalization error falls below a certain threshold.
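The sketch below checks four of the stopping conditions listed above for a single node, using Gini impurity as the impurity measure (an assumption; entropy would also work). The independence test and the generalization-error threshold are not sketched. The function names are hypothetical. The weighted impurity of the best candidate split is assumed to be computed elsewhere and passed in.

```python
from collections import Counter
from typing import Hashable, Sequence


def gini(labels: Sequence[Hashable]) -> float:
    """Gini impurity 1 - sum_i p_i^2 of a non-empty list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def should_stop(rows, labels, min_instances, best_split_impurity=None):
    """Return True if node expansion should stop under simple pre-pruning rules.

    rows: attribute tuples at the node; labels: their class labels.
    best_split_impurity: weighted impurity of the best candidate split
    (hypothetical input computed elsewhere), or None if no split was evaluated.
    """
    if len(set(labels)) <= 1:              # all instances belong to the same class
        return True
    if len(set(map(tuple, rows))) <= 1:    # all attribute values are the same
        return True
    if len(rows) < min_instances:          # fewer instances than the user threshold
        return True
    if best_split_impurity is not None and best_split_impurity >= gini(labels):
        return True                        # expanding does not improve impurity
    return False


rows = [(1, 0), (1, 1), (0, 1), (0, 0)]
print(should_stop(rows, ["Yes", "Yes", "Yes", "Yes"], min_instances=2))  # True (pure node)
print(should_stop(rows, ["Yes", "No", "Yes", "No"], min_instances=5))    # True (4 < 5)
print(should_stop(rows, ["Yes", "No", "Yes", "No"], min_instances=2,
                  best_split_impurity=0.0))                              # False
```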


| Node | Class = Yes | Class = No |
|------|-------------|------------|
| 1    | 8           | 4          |
| 2    | 3           | 4          |
| 3    | 4           | 1          |
| 4    | 5           | 1          |
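Suppose the four nodes with class counts Yes 8/No 4, Yes 3/No 4, Yes 4/No 1, and Yes 5/No 1 are the leaves of one subtree being considered for post-pruning. The sketch below compares keeping that subtree with replacing it by a single leaf. It uses the pessimistic estimate e(T) + Ω · k / N. Two things are assumptions for illustration: that the four nodes share one parent, and the per-leaf cost Ω = 0.5.

```python
from fractions import Fraction

# (Yes, No) class counts at the four child nodes.
children = [(8, 4), (3, 4), (4, 1), (5, 1)]


def majority_errors(yes: int, no: int) -> int:
    """Training errors made by a leaf that predicts its majority class."""
    return min(yes, no)


def pessimistic(errors: int, leaves: int, n: int, omega: Fraction) -> Fraction:
    """Pessimistic error estimate errors/n + omega * leaves/n."""
    return Fraction(errors, n) + omega * Fraction(leaves, n)


omega = Fraction(1, 2)                                              # assumed per-leaf cost
n = sum(y + no for y, no in children)                               # 30 records
subtree_errors = sum(majority_errors(y, no) for y, no in children)  # 4 + 3 + 1 + 1 = 9
total_yes = sum(y for y, _ in children)                             # 20
leaf_errors = majority_errors(total_yes, n - total_yes)             # 10

keep = pessimistic(subtree_errors, len(children), n, omega)         # (9 + 2) / 30 = 11/30
prune = pessimistic(leaf_errors, 1, n, omega)                       # (10 + 0.5) / 30 = 7/20
print(keep, prune, "prune" if prune <= keep else "keep")            # 11/30 7/20 prune
```

The subtree makes fewer training errors (9 vs. 10), but its three extra leaves cost more than that one error saves, so the single leaf wins.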
