CS535 Big Data 1/29/2020 Week 2-B Sangmi Lee Pallickara http://www.cs.colostate.edu/~cs535 Spring 2020 Colorado State University

CS535 Big Data | Computer Science | Colorado State University

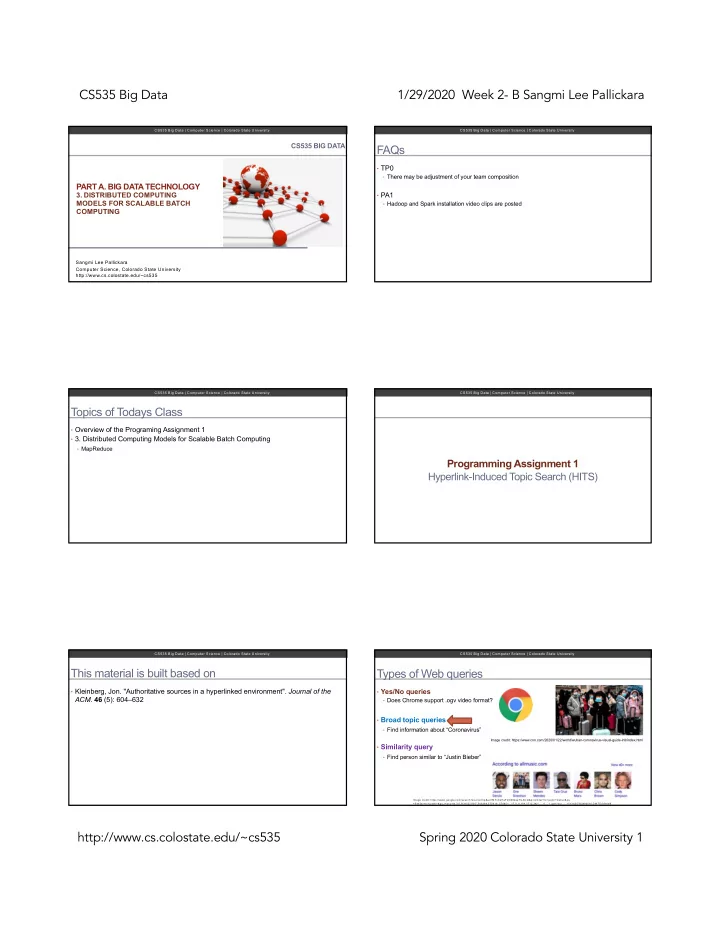

CS535 BIG DATA

PART A. BIG DATA TECHNOLOGY

3. DISTRIBUTED COMPUTING MODELS FOR SCALABLE BATCH COMPUTING

Sangmi Lee Pallickara Computer Science, Colorado State University http://www.cs.colostate.edu/~cs535

FAQs

- TP0

- There may be adjustments to your team composition

- PA1

- Hadoop and Spark installation video clips are posted

Topics of Today's Class

- Overview of Programming Assignment 1

- 3. Distributed Computing Models for Scalable Batch Computing

- MapReduce

Programming Assignment 1: Hyperlink-Induced Topic Search (HITS)

This material is based on

- Kleinberg, Jon. "Authoritative sources in a hyperlinked environment". Journal of the ACM. 46 (5): 604–632

Types of Web queries

- Yes/No queries

- Does Chrome support .ogv video format?

- Broad topic queries

- Find information about “Coronavirus”

- Similarity queries

- Find people similar to “Justin Bieber”

Image credit: https://www.cnn.com/2020/01/22/world/wuhan-coronavirus-visual-guide-intl/index.html

Image credit: https://www.google.com/search?source=hp&ei=tMYxXsHaFZO4tQae7ILACQ&q=similar+to+justin+bieber&oq=Similar+to+justin+&gs_l=psy-ab.3.0.0l3j0i22i30l7.546394.575419..576451...17.0..0.184.1712.34j1......0....1..gws-wiz.......0i131j0i70i249j0i10.DWTD5rf16d8