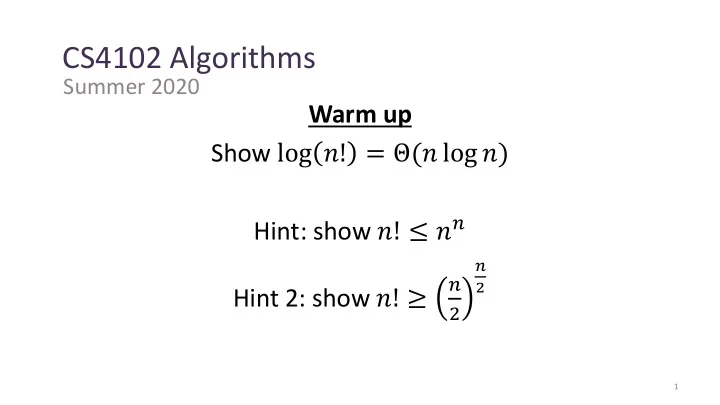

Warm up

Show $\log n! = \Theta(n \log n)$

Hint: show $n! \le n^n$

Hint 2: show $n! \ge \left(\frac{n}{2}\right)^{n/2}$
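One way the two hints might be combined is sketched below. Logs are taken base 2, which is an assumption; any constant base gives the same $\Theta$ bound.

```latex
\begin{align*}
\log n! &\le \log n^n = n \log n && \text{(Hint 1)} \\
\log n! &\ge \log \left(\tfrac{n}{2}\right)^{n/2}
         = \tfrac{n}{2} \log \tfrac{n}{2}
         = \tfrac{n}{2} \log n - \tfrac{n}{2} && \text{(Hint 2)} \\
        &\ge \tfrac{n}{4} \log n && \text{(for } n \ge 4\text{, since then } \log n \ge 2\text{)}
\end{align*}
```

The first line gives $\log n! = O(n \log n)$ and the last gives $\log n! = \Omega(n \log n)$. Hint 2 itself holds because the largest $n/2$ factors of $n!$ are each at least $n/2$.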

CS4102 Algorithms, Summer 2020


Master theorem Case 3! Because $S(n) = \Omega(n)$

2 5 1 3 6 4 7 8 10 9 11 12

2 1 3 5 6 4 7 8 9 10 11 12

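$\frac{n}{10}$th order statistic:

Recursion tree (work per node), level by level:

– $n$
– $n/10$, $9n/10$
– $n/100$, $9n/100$, $9n/100$, $81n/100$
– …
– $1, 1, 1, 1$

Level sums: $n + n + n + \cdots$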
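Reading the tree as the recurrence $T(n) = T(n/10) + T(9n/10) + n$ (an assumption; the recurrence itself did not survive in the text), a rough sketch of the bound it suggests:

```latex
\begin{align*}
\text{work per level} &\le n \\
\text{number of levels} &\le \log_{10/9} n
  && \text{(the longest path shrinks by a factor of } \tfrac{9}{10} \text{ per level)} \\
T(n) &\le n \log_{10/9} n = O(n \log n)
\end{align*}
```

Under this reading, even a consistently lopsided 1:9 split only changes the base of the logarithm, not the asymptotic run time.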


1 5 2 3 6 4 7 8 10 9 11 12

1 2 3 5 6 4 7 8 10 9 11 12

$d$ $n$

$d$ $n-d$

1 2 3 4 5 6 7 8 9 10 11 12

first pivot

$$\sum_{i<j} \frac{2}{j-i+1}$$

When $i = 1$:

$$\frac{2}{2} + \frac{2}{3} + \frac{2}{4} + \frac{2}{5} + \cdots + \frac{2}{n} = 2\left(\frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \cdots + \frac{1}{n}\right)$$

$n$ terms overall:

$$\sum_{i<j} \frac{2}{j-i+1} \le 2n\left(\frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{n}\right)$$
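To sanity-check the bound numerically, the sketch below evaluates the double sum over $i < j$ of $2/(j-i+1)$ exactly and compares it with $2n(1/2 + 1/3 + \cdots + 1/n)$. The function names `expected_comparisons` and `harmonic_bound` are hypothetical, chosen only for this illustration.

```python
from fractions import Fraction


def expected_comparisons(n: int) -> Fraction:
    """Exact value of the sum over 1 <= i < j <= n of 2 / (j - i + 1)."""
    total = Fraction(0)
    # A gap g = j - i occurs for exactly n - g pairs (i, j).
    for gap in range(1, n):
        total += (n - gap) * Fraction(2, gap + 1)
    return total


def harmonic_bound(n: int) -> Fraction:
    """The upper bound 2n(1/2 + 1/3 + ... + 1/n)."""
    tail = sum((Fraction(1, t) for t in range(2, n + 1)), Fraction(0))
    return 2 * n * tail


if __name__ == "__main__":
    for n in (2, 12, 100):
        print(n, float(expected_comparisons(n)), float(harmonic_bound(n)))
```

Exact fractions are used so the comparison is not affected by floating-point rounding.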

$O(n \log n)$, $O(n \log n)$, $O(n \log n)$, $O(n^2)$, $O(n^2)$

Can we do better than $O(n \log n)$?

[Figure: decision tree for a comparison-based sort. Each internal node (“>or<?”) is one comparison, each edge (“>” or “<”) is the result of a comparison, each leaf is a permutation (e.g. [1,2,3,4,5], [2,1,3,4,5], [5,2,4,1,3], [5,4,3,2,1]), and each root-to-leaf path is a possible execution path.]

$n!$ possible permutations

A binary tree with $n!$ leaves has height at least $\log n! = \Theta(n \log n)$, so some execution path makes at least that many comparisons.

– There is no (comparison-based) sorting algorithm with worst-case run time $o(n \log n)$
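As a small illustration of the decision-tree argument, the sketch below computes $\lceil \log_2 n! \rceil$, the minimum height of a binary tree with $n!$ leaves. The function name `min_worst_case_comparisons` is hypothetical.

```python
from math import factorial


def min_worst_case_comparisons(n: int) -> int:
    """ceil(log2(n!)): height of the shallowest binary tree with n! leaves."""
    # For an integer m >= 1, (m - 1).bit_length() equals ceil(log2(m)),
    # which avoids floating-point error for large factorials.
    return (factorial(n) - 1).bit_length()


if __name__ == "__main__":
    for n in (3, 5, 12):
        print(n, min_worst_case_comparisons(n))
```

For the 5-element lists in the figure, this gives 7: no comparison-based sort can handle every 5-element input with fewer than 7 comparisons in the worst case.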

$O(n \log n)$ Optimal!, $O(n \log n)$ Optimal!, $O(n \log n)$ Optimal!, $O(n^2)$, $O(n^2)$

– Break $n$-element list into two lists of $\frac{n}{2}$ elements

– If $n > 1$: Sort each sublist recursively
– If $n = 1$: List is already sorted (base case)

– Merge together sorted sublists into one sorted list

– 2 sorted lists ($L_1$, $L_2$)
– 1 output list ($L_{out}$)

Stable: If elements are equal, leftmost comes first
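The steps above can be sketched in Python as follows. This is a minimal illustration, not the course's reference implementation; the `key` parameter is an addition made here so that stability can be observed on records with equal keys.

```python
def merge(left, right, key=lambda x: x):
    """Merge two sorted lists into one sorted output list (stable)."""
    out = []
    i = j = 0
    while i < len(left) and j < len(right):
        # "<=" takes from the left list on ties, so the leftmost of two
        # equal elements comes first in the output.
        if key(left[i]) <= key(right[j]):
            out.append(left[i])
            i += 1
        else:
            out.append(right[j])
            j += 1
    out.extend(left[i:])   # at most one of these two tails is non-empty
    out.extend(right[j:])
    return out


def merge_sort(items, key=lambda x: x):
    """Return a new sorted list using divide, recurse, merge."""
    if len(items) <= 1:            # base case: already sorted
        return list(items)
    mid = len(items) // 2          # break into two halves
    return merge(merge_sort(items[:mid], key), merge_sort(items[mid:], key), key)


if __name__ == "__main__":
    print(merge_sort([8, 5, 7, 9, 12, 10, 1, 2, 4, 3, 6, 11]))
```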

Parallelizable: Allow different machines to work

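$T(n) = 2T\left(\frac{n}{2}\right) + n$

Recursion tree: $n$; $\frac{n}{2}, \frac{n}{2}$; $\frac{n}{4}, \frac{n}{4}, \frac{n}{4}, \frac{n}{4}$; …; $1, 1, 1, 1, 1, 1$

$T(n) = T\left(\frac{n}{2}\right) + n$

Recursion tree: $n$; $\frac{n}{2}, \frac{n}{2}$; $\frac{n}{4}, \frac{n}{4}, \frac{n}{4}, \frac{n}{4}$; …; $1, 1, 1, 1, 1, 1$

$\frac{n}{2}$, $\frac{n}{4}$, …, $1$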
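Unrolling the two recurrences gives the totals below. This is a sketch assuming $T(1) = 1$ and $n$ a power of 2, neither of which is stated in the slides.

```latex
\begin{align*}
T(n) = 2T\!\left(\tfrac{n}{2}\right) + n &: \quad
  \underbrace{n + n + \cdots + n}_{\log_2 n \text{ levels}} + n \cdot T(1)
  = n \log_2 n + n = \Theta(n \log n) \\
T(n) = T\!\left(\tfrac{n}{2}\right) + n &: \quad
  n + \tfrac{n}{2} + \tfrac{n}{4} + \cdots + 2 + T(1)
  = 2n - 1 = \Theta(n)
\end{align*}
```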

two sublists around that element

8 5 7 9 12 10 1 2 4 3 6 11

5 8 7 9 12 10 1 2 4 3 6 11

5 7 8 9 12 10 1 2 4 3 6 11

5 7 8 9 12 10 1 2 4 3 6 11

elements if out of order, repeat until sorted

1 2 3 4 5 6 7 8 9 10 11 12

1 2 3 4 5 6 7 8 9 10 11 12

Only makes one “pass”

2 3 4 5 6 7 8 9 10 11 12 1

2 3 4 5 6 7 8 9 10 11 1 12

Requires $n$ passes, thus is $O(n^2)$
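The pass counts in the two examples can be reproduced with a short sketch. It assumes the usual early-exit variant of bubble sort (stop after a pass with no swaps); returning the number of passes is an addition made here for illustration.

```python
def bubble_sort(items):
    """Return (sorted copy, number of passes made over the list)."""
    a = list(items)
    passes = 0
    swapped = True
    while swapped:
        swapped = False
        passes += 1
        for k in range(len(a) - 1):
            # Swap adjacent elements if they are out of order.
            if a[k] > a[k + 1]:
                a[k], a[k + 1] = a[k + 1], a[k]
                swapped = True
    return a, passes


if __name__ == "__main__":
    print(bubble_sort(range(1, 13)))                # already sorted input
    print(bubble_sort(list(range(2, 13)) + [1]))    # smallest element last
```

With the smallest element at the far end, each pass moves it only one position left, which is where the $n$ passes come from.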

“the bubble sort seems to have nothing to recommend it, except a catchy name and the fact that it leads to some interesting theoretical problems” –Donald Knuth, The Art of Computer Programming

prefix by “inserting” the next element

Sorted Prefix | remaining elements:

3 5 7 8 10 12 | 9 2 4 6 1 11

3 5 7 8 10 9 12 2 4 6 1 11

3 5 7 8 9 10 12 2 4 6 1 11

3 5 7 8 9 10 12 | 2 4 6 1 11

(but with very small constants) Great for short lists!

Already sorted input (the Sorted Prefix grows without moving anything):

1 2 3 4 5 6 7 8 9 10 11 12

1 2 3 4 5 6 7 8 9 10 11 12

Equal elements:

3 5 7 8 10 12 | 10’ 2 4 6 1 11

3 5 7 8 10 10’ 12 2 4 6 1 11

3 5 7 8 10 10’ 12 | 2 4 6 1 11

The “second” 10 will stay to the right

“All things considered, it’s actually a pretty good sorting algorithm!” –Nate Brunelle
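A minimal sketch of insertion sort matching the sorted-prefix picture. The `key` parameter is an addition made here so the stability behaviour (the “second” 10 staying to the right) can be checked on tagged records.

```python
def insertion_sort(items, key=lambda x: x):
    """Return a sorted copy, growing a sorted prefix one element at a time."""
    a = list(items)
    for end in range(1, len(a)):
        nxt = a[end]          # next element to insert into a[0:end]
        pos = end
        # Shift strictly larger elements one step right. Equal elements are
        # not shifted, so the later of two equal items stays to the right.
        while pos > 0 and key(a[pos - 1]) > key(nxt):
            a[pos] = a[pos - 1]
            pos -= 1
        a[pos] = nxt
    return a


if __name__ == "__main__":
    print(insertion_sort([3, 5, 7, 8, 10, 12, 9, 2, 4, 6, 1, 11]))
```

On an already sorted list the inner loop never runs, so the whole sort takes a single linear scan.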

Max Heap Property: Each node is larger than its children

Heap stored as an array (positions 1–10), one state per line:

10 9 6 8 7 5 2 4 1 3

3 9 6 8 7 5 2 4 1

9 3 6 8 7 5 2 4 1

9 8 6 3 7 5 2 4 1

9 8 6 4 7 5 2 3 1

element from the heap to build sorted list Right-to-Left

When removing an element from the heap, move it to the (now unoccupied) end of the list

Heap | sorted suffix (array positions 1–10), one state per line:

10 9 6 8 7 5 2 4 1 3

3 9 6 8 7 5 2 4 1 | 10

9 8 6 4 7 5 2 3 1 | 10

8 7 6 4 1 5 2 3 | 9 10

7 4 6 3 1 5 2 | 8 9 10
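The in-place procedure can be sketched as below. This version uses 0-based array indices (children of position `p` at `2p + 1` and `2p + 2`), whereas the slides number positions from 1; the helper name `sift_down` is hypothetical.

```python
def sift_down(a, root, size):
    """Restore the max-heap property below `root` within a[0:size]."""
    while True:
        child = 2 * root + 1
        if child >= size:
            return
        # Pick the larger of the two children.
        if child + 1 < size and a[child + 1] > a[child]:
            child += 1
        if a[root] >= a[child]:
            return
        a[root], a[child] = a[child], a[root]
        root = child


def heapsort(items):
    """Return a sorted copy, building the sorted list right to left."""
    a = list(items)
    n = len(a)
    for root in range(n // 2 - 1, -1, -1):   # build the max heap
        sift_down(a, root, n)
    for end in range(n - 1, 0, -1):
        # Move the max to the slot that the shrinking heap just vacated.
        a[0], a[end] = a[end], a[0]
        sift_down(a, 0, end)
    return a


if __name__ == "__main__":
    print(heapsort([10, 9, 6, 8, 7, 5, 2, 4, 1, 3]))
```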

Range is $[1, k]$ (here $[1, 6]$)

1. Make an array $C$ of size $k$; populate with counts of each value

$L$ = 3 6 6 1 3 4 1 6 (positions 1–8)

For each index $i$ of $L$: ++$C[L[i]]$

$C$ = 2 0 2 1 0 3 (positions 1–6)

2. Take “running sum” of $C$ to count things less than or equal to each value

For $i = 2$ to len($C$): $C[i] = C[i-1] + C[i]$

$C$ = 2 2 4 5 5 8 (running sum)

To sort: last item of value 3 goes at index 4

$L$ = 3 6 6 1 3 4 1 6 (positions 1–8)

For each element of $L$ (last to first): Use $C$ to find its proper place in $B$, then decrement that position of $C$

$C$ = 2 2 4 5 5 8

Last item of value 6 goes at index 8: $B$ = _ _ _ _ _ _ _ 6, and $C[6]$ becomes 7

$C$ = 2 2 4 5 5 7

Last item of value 1 goes at index 2: $B$ = _ 1 _ _ _ _ _ 6, and $C[1]$ becomes 1

Run Time: $O(n + k)$

Memory: $O(n + k)$
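The three phases (count, running sum, place last to first) can be sketched as follows. It assumes integer values in $[1, k]$ as in the example; the list `counts` plays the role of $C$ and `out` the role of $B$, with index 0 of `counts` left unused so positions line up with values.

```python
def counting_sort(values, k):
    """Stable counting sort for integers in the range [1, k]."""
    counts = [0] * (k + 1)          # counts[v] corresponds to C[v]; counts[0] unused
    for v in values:                # 1. count occurrences of each value
        counts[v] += 1
    for v in range(1, k + 1):       # 2. running sum: items <= v
        counts[v] += counts[v - 1]
    out = [None] * len(values)
    for v in reversed(values):      # 3. place elements, last to first
        out[counts[v] - 1] = v      # 1-based position counts[v] -> 0-based index
        counts[v] -= 1
    return out


if __name__ == "__main__":
    print(counting_sort([3, 6, 6, 1, 3, 4, 1, 6], 6))
```

Walking the input last to first is what keeps equal values in their original relative order.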

One Exabyte = $10^{18}$ bytes

– 1 million terabytes (TB)
– 1 billion gigabytes (GB)
– 100,000 x Library of Congress (print)

https://en.wikipedia.org/wiki/Utah_Data_Center

Input: 103 801 401 323 255 823 999 101 113 901 555 512 245 800 018 121

Buckets after placing by ones digit:

– 0: 800
– 1: 801 401 101 901 121
– 2: 512
– 3: 103 323 823 113
– 4:
– 5: 255 555 245
– 6:
– 7:
– 8: 018
– 9: 999

Buckets after placing by tens digit:

– 0: 800 801 401 101 901 103
– 1: 512 113 018
– 2: 121 323 823
– 3:
– 4: 245
– 5: 255 555
– 6:
– 7:
– 8:
– 9: 999

Buckets after placing by hundreds digit:

– 0: 018
– 1: 101 103 113 121
– 2: 245 255
– 3: 323
– 4: 401
– 5: 512 555
– 6:
– 7:
– 8: 800 801 823
– 9: 901 999
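The bucket passes shown above can be reproduced with a short least-significant-digit radix sort. This is a sketch assuming non-negative integers with a known number of digits; the `digits` and `base` parameters are names chosen here for illustration.

```python
def radix_sort(values, digits, base=10):
    """LSD radix sort: one stable bucket pass per digit, lowest digit first."""
    a = list(values)
    for d in range(digits):
        buckets = [[] for _ in range(base)]
        for v in a:
            # Extract digit d (0 = ones, 1 = tens, ...) and append, which
            # preserves the order established by earlier passes.
            buckets[(v // base**d) % base].append(v)
        # Read the buckets back in order 0..base-1.
        a = [v for bucket in buckets for v in bucket]
    return a


if __name__ == "__main__":
    data = [103, 801, 401, 323, 255, 823, 999, 101,
            113, 901, 555, 512, 245, 800, 18, 121]
    print(radix_sort(data, digits=3))
```

Calling `radix_sort(data, digits=1)` returns the list as it stands after the ones-digit pass, which can be compared against the first set of buckets.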