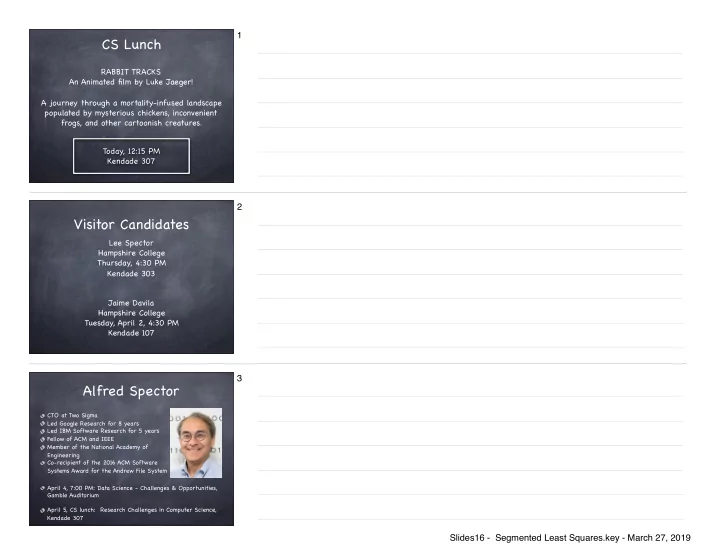

SLIDE 1 CS Lunch

Today, 12:15 PM, Kendade 307

RABBIT TRACKS: an animated film by Luke Jaeger! A journey through a mortality-infused landscape populated by mysterious chickens, inconvenient frogs, and other cartoonish creatures.


Visitor Candidates

- Lee Spector, Hampshire College: Thursday, 4:30 PM, Kendade 303
- Jaime Davila, Hampshire College: Tuesday, April 2, 4:30 PM, Kendade 107


Alfred Spector

- April 4, 7:00 PM: Data Science - Challenges & Opportunities, Gamble Auditorium
- April 5, CS lunch: Research Challenges in Computer Science, Kendade 307
- CTO at Two Sigma
- Led Google Research for 8 years
- Led IBM Software Research for 5 years
- Fellow of ACM and IEEE
- Member of the National Academy of Engineering
- Co-recipient of the 2016 ACM Software Systems Award for the Andrew File System

Slides16 - Segmented Least Squares.key - March 27, 2019

SLIDE 2 Midterm 2!

- Monday, April 8
- In class
- Covers Greedy Algorithms and Divide and Conquer
- Closed book


Dynamic Programming Formula

- Divide a problem into a polynomial number of smaller subproblems
- Solve subproblem, recording its answer
- Build up answer to bigger problem by using stored answers of smaller problems


Weighted Interval Scheduling

Job j starts at sj, finishes at fj, and has weight or value vj. Two jobs are compatible if they don't overlap. Goal: find a maximum-weight subset of mutually compatible jobs.

[Figure: jobs drawn as intervals on a time axis from 1 to 11, labeled with weights 4, 3, 1, 3, 2, 4.]

SLIDE 3 Optimal Substructure

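OPT(j) = value of optimal solution to the problem consisting of job requests 1, 2, ..., j.

- Case 1: OPT selects job j.
- Case 2: OPT does not select job j.

$$
OPT(j) =
\begin{cases}
0 & \text{if } j = 0 \\
\max\{\, v_j + OPT(p(j)),\; OPT(j-1) \,\} & \text{otherwise}
\end{cases}
$$

The first argument of the max corresponds to Case 1 and the second to Case 2. Here p(j) is the largest index i < j such that job i is compatible with job j (p(j) = 0 if there is no such job).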

Straightforward Recursive Algorithm

    Input: n, s1,…,sn, f1,…,fn, v1,…,vn

    Sort jobs by finish times so that f1 ≤ f2 ≤ ... ≤ fn.
    Compute p(1), p(2), …, p(n)

    Compute-Opt(j) {
       if (j = 0)
          return 0
       else
          return max(vj + Compute-Opt(p(j)), Compute-Opt(j-1))
    }
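The recursive algorithm can be sketched in Python as follows. This is only an illustration: the tuple layout `(start, finish, value)`, the helper name `compute_p`, and the choice to treat a job that finishes exactly when another starts as compatible are all assumptions, not part of the slides. Without stored answers, this plain recursion can take exponential time.

```python
from bisect import bisect_right

def compute_p(jobs):
    """jobs: list of (start, finish, value), already sorted by finish time.

    Returns a list p (1-indexed, p[0] unused) where p[j] is the largest
    index i < j such that job i finishes no later than job j starts,
    or 0 if there is no such job.
    """
    finishes = [finish for _, finish, _ in jobs]
    p = [0] * (len(jobs) + 1)
    for j, (start, _, _) in enumerate(jobs, start=1):
        # Count the jobs among 1..j-1 whose finish time is <= start of job j.
        p[j] = bisect_right(finishes, start, 0, j - 1)
    return p

def compute_opt(j, jobs, p):
    """Value of an optimal solution for jobs 1..j (plain recursion)."""
    if j == 0:
        return 0
    v_j = jobs[j - 1][2]
    return max(v_j + compute_opt(p[j], jobs, p),  # Case 1: select job j
               compute_opt(j - 1, jobs, p))       # Case 2: skip job j

# Hypothetical example data, sorted by finish time before use.
jobs = sorted([(0, 3, 2), (1, 5, 4), (4, 6, 4), (2, 9, 7), (5, 10, 2), (8, 11, 1)],
              key=lambda job: job[1])
p = compute_p(jobs)
print(compute_opt(len(jobs), jobs, p))  # 7
```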

Iterative Solution

Bottom-up dynamic programming. Unwind recursion.

    Sort jobs by finish times so that f1 ≤ f2 ≤ ... ≤ fn.
    Compute p(1), p(2), …, p(n)

    Iterative-Compute-Opt {
       M[0] = 0
       for j = 1 to n
          M[j] = max(vj + M[p(j)], M[j-1])
    }
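A minimal Python sketch of the bottom-up loop, assuming the jobs are already sorted by finish time and that `p` has already been computed. The function name and the list-based inputs (`values[j - 1]` holds vj, `p[j]` holds p(j) with `p[0]` unused) are illustrative choices, and the sample data is made up.

```python
def iterative_compute_opt(values, p):
    """Fill in the table M bottom-up and return it.

    values[j - 1] is the value of job j; p[j] is p(j) for j = 1..n.
    M[j] is the optimal value using only jobs 1..j.
    """
    n = len(values)
    M = [0] * (n + 1)  # M[0] = 0: no jobs, no value
    for j in range(1, n + 1):
        M[j] = max(values[j - 1] + M[p[j]], M[j - 1])
    return M

# Hypothetical instance with six jobs.
M = iterative_compute_opt([2, 4, 4, 7, 2, 1], [0, 0, 0, 1, 0, 2, 3])
print(M)  # [0, 2, 4, 6, 7, 7, 7]
```

Each entry is computed once from entries to its left, so the loop takes O(n) time after sorting and computing p.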


SLIDE 4 Memoization

[Figure: eight jobs, numbered 1 to 8, drawn as intervals on a time axis from 1 to 11 and labeled with their values; the table M holds 3, 3, 4, 4, 6, 8, 9, 9 for j = 1, ..., 8.]

    Iterative-Compute-Opt {
       M[0] = 0
       for j = 1 to n
          M[j] = max(vj + M[p(j)], M[j-1])
    }


Great! The value is 9, but which jobs should we select?


    Find-Solution(j) {
       if (j = 0)
          return
       else if (M[j] == M[j-1])
          Find-Solution(j-1)
       else
          Find-Solution(p(j))
          include j
    }
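The traceback can be sketched in Python as below. It assumes the table `M` and the array `p` have already been computed as in Iterative-Compute-Opt; returning the chosen jobs as a list of 1-indexed job numbers is an illustrative choice, and the sample tables are made up.

```python
def find_solution(j, M, p):
    """Return the 1-indexed jobs of one optimal solution for jobs 1..j."""
    if j == 0:
        return []
    if M[j] == M[j - 1]:
        # Job j is not needed to reach the optimal value: skip it.
        return find_solution(j - 1, M, p)
    # Otherwise job j is selected; continue with the jobs compatible with it.
    return find_solution(p[j], M, p) + [j]

# Hypothetical tables for three jobs with values 3, 2, 4.
M = [0, 3, 3, 7]
p = [0, 0, 0, 1]
print(find_solution(3, M, p))  # [1, 3]
```

When several subsets reach the optimal value, this version returns the one that skips job j whenever M[j] equals M[j-1].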


SLIDE 6

Dynamic Programming Formula

- Divide a problem into a polynomial number of smaller subproblems
  - We often think recursively to identify the subproblems
- Solve subproblem, recording its answer
- Build up answer to bigger problem by using stored answers of smaller problems
  - We develop an algorithm that builds up the answer iteratively


Iterative Solution

Bottom-up dynamic programming. Unwind recursion.

    Sort jobs by finish times so that f1 ≤ f2 ≤ ... ≤ fn.
    Compute p(1), p(2), …, p(n)

    Iterative-Compute-Opt {
       M[0] = 0
       for j = 1 to n
          M[j] = max(vj + M[p(j)], M[j-1])
    }

- Subproblems: one for each j = 0, 1, ..., n (the best value using jobs 1, ..., j).
- Record subproblem answers: each answer is stored in M[j].
- Combine subproblem answers: max(vj + M[p(j)], M[j-1]) builds M[j] from stored answers to smaller subproblems.

SLIDE 7

Least Squares

Foundational problem in statistics and numerical analysis. Given n points in the plane: (x1, y1), (x2, y2), ..., (xn, yn). Find a line y = ax + b that minimizes the sum of the squared error:

$$
SSE = \sum_{i=1}^{n} (y_i - a x_i - b)^2
$$
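As a small illustration, the sum of squared errors for a given line can be computed directly from the definition. The function name `sse` and the representation of points as `(x, y)` tuples are assumptions made for this sketch.

```python
def sse(points, a, b):
    """Sum of squared vertical distances from the points to y = a*x + b."""
    return sum((y - a * x - b) ** 2 for x, y in points)

# Hypothetical points lying exactly on y = 2x + 1.
points = [(0, 1), (1, 3), (2, 5)]
print(sse(points, 2, 1))  # 0
print(sse(points, 2, 0))  # 3
```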

[Figure: plot of points with axes x and y.]

Least Squares Solution

Result from calculus, least squares achieved when:

$$
a = \frac{n \sum_i x_i y_i - \left(\sum_i x_i\right)\left(\sum_i y_i\right)}{n \sum_i x_i^2 - \left(\sum_i x_i\right)^2},
\qquad
b = \frac{\sum_i y_i - a \sum_i x_i}{n}
$$
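The closed-form solution translates almost line for line into code. The sketch below assumes points are `(x, y)` tuples with at least two distinct x values; the function name `fit_line` and the error raised for a zero denominator are illustrative choices.

```python
def fit_line(points):
    """Return (a, b) for the least squares line y = a*x + b."""
    n = len(points)
    sum_x = sum(x for x, _ in points)
    sum_y = sum(y for _, y in points)
    sum_xy = sum(x * y for x, y in points)
    sum_xx = sum(x * x for x, _ in points)

    denom = n * sum_xx - sum_x ** 2
    if denom == 0:
        # Happens when all x values are equal (or there are no points).
        raise ValueError("slope is undefined: need at least two distinct x values")

    a = (n * sum_xy - sum_x * sum_y) / denom
    b = (sum_y - a * sum_x) / n
    return a, b

# Hypothetical points lying exactly on y = 2x + 1.
print(fit_line([(0, 1), (1, 3), (2, 5)]))  # (2.0, 1.0)
```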


SLIDE 8 Least Squares

Sometimes a single line does not work very well.

[Figure: plot of points with axes x and y.]

Segmented Least Squares

Points lie roughly on a sequence of several line segments. Given n points in the plane (x1, y1), (x2, y2), ..., (xn, yn) with x1 < x2 < ... < xn, find a sequence of lines that fits well.

[Figure: plot of points with axes x and y.]

Segmented Least Squares

Find a sequence of lines that minimizes:

- the errors E in each segment
- the number of lines L

Tradeoff function: E + c L, for some constant c > 0.

[Figure: plot of points with axes x and y.]

SLIDE 9 Calculating Line Segment Error

First calculate slope and y-intercept for least squares line:

$$
a = \frac{n \sum_i x_i y_i - \left(\sum_i x_i\right)\left(\sum_i y_i\right)}{n \sum_i x_i^2 - \left(\sum_i x_i\right)^2},
\qquad
b = \frac{\sum_i y_i - a \sum_i x_i}{n}
$$

Then calculate the error associated with that line:

$$
SSE = \sum_{i=1}^{n} (y_i - a x_i - b)^2
$$

Multiway Choice

OPT(j) = minimum cost for points p1, p2, ..., pj.

e(i, j) = minimum error for the segment using points pi, pi+1, ..., pj.

To compute OPT(j):

- Last segment uses points pi, pi+1, ..., pj for some i.
- Cost = e(i, j) + c + OPT(i-1).

$$
OPT(j) =
\begin{cases}
0 & \text{if } j = 0 \\
\min_{1 \le i \le j} \{\, e(i, j) + c + OPT(i-1) \,\} & \text{otherwise}
\end{cases}
$$

Segmented Least Squares: Algorithm

    Segmented-Least-Squares() {
       sort p1..pn by their x values

       for j = 1 to n
          for i = 1 to j
             compute the least square error e[i][j] for
             the segment pi,…, pj

       M[0] = 0
       for j = 1 to n
          M[j] = min 1 ≤ i ≤ j (e[i][j] + c + M[i-1])

       return M[n]
    }
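The pseudocode above can be sketched in Python as follows. This is an illustration under stated assumptions rather than a reference implementation: points are `(x, y)` tuples with distinct x values, a segment containing a single point is given error 0, and the helper name `segment_error` is hypothetical.

```python
def segment_error(points):
    """Least squares error of the best line through the given points."""
    n = len(points)
    if n < 2:
        return 0.0  # assumption: a single point is fit exactly
    sum_x = sum(x for x, _ in points)
    sum_y = sum(y for _, y in points)
    sum_xy = sum(x * y for x, y in points)
    sum_xx = sum(x * x for x, _ in points)
    # Nonzero because the x values are assumed to be distinct.
    denom = n * sum_xx - sum_x ** 2
    a = (n * sum_xy - sum_x * sum_y) / denom
    b = (sum_y - a * sum_x) / n
    return sum((y - a * x - b) ** 2 for x, y in points)

def segmented_least_squares(points, c):
    """Return the minimum total cost E + c*L over all segmentations."""
    pts = sorted(points)  # sort by x value
    n = len(pts)

    # e[i][j] = error of the best single line through points i..j (1-indexed).
    e = [[0.0] * (n + 1) for _ in range(n + 1)]
    for j in range(1, n + 1):
        for i in range(1, j + 1):
            e[i][j] = segment_error(pts[i - 1:j])

    # M[j] = minimum cost for the first j points.
    M = [0.0] * (n + 1)
    for j in range(1, n + 1):
        M[j] = min(e[i][j] + c + M[i - 1] for i in range(1, j + 1))
    return M[n]

# Hypothetical data: three points on y = x followed by two points on y = 2.
points = [(0, 0), (1, 1), (2, 2), (3, 2), (4, 2)]
print(segmented_least_squares(points, 0.1))  # two lines are cheaper here
print(segmented_least_squares(points, 1))    # one line is cheaper here
```

With a small penalty c, the two exact segments win at a cost of about 2c. With c = 1, a single line with error about 0.7 is cheaper than paying for a second segment.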

Cost

- Sorting the points by their x values: O(n log n)
- Computing e[i][j] for all 1 ≤ i ≤ j ≤ n: O(n^3), since there are O(n^2) pairs and each error takes O(n) time to compute
- Filling in M[1], ..., M[n]: O(n^2)
- Total = O(n^3)