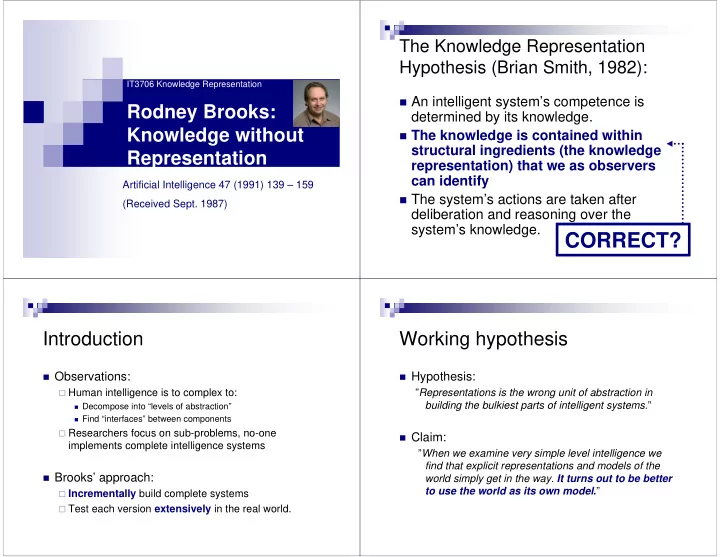

IT3706 Knowledge Representation

Rodney Brooks: Intelligence without Representation

Artificial Intelligence 47 (1991) 139 – 159 (Received Sept. 1987)

The Knowledge Representation Hypothesis (Brian Smith, 1982):

An intelligent system’s competence is determined by its knowledge.

The knowledge is contained within structural ingredients (the knowledge representation) that we as observers can identify.

The system’s actions are taken after deliberation and reasoning over the system’s knowledge.

CORRECT?

Introduction

Observations:

Human intelligence is too complex to:

Decompose into “levels of abstraction”

Find “interfaces” between components

Researchers focus on sub-problems; no one implements complete intelligent systems.

Brooks’ approach:

Incrementally build complete systems.

Test each version extensively in the real world.

Working hypothesis

Hypothesis:

“Representation is the wrong unit of abstraction in building the bulkiest parts of intelligent systems.”

Claim:

“When we examine very simple level intelligence we find that explicit representations and models of the world simply get in the way. It turns out to be better to use the world as its own model.”
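
Illustration (not from the paper):

The toy sketch below contrasts the two positions on this slide. It is not Brooks' architecture. The `World` class, the one-dimensional corridor, and both step functions are hypothetical names invented for this example. One agent deliberates over a stored map, as the Knowledge Representation Hypothesis suggests. The other senses the world directly at every step.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Hypothetical 1-D corridor with a single obstacle that can move."""
    obstacle: int

    def blocked(self, cell: int) -> bool:
        # Direct sensing: ask the world itself whether a cell is occupied.
        return cell == self.obstacle


def model_based_step(pos: int, stored_map: set[int]) -> int:
    """Decide using an internal model: a set of cells believed to be occupied."""
    nxt = pos + 1
    return pos if nxt in stored_map else nxt


def reactive_step(pos: int, world: World) -> int:
    """Couple sensing directly to action; no stored model is consulted."""
    nxt = pos + 1
    return pos if world.blocked(nxt) else nxt


world = World(obstacle=3)
stored_map = {3}        # snapshot of the world taken at start-up
world.obstacle = 1      # the world changes after the snapshot was taken

model_pos = model_based_step(0, stored_map)  # trusts the stale map
reactive_pos = reactive_step(0, world)       # senses the obstacle in cell 1

print("model-based agent collided:", world.blocked(model_pos))
print("reactive agent position:", reactive_pos)
```

In this sketch the stored map goes stale as soon as the obstacle moves, so the model-based agent steps into an occupied cell while the reactive agent stays put. A real deliberative system would update its model, and keeping such a model current is the cost this example is meant to make visible.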